Trump orders all federal agencies to drop Anthropic after the AI company refused to bend its terms for the Pentagon

Key Points

- Anthropic CEO Dario Amodei stands firm on two red lines for his company's AI technology: it must not be used for mass domestic surveillance or fully autonomous weapons systems.

- Claude is already widely deployed across defense and intelligence functions, but Amodei argues current AI systems remain too unreliable to remove humans entirely from the decision-making loop.

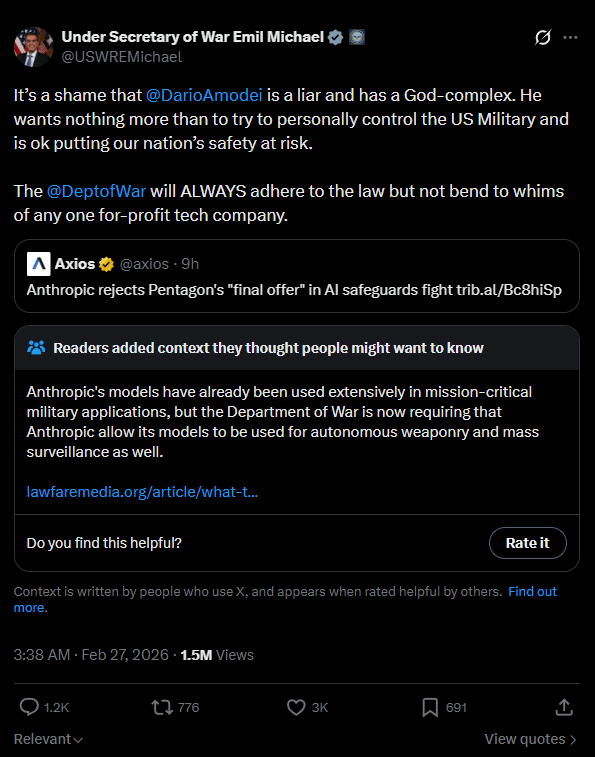

- The Pentagon has pushed back, maintaining that existing laws and guidelines are sufficient and declining to provide additional written assurances. Chief Technology Officer Emil Michael escalated the tension publicly, calling Amodei a "liar" with a "God complex."

Update:

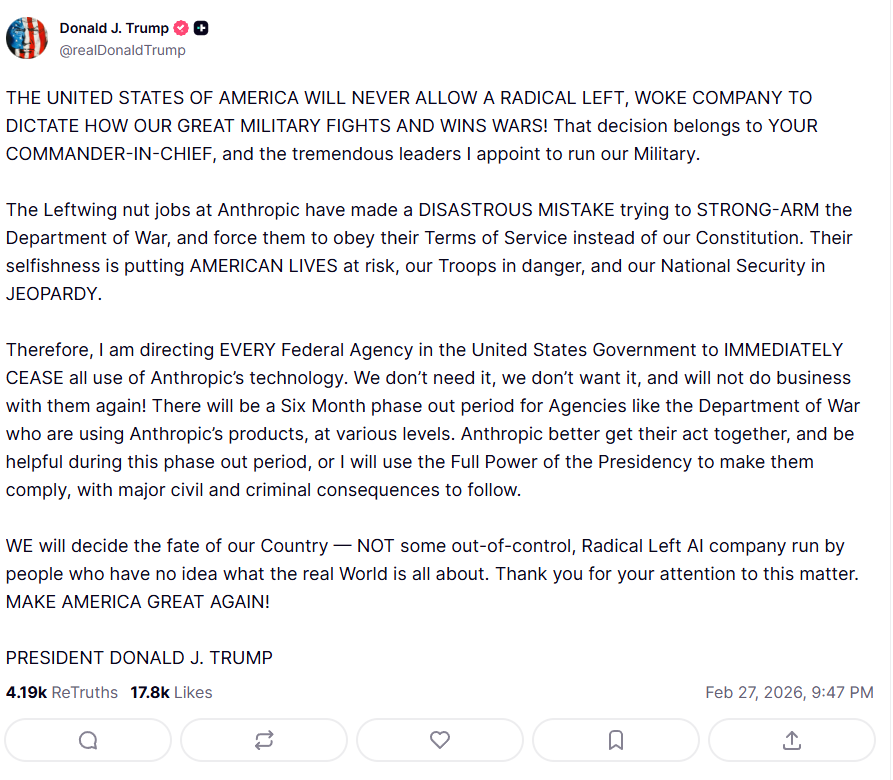

The Pentagon's deadline has passed, and Anthropic didn't back down. In response, President Donald Trump launched into a tirade on Truth Social, ordering all federal agencies to immediately stop using Anthropic's technology.

Trump accused the AI company of trying to impose its terms of service on the Department of Defense instead of following the Constitution. He called Anthropic a "RADICAL LEFT, WOKE COMPANY" and threatened the "full power of the presidency" along with civil and criminal consequences. Agencies like the Department of Defense get a six-month transition period to phase out Anthropic's technology.

Original article from February 27:

Anthropic holds firm against Pentagon on autonomous weapons and mass surveillance as deadline looms

Anthropic CEO Dario Amodei has publicly reaffirmed the company's position in the growing conflict with the Pentagon.

Anthropic is holding firm on its two red lines, Amodei says: no mass domestic surveillance (which notably means mass foreign surveillance is apparently fair game) and no fully autonomous weapons.

Amodei emphasizes that Anthropic was the first AI company to deploy its models in classified government networks, national labs, and for national security customers. Claude is already being used extensively for intelligence analysis, simulations, mission planning, and cyber operations, he says.

On autonomous weapons, Amodei's argument is straightforward: today's AI systems just aren't reliable enough to remove humans from the kill chain entirely. Anthropic offered to work with the Pentagon on improving that reliability, according to Amodei, but the Pentagon said no.

On domestic surveillance, Amodei warns that AI can take scattered, individually harmless data points and assemble a comprehensive profile of any citizen, automatically and at massive scale.

Amodei also points out a contradiction in the Pentagon's approach: you can't label Anthropic a supply chain risk and simultaneously invoke the Defense Production Act to declare it essential to national security. Those two things are mutually exclusive, he argues. Despite the threats, Anthropic isn't budging. If the Pentagon pulls Anthropic from its systems, the company says it will ensure a smooth transition to another provider.

Anthropic also says it gave up several hundred million dollars in revenue by cutting off Claude access for Chinese firms with ties to the Communist Party, and that it is actively pushing for strict chip export controls. That said, Anthropic clearly has skin in the game here: chip export controls weaken Chinese competitors. And if Chinese firms use Claude to build their own models, that undercuts Anthropic's business long-term. Walking away from that revenue doubles as self-preservation.

Pentagon says it compromised; Anthropic disagrees

Pentagon technology chief Emil Michael told CBS the military made "some very good concessions." Specifically, Michael says the Pentagon offered to formally acknowledge in writing the existing laws against domestic surveillance and the Pentagon's guidelines on autonomous weapons. It also offered Anthropic a seat on the military's AI ethics board, according to Michael. Anthropic called the concessions insufficient. Michael fired back on X, calling Amodei a "liar" with a "God complex."

Asked why the Pentagon won't explicitly guarantee it won't use Anthropic's model for mass surveillance or fully autonomous weapons decisions, Michael said existing law and Pentagon policy already prohibit that. "You have to trust your military to do the right thing," Michael told CBS.

At the same time, Michael stressed the need to prepare for the future and for China's growing use of AI: "So we'll never say that we're not going to be able to defend ourselves in writing to a company." The deadline for Anthropic expires Friday at 5:01 p.m., according to Michael.

What the Defense Production Act can and can't actually do to Anthropic

Legal scholar Alan Z. Rozenshtein breaks down on Lawfare what the Pentagon can actually force using the Defense Production Act (DPA). The Korean War-era law gives the president broad authority to compel companies to deliver goods in the interest of national defense.

According to Rozenshtein, the legal question comes down to what exactly the Pentagon is demanding. There are two possible scenarios. In the first, the Pentagon demands Anthropic deliver Claude without contractual usage restrictions: the same model, just without the clauses barring mass surveillance and autonomous weapons. The government would have a strong case here, Rozenshtein argues, since the product itself stays the same.

In the second scenario, the Pentagon demands Anthropic retrain Claude from scratch and strip the safety guardrails out of the model itself. That's a much harder sell legally, Rozenshtein writes, since it would effectively create a new product, and it's unclear whether the DPA can force a company to build something it doesn't offer.

Forced retraining would also raise First Amendment concerns, according to Rozenshtein. If training decisions qualify as editorial decisions, the government would essentially be compelling Anthropic to express values the company rejects.

Rozenshtein flags the same contradiction Amodei raised: the Pentagon can't simultaneously treat Anthropic as a security risk and invoke the DPA to declare it indispensable to national defense. If Anthropic refuses to comply, it faces criminal penalties. The most likely outcome, Rozenshtein writes, is that Anthropic would comply under protest and immediately challenge the order in court.
