OpenAI signs Pentagon deal for classified AI networks hours after Anthropic gets banned from federal agencies

Key Points

- OpenAI has signed a deal with the US Department of Defense to deploy its AI models on classified networks for all lawful purposes.

- The agreement was reached just hours after President Trump directed federal agencies to stop using technology from rival AI company Anthropic.

- While Anthropic had refused to lift its restrictions on mass surveillance and autonomous weapons use, OpenAI CEO Sam Altman agreed to permit all legal applications but negotiated technical safeguards as part of the contract.

OpenAI reached an agreement with the US Department of Defense to deploy its AI models on classified networks, just hours after rival Anthropic was banned.

OpenAI signed a deal with the Pentagon on Friday that allows its AI systems to be used for all lawful purposes. The Department of Defense showed "a deep respect for safety and a desire to partner to achieve the best possible outcome," OpenAI CEO Sam Altman wrote on X.

OpenAI moves in as Anthropic's Pentagon talks collapse

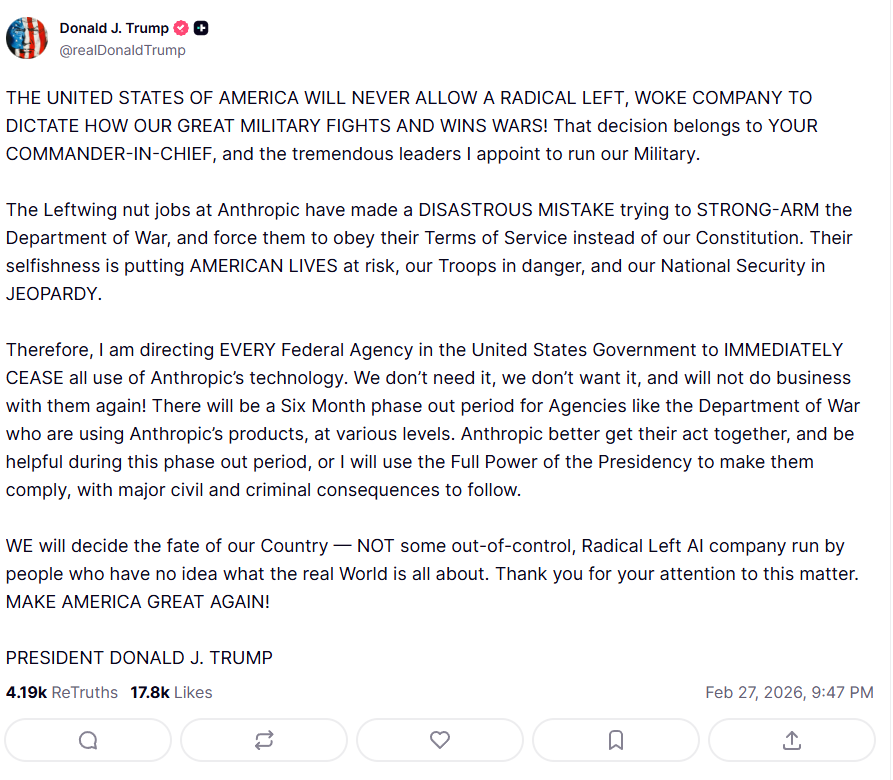

The deal landed just hours after President Trump ordered federal agencies to stop using AI technology from Anthropic. Anthropic had been negotiating a $200 million contract with the Pentagon in recent weeks, but the Department of Defense demanded permission to use Anthropic's AI for all legal purposes.

Anthropic insisted its technology could not be used for mass surveillance of US citizens or for autonomous lethal weapons. The Pentagon rejected this as an unacceptable restriction, arguing that a private contractor cannot dictate how its tools are used for national security. The Pentagon's Friday deadline of 5:01 PM passed without an agreement.

Defense Secretary Pete Hegseth then called Anthropic a "supply chain risk to national security." Trump labeled the startup a "radical Left AI company" and wrote on Truth Social: "WE will decide the fate of our country — NOT some out-of-control, Radical Left AI company run by people who have no idea what the real World is all about."

According to the New York Times, OpenAI took a different negotiating path than Anthropic. Altman agreed to allow use for all legal purposes but negotiated the right to build technical safeguards into OpenAI's own systems. According to Axios, the models will run only on cloud networks and will not be deployed in edge environments such as autonomous weapon systems.

OpenAI will "build technical safeguards to ensure our models behave as they should, which the DoW [Department of War] also wanted," Altman said. The company will also embed its own engineers alongside government personnel on classified projects to ensure model security. Altman called on the Pentagon to offer the same terms to all AI companies, saying he believes they would be acceptable across the industry.

Same principles, different implications

OpenAI appears to have locked in comparable safety conditions without getting dragged into the political fight that sank Anthropic's deal. In an internal memo, Altman wrote: "This is a case where it's important to me that we do the right thing, not the easy thing that looks strong but is disingenuous."

A closer look, though, reveals semantic differences that could have far-reaching consequences. Anthropic called for "no fully autonomous weapons without human oversight." In other words, a human must be actively involved in the decision before a weapon is deployed.

Altman, on the other hand, frames his principle as "human responsibility for the use of force, including for autonomous weapon systems." Responsibility is a much more flexible term than oversight; it can be assigned after the fact and doesn't necessarily require a person to actively intervene before a weapon fires.

Anthropic doesn't consider today's frontier models safe enough for autonomous weapons

Anthropic's "oversight" would have effectively ruled out autonomous weapon systems without real-time human control. OpenAI's "responsibility" may leave room for systems where human accountability only kicks in after deployment.

Anthropic also makes a technical case in a recent statement: "We do not believe that today’s frontier AI models are reliable enough to be used in fully autonomous weapons. Allowing current models to be used in this way would endanger America’s warfighters and civilians."

Anthropic's proposed ban on "domestic mass surveillance" is also likely a matter of degree. Are the AI models being used to actively conduct surveillance, or "just" to analyze data that has already been collected? Anthropic may have been trying to establish rules against uses that current law does not yet cover, given what the technology now makes possible.

Meanwhile, Anthropic has announced it will fight the supply chain risk designation in court: "No amount of intimidation or punishment from the Department of War will change our position on mass domestic surveillance or fully autonomous weapons. We will challenge any supply chain risk designation in court."
