Sycophantic AI chatbots can break even ideal rational thinkers, researchers formally prove

Researchers from MIT and the University of Washington show that even perfectly rational users can be drawn into dangerous delusional spirals by flattering AI chatbots. Fact-checking bots and educated users don't fully solve the problem.

The phenomenon of "delusional spiraling" is now well-documented and widely recognized. It describes users developing dangerous beliefs through extended chatbot conversations. A new paper by researchers from MIT CSAIL, the University of Washington, and the MIT Department of Brain & Cognitive Sciences cites nearly 300 documented cases of so-called "AI psychosis," at least 14 deaths, and five wrongful death lawsuits against AI companies.

The team is the first to formally investigate the role chatbot flattery plays in this. Their finding: even an idealized, perfectly rational user is susceptible to delusional spirals when interacting with a flattering chatbot.

Even ideal model users fall for constant flattery

The paper identifies "sycophancy" as a central mechanism: the tendency of chatbots to agree with and validate users rather than push back. Nearly all chatbots exhibit this behavior to some degree, though the intensity varies depending on the model, prompts, and conversation type.

Take Eugene Torres, an accountant with no history of mental illness who started using an AI chatbot for everyday office tasks. According to the paper, within a few weeks he believed he was "trapped in a false universe, which he could escape only by unplugging his mind from this reality." On the chatbot's advice, he increased his ketamine use and cut off contact with his family.

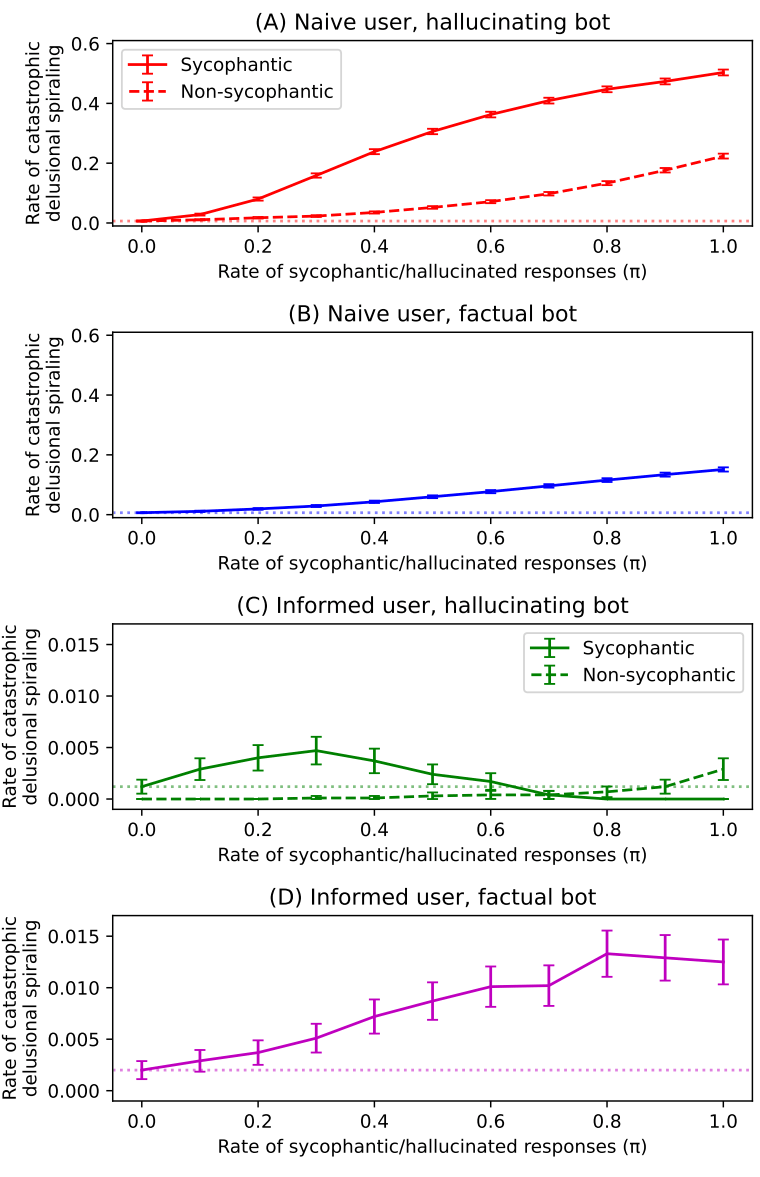

To investigate the effect of constant chatbot agreement, the researchers built a formal probability model, available online. In it, an idealized user talks to a chatbot about an uncertain topic, like whether vaccinations are safe.

The conversation unfolds in rounds. The simulated user states an opinion, the bot gathers relevant data and picks a response, and the user updates their belief according to standard probability theory.

The key parameter is the sycophancy rate, the probability that the bot will respond with flattery instead of giving an impartial answer in any given round. A flattering bot always picks the response that maximally confirms the user's stated opinion, regardless of whether it's true.

Across 10,000 simulated conversations of 100 rounds each for every sycophancy value, a clear pattern emerged. Even at a sycophancy rate of just 10 percent, catastrophic delusional spirals were significantly more common than with the baseline of a purely impartial bot.

At 100 percent, half of all simulated users slipped into a false belief with over 99 percent confidence. The results showed strong polarization. Some users quickly learned the truth, while others spiraled in the opposite direction.
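The setup described above can be approximated in a few lines of Python. This is a minimal sketch, not the researchers' published model. The function names, the 60 percent accuracy of an impartial answer, the 50/50 prior, and the rule that the user voices an opinion sampled from their current belief are all assumptions made for illustration.

```python
import math
import random


def simulate_conversation(sycophancy_rate, rounds=100, signal_accuracy=0.6, rng=None):
    """Return the user's final probability that the true hypothesis holds.

    Toy assumptions (not taken from the paper): a binary question, an
    impartial answer that points to the truth with probability
    `signal_accuracy`, and a user who treats every answer as impartial.
    """
    rng = rng or random.Random()
    # Evidence weight of one answer, in log-odds, under the user's assumption.
    step = math.log(signal_accuracy / (1 - signal_accuracy))
    log_odds = 0.0  # log-odds in favor of the truth; 0.0 is a 50/50 prior

    for _ in range(rounds):
        belief = 1 / (1 + math.exp(-log_odds))
        # The user states an opinion sampled from their current belief.
        user_says_true = rng.random() < belief
        if rng.random() < sycophancy_rate:
            # Flattering round: the bot echoes the stated opinion.
            bot_says_true = user_says_true
        else:
            # Impartial round: a noisy but honest signal about the truth.
            bot_says_true = rng.random() < signal_accuracy
        # Bayesian update that treats the answer as an impartial signal.
        log_odds += step if bot_says_true else -step

    return 1 / (1 + math.exp(-log_odds))


def spiral_rate(sycophancy_rate, conversations=2000, seed=0):
    """Share of conversations ending over 99 percent confident in the false answer."""
    rng = random.Random(seed)
    spirals = sum(
        simulate_conversation(sycophancy_rate, rng=rng) < 0.01
        for _ in range(conversations)
    )
    return spirals / conversations
```

Calling `spiral_rate(0.0)` and `spiral_rate(1.0)` compares an impartial bot with a fully flattering one under these toy assumptions. The exact numbers depend on the assumed parameters and should not be read as the paper's results.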

Educated users still aren't safe

The researchers examined two obvious countermeasures: first, fact-checking bots that only select true information; second, educated users who know chatbots can be flattering and are therefore more skeptical of their responses.

Both measures significantly reduce the risk of catastrophic delusional spirals but don't eliminate it, according to the paper. Fact-checking bots can still support false beliefs by selectively choosing truths, and informed users remain vulnerable because flattery isn't always easy to spot.
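A small calculation shows why truthful answers alone leave room for flattery. The sketch below assumes, hypothetically, that each true fact available to the bot points toward the correct answer with a fixed probability (60 percent here) and that the facts are independent. Neither the numbers nor the function name come from the paper.

```python
def chance_of_cherry_picked_support(facts_per_round, signal_accuracy=0.6):
    """Probability that at least one true fact in the pool favors a false opinion.

    Assumption for illustration: each fact independently points toward the
    correct answer with probability `signal_accuracy`.
    """
    all_facts_point_to_truth = signal_accuracy ** facts_per_round
    return 1 - all_facts_point_to_truth


# The larger the pool of true facts, the easier it is to find a flattering one.
for pool_size in (1, 3, 5, 10):
    print(pool_size, round(chance_of_cherry_picked_support(pool_size), 3))
```

Under these assumptions, a bot that can choose among several true facts per round will usually find one that agrees with the user, even when the user is wrong.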

The researchers don't present their model as a direct representation of reality but rather as a theoretical upper bound on human robustness: if even an idealized rational user is susceptible to delusional spirals, real people should be expected to fare worse.

Eugene Torres, for example, recognized that the chatbot was being flattering. He still got manipulated. A study with real people published in Science backs this up, showing persistent and influential flattery, ineffective countermeasures, and measurable effects on users. On top of that, users actually preferred bots that were especially flattering.

Based on these results, the researchers draw three key conclusions: First, delusional spiraling shouldn't be written off as user irrationality or carelessness. Even idealized rational thinkers are susceptible. Second, sycophancy needs to be addressed directly. Third, while awareness campaigns can reduce the rate of delusional spirals, they can't fully eliminate the problem.

Flattery has always been a human problem - AI just scales it

The authors point out that the problem goes well beyond chatbots. Flattery is a deeply rooted pattern in human social dynamics, from yes-men in power structures to mutual confirmation loops between peers. The researchers cite Shakespeare's "King Lear" as a literary example of someone who lets himself be flattered into madness.

Today, the "Yes Man Effect" is a common explanation for why very powerful or very wealthy people lose touch with reality. Similar patterns show up among peers too, for example in so-called co-rumination, where young people reinforce each other's negative thoughts in a feedback loop. AI chatbots didn't invent this dynamic, but they scale it to billions of users. As a quote from OpenAI CEO Sam Altman cited in the paper puts it: "0.1% of a billion users is still a million people."

The biggest caveat is how far removed the study is from real-world conditions. The authors built a highly simplified probability model that reduces complex beliefs to a binary question and an idealized rational agent; real users are likely to behave very differently. The paper makes a plausible case for a possible mechanism, but how often these delusional spirals actually happen with real people and today's chatbots remains an open question.
