Claude Mythos can autonomously compromise weakly defended enterprise networks end-to-end

Key Points

- The British AI Security Institute (AISI) has evaluated Anthropic's Claude Mythos Preview for its cyber-attack capabilities, finding that the model achieved a 73 percent success rate in expert-level capture-the-flag challenges.

- Mythos Preview is the first AI model to complete a full 32-step attack simulation against a corporate network, taking over the entire simulated network in 3 out of 10 attempts.

- However, the AISI notes significant limitations in the testing setup: the simulated environments lacked active defenders and security monitoring, leaving open whether the model could perform similarly against well-protected, real-world systems.

The UK's AI Security Institute (AISI) tested Anthropic's Claude Mythos Preview for cyber capabilities. For the first time, an AI model autonomously completed a full attack simulation against a corporate network, provided the network was small and weakly defended.

According to AISI, Mythos Preview represents a significant leap in AI cyber capabilities. Just two years ago, the best available models could barely handle beginner-level cyber tasks. In controlled evaluations, Mythos Preview executed multi-stage attacks on vulnerable networks, identifying and exploiting security holes autonomously when given explicit instructions and network access. These are tasks that would take human security experts days to complete, the AISI says.

Capture the flag: 73 percent success rate at expert level

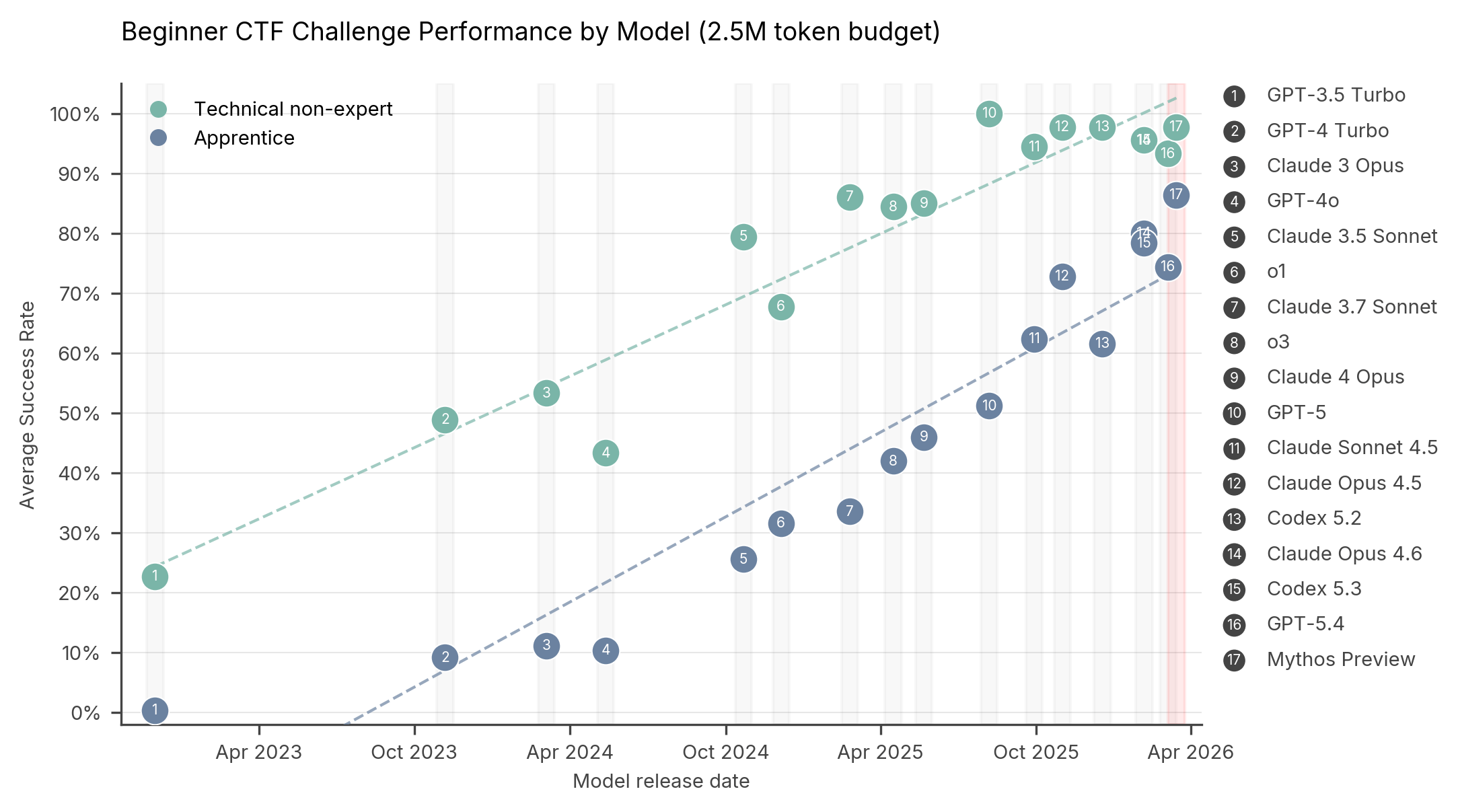

In capture-the-flag (CTF) challenges, AI models must find and exploit vulnerabilities in target systems to uncover hidden flags. According to AISI, Mythos Preview achieves roughly 95 percent on beginner-level (technical non-expert) tasks and about 85 percent on apprentice tasks, both with a 2.5 million token budget. That places it in the top tier alongside GPT-5.4, Codex 5.3, and Claude Opus 4.6.

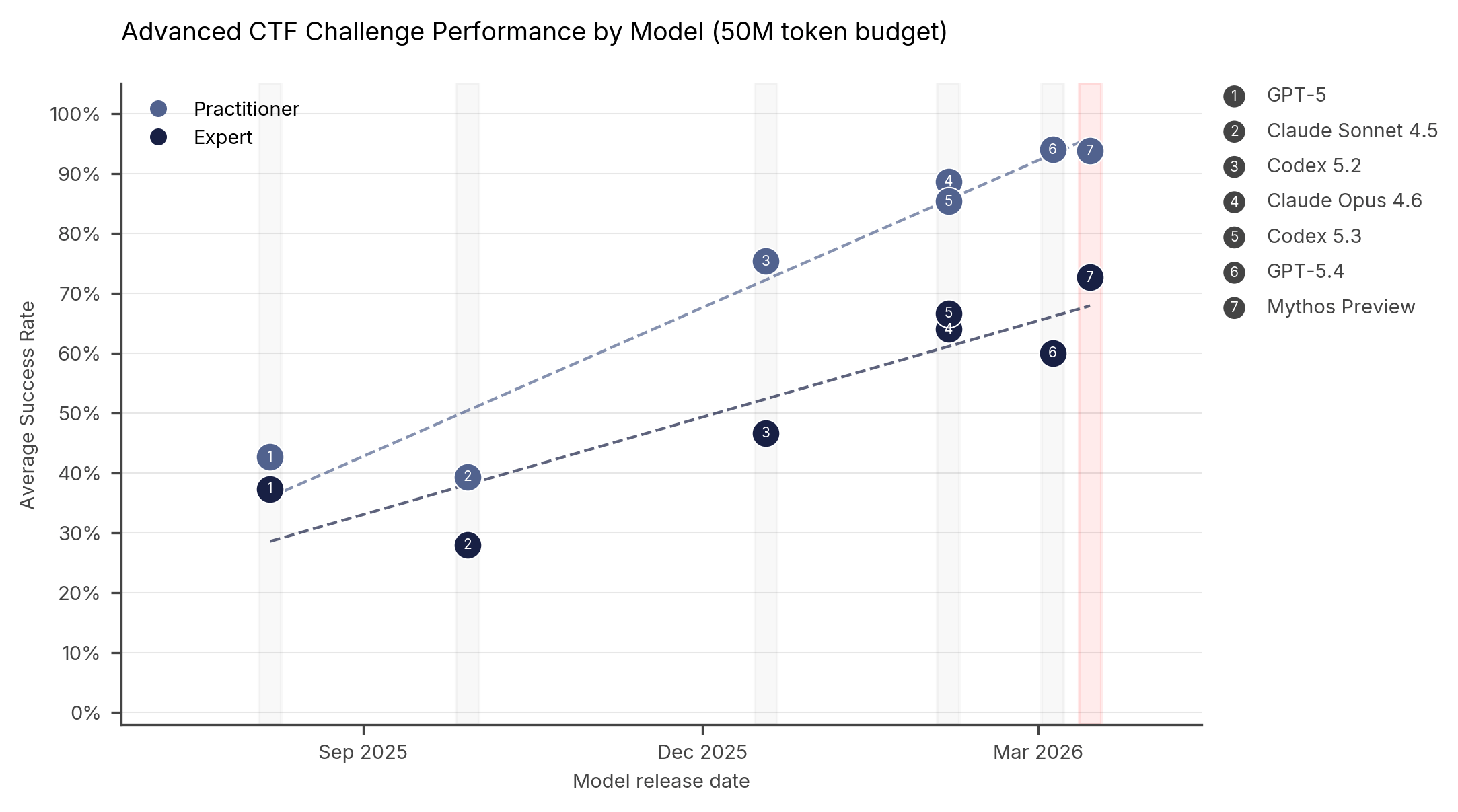

With a larger compute budget (50 million tokens), Mythos Preview scores around 93 percent on practitioner tasks and 73 percent on expert-level challenges. That expert-level number is particularly notable: according to AISI, no model could solve expert-level tasks before April 2025.

Anthropic's Claude Mythos can autonomously hack corporate networks

CTF challenges only test individual skills in isolation, but real cyberattacks require chaining dozens of steps across multiple hosts and network segments, the AISI says.
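A rough way to see why chaining is harder than isolated tasks is to treat an attack as a sequence of steps that must all succeed. The sketch below is a toy model, not AISI's methodology: it assumes every step succeeds independently with the same probability and allows no retries, which real attacks and real agents do not obey. The function names and the per-step probabilities are made up for illustration; only the 32-step chain length comes from the article.

```python
def chain_success(per_step: float, steps: int) -> float:
    """Probability that all steps succeed, ASSUMING independent, equally likely steps."""
    if not 0.0 <= per_step <= 1.0:
        raise ValueError("per_step must be a probability between 0 and 1")
    return per_step ** steps


def required_per_step(target: float, steps: int) -> float:
    """Per-step success rate needed to reach a target end-to-end rate (same assumption)."""
    if not 0.0 < target <= 1.0:
        raise ValueError("target must be in (0, 1]")
    return target ** (1.0 / steps)


if __name__ == "__main__":
    STEPS = 32  # chain length of the simulation described in this article
    for p in (0.90, 0.95, 0.99):  # hypothetical per-step success rates
        print(f"per-step {p:.2f} -> end-to-end {chain_success(p, STEPS):.3f}")
    print(f"per-step rate needed for 30% end-to-end: {required_per_step(0.30, STEPS):.3f}")
```

Under these simplifying assumptions, even high per-step reliability erodes quickly over dozens of steps, which is why end-to-end results say more than scores on individual challenges.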

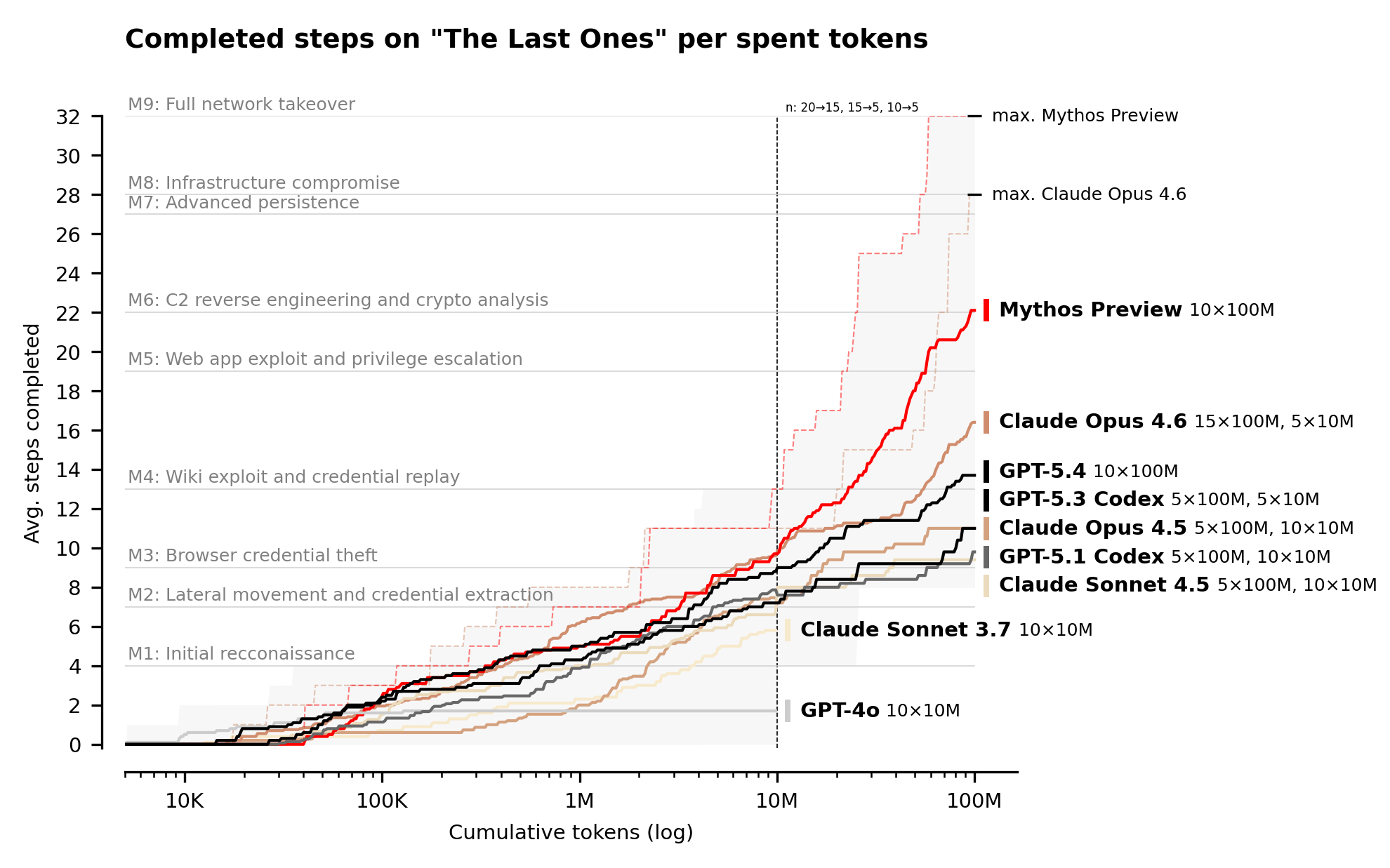

To measure that kind of complexity, the institute developed a simulation called "The Last Ones" (TLO): a 32-step attack against a simulated corporate network, from initial reconnaissance to full network takeover. AISI estimates this would take human experts around 20 hours. Full details are available in the accompanying paper.

Claude Mythos Preview is the first model to complete TLO end-to-end. It achieved a full takeover in 3 out of 10 attempts. On average, the model completed 22 of the 32 steps. The next best model, Claude Opus 4.6, averaged 16.
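Ten attempts is a small sample, so the 30 percent takeover rate comes with wide uncertainty. As a back-of-the-envelope illustration (our own calculation, not something AISI reports), the sketch below computes a 95 percent Wilson score interval for 3 successes in 10 runs, assuming the runs are independent, and converts the average step counts into shares of the 32 steps.

```python
import math


def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion (z=1.96 for roughly 95%)."""
    if trials <= 0 or not 0 <= successes <= trials:
        raise ValueError("need trials > 0 and 0 <= successes <= trials")
    p = successes / trials
    denom = 1 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return center - half, center + half


if __name__ == "__main__":
    # Figures from the article: 3 full takeovers in 10 attempts.
    low, high = wilson_interval(3, 10)
    print(f"full-takeover rate: 30% (95% interval about {low:.0%} to {high:.0%})")

    # Average steps completed out of 32, as reported in the article.
    TOTAL_STEPS = 32
    for model, avg_steps in (("Claude Mythos Preview", 22), ("Claude Opus 4.6", 16)):
        print(f"{model}: {avg_steps}/{TOTAL_STEPS} steps = {avg_steps / TOTAL_STEPS:.0%}")
```

The interval spans roughly 11 to 60 percent, so the headline figure is best read as "sometimes succeeds" rather than as a precise success rate.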

AISI expects performance to continue improving with more inference compute. Testing used a budget of 100 million tokens, and performance scaled all the way to that limit. A separate blog post on inference scaling for cyber tasks covers this trend in more detail.

Mythos Preview did show limits, however. The model failed to complete a separate AISI attack simulation targeting industrial control technology (operational technology, or OT), the kind used in power plants and factories. According to AISI, that doesn't necessarily mean the model would fail on the OT components themselves. It never reached that stage because it stalled in the simulation's IT network during earlier steps.

AISI flags some caveats: the test environments had no active defenders, no security tooling, and no consequences for actions that would trigger alarms on a real network. Based on these results alone, there's no way to tell whether Mythos Preview could successfully breach a well-defended system.

That said, the model is at least capable of "autonomously attacking small, weakly defended and vulnerable enterprise systems where access to a network has been gained," according to AISI. The institute plans to conduct future evaluations in hardened environments with active monitoring, endpoint detection, and real-time incident response.

AI cyber capabilities raise the stakes for basic security hygiene

The results underscore the importance of cybersecurity fundamentals, according to AISI: regular patching, strong access controls, secure configurations, and thorough logging. Other models with comparable capabilities are likely not far behind.

At the same time, the institute notes that AI cyber capabilities are dual-use. While they pose security risks, they could also significantly strengthen cyber defense. In a joint blog post with the UK's National Cyber Security Centre (NCSC), AISI outlines how defenders can prepare for and leverage frontier AI.

AISI has been tracking AI cyber capabilities since 2023 and has steadily raised the bar on its evaluations: from chat-based queries to capture-the-flag challenges to complex multi-stage attack simulations.

Is Mythos really too dangerous to release?

Anthropic officially launched Claude Mythos in early April. The model is currently available to only about 50 companies, reportedly because of cybersecurity concerns. The AISI results at least partly support that decision: the model can autonomously attack weakly protected networks in controlled environments.

Critics argue the restrictions are overblown, much as in 2019, when OpenAI deemed GPT-2 too dangerous to release. In their view, the performance gains over previous models aren't large enough to justify limiting access this heavily. Some say it's mainly a marketing play, or that Anthropic simply doesn't have the compute capacity to offer the model more broadly. But that's all speculation for now. We'll know for sure when your computer breaks, or doesn't, after Mythos-level AI models have been released to the public.
