Deepmind suggests AI should occasionally assign humans busywork so we do not forget how to do our jobs

Key Points

- Researchers from Google Deepmind have proposed a framework for "intelligent AI delegation" covering how authority, responsibility, and accountability are transferred, whether between humans and AI or among AI agents themselves.

- A central ethical recommendation is to deliberately build in inefficiencies: the system should occasionally assign tasks to humans that AI could handle on its own, so that people retain the experience needed to intervene competently in critical situations.

- This is supposed to address the "paradox of automation," where over-reliance on AI leaves human supervisors unable to act when it matters most.

AI agents might soon autonomously distribute tasks to other agents and humans. Google Deepmind researchers argue in a new paper that current methods aren't up to the job and propose a framework to fix that.

The team's concept of "intelligent AI delegation" covers transferring authority, responsibility, and accountability, defining clear roles, and establishing mechanisms for trust. The framework applies to every combination: humans delegating to AI, AI agents delegating to each other, and AI systems handing tasks back to humans.

What organizational theory reveals about AI agent networks

The researchers borrow heavily from how human organizations work. They apply the principal-agent problem to AI systems: a principal delegates to an agent whose goals don't necessarily align with the principal's own. With AI agents, this shows up less as deliberate deception and more as alignment issues such as reward hacking, where a system exploits loopholes in its objective function.

The number of agents an orchestrator or human supervisor can reliably monitor also matters. The "authority gradient," a concept from aviation, describes how large competence gaps between superiors and subordinates stifle communication. In AI agents, sycophancy (the tendency to tell the user what they want to hear) plays a similar role: an agent may not push back even on tasks it shouldn't take on.

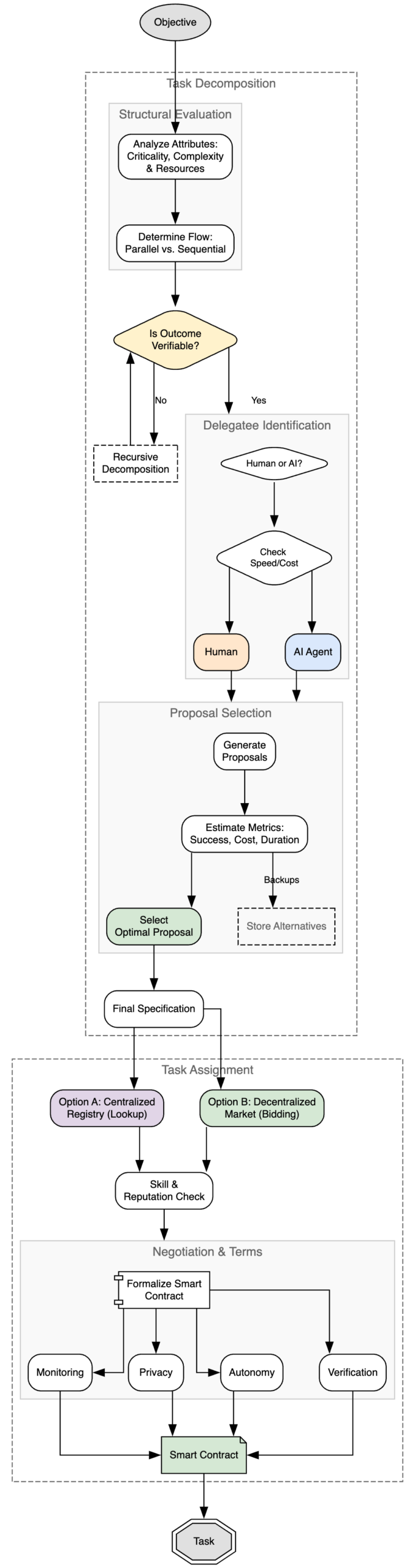

Verification as the core principle of AI delegation

The framework rests on five pillars: continuous agent evaluation, dynamic task redistribution when conditions change, traceable documentation of all decisions, reputation systems that coordinate open marketplaces, and safeguards that prevent individual errors from cascading through the entire network.

The key idea is "contract-first decomposition": a task can only be delegated if its outcome can be verified. If a subtask's outcome is too subjective, too costly, or too complex to verify, the subtask has to be broken down further. Instead of centralized directories, the researchers argue for decentralized marketplaces with smart contracts that protect both the delegator and the agent taking on the task.
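To make the decomposition rule concrete, here is a minimal sketch in Python. It assumes each task already carries a yes/no flag saying whether its outcome can be verified at acceptable cost; the `Task` class, the `plan_delegation` function, and the example task names are hypothetical illustrations, not code or terminology from the paper. The rule applied is the one described above: delegate a task if it is verifiable, otherwise break it down, and keep (or escalate to a human) anything that is neither verifiable nor decomposable.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    """Hypothetical task node; not a structure defined in the paper."""
    name: str
    # Assumed to be known up front: can the outcome be checked
    # (e.g. by a test or an objective metric) at acceptable cost?
    verifiable: bool
    subtasks: list["Task"] = field(default_factory=list)


def plan_delegation(task: Task) -> tuple[list[str], list[str]]:
    """Split a task tree into delegable parts and parts to retain.

    A verifiable task is delegated as a whole. A non-verifiable task is
    decomposed further; if it cannot be decomposed, it stays with the
    delegator (or goes back to a human).
    """
    if task.verifiable:
        return [task.name], []
    if not task.subtasks:
        return [], [task.name]
    delegate: list[str] = []
    retain: list[str] = []
    for sub in task.subtasks:
        d, r = plan_delegation(sub)
        delegate.extend(d)
        retain.extend(r)
    return delegate, retain


# Hypothetical example: a report whose overall quality is subjective,
# but whose data-gathering steps can be checked.
report = Task("write market report", verifiable=False, subtasks=[
    Task("collect Q3 sales figures", verifiable=True),
    Task("compute year-over-year growth", verifiable=True),
    Task("judge overall tone of the report", verifiable=False),
])

print(plan_delegation(report))
# (['collect Q3 sales figures', 'compute year-over-year growth'], ['judge overall tone of the report'])
```

One design choice worth noting: a verifiable parent task is delegated whole rather than split, since the sketch only decomposes when verification fails.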

Security is a major concern. The paper flags malicious agents that steal data or deliver falsified results, "agentic viruses" (self-propagating prompts), and "cognitive monoculture": if too many agents run on the same handful of foundation models, a single vulnerability could take down large parts of the network.

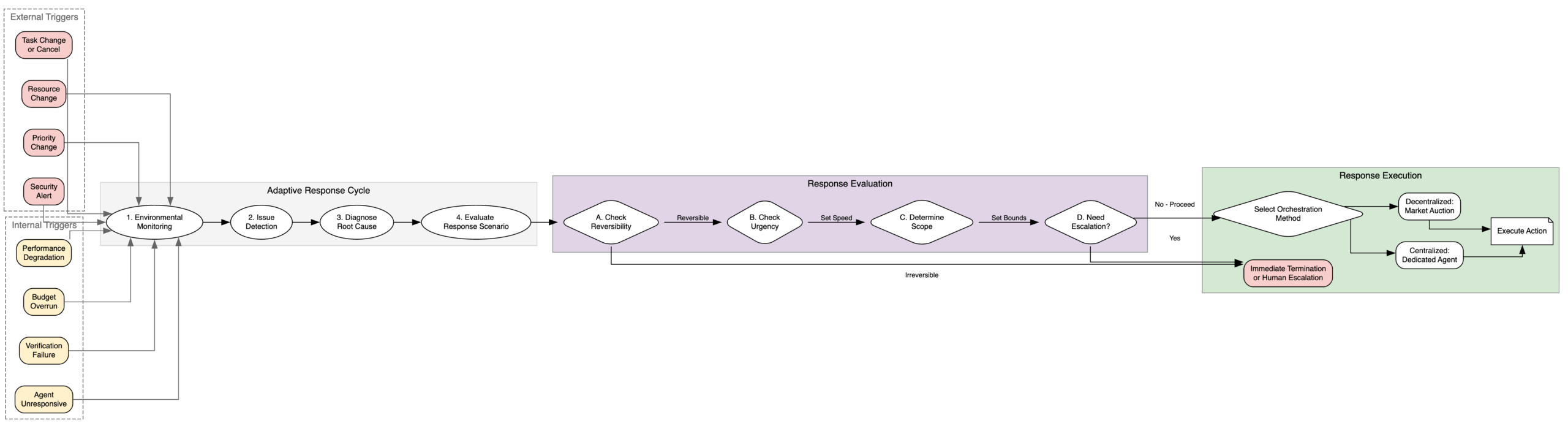

The adaptive coordination cycle: external and internal triggers kick off a multi-stage response chain. | Image: Deepmind

Deliberate inefficiency as a safeguard against human skill loss

One recommendation stands out: the researchers suggest deliberately building in inefficiencies. The system should occasionally hand people tasks it could handle on its own, specifically so humans don't lose their skills. The idea stems from the "paradox of automation": if AI takes over all routine tasks, human supervisors lose the hands-on experience they need to step in when something goes wrong. The result is a fragile system in which people are technically responsible but no longer understand what is actually happening.
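A minimal sketch of what such a deliberate inefficiency could look like in a task router, written in Python. The paper, as described here, does not specify a mechanism; the `route` function, the `Assignment` record, and especially the `practice_rate` knob (the fraction of AI-capable tasks diverted to a human) are hypothetical. Tasks the AI cannot handle always go to the human; a random share of the rest is sent to the human for practice.

```python
import random
from dataclasses import dataclass


@dataclass
class Assignment:
    task: str
    assignee: str  # "ai" or "human"
    reason: str


def route(tasks: list[tuple[str, bool]], practice_rate: float,
          seed: int = 0) -> list[Assignment]:
    """Route tasks to AI or a human, diverting some AI-capable tasks
    to the human so they keep hands-on experience.

    `tasks` holds (name, ai_can_handle) pairs. `practice_rate` is a
    hypothetical tuning knob, not a value from the paper.
    """
    if not 0.0 <= practice_rate <= 1.0:
        raise ValueError("practice_rate must be in [0, 1]")
    rng = random.Random(seed)  # seeded so routing is reproducible
    out = []
    for name, ai_can_handle in tasks:
        if not ai_can_handle:
            out.append(Assignment(name, "human", "beyond AI capability"))
        elif rng.random() < practice_rate:
            # The deliberate inefficiency: a task the AI could do.
            out.append(Assignment(name, "human", "skill maintenance"))
        else:
            out.append(Assignment(name, "ai", "routine"))
    return out


queue = [("reconcile invoices", True), ("triage alerts", True),
         ("approve unusual refund", False)]
for a in route(queue, practice_rate=0.2, seed=42):
    print(a.task, "->", a.assignee, f"({a.reason})")
```

Random sampling is only one option; a fixed quota or a schedule tied to each person's recent practice would serve the same purpose.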

The researchers also flag a "moral crumple zone," a setup where people have no real control over outcomes but sit in delegation chains specifically to absorb liability when things go wrong.

The team also examined how well existing agent protocols, such as Anthropic's Model Context Protocol (MCP), Google's Agent2Agent protocol (A2A), and the Agent Payments Protocol (AP2), hold up for this kind of delegation. According to the paper, none of them fully meets the requirements. MCP offers only binary access without finer-grained authorization levels, while A2A lacks support for cryptographic verification of results.
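To illustrate the difference between binary access and graded authorization, here is a small Python sketch. Under binary access, a tool is simply available to an agent or not; graded authorization orders permissions so an agent can, for example, draft an action without being allowed to execute it. The `Permission` levels and the `graded_check` function are hypothetical and are not part of MCP, A2A, or any other protocol's API.

```python
from enum import IntEnum


class Permission(IntEnum):
    """Hypothetical graded authorization levels (not part of MCP or A2A)."""
    NONE = 0
    READ = 1
    PROPOSE = 2   # may draft an action that a principal must approve
    EXECUTE = 3   # may act without further approval


def graded_check(granted: Permission, required: Permission) -> bool:
    """Allow an action only if the granted level meets the required one."""
    return granted >= required


# An agent that may read a payments ledger and draft refunds,
# but not issue them on its own.
agent_level = Permission.PROPOSE
print(graded_check(agent_level, Permission.READ))     # True
print(graded_check(agent_level, Permission.PROPOSE))  # True
print(graded_check(agent_level, Permission.EXECUTE))  # False
```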
