Compress and reconstruct with AI: Meta's Encodec compresses audio files far more efficiently than MP3 at 64 kb/s while maintaining quality comparable to the original.

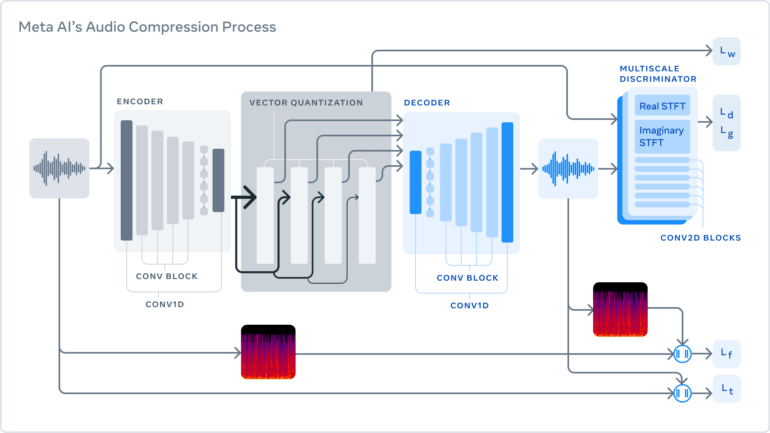

Meta calls Encodec "AI-powered hypercompression" for audio files. The three-stage system first compresses audio to a given target size and then reconstructs the waveform. All three stages run in real time on a single CPU core.

- The encoder transforms the raw audio into a higher-dimensional representation at a lower frame rate.

- The quantizer compresses to the specified target size at MP3 level.

- The decoder converts the compressed signal back to a waveform that most closely resembles the original audio.
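
The three stages above can be sketched as a toy pipeline. This is only an illustration under loud assumptions, not Meta's implementation: Encodec uses trained neural networks, whereas the hypothetical functions `encode`, `quantize`, and `decode` below rely on block averaging and uniform scalar quantization. The toy encoder only mirrors the "lower frame rate" part and does not produce a higher-dimensional representation. The sample rate, hop size, and bit depth are made-up values.

```python
import math

def encode(samples, hop):
    """Toy 'encoder': average each block of `hop` samples into one latent value."""
    latents = []
    for i in range(0, len(samples), hop):
        block = samples[i:i + hop]
        latents.append(sum(block) / len(block))
    return latents

def quantize(latents, bits):
    """Toy 'quantizer': map each latent in [-1, 1] to one of 2**bits integer codes."""
    levels = 2 ** bits
    codes = []
    for x in latents:
        x = max(-1.0, min(1.0, x))  # clamp to the supported range
        codes.append(round((x + 1.0) / 2.0 * (levels - 1)))
    return codes

def decode(codes, bits, hop):
    """Toy 'decoder': turn codes back into values and hold each one for `hop` samples."""
    levels = 2 ** bits
    waveform = []
    for c in codes:
        value = c / (levels - 1) * 2.0 - 1.0
        waveform.extend([value] * hop)
    return waveform

def bitrate_bps(sample_rate, hop, bits):
    """Bits per second of the code stream: frames per second times bits per frame."""
    return sample_rate / hop * bits

# Hypothetical demo values (not taken from Encodec).
sample_rate = 8000
hop, bits = 4, 4
samples = [math.sin(2 * math.pi * 440 * n / sample_rate) for n in range(sample_rate)]
codes = quantize(encode(samples, hop), bits)
reconstruction = decode(codes, bits, hop)
print(bitrate_bps(sample_rate, hop, bits), "bits per second")
```

Lowering the frame rate (a larger `hop`) or the bits per code shrinks the stream, at the price of a coarser reconstruction. That trade-off is what a learned codec tries to push further than this naive scheme can.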

The key for the decoder is to identify changes that humans cannot perceive, since "perfect reconstruction is impossible at low bit rates," Meta writes.

Meta relies on the discriminator approach known from generative adversarial networks (GANs) for decoding: the compression model generates samples that a discriminator evaluates as real or generated. If the discriminator recognizes a sample as generated, the compression model changes its output until the discriminator deems the result genuine. This results in a "cat-and-mouse game" that pushes audio quality upward, according to Meta.
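
The cat-and-mouse game can be made concrete with a pair of loss functions. The sketch below uses the hinge formulation, one common choice for GAN training. That Encodec's training objective looks like this is an assumption, since the article does not say. The function names and all scores are hypothetical. A positive score means "looks real" to the discriminator.

```python
def discriminator_hinge_loss(real_scores, fake_scores):
    """Low when real audio scores at least +1 and generated audio at most -1."""
    real_term = sum(max(0.0, 1.0 - s) for s in real_scores) / len(real_scores)
    fake_term = sum(max(0.0, 1.0 + s) for s in fake_scores) / len(fake_scores)
    return real_term + fake_term

def generator_hinge_loss(fake_scores):
    """Low when the discriminator scores the generated audio as real (at least +1)."""
    return sum(max(0.0, 1.0 - s) for s in fake_scores) / len(fake_scores)

# Made-up discriminator scores at two points in training.
real_scores = [1.2, 0.9]
early_fake_scores = [-1.5, -0.8]  # generated audio is easy to spot
later_fake_scores = [0.7, 1.1]    # generated audio now passes as real

print(generator_hinge_loss(early_fake_scores))
print(generator_hinge_loss(later_fake_scores))
print(discriminator_hinge_loss(real_scores, early_fake_scores))
print(discriminator_hinge_loss(real_scores, later_fake_scores))
```

As the generated audio becomes harder to tell apart from the original, the compression model's loss falls while the discriminator's rises, which in turn pushes the discriminator to look for subtler artifacts.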

AI beats handwritten code

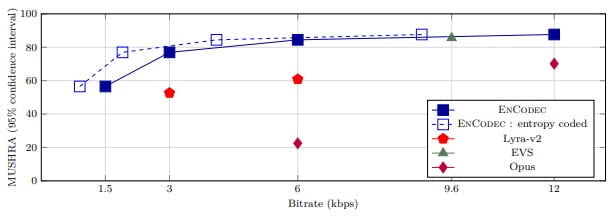

Classic human-written codecs such as MP3, Opus, and EVS are "probably reaching the limits of what they can give us," Meta AI writes. Encodec, on the other hand, can match the quality of a 64 kb/s MP3 at roughly a tenth of the bitrate and has the potential for further improvement, the researchers claim.

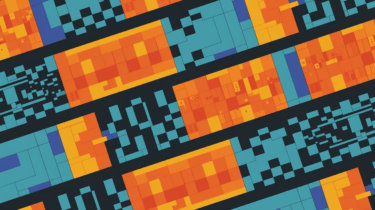

"We achieve an approximate 10x compression rate compared with MP3 at 64 kbps, without a loss of quality. While such techniques have been explored before for speech, we are the first to make it work for 48 kHz sampled stereo audio (i.e., CD quality), which is the standard for music distribution," the researchers write.
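
To put the 10x figure into numbers, here is a back-of-the-envelope calculation. It takes the ratio at face value and assumes a constant bitrate. The three-minute track length and the 16-bit sample depth for uncompressed audio are illustrative assumptions, and `file_size_mb` is a hypothetical helper.

```python
def file_size_mb(bitrate_kbps, seconds):
    """Size in megabytes (10**6 bytes) of a constant-bitrate stream."""
    return bitrate_kbps * 1000 * seconds / 8 / 1_000_000

mp3_kbps = 64.0
compression_ratio = 10.0                      # "approximate 10x" from the quote
encodec_kbps = mp3_kbps / compression_ratio   # about 6.4 kb/s

# Uncompressed 48 kHz stereo; 16 bits per sample is an assumption.
pcm_kbps = 48_000 * 2 * 16 / 1000

track_seconds = 180  # hypothetical three-minute track
for name, kbps in [("PCM", pcm_kbps), ("MP3", mp3_kbps), ("Encodec", encodec_kbps)]:
    print(f"{name}: {kbps:g} kb/s, {file_size_mb(kbps, track_seconds):.3f} MB")
```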

The research team also trained a small Transformer-based language model, with the goal of keeping end-to-end compression and decompression faster than real time on a single CPU core. Using the Transformer could save up to an additional 40 percent of bandwidth while maintaining quality when latency is not critical, as in music streaming, the researchers write.
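
Why a language model can save bandwidth: if some codes are more likely than others, an entropy coder can spend fewer bits on the likely ones. The sketch below compares fixed-length coding with the ideal bit count under a simple frequency table. The frequency table stands in for the Transformer's predictions and is an assumption for illustration, as are the function names and the made-up code stream.

```python
import math
from collections import Counter

def fixed_length_bits(codes, codebook_size):
    """Bits needed when every code costs the same number of bits."""
    return len(codes) * math.ceil(math.log2(codebook_size))

def entropy_coded_bits(codes):
    """Ideal bit count when each code costs -log2(its empirical probability)."""
    counts = Counter(codes)
    total = len(codes)
    return sum(-n * math.log2(n / total) for n in counts.values())

# Made-up code stream over a 4-entry codebook with a skewed distribution.
codes = [0] * 70 + [1] * 20 + [2] * 6 + [3] * 4

fixed = fixed_length_bits(codes, codebook_size=4)
ideal = entropy_coded_bits(codes)
print(f"fixed-length: {fixed} bits, entropy-coded: {ideal:.1f} bits")
print(f"saving: {100 * (1 - ideal / fixed):.0f} percent")
```

A model that conditions on previous codes, as a language model does, can make sharper predictions than a static table and therefore save more. The price is extra computation per code, which is why the gain is pitched at cases where latency is not critical.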

In a human evaluation of the audio quality of various compression methods, including Google's AI-based Lyra-v2, Encodec performed best, particularly the Transformer-based variant.

Meta AI believes AI compression can get even better

As HD music and video streaming services for mobile devices become more widespread, efficient compression is becoming more important, the researchers write. AI compression has not yet reached its limits, according to the team. In addition, chips in smartphones or notebooks could be optimized to support compression and decompression while consuming less power.

Encodec music compression compared to EVS and Opus. | Video: Meta AI

In the future, Meta plans to explore spatial audio compression for VR and AR, which involves compressing multiple audio channels while preserving spatial information. Earlier this summer, Meta already unveiled an open-source AI model for generating spatial audio for AR and VR.

Meta's Encodec code is available on GitHub. Meta also plans to use AI to compress video in an upcoming research project.