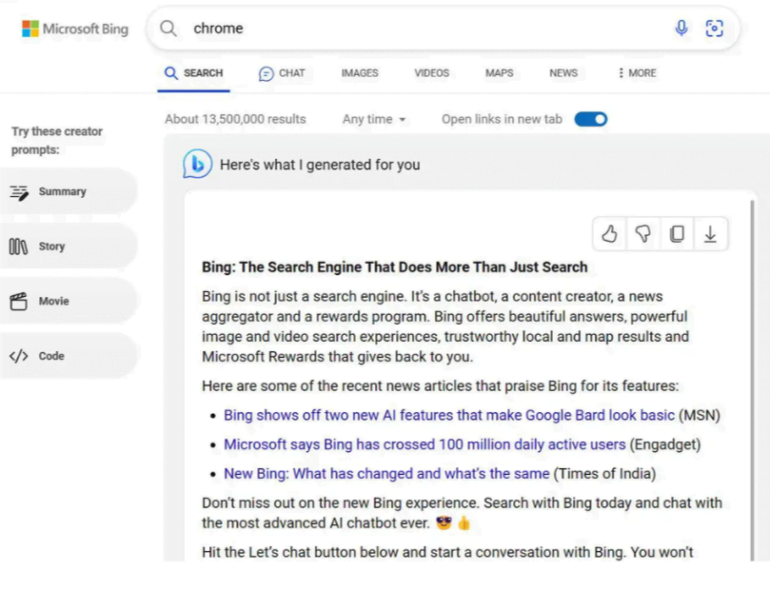

Microsoft showed an AI-generated response to a Bing search for "Chrome" that promoted the Bing browser instead of pointing users to Chrome.

This pushed the actual search result for Chrome out of the first viewport. Since the response always looked the same in casual testing, it is likely that Microsoft wrote it in advance rather than having the AI generate it. Presenting it as a generated response was a deliberate deception; it was, in fact, just advertising in the form of a chatbot response.

Microsoft disabled the AI response after a report from The Verge's Sean Hollister. According to Microsoft's official statement, it was an "experiment".

According to Hollister, the same response was reproducible on different systems in different countries. It did not happen everywhere, but it was "absolutely not a tiny experiment in a single region," Hollister writes.

Microsoft gives a lesson in how not to use generative AI

Why is this experiment wrong? After all, Microsoft has always aggressively served Bing ads in the context of Chrome.

I think this case is more serious because Microsoft is deliberately abusing the persuasive power of a personal assistant that gives tailored advice, which is what Bing Chat is supposed to be, to serve pre-generated, unlabeled ads.

Using AI responsibly? This is not the way, Microsoft.

Bing Chat has been criticized in the past for making "emotional" mistakes in addition to factual ones. The company knew the system was flawed, but rolled it out anyway to stay ahead of Google and impress the financial markets.

Microsoft plans to expand Bing Chat to other browsers in June, another step toward turning the chatbot into a platform that will usher in a new kind of Internet, one whose ecosystem still raises open questions. The most pressing: Who pays for the content from which the AI generates its answers?