Microsoft unveils Fara-7B, a compact model for running AI-driven computer control locally

Microsoft's new Fara-7B model is a compact AI system built to operate user interfaces purely through visual input. Despite its small size, it aims to keep pace with much larger systems while running locally on consumer devices.

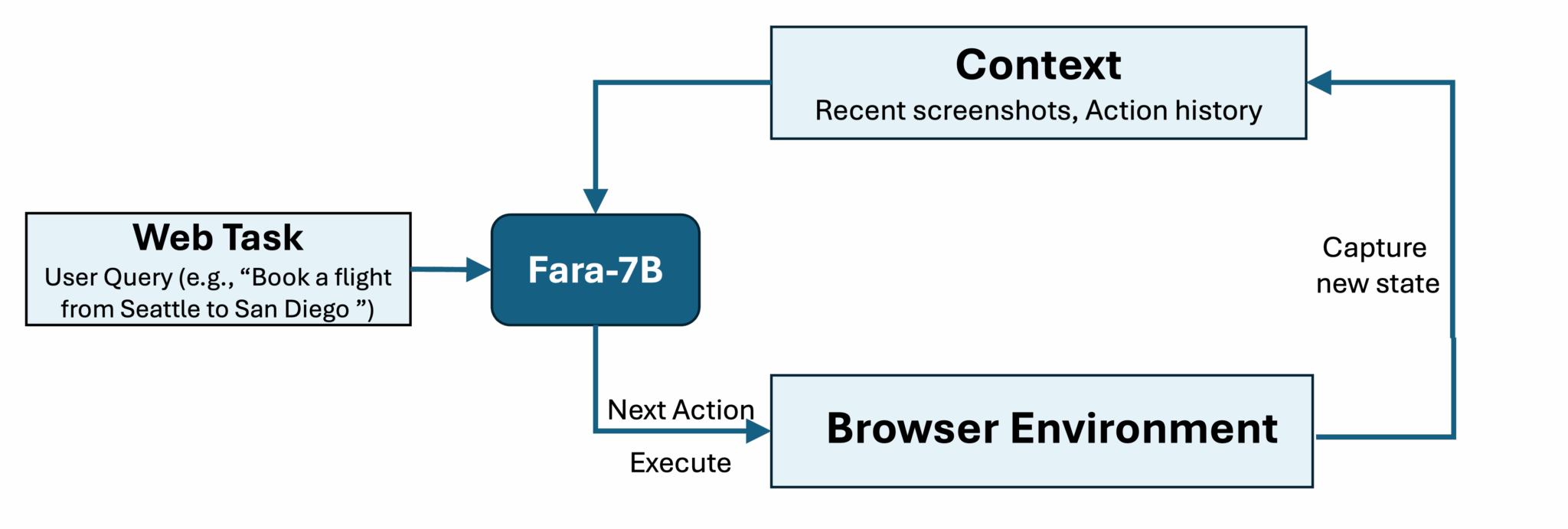

Fara-7B is based on Alibaba's Qwen2.5-VL-7B and, according to Microsoft, relies solely on visual information. Instead of tapping into accessibility trees or parsing HTML, it works directly off screenshots of the interface. The model runs in a loop of observing, thinking, and acting, predicting click coordinates or generating keystrokes as needed. It uses the last three screenshots, previous actions, and user input to decide what to do next.
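
The observe-think-act cycle described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Microsoft's implementation: `run_agent`, `Action`, `AgentContext`, and the `capture`, `policy`, and `execute` callbacks are hypothetical names, and the action set (`click`, `type`, `stop`) is simplified. The only detail taken from the article is what the model sees at each step: the last three screenshots, the previous actions, and the user's task.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable, Deque, List, Optional, Tuple


@dataclass
class Action:
    # Hypothetical, simplified action space: "click", "type", or "stop".
    kind: str
    xy: Optional[Tuple[int, int]] = None  # predicted click coordinates
    text: str = ""                        # keystrokes for "type" actions


@dataclass
class AgentContext:
    task: str
    # Sliding window: appending a fourth screenshot evicts the oldest one.
    screenshots: Deque[bytes] = field(default_factory=lambda: deque(maxlen=3))
    history: List[Action] = field(default_factory=list)


def run_agent(
    task: str,
    capture: Callable[[], bytes],                                  # hypothetical: grabs a screenshot
    policy: Callable[[str, List[bytes], List[Action]], Action],    # hypothetical: the model call
    execute: Callable[[Action], None],                             # hypothetical: performs the action
    max_steps: int = 30,
) -> AgentContext:
    ctx = AgentContext(task)
    for _ in range(max_steps):
        ctx.screenshots.append(capture())                                    # observe
        action = policy(ctx.task, list(ctx.screenshots), list(ctx.history))  # think
        ctx.history.append(action)
        if action.kind == "stop":
            break
        execute(action)                                                      # act
    return ctx
```

A `deque` with `maxlen=3` drops the oldest screenshot automatically, which keeps the visual context bounded no matter how many steps a task takes.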

With seven billion parameters, Fara-7B is small enough to run directly on local hardware. Microsoft says this setup reduces latency and improves privacy because all data stays on the device.

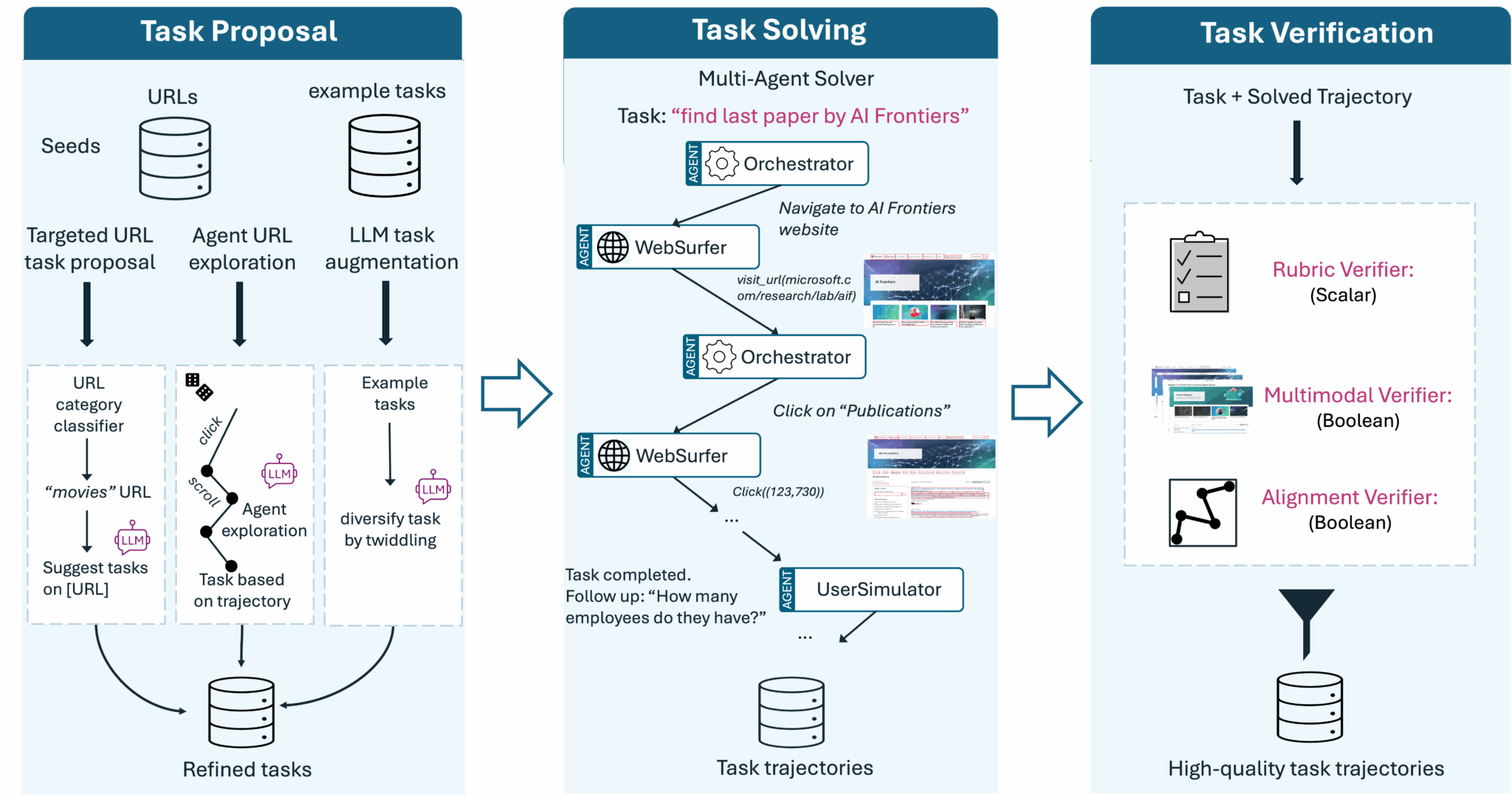

A major challenge for these kinds of computer-use agents is the lack of usable training data. Recording click paths manually is extremely time-consuming. Microsoft addressed this with a synthetic data pipeline.

The team used Magentic-One, its in-house multi-agent framework, to generate task solutions automatically. An Orchestrator agent produces step-by-step plans, while a WebSurfer agent carries them out. Microsoft then collected the successful task runs (roughly 145,000 trajectories with about one million steps in total) and distilled this knowledge into the smaller Fara-7B model.
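
Assuming one training example per step, the figures above work out to roughly seven steps per trajectory (1,000,000 / 145,000 ≈ 6.9). The sketch below shows that assumed layout: discard failed runs, then flatten each successful trajectory into per-step examples for supervised fine-tuning. `Step`, `Trajectory`, `build_sft_examples`, and the `succeeded` flag are hypothetical; the article does not describe Microsoft's actual data format or how success was verified.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Step:
    screenshot_id: str  # reference to the frame the teacher agents observed
    thought: str        # reasoning text produced during the run
    action: str         # e.g. "click(412, 88)" (hypothetical action format)


@dataclass
class Trajectory:
    task: str
    steps: List[Step]
    succeeded: bool     # assumed to be set by some separate verification step


def build_sft_examples(trajs: List[Trajectory]) -> List[Dict[str, object]]:
    """Keep only successful runs and emit one training example per step."""
    examples: List[Dict[str, object]] = []
    for t in trajs:
        if not t.succeeded:
            continue  # failed runs are not used as training targets
        for i, step in enumerate(t.steps):
            examples.append({
                "task": t.task,
                "screenshot": step.screenshot_id,
                # The student model sees the actions taken so far...
                "previous_actions": [s.action for s in t.steps[:i]],
                # ...and learns to reproduce the teacher's thought and action.
                "target": f"{step.thought}\n{step.action}",
            })
    return examples
```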

The company also introduced a new benchmark, WebTailBench, to cover task types that were underrepresented in older test suites, including price comparisons and job searches.

Efficiency that challenges larger models

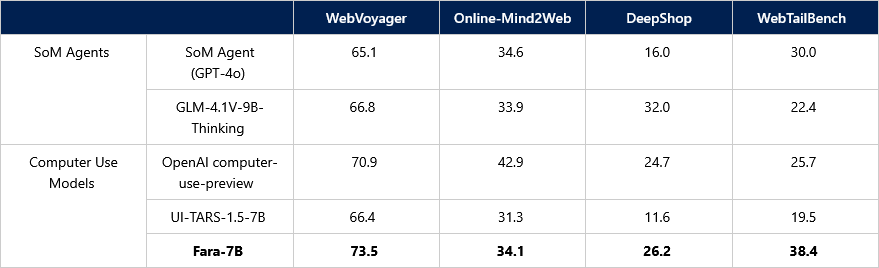

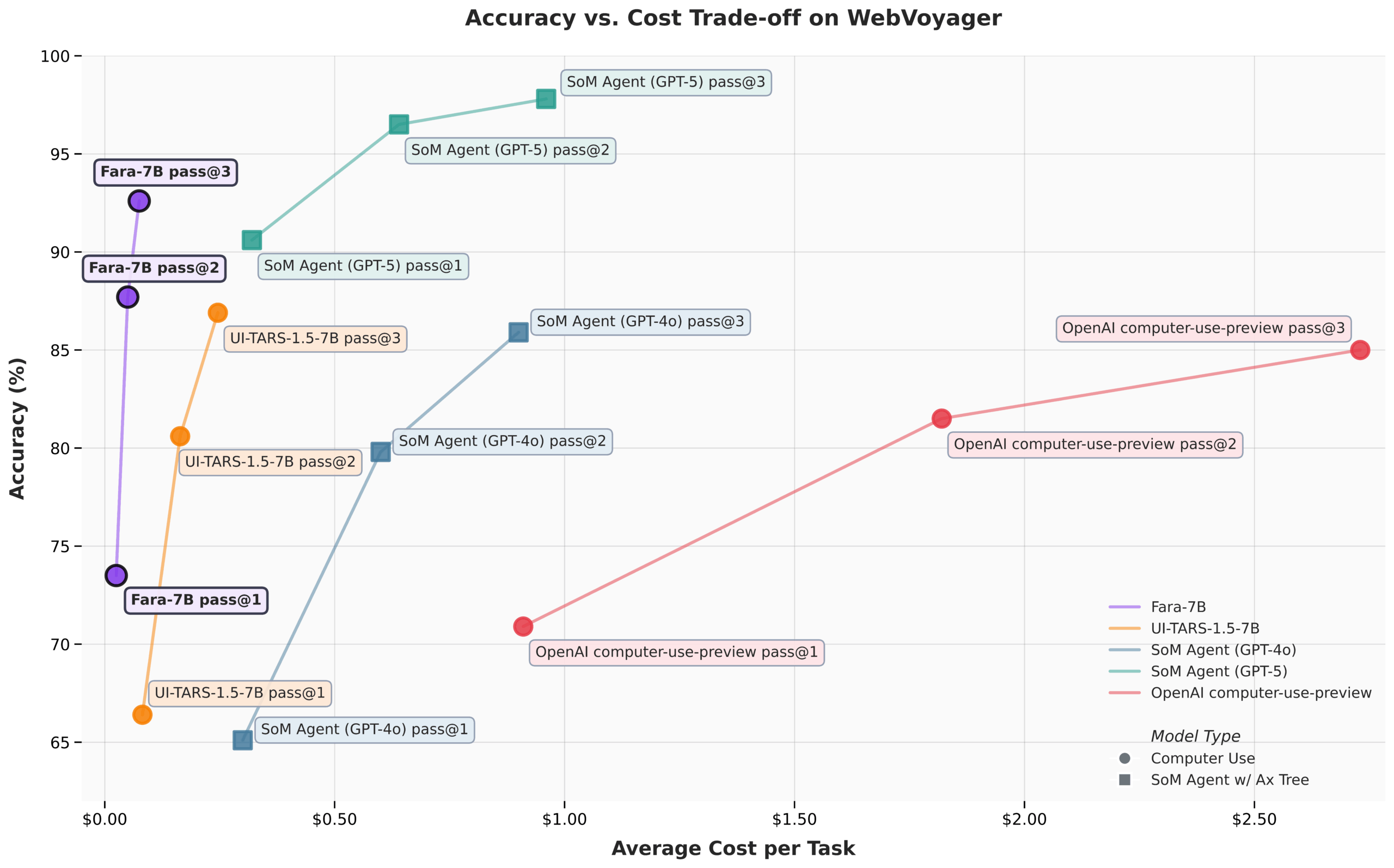

In Microsoft's benchmarks, the model performs strongly for its size. On WebVoyager, Fara-7B reaches a success rate of 73.5 percent. According to the team, this puts it ahead of UI-TARS-1.5-7B and, on this particular benchmark, even ahead of OpenAI's commercial GPT-4o. An independent evaluation by Browserbase, using human reviewers, arrived at a 62 percent success rate.

Microsoft also highlights the model's efficiency. On average, Fara-7B completes a task in about 16 steps, while competing models such as UI-TARS average around 41. Fewer steps mean fewer model calls per task, which directly lowers the cost of running the agent.

Microsoft notes that the model still makes mistakes, can misunderstand instructions, and is vulnerable to hallucinations. To reduce risk, the system is trained to pause at certain critical points - for example, before sending an email or initiating a financial transaction - so the user can confirm the action.
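
Microsoft trains the model itself to stop at these critical points. Agent harnesses can add a second, rule-based safeguard on top of that; the sketch below shows such a confirmation gate, which is a separate technique and not part of Fara-7B as described here. `ProposedAction`, `CRITICAL_KINDS`, and `gated_execute` are hypothetical names, and the set of critical action kinds is an illustrative assumption.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ProposedAction:
    kind: str          # hypothetical action type, e.g. "click" or "send_email"
    description: str   # human-readable summary shown when asking for confirmation


# Hypothetical set of irreversible actions that always require user approval.
CRITICAL_KINDS = frozenset({"send_email", "submit_payment", "place_order"})


def gated_execute(
    action: ProposedAction,
    perform: Callable[[ProposedAction], None],
    confirm: Callable[[ProposedAction], bool],
) -> str:
    """Run the action unless it is critical and the user declines."""
    if action.kind in CRITICAL_KINDS and not confirm(action):
        return "skipped"
    perform(action)
    return "executed"
```

Keeping the check outside the model means an irreversible action still requires confirmation even if the model fails to pause on its own.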

The model is available as an experimental open-weight release under an MIT license on Hugging Face and Microsoft Foundry. Users can also test it locally on Copilot+ PCs running Windows 11.

Companies including OpenAI, Anthropic, Google, and Manus AI have been pursuing AI-driven interface agents for some time. So far, many of these agents handle tasks slowly or fail outright, often without delivering real efficiency gains. They also remain vulnerable to issues like prompt injection.

A possible path forward is to move beyond purely visual interfaces and instead provide agents with interaction surfaces designed specifically for them. Researchers are already exploring standardized agent interaction concepts, which could significantly boost both the efficiency and the safety of AI-driven computer-use systems.
