Programmers using AI ask fewer questions and may learn less deeply than with peers

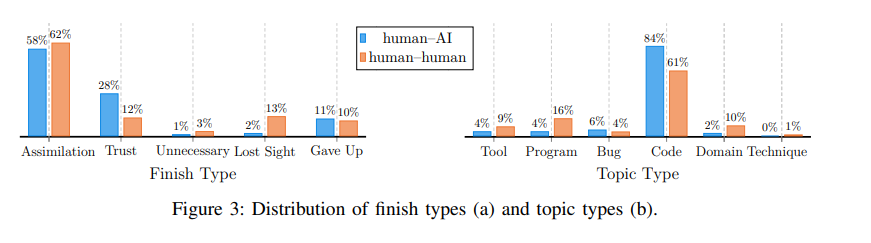

Programmers who rely on AI assistants tend to ask fewer questions and learn more superficially, according to new research from Saarland University. A team led by Sven Apel found that students were less critical of the code suggestions they received when working with tools like GitHub Copilot. In contrast, pairs of human programmers asked more questions, explored alternatives, and learned more from one another.

In the experiment, 19 students worked in pairs: six human-only pairs (12 students) and seven students each paired with an AI assistant. According to Apel, many of the AI-assisted participants simply accepted code suggestions because they assumed the AI's output was already correct. He noted that this habit can introduce mistakes that later require significant effort to fix. Apel said AI tools can be helpful for straightforward tasks, but complex problems still benefit from real collaboration between humans.
