RunwayML's Act-One model turns smartphone videos into lifelike facial animations

RunwayML has introduced a new AI model called Act-One that aims to streamline the facial animation process. For film production, the technology could have far-reaching implications beyond motion capture.

Traditional facial animation methods often involve complex workflows with specialized equipment. Act-One aims to simplify this by transferring an actor's performance directly to an animated character using only video and voice recordings. According to RunwayML, the model can capture and transfer even subtle details of a performance, and a smartphone is all that is needed.

Demo video for Act-One. | Video: RunwayML

One actor playing multiple roles

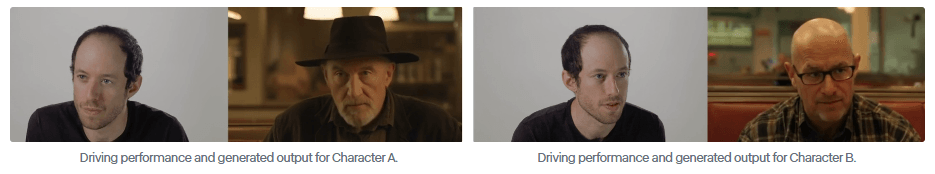

RunwayML highlights the flexibility of Act-One. The model can be applied to different reference images and is designed to maintain realistic facial expressions even when the target character's proportions differ from the original video source.

In a demonstration video, RunwayML shows how Act-One transfers a performance from a single input video to different character designs and styles. The company says the model produces cinematic, realistic results and works well with different camera angles and focal lengths.

While the obvious use case is transferring human facial expressions and voice to animated characters for games and animated movies, RunwayML also presents another scenario: applying acting performances to photorealistic avatars.

The company shows how a single actor can play two virtual avatars in a single scene, promising that "you can now create narrative content using nothing more than a consumer grade camera and one actor reading and performing different characters from a script."

Video: RunwayML

RunwayML says access to Act-One is being phased in for users now and will soon be available to everyone.
