Stable Diffusion beats JPG and WebP in image compression

Large image models like Stable Diffusion can generate many graphics in a very short time. But that's not all, as developer Matthias Bühlmann shows.

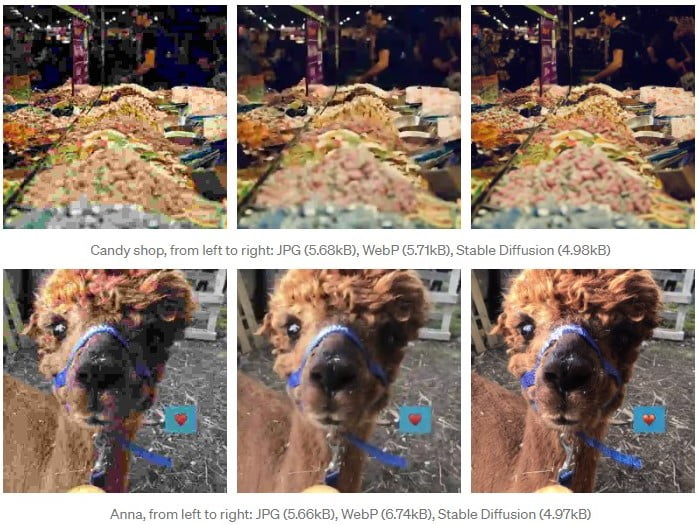

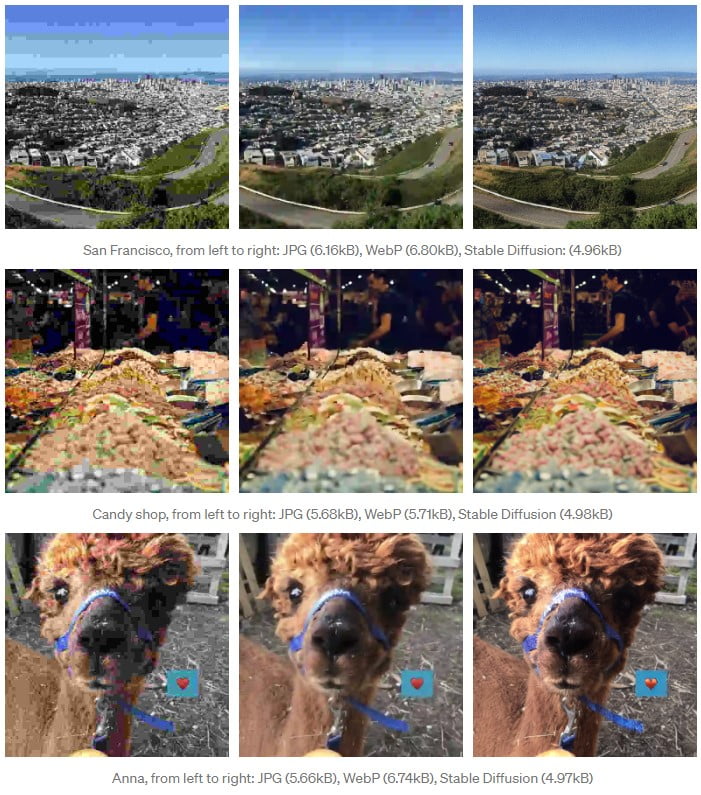

Bühlmann experimented with Stable Diffusion in application scenarios besides image generation. He found that the AI model can deliver better image quality at high compression than the JPG and WebP web standards at 512 x 512 pixel resolution.

According to Bühlmann, stable diffusion compression offers "vastly superior image quality" at a smaller file size compared to JPG and WebP.

Bühlmann compares his compression method to an artist with photographic memory who sees the uncompressed image and then reproduces it as accurately - and as reduced - as possible. The process can preserve even fine details such as the grain of the camera.

Deceptively real AI artifacts

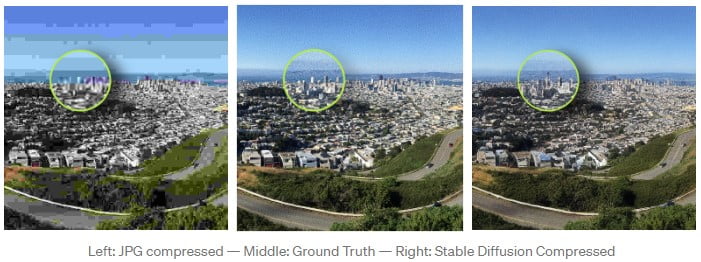

However, Bühlmann's method has one crucial drawback: it can alter the content of the image, such as the shape of buildings. The deceptive aspect of this is that the compressed image still looks high-quality and thus authentic.

Typical compression artifacts in JPG and WebP can also significantly alter the image, but usually, these are clearly identifiable as artifacts. Bühlmann illustrates the problem with the following image.

The stable diffusion model 1.4 used by Bühlmann also has issues with the compression of faces and text. Version 1.5 should already be able to handle faces better, and Bühlmann intends to update his method further.

He sees Stable Diffusion as "very promising as a basis for a lossy image compression scheme" with "a lot more potential" beyond his current experiments.

The programmer emphasizes as a major advantage of his approach that it builds on the Stable Diffusion model that has already been trained. This means that there are no additional training costs for special image compression models - even though these could possibly deliver even better results. Stable Diffusion's training cost about $600,000.

Bühlmann describes his method in detail on his Medium blog and makes his code available on Google Colab.

AI News Without the Hype – Curated by Humans

As a THE DECODER subscriber, you get ad-free reading, our weekly AI newsletter, the exclusive "AI Radar" Frontier Report 6× per year, access to comments, and our complete archive.

Subscribe nowAI news without the hype

Curated by humans.

- More than 16% discount.

- Read without distractions – no Google ads.

- Access to comments and community discussions.

- Weekly AI newsletter.

- 6 times a year: “AI Radar” – deep dives on key AI topics.

- Up to 25 % off on KI Pro online events.

- Access to our full ten-year archive.

- Get the latest AI news from The Decoder.