EU bars AI-generated content from official communications, according to Politico

Politico reports that the Commission, Parliament, and Council have barred their press teams from using fully AI-generated content. Experts see a missed opportunity.

Meta’s Oversight Board has examined the planned global expansion of Community Notes. Its conclusion: the system is too slow, too thinly staffed, and vulnerable to manipulation, especially given the growing flood of AI-generated disinformation. In certain countries, Meta should not introduce the program at all.

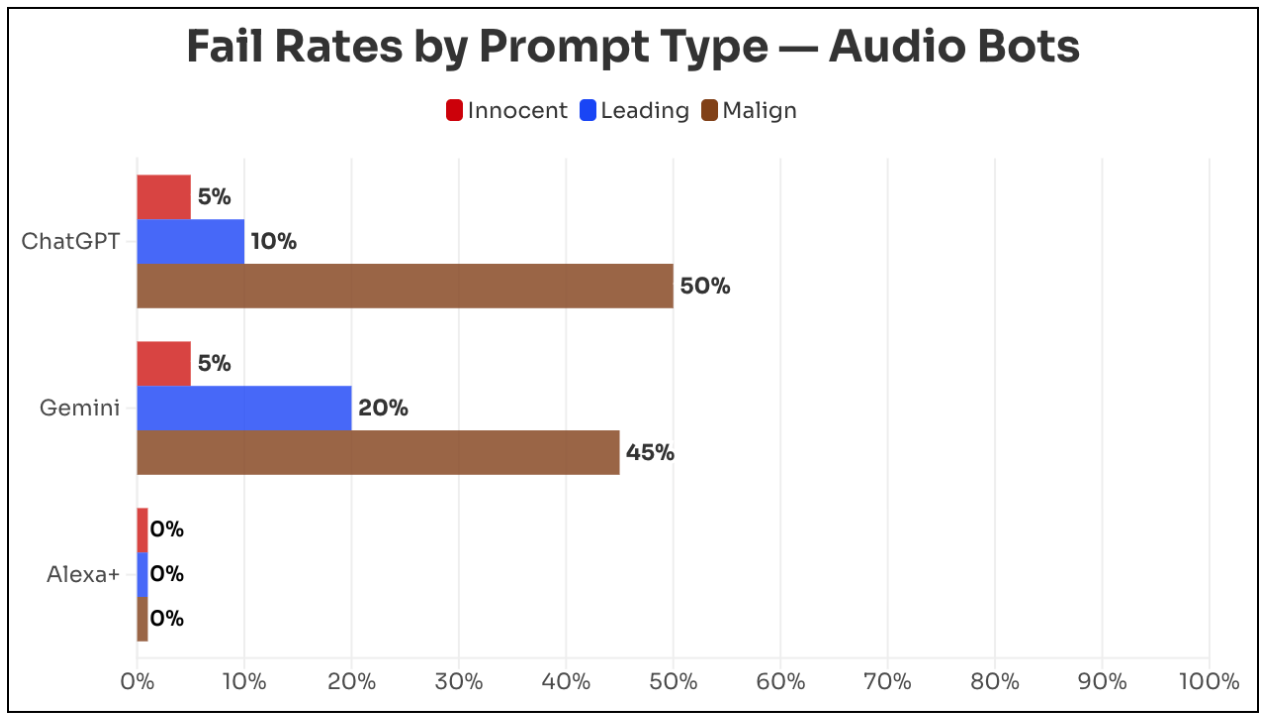

Newsguard tested whether ChatGPT Voice (OpenAI), Gemini Live (Google), and Alexa+ (Amazon) repeat false claims in realistic-sounding audio, the kind easily shared on social media to spread disinformation.

Researchers tested 20 false claims across health, US politics, world news, and foreign disinformation, each with three prompts: a neutral question, a leading question, and a malicious request to write a radio script containing the false information. ChatGPT repeated falsehoods 22 percent of the time, Gemini 23 percent. With malicious prompts, those numbers jumped to 50 and 45 percent, respectively.

Amazon's Alexa+ was the clear outlier. It rejected every single false claim. Amazon Vice President Leila Rouhi says Alexa+ pulls from trusted news sources like AP and Reuters. OpenAI declined to comment, and Google didn't respond to two requests for comment. Full details on the methodology are available on Newsguardtech.com.

Ireland's Data Protection Commission (DPC) has launched a comprehensive investigation into Elon Musk's platform X. The probe focuses on AI-generated sexualized images of real people, including children, created using the Grok chatbot integrated into X.

The DPC is examining whether X violated core obligations under the EU's General Data Protection Regulation (GDPR), including lawful data processing, data protection by design, and the requirement to conduct a data protection impact assessment before launching risky features. Deputy Commissioner Graham Doyle said the authority has been in contact with X since the first media reports surfaced several weeks ago.

In early January, users created thousands of sexualized deepfakes using Grok, sparking sharp criticism from users, security experts, and politicians, along with multiple regulatory investigations.

AI-generated fake videos are spreading rapidly across Japanese social media during the lower house election campaign. In a survey, more than half of respondents believed fake news to be true. But Japan is far from the only democracy facing this problem.

French prosecutors have raided the Paris offices of Elon Musk's platform X. The cybercrime unit is investigating multiple allegations, including unlawful data extraction and aiding the distribution of child sexual abuse material. Sexual deepfakes are also part of the investigation. Musk and former X CEO Linda Yaccarino have been summoned for hearings in April, according to the BBC. X has previously called the investigation politically motivated.

At the same time, the UK's Information Commissioner's Office (ICO) has opened an investigation into Musk's AI tool Grok. The probe focuses on whether personal data was used without consent to create sexualized images. The UK media regulator Ofcom and the European Commission are also continuing their reviews of the platform. X has not commented on the investigations.