Math needs thinking time, everyday knowledge needs memory, and a new Transformer architecture aims to deliver both

Key Points

- A new transformer architecture lets each layer decide on its own how many times to repeat its computation block, while additional memory banks supply factual knowledge, allowing the model to allocate compute dynamically where it's needed most.

- With only twelve layers, the looped model outperforms a conventional 36-layer model by 6.4 percent on math problems at the same computational cost. For everyday knowledge tasks, loops provide little benefit, but the memory banks help narrow the performance gap.

- During training, the system self-organizes: early layers rarely repeat, while late layers loop intensively and access memory more frequently. Notably, loops and memory function as complementary mechanisms rather than substitutes for each other.

A German research team lets Transformer models decide for themselves how many times they think about a problem. Combined with additional memory, the approach clearly outperforms larger models on math problems.

Language models can think step by step using chain-of-thought prompting, but each intermediate step requires additional tokens. Looped transformers offer an alternative: they run the same computation block multiple times on their internal representations without outputting intermediate steps as text. This saves parameters but costs storage capacity, because the model has fewer unique weights to store knowledge in.
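To make the parameter tradeoff concrete, here is a toy Python sketch, not the researchers' code. A "block" is just a small residual weight matrix standing in for one pass through a transformer layer, and the names `apply_block`, `stacked`, and `looped` are hypothetical. Three stacked layers and one layer looped three times perform the same number of block applications, but the looped version stores only a third of the weights.

```python
import random


def make_block(dim, seed):
    """Toy 'computation block': a dim x dim weight matrix (hypothetical stand-in for a layer)."""
    rng = random.Random(seed)
    return [[rng.uniform(-0.1, 0.1) for _ in range(dim)] for _ in range(dim)]


def apply_block(weights, h):
    # Residual update h + W h stands in for one pass through a transformer layer.
    return [h_i + sum(w * x for w, x in zip(row, h)) for h_i, row in zip(h, weights)]


def stacked(blocks, h):
    # Conventional depth: every layer has its own weights and runs once.
    for w in blocks:
        h = apply_block(w, h)
    return h


def looped(block, h, iterations):
    # Looped depth: one set of weights is reused for several passes,
    # without emitting intermediate steps as text tokens.
    for _ in range(iterations):
        h = apply_block(block, h)
    return h


def param_count(blocks):
    return sum(len(w) * len(w[0]) for w in blocks)


dim = 8
h0 = [1.0] * dim
three_layers = [make_block(dim, seed) for seed in range(3)]
one_layer = make_block(dim, seed=0)

out_stacked = stacked(three_layers, h0)          # 3 passes, 3 distinct weight sets
out_looped = looped(one_layer, h0, iterations=3)  # 3 passes, 1 shared weight set

print(param_count(three_layers), param_count([one_layer]))  # 192 64
```

Real transformer blocks contain attention and feed-forward sublayers; the single matrix here only represents "one pass through a block" so the weight count is easy to see.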

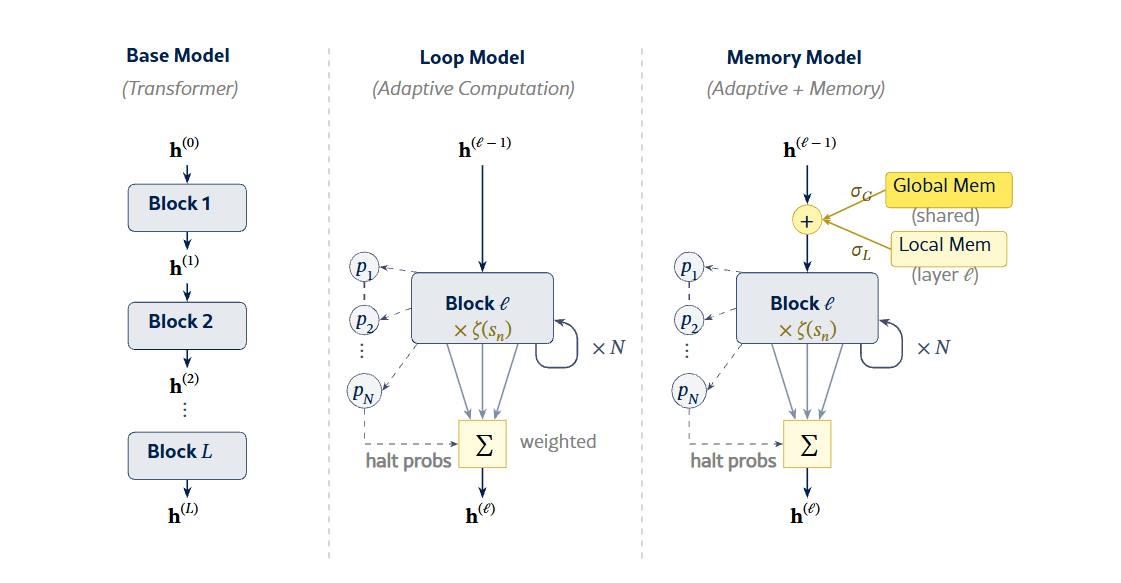

A research team from the Lamarr Institute, Fraunhofer IAIS, and the University of Bonn wanted to find out whether this tradeoff could be resolved. Their architecture combines two mechanisms: adaptive looping, where each transformer layer uses a learned halt mechanism to decide how many times it repeats its computation block, and learned memory banks that provide additional knowledge.
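The article does not spell out how the learned halt mechanism works. One well-known option is Adaptive Computation Time (ACT, Graves 2016), in which a small halting unit emits a probability after each pass and the layer stops once the accumulated probability gets close to 1. The sketch below assumes an ACT-style rule; `adaptive_loop`, `halt_w`, `halt_b`, and `toy_step` are hypothetical names, and the study's actual rule may differ.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def adaptive_loop(h, step, halt_w, halt_b, max_iters=3, eps=0.01):
    """ACT-style adaptive looping for one layer (an assumption, not the paper's exact rule).

    step: applies the layer's computation block once.
    halt_w, halt_b: hypothetical parameters of a learned halting unit.
    Returns the halting-weighted output state and the number of iterations used.
    """
    cumulative = 0.0
    output = [0.0] * len(h)
    for n in range(1, max_iters + 1):
        h = step(h)
        p = sigmoid(sum(w * x for w, x in zip(halt_w, h)) + halt_b)
        # Stop on the last allowed pass, or once the accumulated halting
        # probability would reach 1 - eps; the leftover mass goes to this state.
        if n == max_iters or cumulative + p >= 1.0 - eps:
            remainder = 1.0 - cumulative
            output = [o + remainder * x for o, x in zip(output, h)]
            return output, n
        cumulative += p
        output = [o + p * x for o, x in zip(output, h)]


def toy_step(h):
    # Hypothetical stand-in for one pass through the layer's block.
    return [0.5 * x + 1.0 for x in h]


# A halting unit that fires readily stops early; one biased against halting
# uses the full budget of three iterations.
_, used_easy = adaptive_loop([0.0, 0.0], toy_step, halt_w=[2.0, 2.0], halt_b=-1.0)
_, used_hard = adaptive_loop([0.0, 0.0], toy_step, halt_w=[2.0, 2.0], halt_b=-20.0)
print(used_easy, used_hard)  # 2 3
```

Graves' original ACT also adds a "ponder cost" to the training loss to discourage long loops. Since the researchers report that the model gets no explicit penalty for the number of loop passes, no such term appears here.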

The base architecture is a decoder-only transformer with 12 layers and about 200 million parameters, trained on 14 billion tokens from the deduplicated FineWeb-Edu dataset. The looped variants allow each layer up to 3, 5, or 7 iterations. The memory banks include 1,024 local slots per layer and 512 global, shared slots, adding roughly 10 million extra parameters, according to the study.
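The article gives the slot counts but not how a layer reads from its memory. A common design is key-value attention over learned slots, sketched below under that assumption: each layer queries its own 1,024 local slots plus the 512 shared global slots and adds the softmax-weighted values to its hidden state. `D_MODEL` and `memory_read` are hypothetical, and the tiny hidden size is chosen only to keep the example fast.

```python
import math
import random

D_MODEL = 16        # hypothetical; the article does not give the hidden size
N_LAYERS = 12
LOCAL_SLOTS = 1024  # per layer, from the article
GLOBAL_SLOTS = 512  # shared by all layers, from the article


def random_slots(n, dim, rng):
    return [[rng.gauss(0.0, 0.02) for _ in range(dim)] for _ in range(n)]


rng = random.Random(0)
# Learned key/value memories (random here; in training they would be learned).
local_keys = [random_slots(LOCAL_SLOTS, D_MODEL, rng) for _ in range(N_LAYERS)]
local_vals = [random_slots(LOCAL_SLOTS, D_MODEL, rng) for _ in range(N_LAYERS)]
global_keys = random_slots(GLOBAL_SLOTS, D_MODEL, rng)
global_vals = random_slots(GLOBAL_SLOTS, D_MODEL, rng)


def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def memory_read(h, layer):
    """Attend from hidden state h over this layer's local slots plus the global slots."""
    keys = local_keys[layer] + global_keys
    vals = local_vals[layer] + global_vals
    scale = 1.0 / math.sqrt(len(h))
    weights = softmax([scale * sum(q * k for q, k in zip(h, key)) for key in keys])
    read = [sum(w * v[i] for w, v in zip(weights, vals)) for i in range(len(h))]
    # Residual add: retrieved knowledge is mixed into the layer's representation.
    return [x + r for x, r in zip(h, read)]


slots_seen = LOCAL_SLOTS + GLOBAL_SLOTS              # 1,536 slots visible to each layer
total_slots = N_LAYERS * LOCAL_SLOTS + GLOBAL_SLOTS  # 12,800 distinct slots
h_out = memory_read([0.1] * D_MODEL, layer=11)
```

Counting from the article's figures gives 12 × 1,024 + 512 = 12,800 distinct slots; spread over roughly 10 million parameters, that is on the order of 780 parameters per slot, though the study's exact memory layout is not described here.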

Looping boosts math, memory fills knowledge gaps

The results show that letting a model repeat its calculations up to three times makes it significantly better at math: the looped model scores 22 percent higher than the base model without loops. Demanding subcategories like Precalculus (31 percent improvement) and Intermediate Algebra (26 percent) benefit the most. For tasks that require everyday knowledge, such as questions about social situations or physical intuition, the loops barely help. When more iterations are allowed, performance on these tasks even drops slightly.

To put this in perspective, the researchers compared their 12-layer model with triple loops against a conventional 36-layer model that uses the same computational effort but no loops. Despite having only a third of the layers, the looped model performs 6.4 percent better on math benchmarks. Loops are more efficient than additional layers when it comes to mathematical reasoning, the researchers write.
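The compute comparison comes down to counting block passes per token, assuming each pass costs about as much as one layer and ignoring the small overhead of the halting and memory components. Under that assumption, 12 layers with up to three iterations each match a 36-layer model with one pass per layer, while storing only a third as many distinct layer weights. `block_passes` is a hypothetical helper, and the per-layer usage list is an illustrative guess in the spirit of the early-versus-late pattern the researchers report, not data from the study.

```python
def block_passes(iterations_per_layer):
    """Block applications per token; each costs roughly one layer's worth of compute."""
    return sum(iterations_per_layer)


baseline_36 = [1] * 36  # conventional model: 36 distinct layers, one pass each
looped_max = [3] * 12   # looped model: 12 layers, each allowed up to 3 iterations
print(block_passes(baseline_36), block_passes(looped_max))  # 36 36

# Hypothetical per-layer usage after training (early layers halt early,
# late layers use the full budget); illustrative numbers, not from the study.
looped_used = [1, 1, 1, 1, 2, 2, 2, 3, 3, 3, 3, 3]
print(block_passes(looped_used))  # 25
```

Because layers can halt early, the three-iteration budget is an upper bound; actual compute per token depends on how often each layer loops.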

The memory banks solve a different problem. Everyday knowledge can't be generated through repeated thinking: it has to be stored. The memory banks provide exactly this extra capacity, closing part of the knowledge gap that loops alone can't bridge. With memory added to the loops, the model improves by another 4.2 percent on math and 2 percent on everyday knowledge tasks compared to the variant without memory, according to the study.

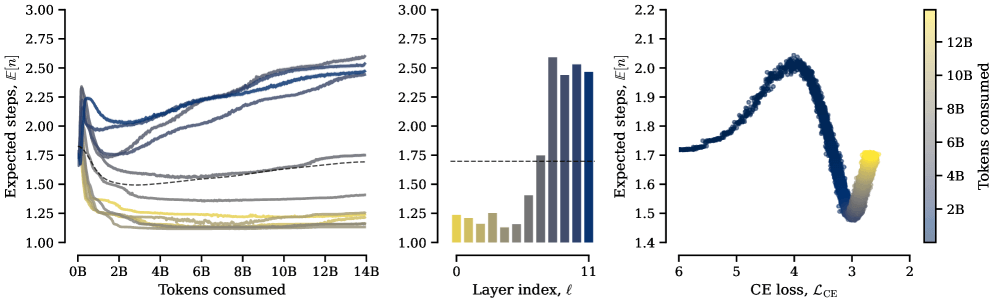

Early layers stay frugal, late layers work harder

Even though the model doesn't get an explicit penalty for the number of loop passes, a specialization emerges on its own: early layers learn to repeat their computation blocks only minimally and barely touch memory. Late layers, on the other hand, loop more intensively and tap into the memory banks more often.

This fits with earlier research showing that early transformer layers encode local syntactic patterns, while later layers handle more complex semantic and logical operations. Simple computations don't benefit from extra iterations, but the more sophisticated operations in deeper layers do.

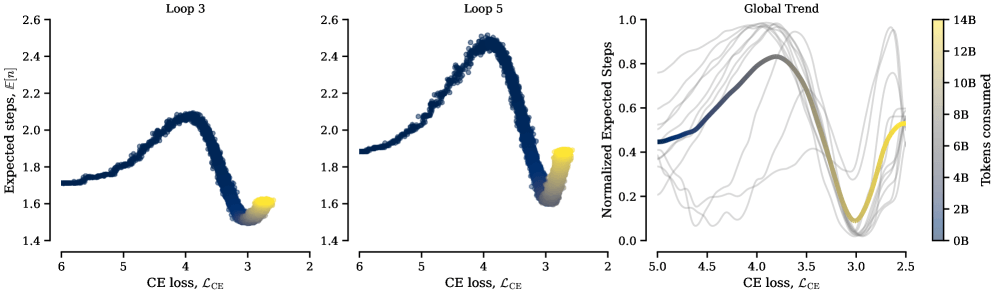

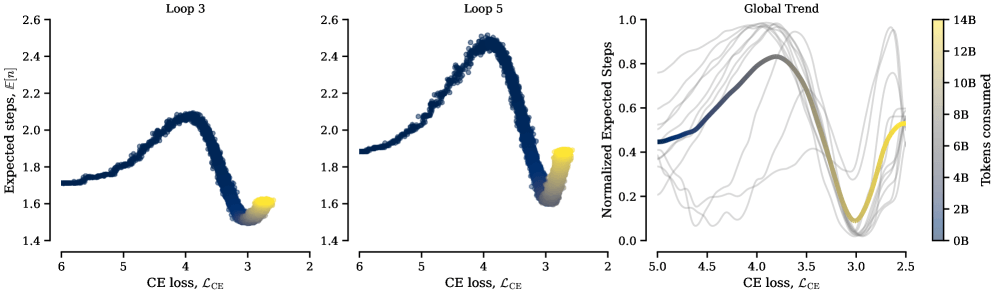

There's also a clear turning point during training: early on, the models barely use their loops even though they could. Only once the model gets good enough at understanding and predicting language does it actually start repeating its calculations. According to the researchers, this threshold kicks in at nearly the same point across all loop configurations. The model has to build up basic language skills first before it can benefit from repeated thinking.

More computation demands more knowledge

The researchers see their results as evidence of a fundamental division of labor within transformers. Feed-forward layers act as a kind of memory for factual associations, while attention layers route and manipulate information. Looping improves routing but can't make up for insufficient storage capacity.

The fact that layers looping more often also pull more from memory supports this reading: loops and memory complement each other. More computation requires more facts.

The authors point out some limitations: the experiments ran at a relatively small scale, with about 200 million parameters and 14 billion training tokens. Whether the results hold for models with several billion parameters, which already have considerable built-in capacity, remains to be seen.
