AI2's fully open web agent MolmoWeb navigates the web using only screenshots

Key Points

- The Allen Institute for AI has released MolmoWeb, a fully open web agent that can operate websites using only screenshots, without needing access to source code or underlying page structure.

- The model was trained on one of the largest public datasets of its kind, combining human browsing records, automatically generated runs, and millions of screenshot-question-answer pairs.

- Despite its compact size, MolmoWeb outperforms the best open model available on all tested benchmarks and approaches the performance of proprietary systems from OpenAI, with all training data, model weights, and tools made freely available.

AI2 has released MolmoWeb, a fully open web agent that navigates websites using only screenshots. It comes in two sizes, with 4 and 8 billion parameters, and beats several larger proprietary systems on standard benchmarks.

Today's best AI agents for searching flights, filling out forms, or browsing product listings all come from companies that won't share their training data or methods. The Allen Institute for AI (AI2) is trying to change that with MolmoWeb, a fully open web agent shipped with all training data, model weights, and evaluation tools. "Web agents today are where LLMs were before OLMo," the team writes, arguing the open-source community needs an open foundation to build on.

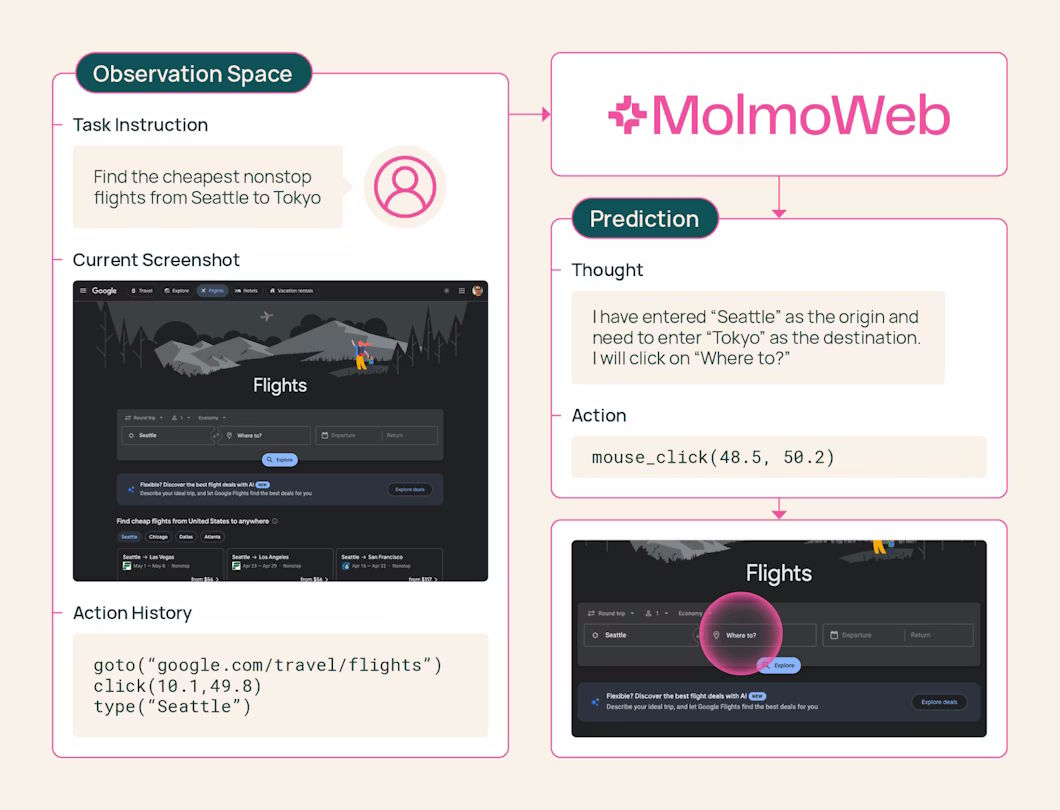

Training relies on a mix of human demonstrations and auto-generated browsing runs, using supervised fine-tuning on 64 H100 GPUs with no reinforcement learning and no distillation from proprietary systems. MolmoWeb runs on the Molmo2 architecture with Qwen3 as the language model and SigLIP2 as the vision encoder.

It only sees what you see

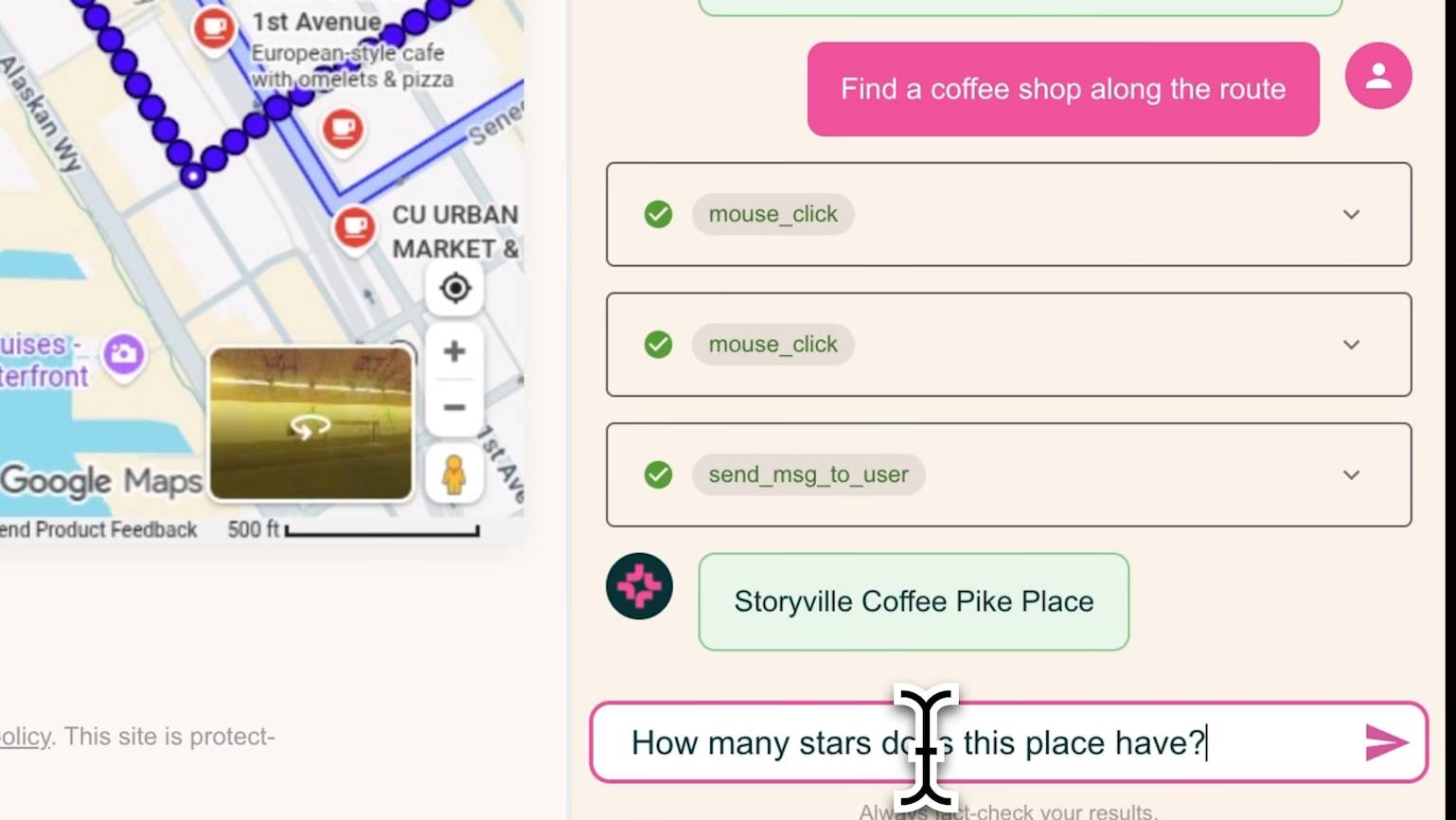

The agent takes a screenshot of the current browser view, decides what to do next, and performs an action: click, tap, scroll, switch tabs, or go to a URL. Then it grabs a new screenshot and repeats.

MolmoWeb doesn't read source code or access the page's DOM. It only works with what a human would see on screen. The developers say this makes the agent more robust since a website's appearance changes less often than its underlying code. It also makes the agent's decisions easier to follow.
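The screenshot-decide-act loop described above can be sketched in a few lines of Python. This is only an illustration, not AI2's implementation: the `Action` type, the `Browser` interface, the `policy` callable, and the `"done"` action used to stop the loop are all hypothetical names introduced here.

```python
from dataclasses import dataclass
from typing import Callable, List, Protocol


@dataclass
class Action:
    # Hypothetical action record: kind is e.g. "click", "scroll", "goto", "done".
    kind: str
    argument: str = ""


class Browser(Protocol):
    # Hypothetical browser interface: the agent only sees pixels, never the DOM.
    def screenshot(self) -> bytes: ...
    def perform(self, action: Action) -> None: ...


# A policy maps (task, current screenshot, past actions) to the next action.
Policy = Callable[[str, bytes, List[Action]], Action]


def run_agent(task: str, browser: Browser, policy: Policy,
              max_steps: int = 20) -> List[Action]:
    """Repeat: take a screenshot, pick an action, execute it."""
    history: List[Action] = []
    for _ in range(max_steps):
        image = browser.screenshot()            # what a human would see
        action = policy(task, image, history)   # the model decides the next step
        history.append(action)
        if action.kind == "done":               # assumed stop signal
            break
        browser.perform(action)
    return history
```

Because the policy receives nothing but the task, the screenshot, and its own past actions, every decision can be replayed and inspected from the recorded screenshots alone.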

The bigger contribution here might actually be the training dataset, MolmoWebMix. The main blocker for open web agents has been a lack of good data, and MolmoWebMix is an attempt to fix that.

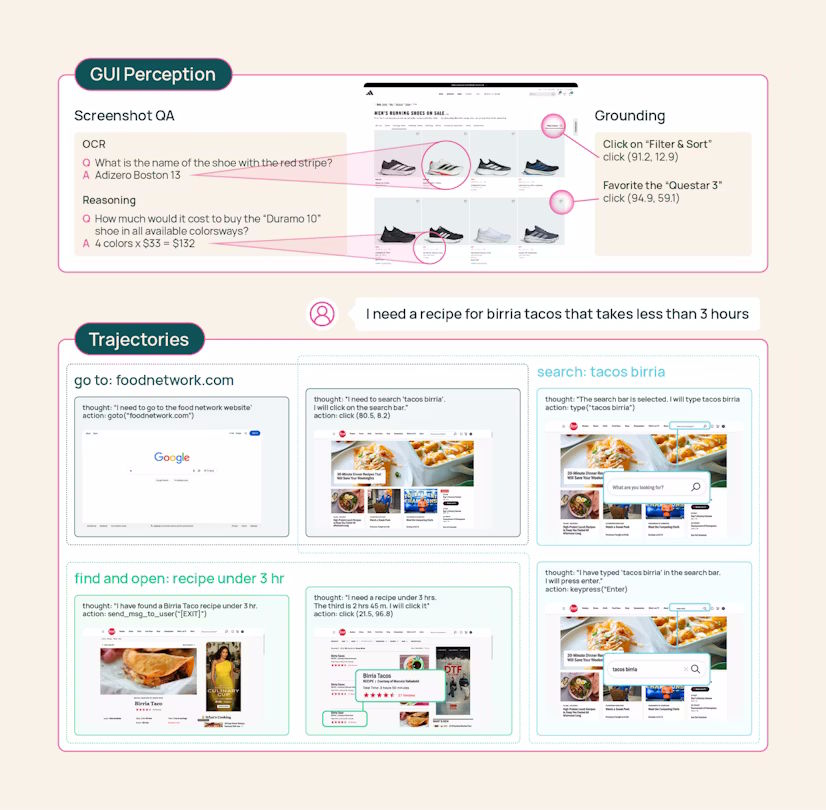

Crowdworkers completed real browsing tasks while the team recorded every click and page change, yielding 36,000 complete task runs across more than 1,100 websites. The team says it's the largest public dataset of human web task execution available.

Moreover, automated agents generated additional runs to scale beyond what human annotation could produce. A three-role system handles this: a planner built on Gemini 2.5 Flash breaks tasks into sub-goals, an operator executes browser actions, and a verifier powered by GPT-4o checks screenshots to confirm each sub-goal was completed.
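A rough sketch of how such a planner, operator, and verifier could fit together is shown below. The three roles are passed in as plain callables, so no real model API is assumed. The retry limit and the choice to discard a run when a sub-goal cannot be verified are assumptions made for illustration, not details from the article.

```python
from typing import Callable, List, Optional

Planner = Callable[[str], List[str]]      # task -> ordered sub-goals
Operator = Callable[[str], bytes]         # sub-goal -> screenshot after acting
Verifier = Callable[[str, bytes], bool]   # (sub-goal, screenshot) -> completed?


def generate_run(task: str, plan: Planner, operate: Operator,
                 verify: Verifier, max_retries: int = 2) -> Optional[List[str]]:
    """Return the verified sub-goals, or None if any sub-goal fails."""
    completed: List[str] = []
    for goal in plan(task):
        for _ in range(max_retries + 1):
            screenshot = operate(goal)       # operator acts in the browser
            if verify(goal, screenshot):     # verifier inspects the screenshot
                completed.append(goal)
                break
        else:
            # Assumed policy: drop the whole run if a sub-goal never verifies.
            return None
    return completed
```

Keeping verification separate from execution means a failed sub-goal is caught right away rather than ending up silently in the training data.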

The dataset also includes more than 2.2 million screenshot-question-answer pairs for reading and understanding web content. UI element localization is trained separately with a grounding dataset of over seven million examples.

One counterintuitive finding from the associated paper is that MolmoWeb learns better from synthetic browsing runs than from human demonstrations on identical tasks. The researchers say humans tend to poke around on unfamiliar sites and take detours, while automated agents find more direct paths. Data ablations also show that just ten percent of the dataset delivers 85 to 90 percent of final performance.

Small models, big numbers

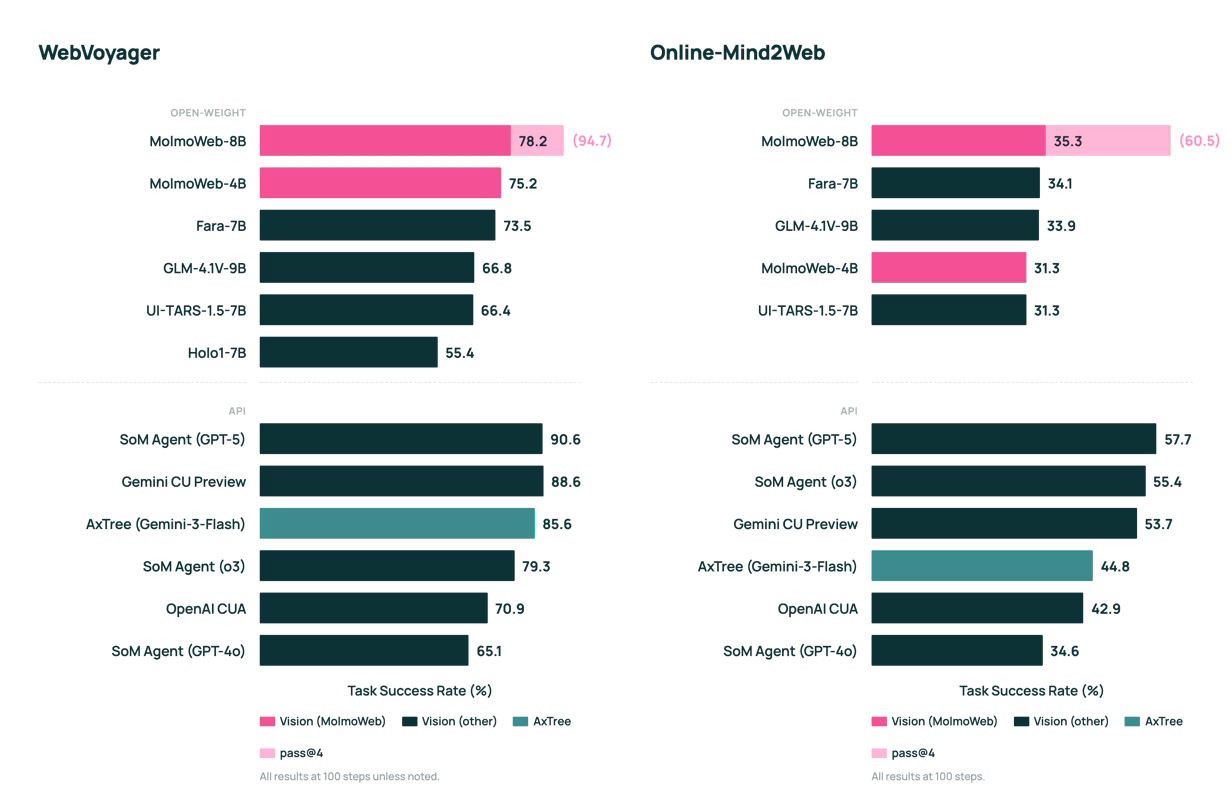

Despite their size, both MolmoWeb models post top scores among open web agents. On WebVoyager, which tests navigation across 15 popular sites like GitHub and Google Flights, the 8B model hits 78.2 percent. MolmoWeb-8B beats the previous open-model leader, Fara-7B, on all four benchmarks tested, and its WebVoyager score comes close to OpenAI's o3 at 79.3 percent. On DeepShop, MolmoWeb-8B trails GPT-5 by just six points.

MolmoWeb also outperforms agents built on the much larger GPT-4o that have access to annotated screenshots and structured page data, the developers say. A specialized 8B model beats Anthropic's Claude 3.7 and OpenAI's CUA on the ScreenSpot benchmarks for UI element localization, though AI2 picked older proprietary models for comparison.

Compared to its own "teacher," a Gemini-based agent with page structure access, MolmoWeb trails by five points. That's the trade-off of the screenshot-only approach: the agent has to figure out text recognition on its own instead of getting it handed to it. But there's a simple way to close the gap. When the agent runs a task multiple times and picks the best result, the WebVoyager success rate jumps from 78.2 to 94.7 percent. Throwing more compute at inference time clearly pays off, the study shows.
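The idea of running a task several times and keeping the best attempt can be illustrated with a generic best-of-N helper. The article does not say how the best result is chosen, so the `score` function here is a hypothetical stand-in (for example, a verifier's judgment), and `run_once` stands for a single agent rollout.

```python
from typing import Callable, Optional, Tuple, TypeVar

T = TypeVar("T")


def best_of_n(run_once: Callable[[int], T],
              score: Callable[[T], float],
              n: int) -> Tuple[Optional[T], float]:
    """Run a task n times and keep the highest-scoring attempt."""
    best: Optional[T] = None
    best_score = float("-inf")
    for attempt in range(n):
        result = run_once(attempt)   # one independent agent rollout
        s = score(result)            # hypothetical quality estimate
        if s > best_score:
            best, best_score = result, s
    return best, best_score
```

The cost grows linearly with `n`, since every extra attempt is a full browsing run.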

No logins, no payments, and lots of unanswered questions

MolmoWeb can misread text in screenshots, and performance drops with vague instructions or lots of constraints. The team deliberately excluded tasks requiring logins or financial transactions during training. The hosted demo only allows certain websites, blocks password and credit card fields, and uses a Google interface to screen for problematic content. These guardrails are part of the demo, not the model itself.

Harder questions remain open: How should web agents handle terms of service? How do you prevent them from accessing illegal content or taking irreversible actions? The team argues that full openness is what lets more people work on these problems. MolmoWeb is available on Hugging Face and GitHub under an Apache 2.0 license.

OpenSeeker recently took a similar approach for AI search agents: fully open data, code, and model weights to counter big tech's data monopoly. MolmoWeb extends that trend to browser automation.

AI2, known for pushing transparent AI, recently took a hit when Microsoft hired several of its top researchers. They're joining Microsoft's new superintelligence team led by DeepMind co-founder Mustafa Suleyman.
