Interview with Prof. Dr. Ahmad-Reza Sadeghi, Head of the System Security Lab at TU Darmstadt and member of the German platform "Lernende Systeme" (learning systems).

THE DECODER: Mr. Sadeghi, AI systems and Deep Learning in particular have made enormous progress in recent years. Does this pose new challenges for IT security?

Prof. Dr. Ahmad-Reza Sadeghi: Yes. Algorithms or AI-based systems are fragile from a security perspective because they are very data-dependent. You can easily manipulate them, especially covertly. The more advanced the systems become, the more advanced the attacks become.

Recently, you presented a paper from the German platform "Lernende Systeme" (learning systems) on distributed machine learning. Here, primarily the training data and its processing are decentralized. Does that improve the security of AI systems?

Among other things, distributed machine learning improves privacy, for example in the medical context. Hospitals do not share medical data with each other, but can still use distributed machine learning to train the same systems with their data and thus collaborate.
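As an illustration of the idea described above, here is a minimal sketch of federated averaging, one common form of distributed machine learning. It is not taken from the paper mentioned in the interview. The one-parameter model, the hospital datasets, and all function names are hypothetical and chosen only to keep the example small. Each participant trains on its own data and shares only a model weight, never the records themselves.

```python
from typing import List, Tuple

# Hypothetical local dataset: (feature, label) pairs that never leave the hospital.
Dataset = List[Tuple[float, float]]


def local_update(weight: float, data: Dataset, lr: float = 0.01, steps: int = 20) -> float:
    """Run a few gradient-descent steps for y ~ weight * x on local data only."""
    for _ in range(steps):
        grad = sum(2 * (weight * x - y) * x for x, y in data) / len(data)
        weight -= lr * grad
    return weight


def federated_average(updates: List[Tuple[float, int]]) -> float:
    """Combine client weights, weighting each one by its number of samples."""
    total = sum(n for _, n in updates)
    return sum(w * n for w, n in updates) / total


def train(clients: List[Dataset], rounds: int = 30) -> float:
    """Alternate local training and server-side averaging for several rounds."""
    global_weight = 0.0
    for _ in range(rounds):
        # Only (weight, sample count) pairs are sent to the coordinating server.
        updates = [(local_update(global_weight, data), len(data)) for data in clients]
        global_weight = federated_average(updates)
    return global_weight


# Two made-up hospitals whose data both follow y = 2 * x.
hospital_a = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
hospital_b = [(4.0, 8.0), (5.0, 10.0)]
model = train([hospital_a, hospital_b])
```

In this toy setup the shared weight converges toward 2, the slope underlying both datasets, although neither hospital ever sees the other's records.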

But when data and AI models are spread across more systems, there are more points of attack.

Absolutely. Individual computers could be brought under control, for example, via software or because people in an institution are working with the attackers. When that happens, the entire model can be manipulated. This is called data and/or model poisoning.
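The effect of model poisoning can be sketched with a toy example. This is an illustration under simplifying assumptions, not a description of a real attack: each participant's update is reduced to a single number, the server uses plain averaging, and all values are made up.

```python
from typing import List


def average(updates: List[float]) -> float:
    """Plain unweighted averaging of the updates sent by all participants."""
    return sum(updates) / len(updates)


# Hypothetical model weights reported by four honest participants.
honest_updates = [2.0, 2.1, 1.9, 2.0]

# Hypothetical manipulated value sent by one compromised machine.
poisoned_update = -50.0

clean_model = average(honest_updates)
poisoned_model = average(honest_updates + [poisoned_update])

print(f"without attacker: {clean_model:.2f}")
print(f"with attacker:    {poisoned_model:.2f}")
```

A single manipulated contribution is enough to drag the shared model far away from what the honest participants agreed on, because naive averaging trusts every update equally.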

New paths for AI security

Do you get the impression that AI developers are already considering security and privacy in their design?

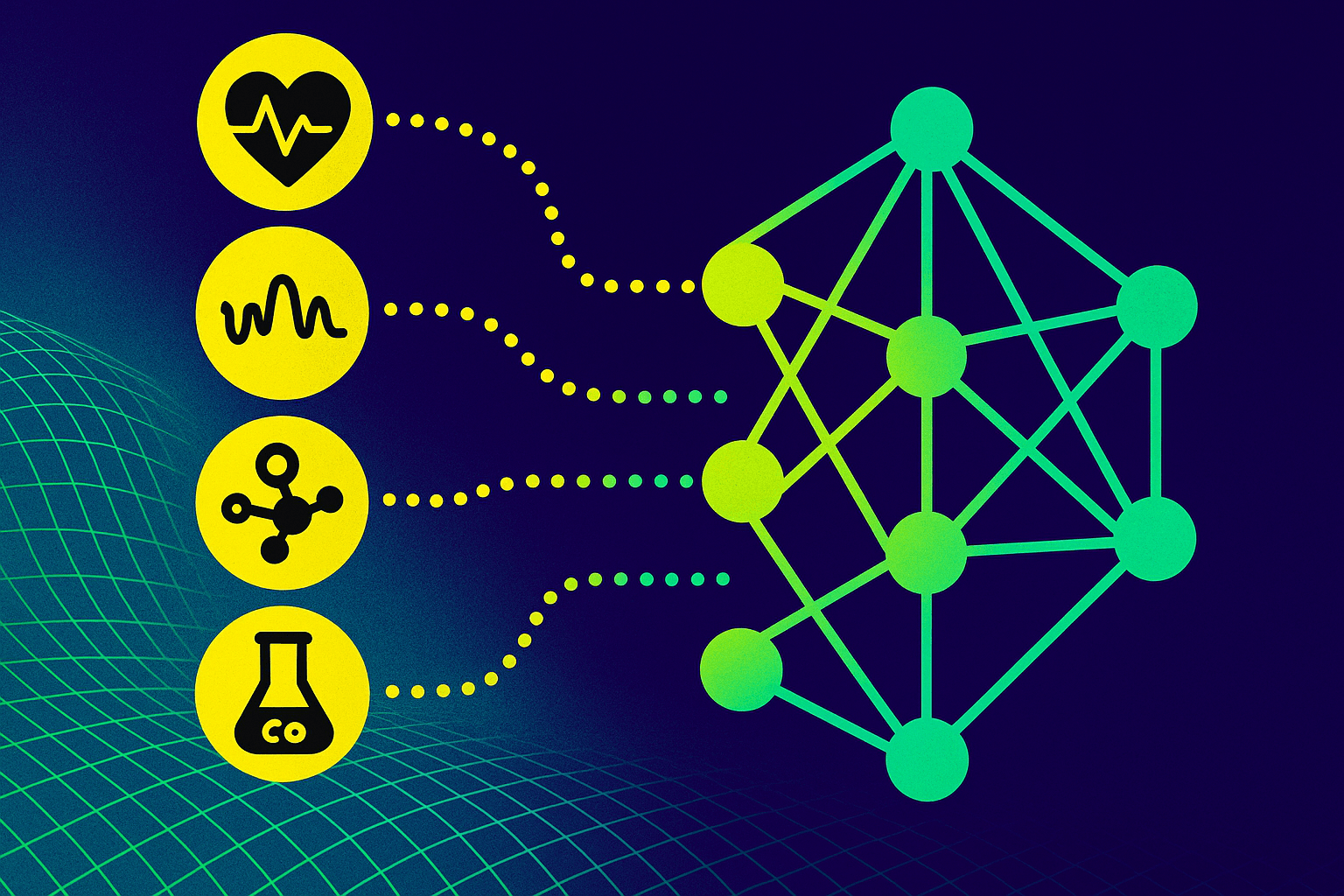

Yes, but the challenge is that you don't want to limit the performance of the models with security measures. Moreover, common IT security systems cannot be easily applied to AI systems.

You have to find new ways to secure AI algorithms. In my research, I have also worked extensively on applied cryptography, i.e., computing with encrypted data. Pure cryptographic solutions are not yet scalable, especially for huge AI models with sometimes billions of parameters. Therefore, research is also being done on algorithmic improvements as well as hardware-based solutions for AI security. Another interesting field is the use of AI for safety-critical systems, i.e. algorithms that protect against algorithmic attacks.

Does insecure AI pose a greater security risk than traditional insecure software?

I think so because the manipulation can be even more covert. I'm thinking of the financial markets or the medical field, for example. The biggest risk is our usage behavior: If AI systems one day really automate large parts of our everyday life and make decisions for us, our dependence on these systems will also be much greater. Potential attackers can therefore also cause much greater damage.

Cybersecurity in the AI age is more than insecure computers

Do we need to think bigger about cybersecurity in the AI age?

Much bigger, yes. We need to incorporate societal aspects more and take an ideological and philosophical view. What is the most effective AI at the moment? It's the recommendation algorithms of Facebook, Twitter, and Google. These are the echo chambers that are changing our society.

I'm not worried about terminators, I'm worried about the creeping influence of social media on democratic countries. There are estimates that half of US Americans no longer believe in their voting system. The attitude is: voting is fraud anyway. Social media plays a role in this. Who is better at conducting experiments than Facebook, Twitter, and Google? They use the best algorithms available for classification. And they have access to billions of data sets.

That sounds like an appeal, especially to young people, to consciously decide what impact their work has on society.

Yes, I think that is important. Large tech companies make very attractive offers. But there are other factors besides money and status that you have to consider for your personal and professional future. We need good people everywhere, and there are many positive application scenarios for artificial intelligence, especially in cybersecurity.

There is another important aspect here: Germany and Europe must position themselves better in the race for AI. We need to find niches where we are strong compared to the big U.S. tech companies, and these niches need to be promoted by economic policy.