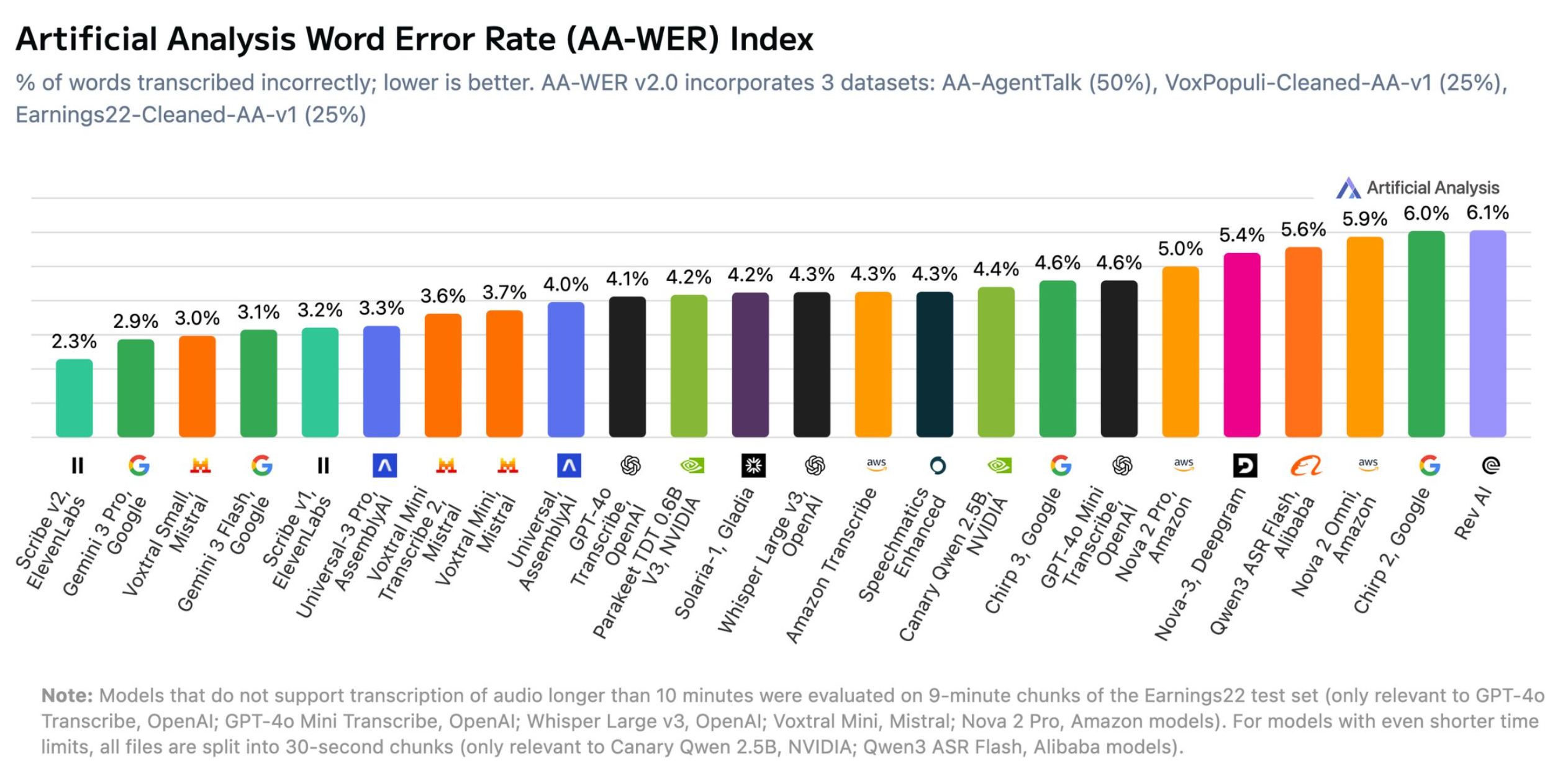

ElevenLabs and Google dominate Artificial Analysis' updated speech-to-text benchmark

Artificial Analysis has released version 2.0 of its AA-WER speech-to-text benchmark. ElevenLabs' Scribe v2 leads with a word error rate of just 2.3 percent, followed by Google's Gemini 3 Pro (2.9%) and Mistral's Voxtral Small (3.0%). Google's Gemini 3 Flash (3.1%) and ElevenLabs' older Scribe v1 (3.2%) are close behind. Notably, Google didn't train Gemini specifically for transcription; the strong results come from the models' general multimodal capabilities. OpenAI's popular open-source Whisper Large v3 (4.2%) lands mid-pack, while Alibaba's Qwen3 ASR Flash (5.9%), Amazon's Nova 2 Omni (6.0%), and Rev AI (6.1%) bring up the rear.

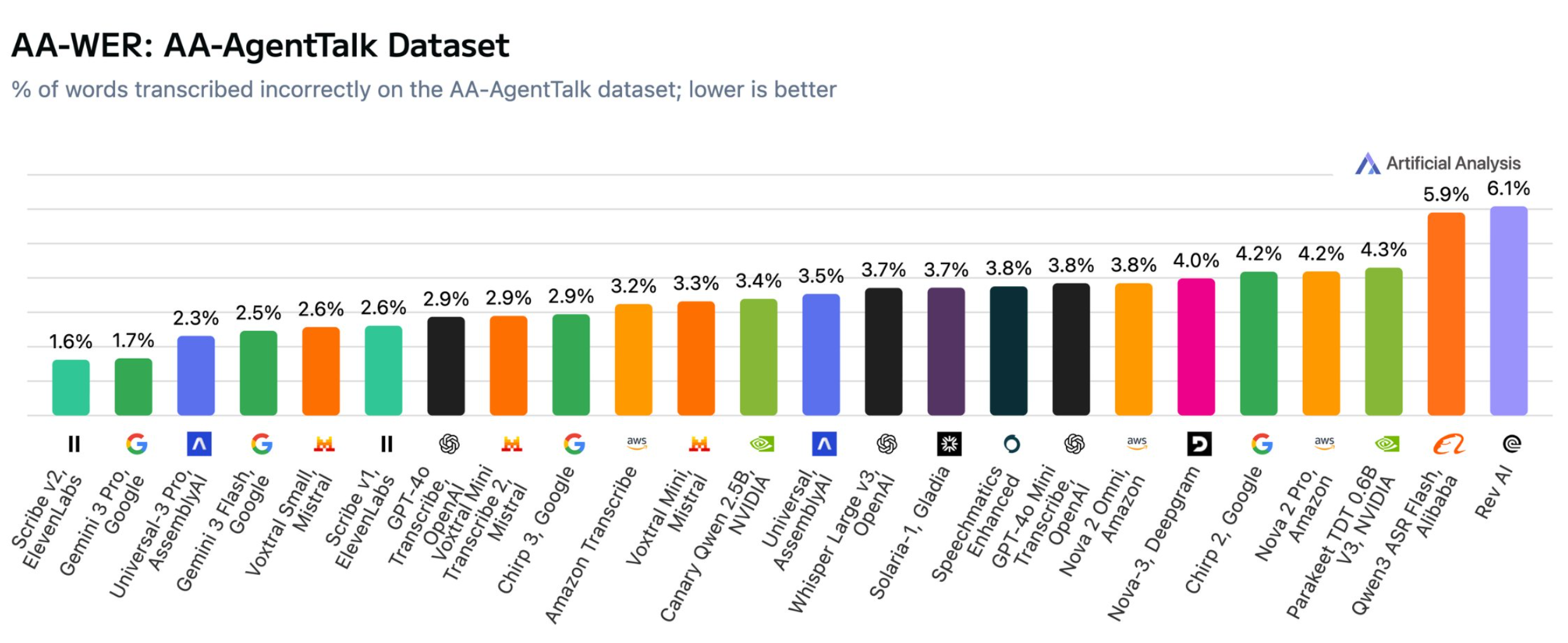
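For readers unfamiliar with the metric: word error rate is conventionally the number of word-level substitutions, deletions, and insertions needed to turn a transcript into the reference text, divided by the number of words in the reference. The sketch below illustrates that textbook definition in Python. The function name `word_error_rate` and the simple lowercase-and-split normalization are assumptions made for illustration; this is not Artificial Analysis' actual scoring pipeline, whose normalization rules aren't described here.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the reference length.

    Illustrative only: normalization here is just lowercasing and
    whitespace splitting, an assumption rather than AA-WER's rules.
    """
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words
    # processed so far and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # i deletions turn i reference words into nothing
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr

    return prev[-1] / len(ref)


if __name__ == "__main__":
    reference = "please set a timer for ten minutes and play some jazz"
    hypothesis = "please set a timer for two minutes and play some jazz"
    print(f"WER: {word_error_rate(reference, hypothesis):.1%}")
```

Under this definition, a score of 2.3 percent corresponds to roughly 23 word errors per 1,000 reference words. Because insertions count as errors too, the rate can exceed 100 percent for very poor transcripts.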

The results hold up in the separate AA-AgentTalk test for speech directed at voice assistants: Scribe v2 (1.6%) and Gemini 3 Pro (1.7%) pull well ahead, with AssemblyAI's Universal-3 Pro taking third at 2.3%.
