Google's Gemma 4 puts free agentic AI on your phone and no data ever leaves the device

Key Points

- Google's open-source model Gemma 4 can process text, images, and audio entirely on-device and autonomously use tools like Wikipedia, interactive maps, or QR code generators through built-in agent skills.

- The smaller smartphone variants E2B and E4B run on devices with just 6 and 8 GB of RAM respectively, deliver up to four times the speed of the previous generation according to Google, and serve as the foundation for the upcoming Gemini Nano 4 on Android.

- All models are released under the commercially friendly Apache 2.0 license, developers can create and share custom skills via GitHub, and the free "Google AI Edge Gallery" app is available for both Android and iOS.

Google's new open-source model, Gemma 4, processes text, images, and audio completely on-device. Using agent skills, the AI can independently tap into tools like Wikipedia or interactive maps, no cloud required.

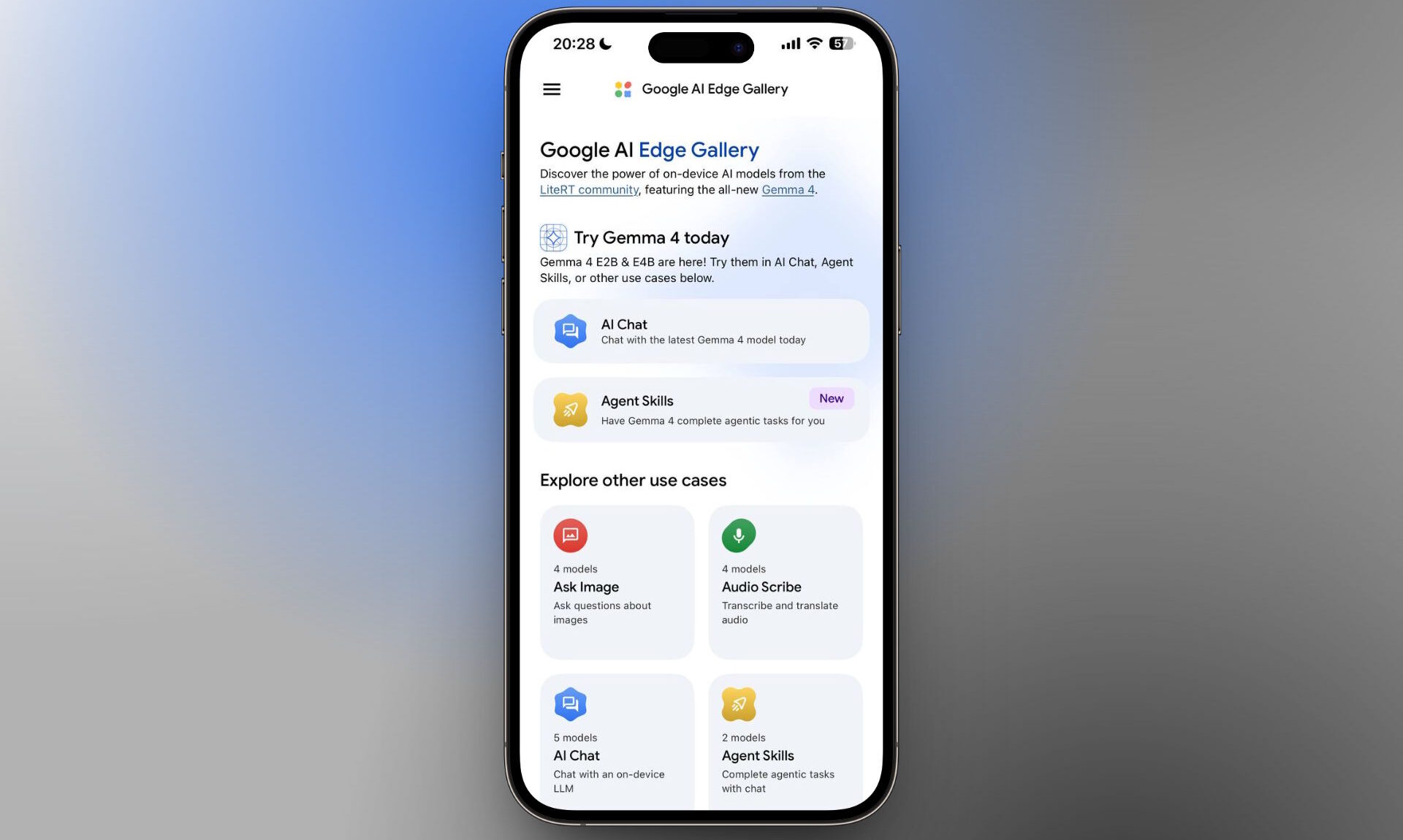

The Google AI Edge Gallery app needed to run the model is free on Android and iOS. Since Gemma 4 dropped, the app has shot up to fourth place among the most-downloaded free productivity apps in the iOS App Store, sitting right behind Claude, Gemini, and ChatGPT.

Gemma 4 is built on the same research as Google's proprietary Gemini 3 model but ships under the commercially friendly Apache 2.0 license. Google says the Gemma family has racked up over 400 million downloads since the first generation launched. All models handle text, images, and audio across more than 140 languages.

Four model sizes cover everything from phones to servers

The latest release comes in four variants. E2B and E4B are built specifically for smartphones. The "E" stands for "effective parameters," meaning the number of parameters actually active during inference. Quantized, E2B takes up about 1.3 GB on-device, while E4B needs roughly 2.5 GB.
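Quantization matters for fitting a model on a phone because of simple arithmetic: weight storage is roughly the parameter count times the bits stored per parameter. The sketch below is only a back-of-envelope estimate. The 2-billion-parameter figure and the uniform bit widths are illustrative assumptions. It does not try to reproduce Google's 1.3 GB and 2.5 GB figures, which depend on how Gemma 4 counts and quantizes its parameters.

```python
def weight_memory_gb(num_params: float, bits_per_param: float) -> float:
    """Rough storage needed for model weights, in gigabytes (1 GB = 10**9 bytes).

    Assumption: every weight uses the same bit width. Ignores activations,
    the KV cache, runtime overhead, and mixed-precision layers.
    """
    bytes_total = num_params * bits_per_param / 8  # 8 bits per byte
    return bytes_total / 1e9


if __name__ == "__main__":
    # Hypothetical 2-billion-parameter model at common precisions.
    for bits in (16, 8, 4):
        print(f"{bits:>2}-bit: {weight_memory_gb(2e9, bits):.1f} GB")
```

Run as-is, this prints 4.0 GB at 16-bit, 2.0 GB at 8-bit and 1.0 GB at 4-bit. Each halving of precision halves the footprint, which is why quantized models fit into the few gigabytes a phone can spare.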

The bigger 26B and 31B variants target servers and high-performance hardware. The 26B version uses a mixture-of-experts architecture with 128 experts, so only 3.8 billion parameters are active at any given time. The dense 31B model offers a context window of up to 256,000 tokens.
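The "only 3.8 billion active" figure comes from how mixture-of-experts layers work. A small router scores every expert, and only the top-scoring few actually run. The toy sketch below shows generic top-k routing in plain Python. The expert count, the top-k value and the scaling "experts" are made-up stand-ins. Nothing here reflects Gemma 4's actual router or how many of its 128 experts it activates at once.

```python
import math
from typing import Callable, List, Sequence, Tuple

Vector = List[float]
Expert = Callable[[Sequence[float]], Vector]


def top_k_route(logits: Sequence[float], k: int) -> Tuple[List[int], List[float]]:
    """Pick the k highest-scoring experts and softmax-normalize their scores."""
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    peak = max(logits[i] for i in top)  # subtract the max for numerical stability
    exps = [math.exp(logits[i] - peak) for i in top]
    total = sum(exps)
    return top, [e / total for e in exps]


def moe_forward(
    x: Sequence[float],
    experts: Sequence[Expert],
    router: Sequence[Sequence[float]],
    k: int,
) -> Tuple[Vector, List[int]]:
    """Toy MoE layer: score all experts, but run only the top k on x."""
    # Router logit for expert e is the dot product of x with router row e.
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in router]
    chosen, gates = top_k_route(logits, k)
    out = [0.0] * len(x)
    for e, g in zip(chosen, gates):
        y = experts[e](x)  # only the chosen experts do any work
        out = [o + g * yi for o, yi in zip(out, y)]
    return out, chosen


def make_scaling_expert(scale: float) -> Expert:
    """Hypothetical stand-in for an expert network: it just scales its input."""
    return lambda x: [scale * xi for xi in x]


if __name__ == "__main__":
    experts = [make_scaling_expert(float(s)) for s in range(4)]
    router = [[0.1, 0.0], [2.0, 0.0], [-1.0, 0.0], [1.5, 0.0]]
    out, chosen = moe_forward([1.0, 0.0], experts, router, k=2)
    print("experts used:", chosen, "of", len(experts))
    print("output:", out)
```

In a real MoE transformer, each expert is a full feed-forward block and routing happens per token. Because only a few of these large blocks run at a time, total and active parameter counts diverge so sharply.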

Google also teamed up with Arm and Qualcomm to optimize the phone variants for current mobile chips. According to Google, Gemma 4 on Android runs up to four times faster than the previous generation while cutting battery drain by up to 60 percent. Arm's own benchmarks show even bigger gains: an average 5.5x speedup in processing, provided the device packs a newer Arm chip with the SME2 instruction set, an extension that accelerates matrix math for AI models directly in silicon.

Agent skills bring tool use to on-device AI

The app requires Android 12 or iOS 17. The two phone-sized variants differ in RAM requirements: E2B runs on devices with 6 GB of RAM, while E4B needs at least 8 GB.

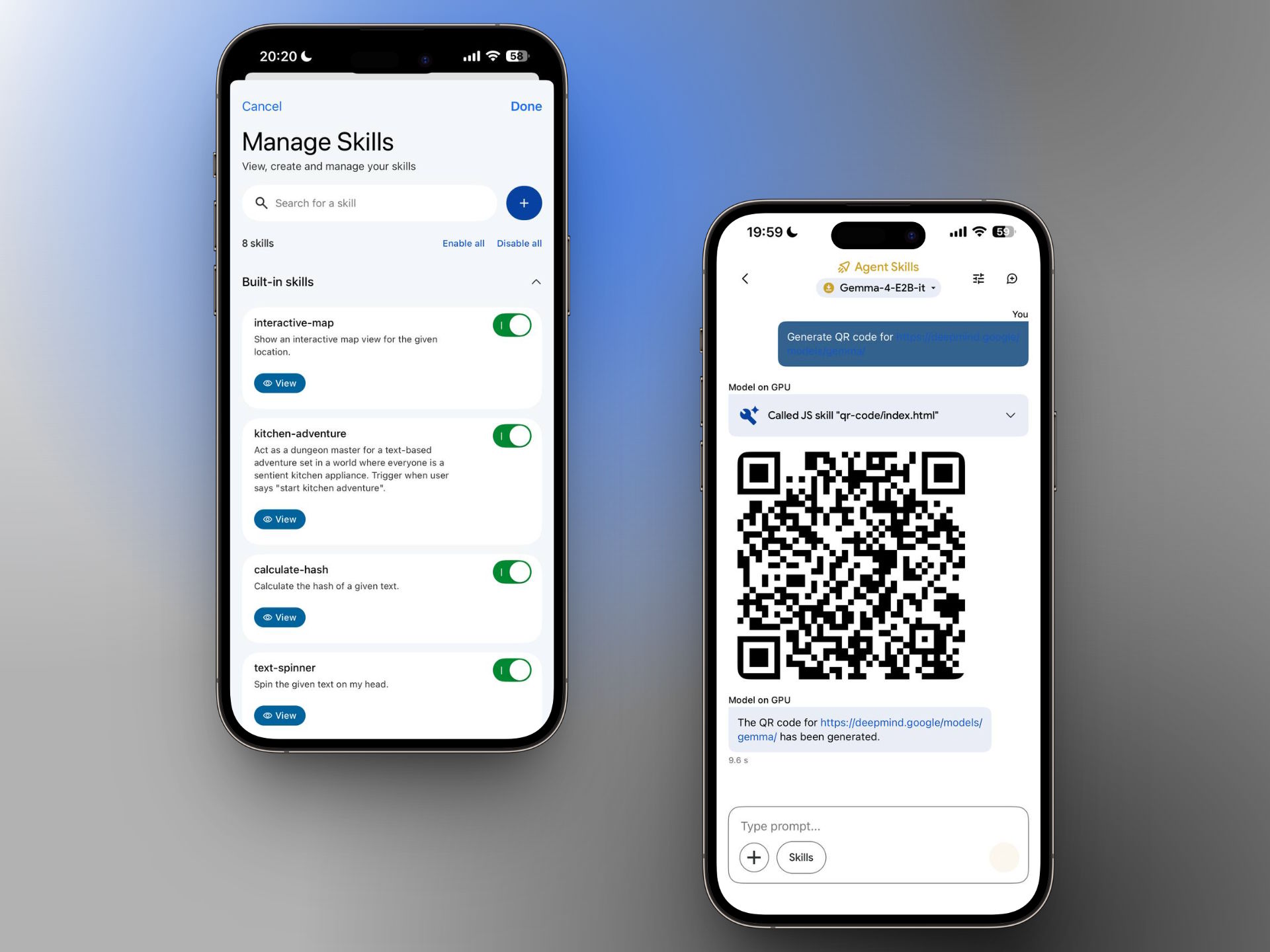

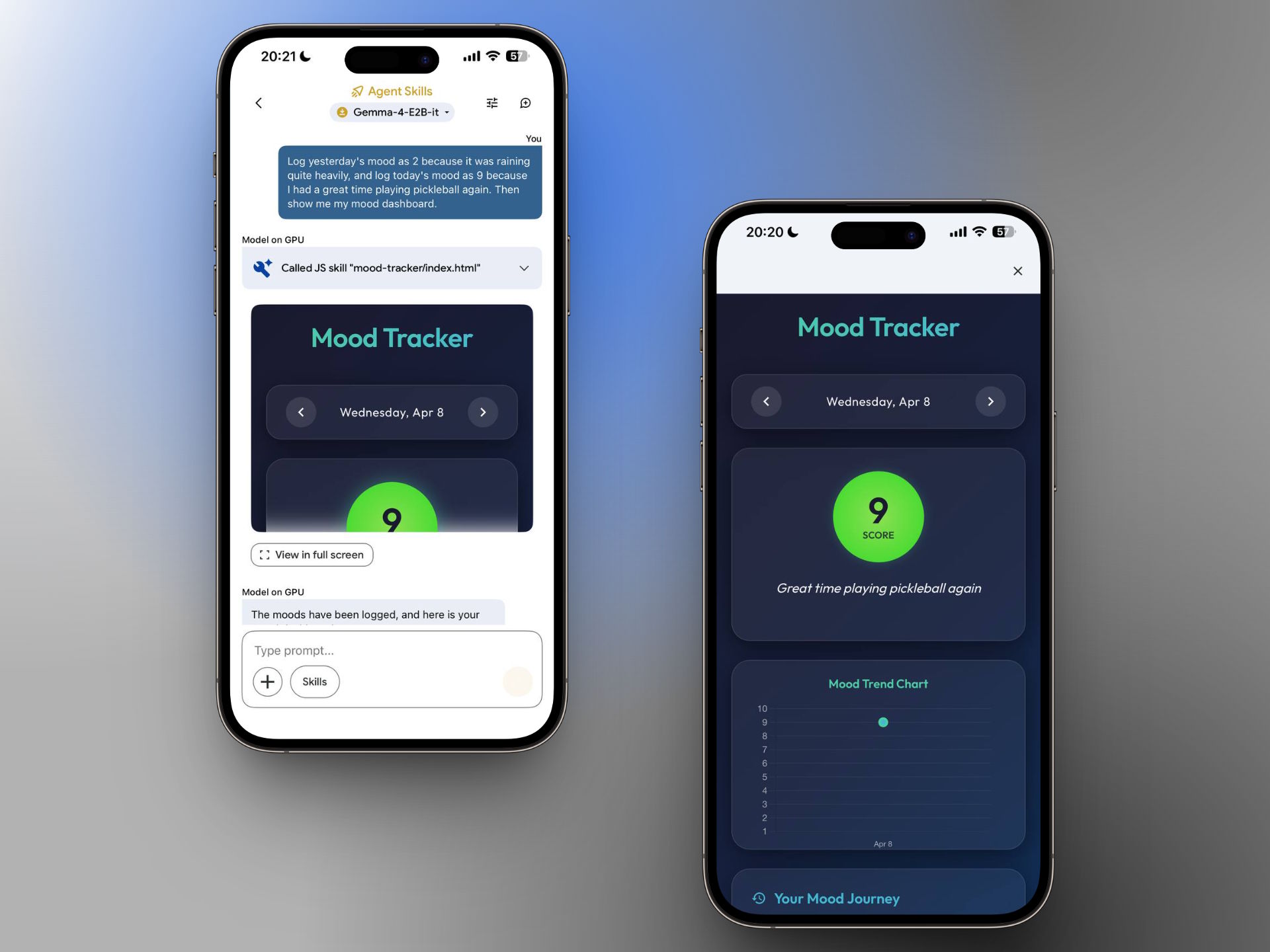

Beyond basic chat, image recognition, and audio transcription, the app ships with what Google calls "agent skills": Wikipedia search, interactive maps, auto-generated summaries, and flashcards. Gemma 4 can also describe photos, turn spoken input into diagrams and visualizations, and even team up with other local models for things like text-to-speech or image generation. Google shows this off with a demo skill that describes and plays animal calls.

Image recognition got a solid upgrade too, according to Google. OCR tasks, pulling text from images, diagrams, or handwriting, now deliver noticeably better results. The model also handles time-related information more reliably, which is important for calendars, reminders, and alarms.

Individually, none of these features break new ground compared to what cloud providers already offer. What stands out is that a demo app running a purely local model on a phone can now use these tools on its own. Developers can build custom skills through GitHub and share them with the community. The built-in tools do need an internet connection, but the model itself runs locally, and chats never get saved.

Gemma 4 sets the stage for the next Gemini Nano

According to Google, Gemma 4 E2B and E4B serve as the foundation for Gemini Nano 4, the next generation of Android's system-wide on-device model. Code written for Gemma 4 today will work with Gemini Nano 4 out of the box when it ships on new flagship devices later this year. Gemini Nano already runs on over 140 million Android devices, powering features like Smart Replies and audio summaries.

Back in December, Google previewed this direction with FunctionGemma, a tiny local model with just 270 million parameters that can route commands to other phone apps. It translates natural language into structured function calls: toggling the flashlight, creating contacts, sending emails, adding calendar entries, pulling up locations on a map, or opening Wi-Fi settings.
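FunctionGemma turns a request into a structured call that the phone then executes. That pattern can be sketched as a small dispatcher. The JSON shape, the function names and the handlers below are all hypothetical. They illustrate function calling in general and are not FunctionGemma's actual output format or any Android API.

```python
import json
from typing import Callable, Dict


# Hypothetical app-side handlers; names are illustrative only.
def toggle_flashlight(on: bool) -> str:
    return f"flashlight {'on' if on else 'off'}"


def create_contact(name: str, phone: str) -> str:
    return f"contact created: {name} ({phone})"


def open_wifi_settings() -> str:
    return "opened Wi-Fi settings"


# Only functions listed here can ever be triggered by the model's output.
REGISTRY: Dict[str, Callable[..., str]] = {
    "toggle_flashlight": toggle_flashlight,
    "create_contact": create_contact,
    "open_wifi_settings": open_wifi_settings,
}


def dispatch(model_output: str) -> str:
    """Parse a JSON function call emitted by a model and run the matching handler."""
    call = json.loads(model_output)
    name = call["name"]
    args = call.get("arguments", {})
    if name not in REGISTRY:
        raise ValueError(f"unknown function: {name}")
    return REGISTRY[name](**args)


if __name__ == "__main__":
    # Pretend the model turned "turn on the flashlight" into this call.
    print(dispatch('{"name": "toggle_flashlight", "arguments": {"on": true}}'))
```

In this design, the model only emits a function name and arguments, while the app owns the handlers. A tiny model therefore never touches system functions directly, and calls outside the registry are rejected.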

How much on-device AI matters strategically became clear earlier this year with the billion-dollar deal between Apple and Google. Since January, we've known that the next generation of Apple's Foundation Models will be built on Google's Gemini technology, powering a sweeping Siri upgrade over the course of this year.
