GTC 2026: Nvidia wants to swap robotics' data problem for a compute problem

Key Points

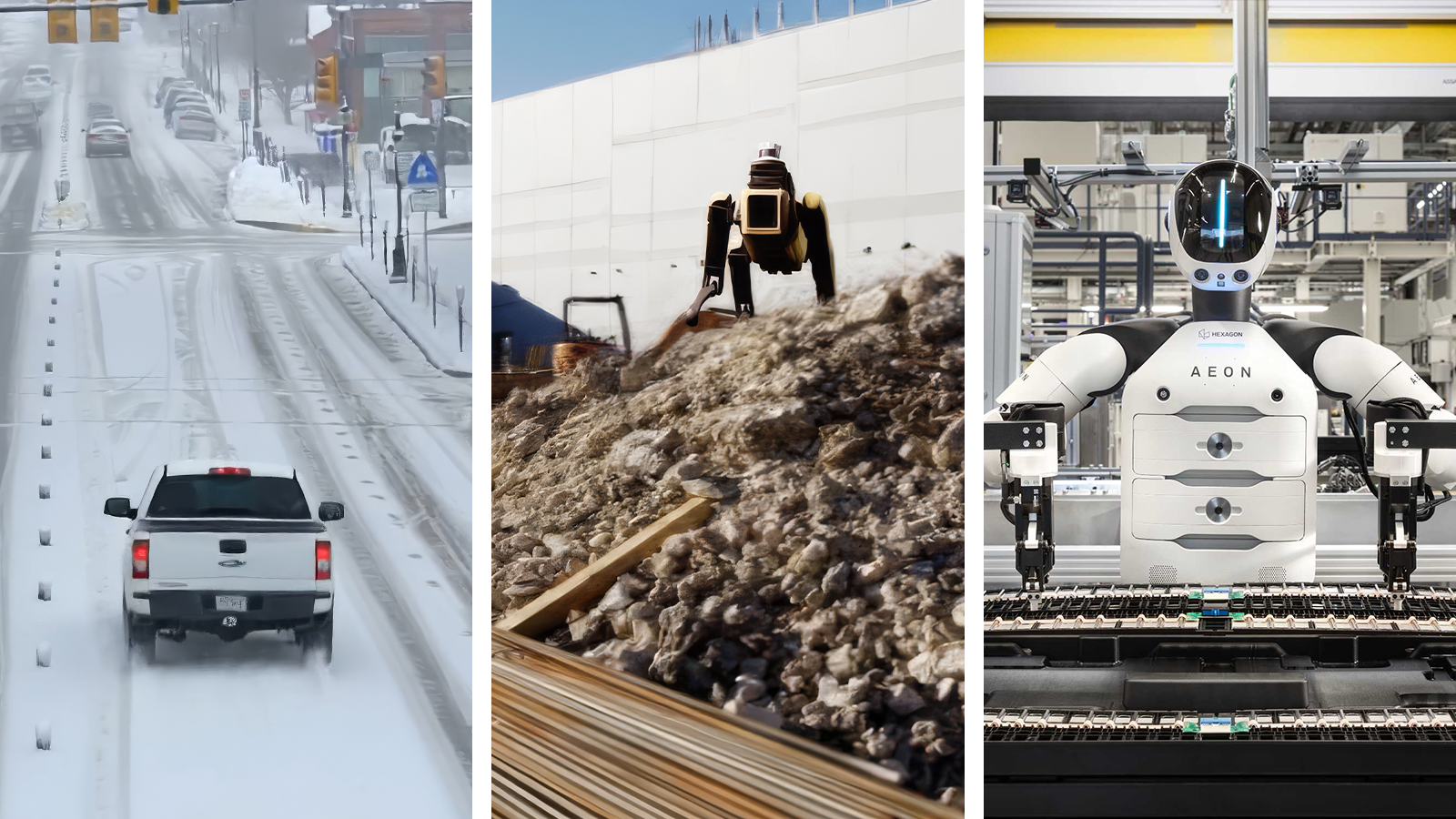

- At GTC 2026, Nvidia expanded its physical AI platform across autonomous driving, industrial robotics, and humanoid robots - with Uber robotaxis planned for Los Angeles in 2027 and major manufacturers like FANUC, ABB, and KUKA integrating Nvidia's simulation and inference tools.

- Nvidia unveiled new AI models including Alpamayo 1.5 for steerable autonomous driving, Cosmos 3 for synthetic world generation, and GR00T N2 for humanoid robots - which Nvidia says outperforms leading vision-language-action models by more than 2x on new tasks.

- A key strategic theme: Nvidia wants to turn robotics' data problem into a compute problem by using simulation pipelines and synthetic data generation to replace expensive real-world data collection, making raw compute power - not fleet size - the bottleneck for training better models.

At GTC 2026, Nvidia is massively expanding its platform for physical AI. Starting in 2027, autonomous vehicles are set to drive through Los Angeles with Uber, industrial robots from FANUC and ABB are getting Nvidia brains, and new models aim to make humanoid robots more capable.

Nvidia used its GTC 2026 developer conference to once again make a big push around "Physical AI." Behind the buzzword is a full platform strategy: Nvidia supplies the chips, models, simulation tools, and safety architectures that partners across the automotive, robotics, medical, and telecom industries can build on.

The announcements span practically the entire value chain of physical AI systems, from training data pipelines to 5G edge computing. CEO Jensen Huang called autonomous vehicles the first multi-trillion-dollar robotics industry, adding that everything that moves will eventually be autonomous.

Uber plans to launch Nvidia-powered robotaxis in Los Angeles in 2027

The most concrete announcement involves an expanded partnership with Uber. A fleet of autonomous vehicles running on the latest version of the DRIVE Hyperion platform and Nvidia's DRIVE AV software is slated to launch in the first half of 2027 in Los Angeles and the San Francisco Bay Area, and to operate in 28 cities across four continents by 2028.

Whether that timeline holds remains to be seen. What's clear is that Nvidia is positioning DRIVE Hyperion as the standard architecture for Level 4 autonomy. Beyond Uber, Nvidia says BYD, Geely, and Nissan are also on board. Nissan is using software from British AI company Wayve. Japanese commercial vehicle manufacturer Isuzu is working with TIER IV on autonomous buses built on the DRIVE AGX Thor chip. Other mobility providers like Bolt, Grab, and Lyft are also listed as partners.

As a safety layer for these systems, Nvidia introduced Halos OS - a three-tier architecture based on the ASIL-D-certified DriveOS that includes an NCAP 5-star safety stack.

Alpamayo 1.5 brings steerable driving with language commands

Nvidia also unveiled Alpamayo 1.5, an open AI model for autonomous driving. It takes driving video, motion history, navigation data, and natural language instructions as inputs and outputs driving trajectories with traceable reasoning. Developers can steer driving behavior directly through text prompts.
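To make the described input and output shape concrete, here is a minimal Python sketch. It is not Alpamayo's actual API: the class names, field names, and the `toy_plan` function are all hypothetical, and the "model" is a trivial stand-in that only shows how a text instruction could change the resulting trajectory while a reasoning string is returned alongside it.

```python
from dataclasses import dataclass
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) position in metres


@dataclass
class DrivingInput:
    # All field names are hypothetical, chosen to mirror the four input types
    # named in the article.
    camera_frames: List[str]     # placeholder identifiers for video frames
    motion_history: List[Point]  # past positions, most recent last
    route_hint: str              # navigation data, e.g. "continue straight"
    instruction: str             # natural language steering prompt


@dataclass
class DrivingOutput:
    trajectory: List[Point]  # planned future positions
    reasoning: str           # human-readable explanation of the plan


def toy_plan(inp: DrivingInput, steps: int = 3, speed: float = 10.0) -> DrivingOutput:
    """Toy stand-in for a driving model, not a real planner.

    Extrapolates straight ahead along x from the last known position and
    halves the step length when the instruction mentions "slow".
    """
    cautious = "slow" in inp.instruction.lower()
    step = speed / 2 if cautious else speed
    x, y = inp.motion_history[-1]
    trajectory = [(x + step * (i + 1), y) for i in range(steps)]
    reasoning = (
        f"Instruction {inp.instruction!r} "
        f"{'requested caution' if cautious else 'did not request caution'}; "
        f"planning {steps} steps of {step} m each."
    )
    return DrivingOutput(trajectory=trajectory, reasoning=reasoning)


example = toy_plan(
    DrivingInput(
        camera_frames=["front_000", "left_000", "right_000"],
        motion_history=[(0.0, 0.0)],
        route_hint="continue straight",
        instruction="Drive slowly near the school",
    )
)
print(example.trajectory)
print(example.reasoning)
```

The point of the sketch is the interface: the instruction is just another input, and the explanation is returned as part of the output rather than logged separately.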

The model supports flexible multi-camera configurations, making it easier to deploy the same AI stack across different vehicle lines. Since launch, the Alpamayo portfolio has been downloaded by more than 100,000 developers, according to Nvidia.

For training and validation, there's Omniverse NuRec, a simulation technology based on 3D Gaussian Splatting. It reconstructs real driving situations for interactive testing and is now generally available through the NGC catalog. Users include dSPACE, Foretellix, and the University of Michigan with its Mcity test facility.

Industrial robots are learning to think

Alongside the automotive push, Nvidia is expanding its robotics platform. FANUC, ABB Robotics, YASKAWA, and KUKA - which together account for well over two million installed robots worldwide, according to Nvidia - are integrating Omniverse libraries and Isaac simulation frameworks into their commissioning solutions. Jetson modules for AI inference at the edge are also being built into their controllers.

Nvidia announced Cosmos 3 as a new model that combines synthetic world generation, visual reasoning, and action simulation, aiming to surpass earlier versions of the Cosmos models.

For humanoid robots, Nvidia is making the foundation model GR00T N1.7 available in early access with a commercial license. It is designed to provide generalized skills, including dexterity control. The GR00T models are meant to serve as generalist models that can handle a wide range of tasks across different robot platforms.

Huang also previewed GR00T N2, which is based on Nvidia's own DreamZero research and uses a new "World Action Model" architecture. According to Nvidia, robots running GR00T N2 complete new tasks in unfamiliar environments more than twice as often as those running leading vision-language-action models. The model currently ranks first on the MolmoSpaces and RoboArena benchmarks and is expected to ship by the end of 2026.

Isaac Lab 3.0, also in early access, is designed to speed up robot learning on DGX infrastructure and builds on the new Newton Physics Engine 1.0.

The partner ecosystem spans both startups and established robotics giants: 1X, AGIBOT, Agility, Boston Dynamics, Figure, Hexagon Robotics, and NEURA Robotics are building humanoid robots on Nvidia's platform. Skild AI is working with major manufacturers ABB and Universal Robots on generalized robot intelligence for various industries, while also helping Foxconn with high-precision assembly on Nvidia's own Blackwell production lines.

Open-source blueprint tackles the training data problem

Nvidia is also taking on one of the central challenges in training physical AI: these models need massive amounts of training data, including rare edge cases that are difficult and expensive to capture in the real world. The Physical AI Data Factory Blueprint is designed to address this. The open reference architecture automates the pipeline from raw data to finished training datasets in three stages: Cosmos Curator for curation, Cosmos Transfer for augmentation, and Cosmos Evaluator for quality assessment.
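The three-stage flow can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the blueprint's code and not the Cosmos tools' APIs: the `Clip` record, the `curate`, `augment`, and `evaluate` functions, the quality scores, and the thresholds are all hypothetical.

```python
from dataclasses import dataclass, replace
from typing import Iterable, List


@dataclass(frozen=True)
class Clip:
    # Hypothetical record for one piece of sensor data.
    clip_id: str
    scenario: str          # e.g. "day", "night", "rain"
    quality: float         # made-up score between 0.0 and 1.0
    synthetic: bool = False


def curate(clips: Iterable[Clip], min_quality: float = 0.5) -> List[Clip]:
    """Stage 1 (curation): drop duplicate IDs and clips below a quality floor."""
    seen = set()
    kept = []
    for clip in clips:
        if clip.clip_id in seen or clip.quality < min_quality:
            continue
        seen.add(clip.clip_id)
        kept.append(clip)
    return kept


def augment(clips: List[Clip], variations: List[str]) -> List[Clip]:
    """Stage 2 (augmentation): add one synthetic variant per clip and condition.

    For simplicity, each variant inherits the quality score of its source clip.
    """
    out = list(clips)
    for clip in clips:
        for variation in variations:
            out.append(
                replace(
                    clip,
                    clip_id=f"{clip.clip_id}-{variation}",
                    scenario=variation,
                    synthetic=True,
                )
            )
    return out


def evaluate(clips: List[Clip], min_quality: float = 0.7) -> List[Clip]:
    """Stage 3 (quality assessment): keep only clips that pass a stricter bar."""
    return [clip for clip in clips if clip.quality >= min_quality]


def run_pipeline(raw: Iterable[Clip], variations: List[str]) -> List[Clip]:
    """Chain the three stages: raw data in, training-ready clips out."""
    return evaluate(augment(curate(raw), variations))


raw_clips = [
    Clip("a", "day", 0.9),
    Clip("a", "day", 0.9),   # duplicate, removed during curation
    Clip("b", "day", 0.3),   # too low quality, removed during curation
    Clip("c", "day", 0.6),   # survives curation, fails final evaluation
]
dataset = run_pipeline(raw_clips, ["night", "rain"])
print([clip.clip_id for clip in dataset])
```

In this toy version, one usable real clip becomes three training clips. That multiplication is where the extra compute goes: each added condition means more generated data without more real-world collection.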

With this and its other simulation platforms, Nvidia wants to turn robotics' data problem into a compute problem. The bottleneck for more powerful models would no longer be, say, the vehicle fleet of an automaker collecting data in the real world - instead, it becomes the raw compute power a company is willing to invest in simulation training.

The orchestration framework OSMO, also announced, integrates with coding agents like Claude Code, OpenAI Codex, and Cursor so that AI agents can independently manage resources and bottlenecks in the data pipeline. Microsoft Azure and Nebius are incorporating the blueprint into their cloud services. A GitHub release is planned for April.

IGX Thor targets operating rooms and satellites

Nvidia's edge computing platform IGX Thor - essentially an AI PC for edge applications - is now generally available and targets safety-critical use cases. Johnson & Johnson is using it for its Polyphonic digital surgery platform, Karl Storz is developing endoscopy tools with it, and Medtronic is evaluating it for surgical robotic systems.

Outside of medicine, Caterpillar is using IGX Thor for an AI cabin assistant, Hitachi Rail for predictive maintenance, and Planet Labs plans to process satellite data directly in orbit. CERN researchers are also using the platform for physics-inspired AI models, according to Nvidia.

T-Mobile and Nokia turn 5G networks into edge AI infrastructure

Together with T-Mobile and Nokia, Nvidia wants to transform 5G mobile networks into distributed edge AI infrastructure. T-Mobile is piloting Nvidia RTX PRO 6000 Blackwell Server Edition GPUs at network sites and testing physical AI applications over the cellular network.

Huang described telecom networks as evolving into AI infrastructure that enables billions of devices to see, hear, and act in real time.

The idea is straightforward: instead of sending heavy AI computations from cameras and robots to the cloud, the work gets offloaded to the nearest network edge site. Pilot projects include a "City Operations Agent" solution for San Jose that optimizes traffic signal control and, according to Nvidia, should cut incident response time by a factor of five. Levatas and Skydio are automating power line inspections over 5G.

Nvidia is also introducing the Metropolis VSS 3 Blueprint, which is designed to enable video AI agents that can search and summarize footage. Specific events should be findable in under five seconds. There are more than 1.5 billion cameras worldwide, and less than one percent of their footage is ever reviewed by humans, according to Nvidia.

AI agents come to chip design and manufacturing

On the software side, Nvidia is working with Cadence, Dassault Systèmes, Siemens, and Synopsys. All four are building AI agents for chip and system workflows. During the presentation, they served as examples of how physical AI models can help in research, development, and manufacturing - and how Nvidia's GPUs accelerate those processes. Cadence is building the "ChipStack AI SuperAgent," Siemens the "Fuse EDA AI Agent," Synopsys the "AgentEngineer" framework, and Dassault Systèmes role-based "Virtual Companions."

Honda is running aerodynamic simulations with Synopsys Ansys Fluent on the Grace Blackwell platform 34 times faster than with CPUs, according to Nvidia. JLR and Mercedes-Benz are using Siemens Simcenter STAR-CCM+ on Nvidia infrastructure. MediaTek is accelerating Cadence Spectre by a factor of six with H100 GPUs.

In semiconductor manufacturing, Samsung, SK hynix, and TSMC are using GPU-accelerated tools for lithography and physical verification. Siemens' new Digital Twin Composer, built on Omniverse libraries, is designed to provide industrial digital twins for Foxconn, HD Hyundai, PepsiCo, and KION. Cloud infrastructure comes from AWS, Google Cloud, Microsoft Azure, and Oracle.
