Luma AI's Uni-1 could be the first real challenger to Google's Nano Banana image dominance

Update March 23, 2026:

Uni-1 (see below) is now available. In human preference tests (Elo rating), Uni-1 takes first place in the overall, style/editing, and reference-based generation categories, according to Luma Labs. For pure text-to-image generation, it ranks second behind Google's Nano Banana.

The model nails my benchmark prompt, on par with Nano Banana Pro, possibly even better. That's a noticeable step up from the new Midjourney v8, which struggled with the same prompt. One caveat: the generated image went through a Luma image generation agent, so the results might differ slightly from the upcoming API (see below). You can try Uni-1 for free at Luma Labs.

Overall, Uni-1 gets close to Google's flagship image model while mostly coming in cheaper at comparable resolution: at 2K, the cost through the upcoming API runs from about $0.09 per image for plain text-to-image to about $0.11 with eight reference images. At that upper end, it costs more than Nano Banana 2 but still less than Nano Banana Pro.

| Feature | Uni-1 | Nano Banana 2 | Nano Banana Pro |
|---|---|---|---|
| Text to Image (2048px) | $0.0909 | $0.101 | $0.134 |
| Image edit / i2i (2048px) | $0.0933 | $0.101 | $0.134 |
| Multi-ref, 1 img (2048px) | $0.0933 | $0.101 | $0.134 |
| Multi-ref, 2 imgs (2048px) | $0.0957 | $0.101 | $0.134 |
| Multi-ref, 8 imgs (2048px) | $0.1101 | $0.101 | $0.134 |

Nano Banana 2 does offer lower resolutions at cheaper prices, though: a 0.5K image costs about $0.045, and a 1K image runs about $0.067.
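
The 2048px Uni-1 prices in the table are consistent with a simple linear model: a base price of $0.0909 plus roughly $0.0024 per input image. That per-image increment is inferred from the table rows, not an officially published rate, and the function names below are purely illustrative. As a rough sketch under that assumption, the break-even point against Nano Banana 2's flat price works out like this:

```python
# Per-image prices at 2048px in USD, taken from the table above.
UNI1_TEXT_TO_IMAGE = 0.0909
# Assumption: inferred from the table (0.0933 - 0.0909), not an official rate.
UNI1_PER_REFERENCE = 0.0024
NANO_BANANA_2 = 0.101  # same price in every row of the table


def uni1_cost(num_refs: int) -> float:
    """Estimated Uni-1 price per image for a given number of input images."""
    if num_refs < 0:
        raise ValueError("num_refs must be non-negative")
    return round(UNI1_TEXT_TO_IMAGE + UNI1_PER_REFERENCE * num_refs, 4)


def max_refs_cheaper_than(flat_price: float, limit: int = 8) -> int:
    """Largest reference count (up to limit) where Uni-1 undercuts flat_price.

    The limit defaults to 8 because that is the largest case in the table;
    anything beyond it would be extrapolation. Returns -1 if Uni-1 is never
    cheaper.
    """
    best = -1
    for n in range(limit + 1):
        if uni1_cost(n) < flat_price:
            best = n
        else:
            break
    return best


if __name__ == "__main__":
    for n in (0, 1, 2, 8):
        print(f"{n} reference image(s): ${uni1_cost(n):.4f}")
    print("Cheaper than Nano Banana 2 up to",
          max_refs_cheaper_than(NANO_BANANA_2), "reference images")
```

Under this model, Uni-1 stays below Nano Banana 2's $0.101 with up to four reference images and costs more from five onward. Actual API billing may differ once Luma publishes final pricing details.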

Original article from March 8, 2026:

Luma AI's new Uni-1 image model tops Nano Banana 2 and GPT Image 1.5 on logic-based benchmarks

Luma AI introduces Uni-1, its first model to combine image understanding and image generation in a single architecture.

Like Google's Nano Banana Pro and GPT Image 1.5, Uni-1 is built on an autoregressive transformer, an AI model that generates content token by token in sequence, instead of pulling images out of noise the way traditional diffusion models do. Text and images share the same processing pipeline.

Luma says the model can reason through prompts before and during generation, breaking down complex instructions and planning out scenes. This approach typically leads to much more accurate prompt following, and Uni-1 is no exception. It can, for example, take several photos and merge them into an entirely new composition.

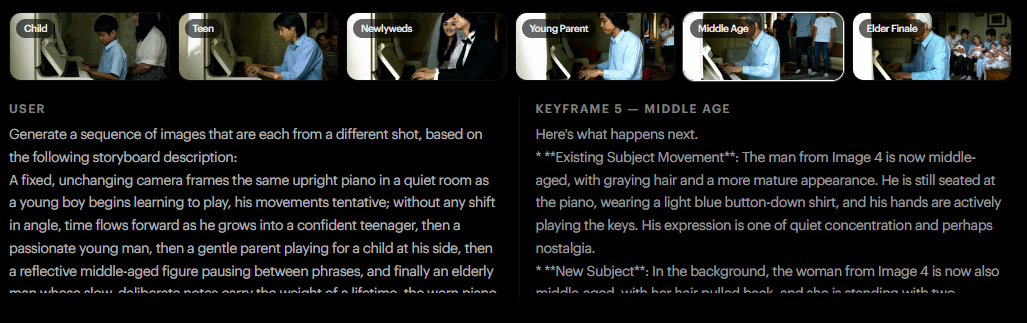

Beyond basic generation, Luma says Uni-1 can refine subjects across multiple conversation turns while keeping context intact, convert images into more than 76 art styles, accept sketches and visual instructions as input, and transfer identities, poses, and compositions from reference photos into new images. In one demo, the model generated an entire sequence from a single reference image, gradually aging a pianist from childhood to old age.

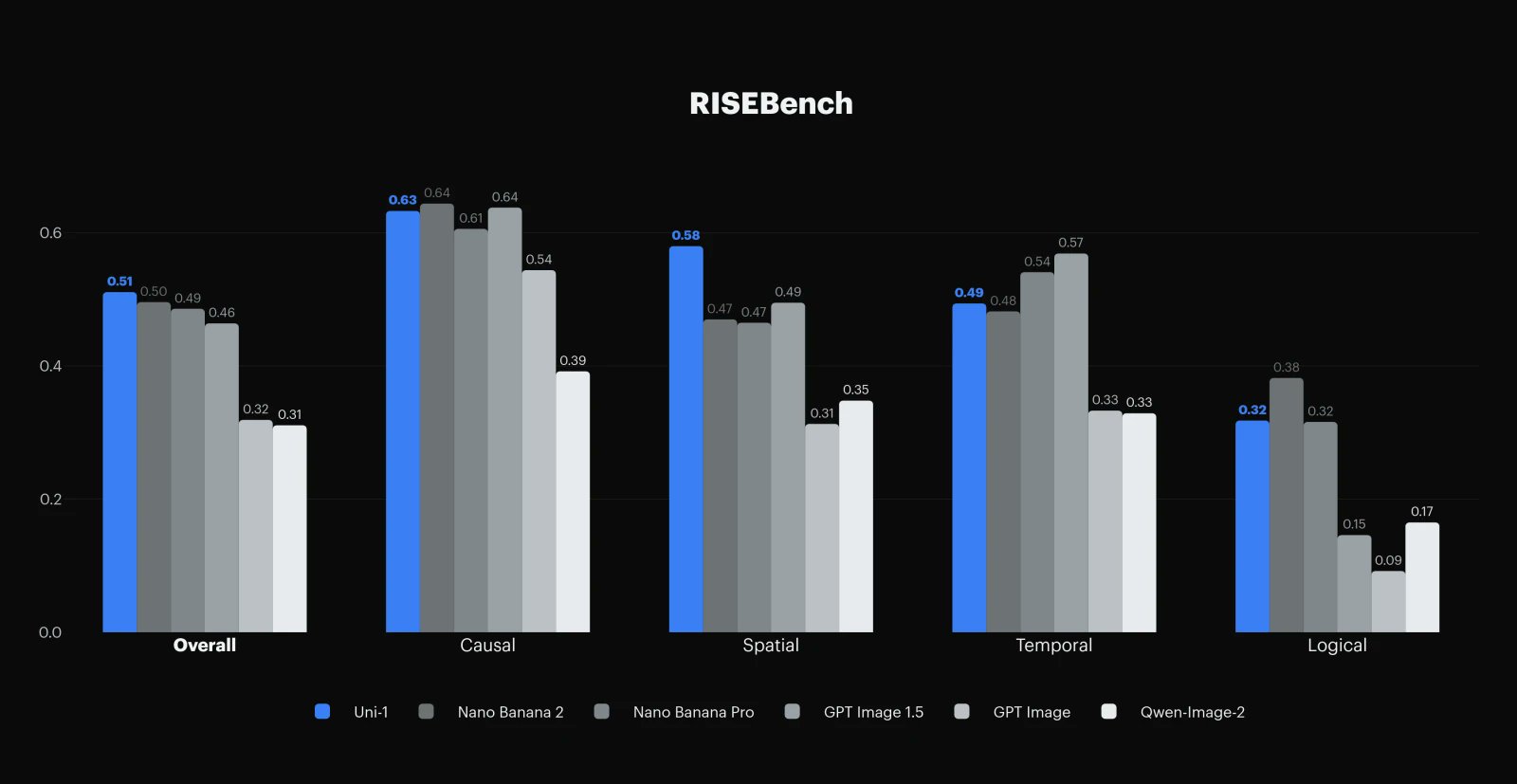

According to Luma, Uni-1 scores highest on the RISEBench test for logic-based image processing, narrowly beating both Nano Banana 2 and GPT Image 1.5. The image generation capability also boosts the model's visual understanding. In object recognition, for instance, it nearly matches Google's Gemini 3 Pro. The model supports multiple languages.

Uni-1 will soon be available through Luma Agents, a newly launched creative assistant, and the Luma API. No pricing has been announced yet.
