Meta's AI researchers are studying the human brain to learn how to develop better artificial intelligence. Along the way, AI could help us better understand the human brain.

Despite the massive progress of artificial intelligence in recent years, especially in speech and image processing, AI systems still learn far less efficiently than humans.

Large language models process gigantic amounts of data to learn a language. The more data, the better: language models are trained on billions of sentences until they more or less master a language. For humans, a few million sentences are roughly enough.

"Humans, and kids in particular, acquire language extremely efficiently. They learn to do this rapidly and with an extremely small amount of data. This requires a skill which at the moment remains unknown," says Jean-Rémi King, senior AI researcher at Meta AI.

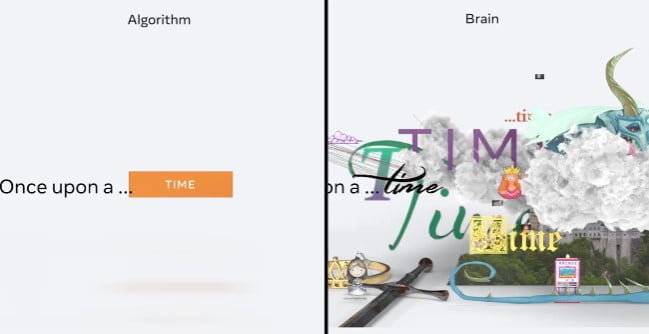

Despite being trained on enormous amounts of data, AI systems show significant weaknesses in consistency and logic when generating longer texts, up to entire stories. There is a reason for this: AI usually predicts only the next word, whereas humans construct ideas, actions, and entire narratives from individual words.

On the trail of human intelligence

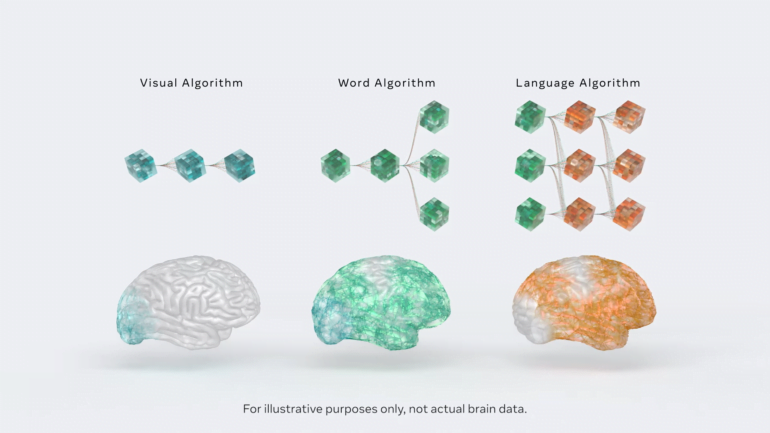

In a long-term study, Meta researchers therefore want to compare the activations of artificial neural networks with those of the human brain when processing natural language. They hope to learn more about how the brain and AI models differ in the way they make predictions.

Meta's AI team reports initial findings based on a comparison between brain activity recorded in 345 fMRI scans of people listening to a narrative and the neural activations of language models that received the same narrative as input.

The researchers found that the language models whose activations most closely resemble brain activity are the ones that best predict the next word from its context ("Once upon … a time"). In their view, this context-based prediction is at the heart of self-supervised learning and could be the key to how humans understand language.

Meta's goal: human-level AI

"Our results show that specific brain regions, such as the prefrontal and parietal cortices, are best accounted for by language models enhanced with deep representations of far-off words in the future," Meta's AI team writes.

According to the team, these initial results shed light on the "computational organization of the human brain and its inherently predictive nature," paving the way for better AI models.

Still, human prediction goes far beyond that of AI language models, and many of the workings of the human brain remain unclear.

However, the research to date has shown that there are quantifiable similarities between brains and AI models, from which it may be possible to draw conclusions about how the human brain works. "Deep learning tools have made it possible to clarify the hierarchy of the brain in ways that wasn’t possible before," the team writes. That, in turn, could open up new opportunities in neuroscience.

The long-term study on the human brain is part of Meta's effort to create human-level artificial intelligence, according to the company. Meta's chief AI scientist, Yann LeCun, recently shared his vision of the AI architecture of the future, which is also modeled after the human brain.