Midjourney V8 rolls out with 5x faster generation but charges 4x more for its best features

Key Points

- Midjourney has released an early version of its V8 model for community testing, with image generation running roughly five times faster than before and a new --hd mode that renders images natively at 2K resolution.

- The updated model is reported to follow detailed instructions more accurately, produce more coherent images, and handle text rendering within images more reliably than its predecessor.

- Despite these improvements, Midjourney V8 remains a 100% diffusion-based model and still falls short of autoregressive approaches when it comes to precise prompt adherence.

Midjourney is rolling out an early version of its new V8 model for community testing. Image generation is reportedly much faster and more detailed, but some features cost four times as much.

Midjourney has shipped an early version of its V8 model for testing on the Alpha website, asking the community to kick the tires and share feedback. The company calls this a fundamentally new model with its own strengths and weaknesses, one that may require entirely new prompting strategies.

Image generation is roughly five times faster than before, according to Midjourney. The update also introduces a new --hd mode that renders images natively at 2K resolution, plus a --q 4 mode for better image coherence. V8 ships with support for multiple aspect ratios and parameters like --chaos, --weird, --exp, and --raw. Existing V7 personalization profiles, moodboards, and style references (srefs) "should" carry over and remain backward compatible.

Midjourney says V8 is significantly better at following detailed instructions. The model's grasp of individual aesthetics through personalization, style references, and mood boards has noticeably improved, and generated images come out more coherent and detailed. Text rendering—putting readable text inside generated images—also works more reliably than in previous versions, as long as users wrap the desired text in quotation marks in the prompt, the company claims.
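As a rough illustration of how such a prompt might be assembled, here is a minimal Python sketch. The helper `build_prompt` and its arguments are hypothetical and not part of any Midjourney tooling; it only builds the string a user would paste into the prompt box, placing the desired text in quotation marks and appending flags named in this article.

```python
def build_prompt(description, text_in_image=None, flags=()):
    """Compose a prompt string: description, optional quoted text, then flags.

    Hypothetical helper for illustration only; it does not call any Midjourney API.
    """
    parts = [description.strip()]
    if text_in_image:
        # Per Midjourney's claim, text to be rendered goes inside quotation marks.
        parts.append(f'with the text "{text_in_image}"')
    # Flags such as --hd or --raw are appended at the end of the prompt.
    parts.extend(flags)
    return " ".join(parts)


prompt = build_prompt(
    "A neon diner sign at dusk",
    text_in_image="OPEN LATE",
    flags=("--hd", "--raw"),
)
print(prompt)
# prints: A neon diner sign at dusk with the text "OPEN LATE" --hd --raw
```

The phrasing "with the text" is just one way to introduce the quoted string; the only part taken from Midjourney's guidance is the quotation marks themselves.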

Diffusion model still trips up on complex prompts

That said, as a pure diffusion-based model, Midjourney still falls short of competitors that have started blending autoregressive components into their image generation pipelines. Models like Google's Nano Banana and OpenAI's GPT Image 1.5 use these hybrid architectures to boost prompt accuracy, and the difference has been obvious with older Midjourney models.

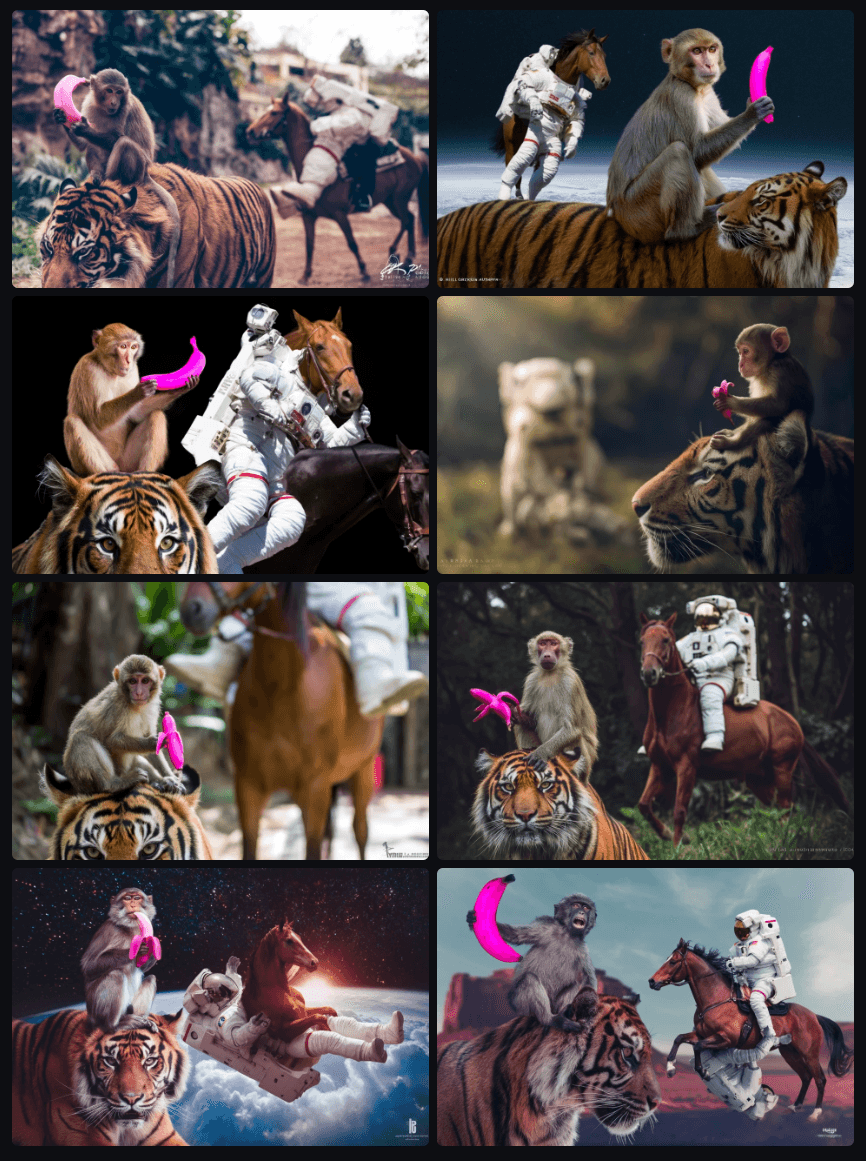

Early signs suggest V8 hasn't fully closed that gap, though it's too soon to say for sure. In an initial test using a complex astronaut prompt that has become something of an informal benchmark for me, Midjourney performed significantly worse than both autoregressive models. The abstract concept (a horse riding an astronaut, not the other way around) is something Midjourney just couldn't nail. Even Midjourney's more direct competitor, Flux, did a slightly better job. As more image generators adopt these mixed architectures, Midjourney's diffusion-only strategy could become an increasingly tough sell for users who need precise prompt control.

A hyper-realistic DSLR photo. A monkey holding a pink banana is sitting on a tiger in the foreground. In the background, a HORSE is RIDING AN ASTRONAUT. The astronaut is underneath like a living "spacesuit horse saddle," and the HORSE is clearly on top, in control, as the rider. Make it 100% unambiguous: the HORSE is the rider and the ASTRONAUT is being ridden, NOT the other way around. High-resolution, sharp focus, realistic lighting.

Midjourney says the standard V8 aesthetic isn't finished yet and recommends that users looking for a photorealistic or more controlled look jump straight into --raw mode or work with mood boards and style references. The company also claims that cranking up personalization (--stylize 1000) currently gets "the most" out of the model, and that V8 really shines when users lean heavily into the stylization systems and write longer, more specific prompts.

Premium features cost four times as much with no relax mode at launch

The pricing is likely to sting for some users. Midjourney says jobs using --hd, --q 4, style references, or mood boards currently run four times slower than standard jobs and cost four times as much. Relax mode, a popular option that lets users generate images more slowly but at no extra cost, isn't available at launch. Midjourney says it's building out a new server cluster for Relax and working on cheaper render modes.
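To make the pricing rule concrete, here is a small Python sketch under stated assumptions. The `Job` class and its field names are hypothetical, and the default quality value of 1 is an assumption. The article only says that jobs using any of these features cost four times as much; it does not say whether multipliers stack when several features are combined, so this sketch applies a flat 4x and does not model stacking.

```python
from dataclasses import dataclass

# From the article: premium jobs cost four times as much as standard jobs.
PREMIUM_MULTIPLIER = 4


@dataclass
class Job:
    """Hypothetical description of a single generation job."""
    hd: bool = False                    # True if the job uses --hd
    quality: int = 1                    # 4 corresponds to --q 4 (default is an assumption)
    uses_style_reference: bool = False  # True if a style reference (sref) is used
    uses_moodboard: bool = False        # True if a mood board is used


def cost_multiplier(job):
    """Return the relative cost of a job compared with a standard one.

    Assumption: a flat 4x applies if any premium feature is used.
    Whether combined features cost more is not stated in the article.
    """
    premium = (
        job.hd
        or job.quality == 4
        or job.uses_style_reference
        or job.uses_moodboard
    )
    return PREMIUM_MULTIPLIER if premium else 1


print(cost_multiplier(Job()))         # prints: 1
print(cost_multiplier(Job(hd=True)))  # prints: 4
```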
