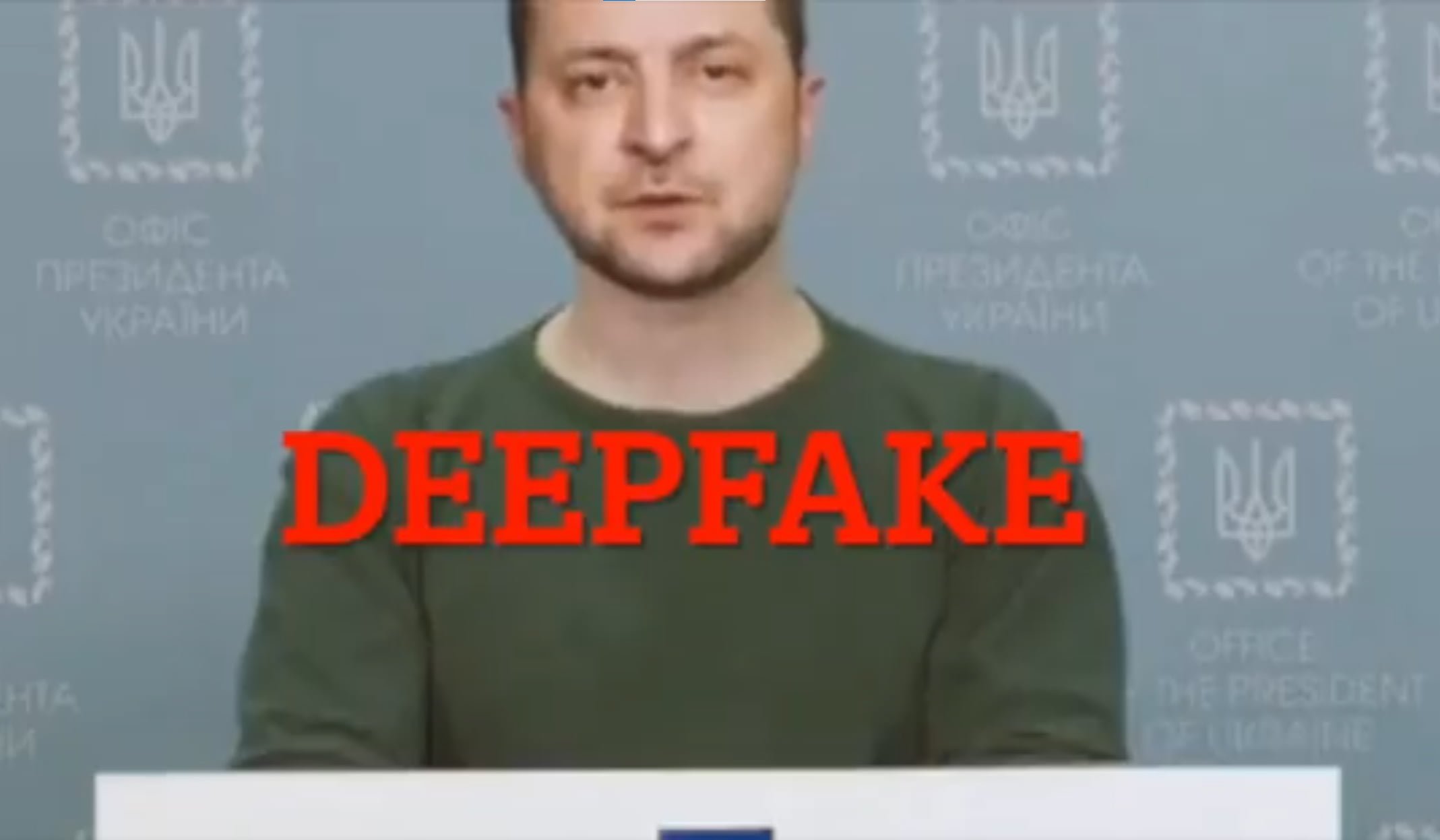

In a video, Ukrainian President Volodymyr Zelenskyy calls on his people to lay down their arms. It could be the first attempt at major political manipulation using deepfakes.

He has to make difficult decisions, says Fake Zelenskyy, but the Ukrainian people should face the truth: the defense has failed and there is no tomorrow. He resigns from his post and advises Ukrainians to lay down their weapons and return to their families. "You should not die in this war," Fake Zelenskyy says.

Parallel attack via news site and social media

The creators of the fake video first hacked the website of Ukraine24 and spread the video through its channels. It then ended up on social networks such as Twitter, Facebook and Instagram.

However, it did not have a serious chance of going viral: the fake is poorly made. Zelenskyy's head looks like it's glued to his neck, and the lighting on his face and body is strikingly different. Even laymen notice that something is wrong here.

Fake Zelenskyy also reportedly speaks with a Russian accent, and his voice has the slightly echoey, choppy sound typical of machine-generated speech. The facial expressions, on the other hand, are convincing.

Video: via Mikael Thalen / Twitter

The Ukrainian president promptly reacted to the clumsy fake and published a rebuttal in a short smartphone video via his official channels. The Ukrainian government had also warned citizens as early as the beginning of March about possible deepfake videos in which Zelenskyy appears to announce a surrender to the Russian military.

"This will not be a real video, but created by machine learning algorithms. Videos created using such technologies are almost indistinguishable from real videos," the Ukrainian Centre for Strategic Communications and Information Security warned on Facebook on March 2.

Zelenskyy video: Deepfake or not?

The Center for Security and Emerging Technology and Nathaniel Gleicher, responsible for security policies at Meta, call the Zelenskyy fake video a deepfake. Meta removed the video from its platforms.

Mounir Ibrahim of the video authenticity verification firm Truepic is leaving open, pending an in-depth review, whether the video is a deepfake, i.e., a video forged with AI technology, or a fake created by other means. As soon as there is a consensus on this, I will update the article.

If the deepfake suspicion proves to be correct, the Zelenskyy fake video would be historic, despite its poor quality: it would be the first documented attempt at major political manipulation using a deepfake. Researchers and politicians have been warning about this potential danger for years.

The fact that the Zelenskyy fake was quickly discovered and deleted due to its botched execution, and subsequently did no damage, should not be taken as an all-clear or a sign of social media's resilience to fake videos.

Demonstrations show that high-quality deepfakes that are barely recognizable as forgeries are possible, and they would probably have a very different effect. The Zelenskyy forgery, too, is only a few hours of work away from being of much better quality.

Recent studies conclude that people can be fooled by higher-quality deepfakes even when they are specifically looking for them.