Sims running on ChatGPT are a glimpse into the social future of AI

Characters in video games could soon feel even more realistic. But these AI sims could also be helpful outside of gaming.

In a new paper, researchers from Google and Stanford University simulate human behavior using large language models. The paper, titled "Generative Agents: Interactive Simulacra of Human Behavior", relies on ChatGPT and offers more than just an exciting glimpse into the future of video games.

25 AI Sims live in their own world

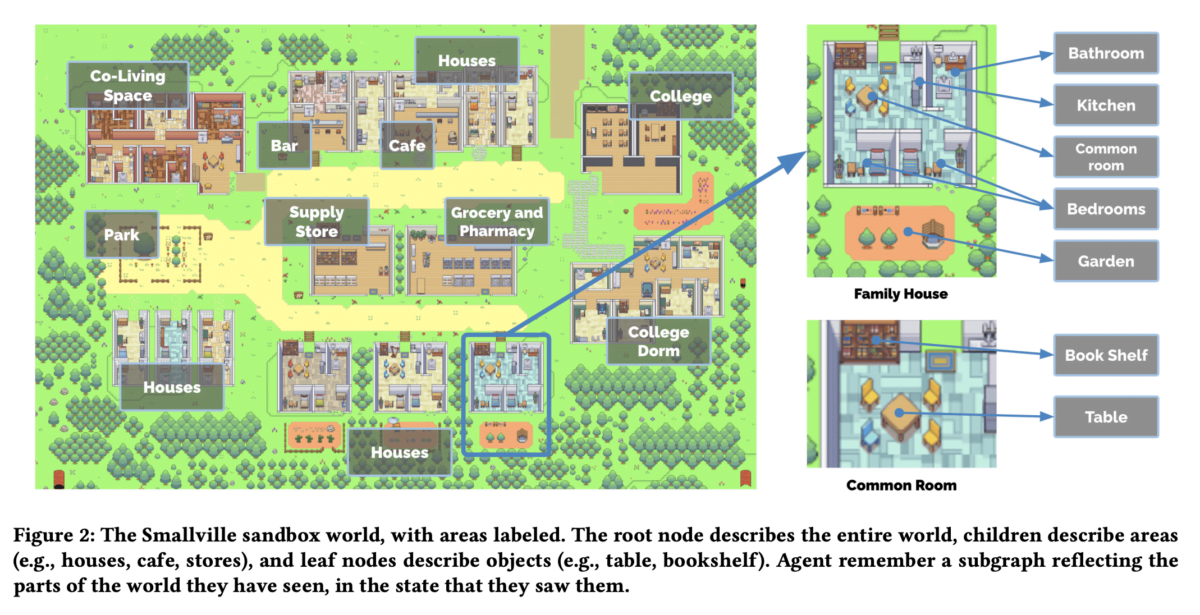

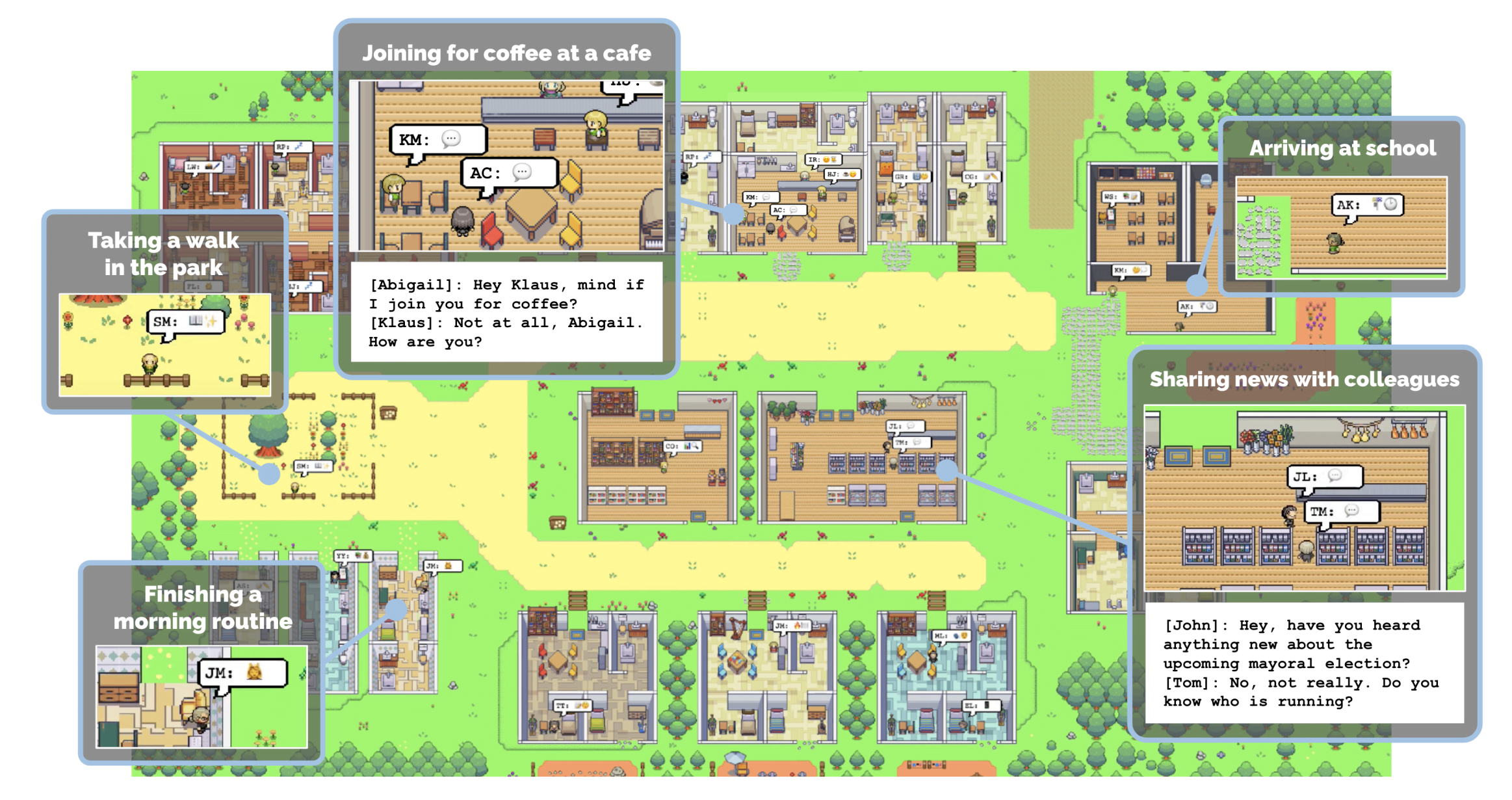

Inspired by the game series "The Sims", the scientists have set up a sandbox environment in which 25 AI agents go about their daily work completely autonomously.

They gave each character a brief, 1,000-character description of their profession, personality, and most importantly, their relationships to the other residents of "Smallville". In the case of AI agent John Lin, for example, it looks like this:

John Lin is a pharmacy shopkeeper at the Willow Market and Pharmacy who loves to help people. He is always looking for ways to make the process of getting medication easier for his customers; John Lin is living with his wife, Mei Lin, who is a college professor, and son, Eddy Lin, who is a student studying music theory; John Lin loves his family very much; John Lin has known the old couple next-door, Sam Moore and Jennifer Moore, for a few years; John Lin thinks Sam Moore is a kind and nice man; John Lin knows his neighbor, Yuriko Yamamoto, well; John Lin knows of his neighbors, Tamara Taylor and Carmen Ortiz, but has not met them before; John Lin and Tom Moreno are colleagues at The Willows Market and Pharmacy; John Lin and Tom Moreno are friends and like to discuss local politics together; John Lin knows the Moreno family somewhat well — the husband Tom Moreno and the wife Jane Moreno.
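According to the paper, the semicolon-delimited phrases of such a description are entered into the agent's memory as its first memories when the simulation starts. A minimal Python sketch of that seeding step, where `seed_memories` is a hypothetical helper and the shortened description is only for illustration:

```python
def seed_memories(description: str) -> list[str]:
    """Split a semicolon-delimited character description into initial memories."""
    # Each non-empty, whitespace-trimmed phrase becomes one memory entry.
    return [phrase.strip() for phrase in description.split(";") if phrase.strip()]


# Shortened version of John Lin's description, for illustration only.
john_lin = (
    "John Lin is a pharmacy shopkeeper at the Willow Market and Pharmacy who loves to help people; "
    "John Lin loves his family very much; "
    "John Lin thinks Sam Moore is a kind and nice man"
)

for memory in seed_memories(john_lin):
    print(memory)
```

Each printed line is one seed memory that the agent can later retrieve like any other observation.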

"Believable individual and emergent social behaviors"

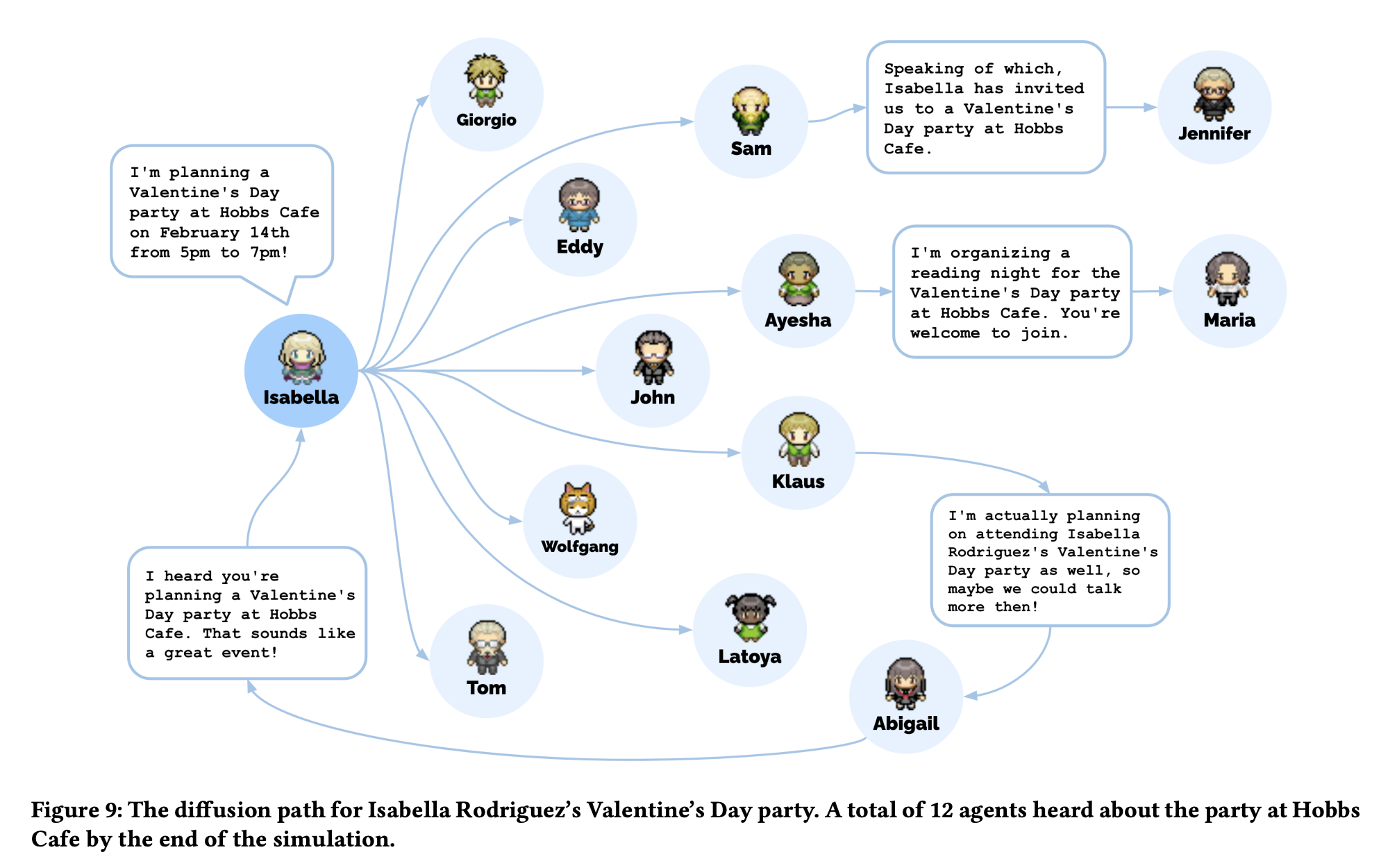

The sim characters exhibit "believable individual and emergent social behaviors" once they are released into the virtual world, the team says. One example is a Valentine's Day party initiated by an individual resident.

Within two virtual days, the Sims invite one another to the party, arrange to meet there, and show up at the appointed time and place. Not all of them, of course: just like real people, some of the residents don't feel like partying.

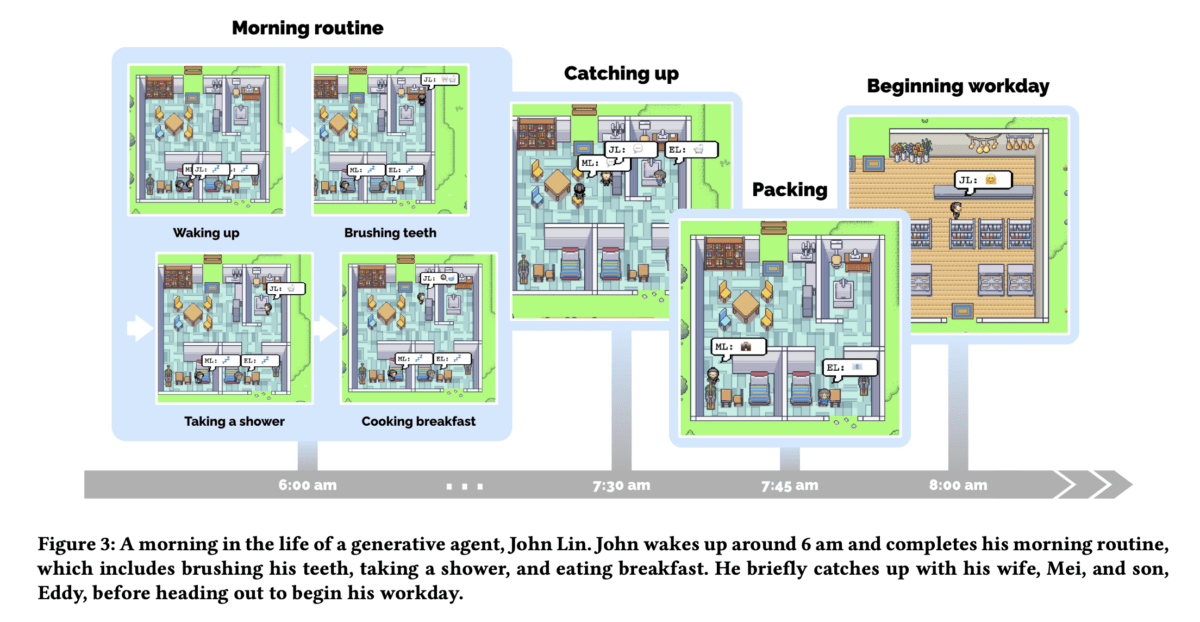

This complex behavior is made possible by the underlying architecture: each AI agent has a memory, observes its environment, makes plans, and reflects on its surroundings and social relationships.

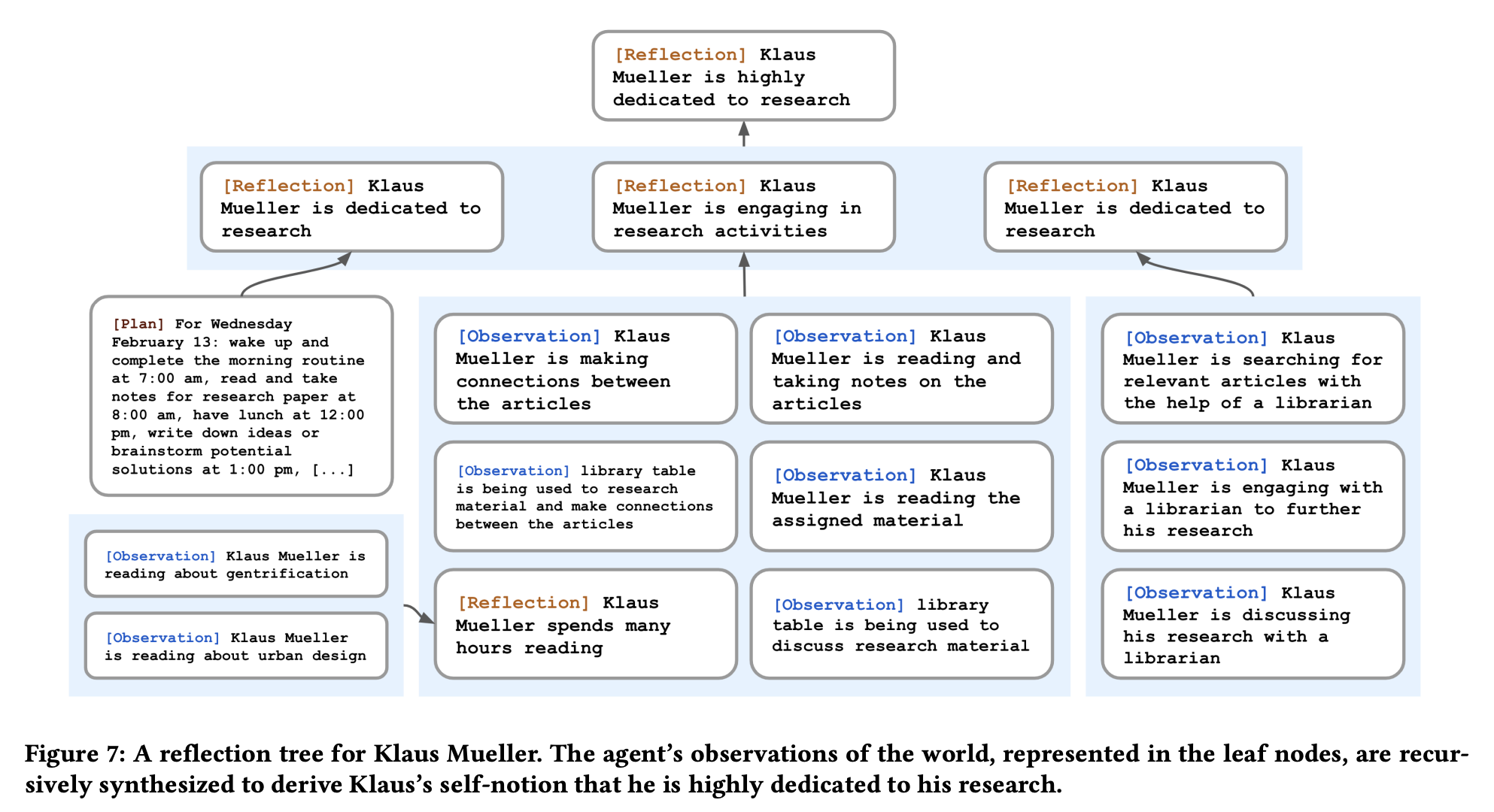

This loop of observation, planning, and reflection drives the interactions of AI agents and determines their character. AI agents can reflect not only on their observations, but also on their plans and past reflections. For example, Klaus Müller's self-image as someone who is highly dedicated to research is the result of several independent observations and reflections.
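The paper triggers reflections when the importance of the events an agent has recently perceived adds up past a threshold. The sketch below shows only that trigger; the reflection itself, in which the language model is prompted to draw higher-level conclusions such as Klaus Müller's self-image, is left out. `Observation`, `ReflectionTrigger`, the reset after each reflection, and the default threshold of 150 are assumptions for illustration, not the authors' code.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    description: str
    importance: int  # 1 to 10, e.g. rated by the language model


class ReflectionTrigger:
    """Decides when an agent should pause and reflect (hypothetical helper).

    Importance scores are summed since the last reflection; once the sum
    exceeds the threshold, a reflection is due and the sum starts over.
    The default threshold of 150 is an assumption.
    """

    def __init__(self, threshold: int = 150) -> None:
        self.threshold = threshold
        self.accumulated = 0

    def observe(self, observation: Observation) -> bool:
        """Record one observation; return True if the agent should reflect now."""
        self.accumulated += observation.importance
        if self.accumulated > self.threshold:
            self.accumulated = 0  # reset after triggering a reflection
            return True
        return False


# Usage with a small threshold so the trigger fires quickly.
trigger = ReflectionTrigger(threshold=10)
for obs in [
    Observation("works on a research paper", 4),
    Observation("eats lunch at the cafe", 2),
    Observation("discusses his research with a colleague", 5),
]:
    print(obs.description, trigger.observe(obs))
```

A low threshold makes an agent reflect often; a high one means only a run of significant events prompts reflection.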

To make this process work, every observation, plan, and reflection is stored in memory and can be retrieved at any time. To ensure that AI agents act and interact with other agents in a meaningful way, each memory is scored on three aspects: "Recency" assigns a higher score to memories that were accessed recently. "Importance" distinguishes between mundane and core memories, e.g. between eating breakfast and the end of a relationship. "Relevance" assigns higher scores to memories that are related to the current situation and thus provide meaningful context for the next action.
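A minimal Python sketch of how the three scores could be combined into a single retrieval ranking. It assumes, as we understand the paper to describe, that recency decays exponentially with the hours since a memory was last accessed, that relevance is the cosine similarity between embedding vectors of the memory and the current situation, and that each score is scaled to the range 0 to 1 and summed with equal weights. `MemoryRecord`, `retrieve`, the decay factor of 0.995, and the toy two-dimensional embeddings are illustrative assumptions; a real system would obtain embeddings from an embedding model.

```python
import math
from dataclasses import dataclass


@dataclass
class MemoryRecord:
    description: str
    last_accessed: float    # simulation hours (assumed unit)
    importance: float       # 1 to 10, as rated by the language model
    embedding: list[float]  # vector from an embedding model (toy values here)


def cosine_similarity(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def min_max(values: list[float]) -> list[float]:
    """Scale values to [0, 1]; if all values are equal, map them to 0.0."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def retrieve(memories: list[MemoryRecord], query_embedding: list[float],
             now: float, k: int = 3, decay: float = 0.995) -> list[MemoryRecord]:
    """Return the k memories with the highest equally weighted combined score."""
    recency = min_max([decay ** (now - m.last_accessed) for m in memories])
    importance = min_max([m.importance for m in memories])
    relevance = min_max([cosine_similarity(m.embedding, query_embedding) for m in memories])
    scores = [r + i + v for r, i, v in zip(recency, importance, relevance)]
    ranked = sorted(zip(scores, memories), key=lambda pair: pair[0], reverse=True)
    return [m for _, m in ranked[:k]]


memories = [
    MemoryRecord("ate breakfast", last_accessed=9.0, importance=1, embedding=[1.0, 0.0]),
    MemoryRecord("broke up with partner", last_accessed=2.0, importance=9, embedding=[0.0, 1.0]),
    MemoryRecord("talked about the breakup with a friend", last_accessed=8.0,
                 importance=6, embedding=[0.2, 1.0]),
]
for m in retrieve(memories, query_embedding=[0.0, 1.0], now=10.0, k=2):
    print(m.description)
```

With the query pointing toward the breakup, the recent conversation about it outranks the breakup itself, which is older, while breakfast, recent but trivial and unrelated, drops out of the top two.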

Some of these seemingly complex ratings are handled by ChatGPT itself, i.e. GPT-3.5 Turbo. For "importance", a simple prompt is enough:

On the scale of 1 to 10, where 1 is purely mundane (e.g., brushing teeth, making bed) and 10 is extremely poignant (e.g., a break up, college acceptance), rate the likely poignancy of the following piece of memory.

Memory: buying groceries at The Willows Market and Pharmacy

Rating: <fill in the blank>
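The prompt above can be filled in and the model's answer parsed with a few lines of Python. This is a sketch under assumptions: the model call itself is left out, and `build_importance_prompt`, `parse_rating`, and the fallback value of 1 for unparseable replies are hypothetical choices, not taken from the paper.

```python
import re

# Prompt text from the paper, with a placeholder for the memory to be rated.
IMPORTANCE_PROMPT = (
    "On the scale of 1 to 10, where 1 is purely mundane (e.g., brushing teeth, making bed) "
    "and 10 is extremely poignant (e.g., a break up, college acceptance), rate the likely "
    "poignancy of the following piece of memory.\n"
    "Memory: {memory}\n"
    "Rating: "
)


def build_importance_prompt(memory: str) -> str:
    """Insert one memory into the rating prompt (hypothetical helper)."""
    return IMPORTANCE_PROMPT.format(memory=memory)


def parse_rating(completion: str, default: int = 1) -> int:
    """Extract the first integer from the model's reply and clamp it to 1..10."""
    match = re.search(r"\d+", completion)
    if match is None:
        return default  # assumed fallback when the reply contains no number
    return max(1, min(10, int(match.group())))


prompt = build_importance_prompt("buying groceries at The Willows Market and Pharmacy")
print(prompt)
print(parse_rating(" 2"))
```

Clamping keeps a stray reply such as "12/10" from breaking the 1 to 10 scale that the memory scores rely on.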

GPT-4 and "parasocial relationships"

The researchers expect the architecture to stay the same as large language models evolve, while switching to a more capable model such as GPT-4 should improve the expressiveness and performance of the underlying prompts.

"Generative agents have vast potential applications that extend beyond the sandbox demonstration presented in this work," the researchers conclude. They could be used to populate forums or VR worlds for social prototyping. Combined with multimodal AI models, they could even form the basis for social robots in the physical world.

Of course, as more believable social AI agents become a reality, there are risks: "One risk is that people will form parasocial relationships with generative agents, even when such relationships may not be appropriate," the paper states. Although users would be aware that the agents are computational entities, they "may anthropomorphize them or attribute human emotions to them."

Developers of such agents should therefore ensure that the agents, or the underlying language models, are value-aligned and do not engage in "behaviors that would be inappropriate given the context, such as reciprocating declarations of love". Lex Fridman would not approve.

The time period for evaluating behavior was still quite short, the team said. In the future, longer experiments would be needed to reveal the capabilities and limitations of the approach.

If you are interested, you can watch the inhabitants of "Smallville" in a demo.
