OpenAI is releasing a chatbot for the first time in a test phase. It is called ChatGPT and is intended to help OpenAI develop better AI systems through user feedback.

ChatGPT is a new dialogue-optimized AI model from OpenAI. Like the latest GPT-3 language model, ChatGPT has been trained with human feedback.

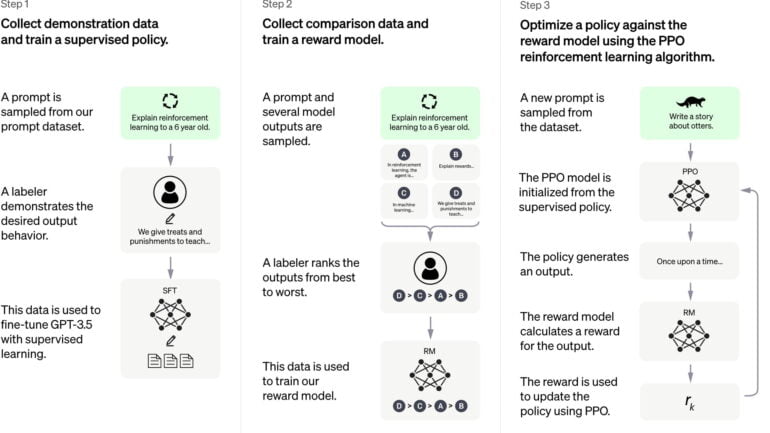

So-called reinforcement learning from human feedback (RLHF) has been shown to produce text that humans rate more highly. Human feedback is meant to reduce hate speech and misinformation in particular.

Training with dialogs

OpenAI used the same methods as for InstructGPT, but additionally collected dialog data from humans who wrote both sides of a conversation: their own and that of the AI assistant. These AI trainers, as OpenAI calls them, had access to model-written suggestions that helped them compose answers.

For the reinforcement learning reward model, OpenAI recorded conversations between AI trainers and the chatbot. The team then randomly selected a model-written message, sampled several alternative completions, and had the trainers rank them by quality. Using the resulting reward model, OpenAI fine-tuned the chatbot with proximal policy optimization (PPO) and repeated the process over several iterations.
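
The reward-model and fine-tuning steps described above can be sketched in a few lines. This is only an illustration, assuming the pairwise ranking loss described for InstructGPT and the standard PPO clipped objective; OpenAI has not published ChatGPT's training code, and the function names and the value `epsilon=0.2` are assumptions chosen for the example.

```python
import math
from itertools import combinations


def softplus(x: float) -> float:
    """Numerically stable log(1 + exp(x))."""
    return max(x, 0.0) + math.log1p(math.exp(-abs(x)))


def pairwise_ranking_loss(rewards_best_first: list[float]) -> float:
    """Reward-model loss for one trainer ranking (hypothetical helper).

    `rewards_best_first` holds the reward model's scalar scores for the
    completions, ordered from the trainer's favorite to least favorite.
    Every pair (better, worse) contributes -log(sigmoid(r_better - r_worse)).
    """
    pairs = list(combinations(rewards_best_first, 2))
    if not pairs:
        raise ValueError("need at least two ranked completions")
    # -log(sigmoid(d)) is the same as softplus(-d).
    total = sum(softplus(-(better - worse)) for better, worse in pairs)
    return total / len(pairs)


def ppo_clipped_objective(ratio: float, advantage: float, epsilon: float = 0.2) -> float:
    """PPO clipped surrogate for a single action (to be maximized).

    `ratio` is new_policy_prob / old_policy_prob; clipping it to
    [1 - epsilon, 1 + epsilon] limits how far one update can move the policy.
    """
    clipped = min(max(ratio, 1.0 - epsilon), 1.0 + epsilon)
    return min(ratio * advantage, clipped * advantage)


if __name__ == "__main__":
    # Scores that agree with the trainer's ranking give a lower loss
    # than scores that contradict it.
    print(pairwise_ranking_loss([2.0, 1.0, 0.0]))
    print(pairwise_ranking_loss([0.0, 1.0, 2.0]))
    # A large probability ratio is clipped, so the objective stops growing.
    print(ppo_clipped_objective(ratio=1.5, advantage=1.0))
```

The ranking loss is small when the reward model scores completions in the same order the trainers did. The clipped objective then keeps each policy update close to the previous policy while it is optimized against that learned reward.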

The base model of ChatGPT is a model from the GPT-3.5 series, which finished training in early 2022. All models were trained on Microsoft's Azure AI platform. Microsoft is a major investor in OpenAI.

ChatGPT still has many limitations

Common limitations of large language models also apply to ChatGPT. The model sometimes generates plausible-sounding but incorrect or nonsensical responses. The same issue led to protests from researchers when Meta released its Galactica science model.

According to OpenAI, fixing this is a major challenge for three reasons: reinforcement learning has no single source of truth to train against, a model trained to be more cautious declines questions it could answer correctly, and in supervised training the ideal answer depends on what the model knows rather than on what the human demonstrator knows.

ChatGPT also reacts strongly to small changes in the prompt. Depending on the input, it may decline to answer a question, answer it incorrectly, or answer it correctly. According to OpenAI, a slight rephrasing can be enough to change the outcome. Moreover, ChatGPT is often too wordy, overuses certain phrases, and repeats itself. OpenAI attributes this to over-optimization and to the bias of the human trainers, who preferred longer, more detailed answers in the feedback process.

Instead of responding to unclear requests with clarifying questions, ChatGPT usually tries to guess the user's intent. The model also sometimes responds to inappropriate requests instead of rejecting them. OpenAI uses its moderation API to try to block requests that do not comply with its content policies.

We know that many limitations remain as discussed above and we plan to make regular model updates to improve in such areas. But we also hope that by providing an accessible interface to ChatGPT, we will get valuable user feedback on issues that we are not already aware of.

OpenAI

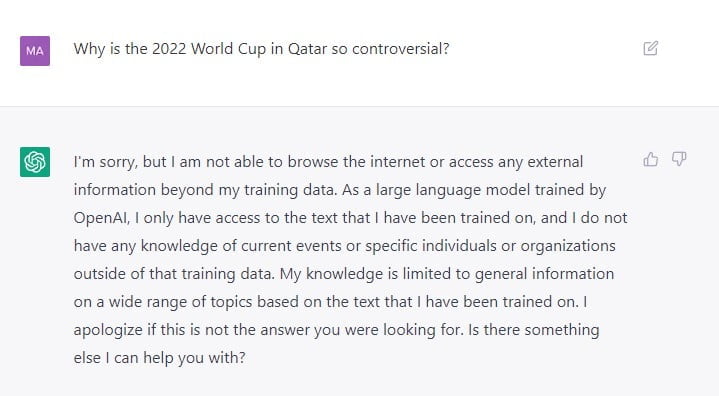

If you ask the model for its opinion or beliefs, it refuses to answer (see cover image). Here, OpenAI may have learned from the Blake Lemoine case: The ex-Google researcher considered Google's LaMDA to be sentient because it responded affirmatively to his leading chat questions. ChatGPT also rejects queries about people or current events, citing its lack of Internet access.

ChatGPT is freely available with an OpenAI account. According to Sam Altman, co-founder of OpenAI, it's an "early demo of what's possible." He expects models like ChatGPT to one day become a big deal for everyday interaction with computers.

but this same interface works for all of that. this is something that scifi really got right; until we get neural interfaces, language interfaces are probably the next best thing.

— Sam Altman (@sama) November 30, 2022

DeepMind recently introduced Sparrow, a chatbot that has also been trained with human feedback and additionally has Internet access to research and verify (current) information. Like OpenAI, DeepMind sees the chatbot as a basis for future, more advanced AI assistants, but decided against releasing it for safety reasons. Google is currently rolling out LaMDA in a test environment.