Xiaomi launches three MiMo AI models to power agents, robots, and voice

Key Points

- Xiaomi has simultaneously released three AI models designed to form a complete platform for AI agents: a large language model, a multimodal model, and a speech synthesis model.

- The flagship MiMo-V2-Pro nearly matches Anthropic's Claude Opus 4.6 on coding and agent tasks at a fraction of the API cost, while the multimodal MiMo-V2-Omni can see, hear, and act autonomously, for example, shopping in a browser or analyzing dashcam footage for hazards.

- Before its official launch, MiMo-V2-Pro ran anonymously on the OpenRouter platform under the codename "Hunter Alpha," topping the rankings for days, with many users mistakenly assuming it was a new model from DeepSeek.

Xiaomi wants to build AI agents that can control software on their own, navigate browsers, and eventually run robots. To get there, the company's in-house MiMo team just shipped three models at once.

The flagship MiMo-V2-Pro runs on a Mixture-of-Experts architecture with over one trillion total parameters, 42 billion of which are active for any given token. That's roughly three times the size of its predecessor, MiMo-V2-Flash, which launched in December 2025. Despite the jump in scale, a hybrid attention mechanism keeps things efficient, letting the model handle context windows up to one million tokens. It also generates multiple tokens at once instead of predicting one token at a time, giving it a noticeable speed boost.
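To illustrate why only a fraction of a Mixture-of-Experts model's parameters are active at once (roughly 4 percent in MiMo-V2-Pro's case, going by the figures above), here is a minimal sketch of generic top-k expert routing. Xiaomi has not described its router in the material covered here, so the function `top_k_route`, the expert count, and the value of `k` are all illustrative assumptions rather than MiMo's actual design.

```python
import math
import random


def top_k_route(gate_logits, k):
    """Pick the k highest-scoring experts and return (index, weight) pairs.

    Weights are a softmax over the selected logits only, so they sum to 1.
    This is a generic textbook scheme, not Xiaomi's documented router.
    """
    top = sorted(range(len(gate_logits)),
                 key=lambda i: gate_logits[i],
                 reverse=True)[:k]
    peak = max(gate_logits[i] for i in top)  # subtract for numerical stability
    exps = [math.exp(gate_logits[i] - peak) for i in top]
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


# Hypothetical sizes chosen for readability only.
NUM_EXPERTS, K = 8, 2

random.seed(0)
logits = [random.gauss(0, 1) for _ in range(NUM_EXPERTS)]

for expert, weight in top_k_route(logits, K):
    print(f"expert {expert} handles this token with weight {weight:.2f}")

print(f"share of experts used per token: {K / NUM_EXPERTS:.0%}")
```

Each token is sent to only `k` experts, so the compute per token scales with the active parameters rather than the total parameter count.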

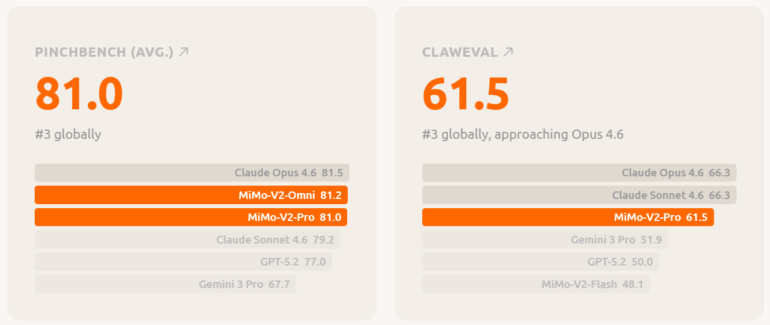

On the Artificial Analysis Intelligence Index, MiMo-V2-Pro lands at seventh place worldwide, placing it behind only GLM-5 and MiniMax-M2.7 among Chinese models. It hits 78 percent on the coding benchmark SWE-bench Verified, just a hair below Claude Sonnet 4.6 (79.6) and within striking distance of Claude Opus 4.6 (80.8). On ClawEval, the agent benchmark, it pulls 81 points, nearly matching Claude Opus 4.6's 81.5, while GPT-5.2 sits at 77.

Xiaomi undercuts Anthropic on pricing by a wide margin

Xiaomi is going after the competition hard on price. According to the platform page, MiMo-V2-Pro costs one dollar per million input tokens and three dollars per million output tokens for context lengths up to 256,000 tokens. For comparison, Claude Sonnet 4.6 runs three dollars for input and 15 dollars for output, and Claude Opus 4.6 goes for five and 25 dollars. Xiaomi is also waiving all cache writing costs for now.
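To make the price gap concrete, the short sketch below applies the per-million-token rates quoted above to a single request. The workload size (200,000 input tokens and 20,000 output tokens) is a hypothetical example, the helper `request_cost` is not part of any vendor SDK, and the calculation ignores caching, discounts, and any higher rates for longer contexts.

```python
# USD per million tokens as (input, output), taken from the figures quoted above.
PRICES = {
    "MiMo-V2-Pro": (1.0, 3.0),
    "Claude Sonnet 4.6": (3.0, 15.0),
    "Claude Opus 4.6": (5.0, 25.0),
}


def request_cost(model, input_tokens, output_tokens):
    """Return the cost in USD of one request at the listed rates."""
    in_price, out_price = PRICES[model]
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000


# Hypothetical workload: a long coding prompt with a moderate-length answer.
INPUT_TOKENS, OUTPUT_TOKENS = 200_000, 20_000

for model in PRICES:
    cost = request_cost(model, INPUT_TOKENS, OUTPUT_TOKENS)
    print(f"{model}: ${cost:.2f}")
```

For this assumed workload, the request comes to about $0.26 on MiMo-V2-Pro, $0.90 on Claude Sonnet 4.6, and $1.50 on Claude Opus 4.6.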

The model is live through a public API. For the launch, Xiaomi has partnered with five agent frameworks: OpenClaw, OpenCode, KiloCode, Blackbox, and Cline. Developers worldwide get free API access for one week.

MiMo-V2-Omni sees, hears, and acts in a single model

MiMo-V2-Omni folds image, video, and audio encoders into a shared backbone. The model can perceive and act on what it takes in: it natively supports structured tool calls, executes functions, and navigates user interfaces on its own.

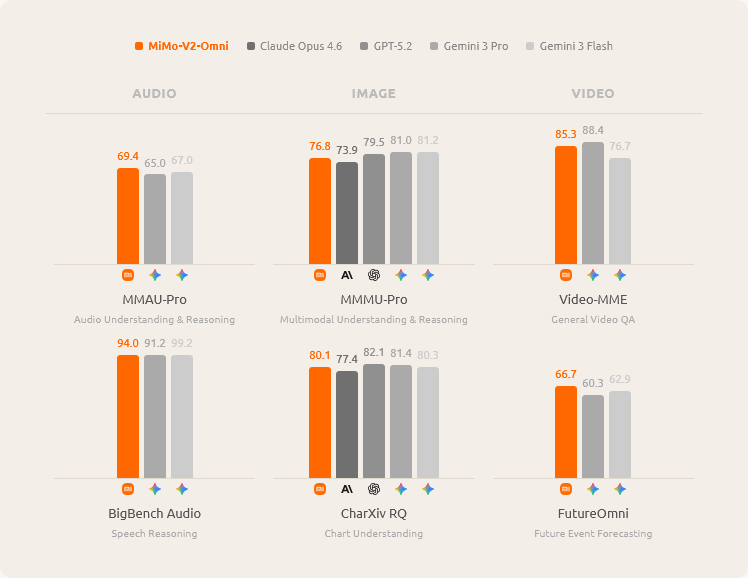

Xiaomi says MiMo-V2-Omni beats Gemini 3 Pro on audio and can process continuous recordings of over ten hours. On images (MMMU-Pro: 76.8), it edges out Claude Opus 4.6 (73.9). The agent benchmarks tell a different story, though: on ClawEval, the Omni model scores just 54.8, well behind Claude Opus 4.6 (66.3) and GPT-5.2 (59.6). It did outperform both Gemini 3 Pro and GPT-5.2 on the MM-BrowserComp web navigation benchmark.

For a demo, Xiaomi fed the model dashcam footage and had it flag pedestrians, oncoming vehicles, and bottlenecks as potential hazards in real time. In another scenario, MiMo-V2-Omni opened a browser on its own, looked up product reviews on the Chinese platform Xiaohongshu, compared prices on JD.com, haggled for discounts with customer service via chat, and completed the purchase.

A separate demo showed the model creating multimedia content, debugging the code behind it, and publishing the result to TikTok through the browser, all without human input. In every case, MiMo-V2-Omni handles the decision-making while the open-source framework OpenClaw takes care of the actual clicks and file operations.

MiMo-V2-TTS generates emotional speech from natural language descriptions

Xiaomi says its MiMo-V2-TTS speech synthesis model was trained on over 100 million hours of speech data. It breaks speech down into several parallel layers of discrete units, giving it finer control over sound, rhythm, and emotion than standard TTS systems.

The key difference: instead of picking an emotion from a dropdown, users describe the voice they want in plain language. "Sleepy, just woken up, slightly hoarse" sounds different from "angry, but trying to stay calm." The model also generates paralinguistic sounds like coughs, hesitations, sighs, and laughter as part of the output rather than splicing in audio clips after the fact.

According to Xiaomi, MiMo-V2-TTS is the only commercially available TTS API that natively handles both speech and singing in the same model. It reads typographic cues like capital letters or repeated characters as signals for emphasis and rhythm, so that "THIS IS IMPORTANT" comes out with real punch, not simply higher volume. Even without any style instructions, the model picks up the right tone directly from the text.

Competitive benchmarks, but Xiaomi still has ground to cover

Shipping three specialized models at once sends a clear signal: Xiaomi wants to build a full-stack platform for AI agents. The benchmarks show the models going toe-to-toe with Anthropic and OpenAI in some areas while still falling short in others. On general agent tasks in particular, MiMo-V2-Pro still has work to do before it catches Claude Opus 4.6.

Next up, the MiMo team says it's working on long-term planning across hours and days, real-time streaming, coordinated multi-agent systems, and robotics. "We believe the path to general intelligence runs through the real world," the team writes. "A model that only reads text lives in a library. A model that sees, hears, reasons, and acts lives in the world."

The "Hunter Alpha" mystery - it wasn't DeepSeek

Before Xiaomi officially pulled the curtain back, MiMo-V2-Pro was listed anonymously on the API platform OpenRouter under the codename "Hunter Alpha." Xiaomi says usage climbed steadily: the model topped the daily rankings for several days straight and racked up over one trillion tokens total. The most popular use case by far was coding.

Many users had guessed Hunter Alpha was actually DeepSeek V4. But DeepSeek is still a ways out: reports say the next major DeepSeek model has been delayed due to its growing size.

Other Chinese AI labs aren't sitting still, though. Zhipu AI recently shipped GLM-5, an open-source model with 744 billion parameters built to compete with Claude Opus 4.5 and GPT-5.2 on coding and agent tasks. Moonshot AI's Kimi K2.5 takes a different approach with swarms of agents working in parallel, and Alibaba has been expanding its Qwen 3.5 lineup.
