The Pentagon-OpenAI-Anthropic fallout comes down to three words: "all lawful use"

Key Points

- OpenAI has disclosed the details of its agreement with the US Department of Defense, defining three red lines: no domestic mass surveillance, no autonomous weapons systems, and no automated high-risk decisions.

- The company took over the deal after Anthropic rejected it and the Trump administration ordered federal agencies to stop using Anthropic's technology, making OpenAI the Pentagon's new AI partner.

- The conflict centers on the phrase "all lawful use": OpenAI accepted that the Pentagon could use its models for "all lawful use" and pointed to technical safeguards, while Anthropic rejected precisely this clause, arguing that existing laws contain loopholes that could be exploited.

After signing a deal with the Department of War, OpenAI is trying to build trust by publishing the contract details. So far, it's not working.

A few days ago, President Trump ordered federal agencies to stop using Anthropic's technology after the company refused to budge on bans against US mass surveillance and autonomous weapons.

OpenAI picked up the deal within hours and says it's drawing the same red lines. But why the Pentagon accepted those restrictions from OpenAI and not from Anthropic is the question everyone is now trying to answer.

The backlash for OpenAI has been swift and measurable: users rallied behind Anthropic, pushing the Claude app to number 1 in the App Store ahead of ChatGPT. Feeling the pressure, OpenAI published a detailed blog post laying out the contract details and held an AMA on X with Sam Altman and other OpenAI employees.

"All lawful use" leaves plenty of wiggle room

In its blog post, OpenAI lays out the specifics of its agreement with the Department of War and describes three red lines: no domestic mass surveillance, no autonomous weapons systems, and no automated high-risk decisions.

The sticking point is the phrase "all lawful purposes." OpenAI agreed to let the Pentagon use its models for "all lawful purposes" and negotiated technical safeguards in return. Anthropic rejected that exact wording because existing laws might leave loopholes.

In a CBS interview, Anthropic CEO Dario Amodei gave a concrete example: the US government could simply buy commercial datasets and analyze them with AI without it technically counting as "domestic mass surveillance" under current law. "That actually isn't illegal. It was just never useful before the era of AI," Amodei said.

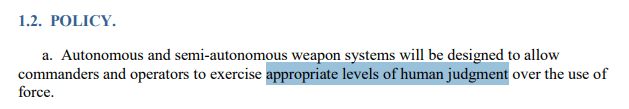

Autonomous weapons rules hinge on vague language about human control

OpenAI writes that its AI may not "independently direct autonomous weapons in any case where law, regulation, or Department policy requires human control, nor will it be used to assume other high-stakes decisions that require approval by a human decisionmaker under the same authorities."

But the relevant DoW directive doesn't actually require human approval before the use of force; it only calls for an "appropriate level of human judgment over the use of force." What counts as "appropriate" is left undefined. Anthropic specifically asked for proper human oversight, which the DoW's current phrasing doesn't guarantee.

OpenAI's technical argument is that its cloud-only architecture rules out "fully" autonomous weapons, since those would require edge deployment. But a drone system that pulls targeting decisions from an OpenAI model via a server connection wouldn't qualify as "edge deployment," and it wouldn't guarantee human control either. Talking to a server isn't the same as having a human in the decision chain.

Anthropic CEO Dario Amodei has also repeatedly said his company isn't opposed to fully autonomous weapons in principle. But he argues that current language model technology simply isn't reliable enough for that kind of use and therefore poses a risk to the US population.

OpenAI employees push back on criticism

Boaz Barak, a computer science professor at Harvard and part-time OpenAI employee, writes: "When we say that domestic mass surveillance and autonomous lethal weapons are red lines for us, we mean what we say, and are not looking for loopholes."

On the commercial dataset issue Amodei raised, Barak says the Department of War will share more details in the "coming days." Still, Barak admits: "It is true that the devil is in the details, and I and others will be looking on how this deal shapes up in practice."

The argument from Katrina Mulligan, who leads national security partnerships at OpenAI, is less convincing. She argues you can't distrust the government's interpretation of laws and contracts while also believing Anthropic's contractual restrictions would have held up.

But that misses the point. Anthropic wasn't questioning whether contracts mean anything. It was challenging the approach of securing red lines through "all lawful use" language and references to existing law. The company wanted explicit exclusions to narrow the room for interpretation, regardless of how "lawful" gets defined in individual cases or which directive happens to apply.

Altman's de-escalation pitch doesn't hold up

Altman claims he wanted to de-escalate with the deal and create common ground for all AI labs. He says he doesn't know exactly what Anthropic was offered: "I don't know the details of what they received. If they received the same offer we did in the end, then yes I think they should have done it." He says he asked the Pentagon to offer the same terms to every company.

But the stronger move would have been for OpenAI and Google to draw the same red lines alongside Anthropic. Elon Musk's xAI would probably have been the odd one out, but it doesn't offer the best technology anyway. A collective no from the leading AI labs would have given the Pentagon far less room to maneuver. By going it alone, OpenAI undercut Anthropic's position and weakened the industry's collective bargaining power.

Whether private companies should have that kind of veto power over military decisions in a democracy is a fair question. But the current political climate in the US, where the government has repeatedly shown it doesn't care much about legal guardrails when push comes to shove, is only going to deepen mistrust of OpenAI's contract guarantees.
