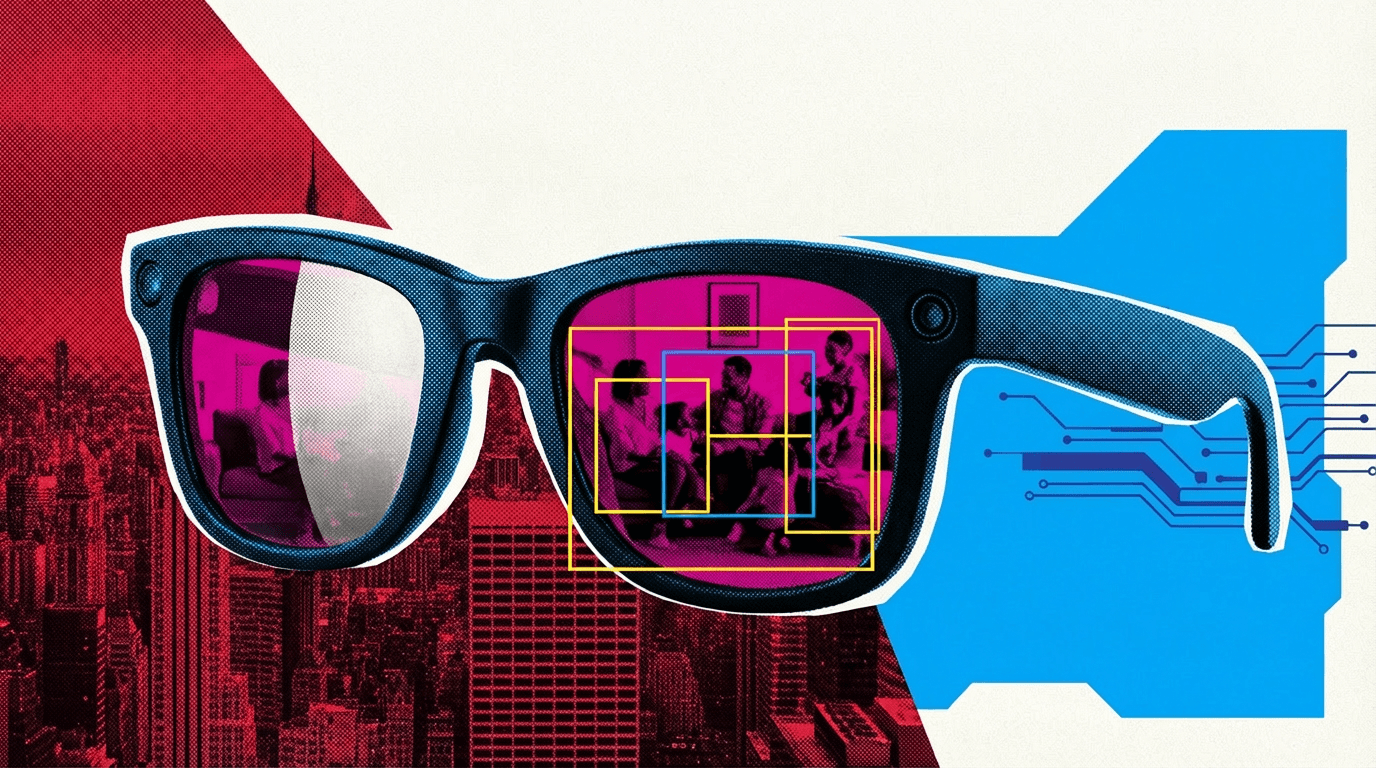

Meta sends private AI glasses footage to Kenya with few safeguards - and Europe's privacy regulators may come knocking

Key Points

- Meta has video data from its Ray-Ban AI glasses annotated by data workers in Nairobi. According to a Swedish investigation, they see nude scenes, sex videos, and bank details.

- Workers say the automatic face anonymization doesn't work reliably. Privacy organization NOYB also points out that users often don't realize the camera is recording when they talk to the AI assistant.

- Sama, the contracted data services company, has a troubled track record from previous work for OpenAI and Meta, where Kenyan workers had to label disturbing content for roughly $2 per hour.

To improve the AI in Meta's smart glasses, data workers in Nairobi review private recordings. These include nude scenes, sex videos, and banking information.

Meta markets its Ray-Ban glasses on its website as a product built "with your privacy in mind," giving wearers control over what gets shared and when.

But the terms of service for the glasses' AI features tell a different story. While the privacy policy states that voice recordings are only stored for product improvements with active consent, the AI assistant automatically processes speech, text, images, and sometimes video to function - and that data can be shared with third parties. Users can't turn this processing off.

According to an investigation by Svenska Dagbladet and Göteborgs-Posten, video data from the AI glasses ends up with data annotators at Sama, a data services company contracted by Meta, in Nairobi, Kenya. Workers there train Meta's AI systems by labeling, describing, and categorizing objects in images and videos.

Workers report seeing nudity, bank cards, and sex scenes

What shows up on their screens goes far beyond Instagram Reels and family videos. Multiple workers told the Swedish journalists about video clips showing people walking out of bathrooms naked, changing clothes, or having sex while the glasses were running. "We see everything – from living rooms to naked bodies. Meta has that type of content in its databases," one worker told the Swedish outlets.

Other recordings show accidentally filmed bank cards or people watching pornography while wearing the glasses. The work also includes reviewing transcriptions - annotators check whether the AI assistant responded correctly. In doing so, they say they come across chats about crimes, protests, and sexual content. "It is not just greetings, it can be very dark things as well," one worker told the investigators.

The workers have signed extensive non-disclosure agreements. Cameras are everywhere in the offices, and personal phones or recording devices are banned. Anyone who asks questions risks losing their job - and with it, often their only way out of poverty, according to the workers.

AI training needs human eyes, and that's becoming a problem

For Meta's glasses to recognize objects, understand speech, and interpret scenes, humans have to process the raw data first.

Former Meta employees in the US confirmed to the Swedish journalists that sensitive data is not supposed to be used for AI training. Faces in annotation data are automatically blurred.

But the data annotators in Kenya say the anonymization doesn't always work. Faces that should be obscured are sometimes visible. "The algorithms sometimes miss. Especially in difficult lighting conditions, certain faces and bodies become visible," a former Meta employee said.

Kleanthi Sardeli, a data privacy lawyer at the Vienna-based organization None Of Your Business (NOYB), which has filed multiple lawsuits against Meta, sees a clear transparency problem: users may not realize the camera is recording when they talk to the AI assistant. In her view, the nature of the videos processed by Sama is a strong indicator of this.

"If this happens in Europe, both transparency and a legal basis for the processing are lacking," she told the Swedish outlets. Explicit consent should be required for AI training, she said. "Once the material has been fed into the models, the user in practice loses control over how it is used."

There is currently no EU adequacy decision for Kenya. Dialogue between the EU and Kenya only began in May 2024. Meta states in its privacy policy that user data is transferred, stored, and processed globally because "Meta is a company that operates globally." Petra Wierup, a lawyer at the Swedish data protection authority IMY, made clear that if Meta is the data controller under the GDPR, protections must extend to subcontractors in third countries and cannot be weakened.

Sama has a troubled track record

Sama is no stranger to controversy. Back in 2021, the company labeled tens of thousands of text passages containing depictions of sexual abuse, violence, and hate speech on behalf of OpenAI. According to a TIME investigation, Kenyan workers were paid roughly $1.32 to $2 per hour at the time. One worker described the experience as "torture." The company also helped label data for autonomous vehicles and was involved in content moderation on Facebook.

After further reports exposed worker trauma and alleged union-busting at Sama's Nairobi office, the company ended its content moderation work for Meta in 2023 and shifted its focus to computer vision data annotation - the exact type of work now tied to the AI glasses.

The annotation work itself is increasingly AI-assisted. For training its computer vision model SAM 3, Meta developed a "Data Engine" where AI models first generate segmentation suggestions, which are then reviewed and corrected by human and AI annotators. The process is designed to speed up annotation significantly.

Chinese AI companies are also relying on external data workers in Kenya.
