Google's new Gemini API Agent Skill patches the knowledge gap AI models have with their own SDKs

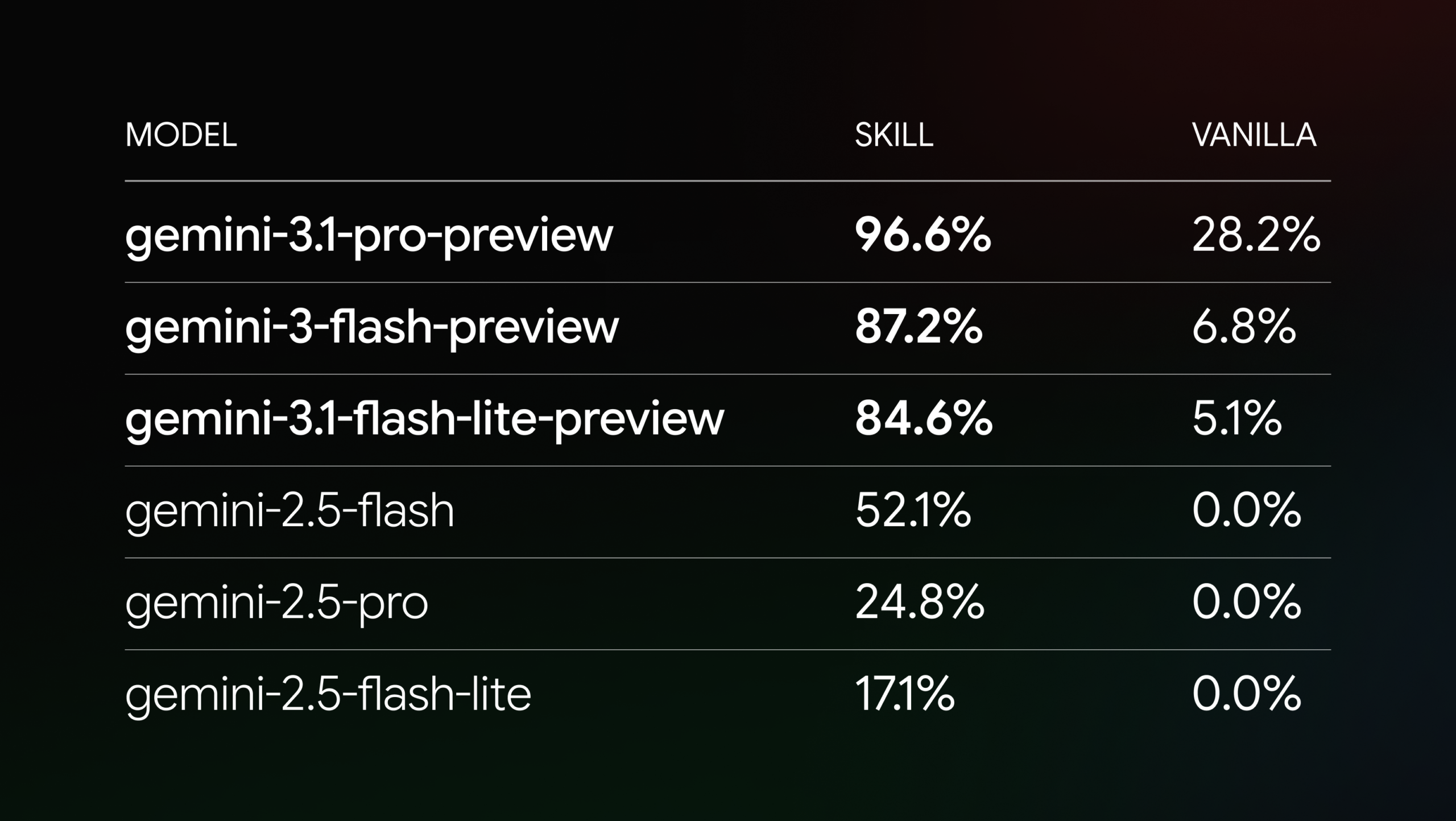

Google has built an "Agent Skill" for the Gemini API that tackles a fundamental problem with AI coding assistants: once trained, language models know nothing about later updates to their own SDKs or about current best practices. The new skill feeds coding agents up-to-date information about current models, SDKs, and sample code. In tests across 117 tasks, the top-performing model (Gemini 3.1 Pro Preview) jumped from a success rate of 28.2 percent to 96.6 percent. Skills were first introduced late last year by Anthropic and quickly adopted by other AI companies.

Older 2.5 models saw much smaller improvements, which Google attributes to their weaker reasoning abilities. A Vercel study suggests that giving models direct instructions through AGENTS.md files could be even more effective. Google is also exploring other approaches, including MCP services. The skill is available on GitHub.
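To put the headline numbers in perspective, the reported success rates can be converted back into approximate task counts. This is only a back-of-the-envelope sketch: it assumes each rate is a simple share of passed tasks out of the 117 tested, which the article does not confirm, and the function name is made up for illustration.

```python
# Assumption: success rate = passed tasks / total tasks, with no weighting.
TOTAL_TASKS = 117


def implied_passes(success_rate_percent: float, total: int = TOTAL_TASKS) -> int:
    """Return the whole number of passed tasks closest to the given rate."""
    return round(success_rate_percent / 100 * total)


before = implied_passes(28.2)  # Gemini 3.1 Pro Preview without the skill
after = implied_passes(96.6)   # Gemini 3.1 Pro Preview with the skill

print(f"without skill: about {before} of {TOTAL_TASKS} tasks")
print(f"with skill:    about {after} of {TOTAL_TASKS} tasks")
print(f"difference:    about {after - before} additional tasks solved")
```

Under that assumption, the jump corresponds to roughly 33 solved tasks without the skill and roughly 113 with it.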
