Claude Mythos is a wake-up call for Europe's AI safety apparatus

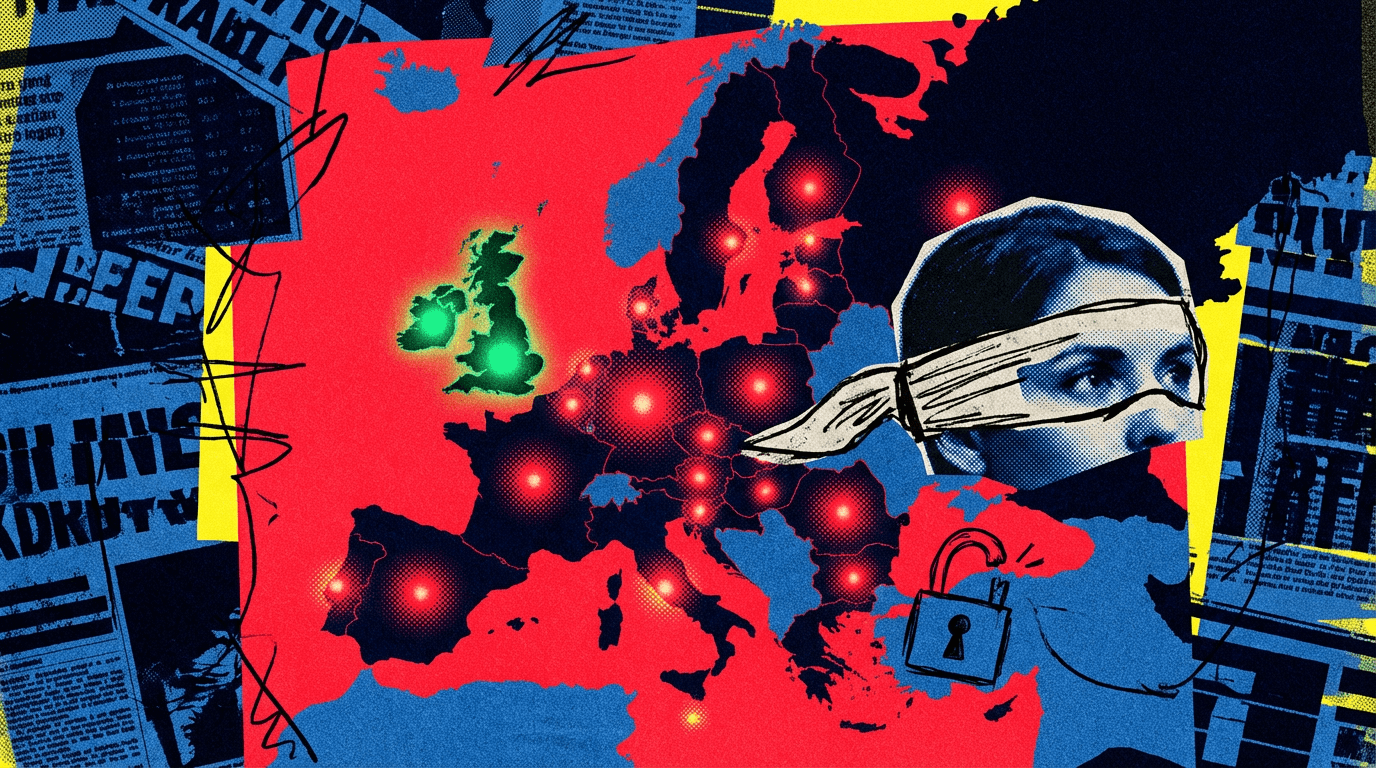

Anthropic is restricting access to Claude Mythos, an AI model it says can find security vulnerabilities better than most humans. European authorities have almost no visibility into the system, while the UK is already running its own tests. The situation exposes a deeper structural problem.

Anthropic announced last week that it would limit access to its latest AI model, Claude Mythos Preview, to a select group of technology partners. The company is giving a preview version to a handful of Big Tech and cybersecurity firms because it believes the new model could pose unprecedented cybersecurity risks and increase the likelihood of large-scale AI-powered cyberattacks.

Under a program called "Project Glasswing," Anthropic handpicked 12 US tech companies as its inner circle, including Apple, Microsoft, and Amazon. Another 40 organizations also received access, though Anthropic didn't name them.

European regulators are barely part of the picture.

Most European cyber agencies had little contact with Anthropic

POLITICO says it spoke with officials from eight national European cybersecurity agencies. Only Germany's BSI confirmed it had opened talks with Anthropic about Mythos, though it has not yet been able to test the model directly. Other European government institutions apparently had only limited visibility.

BSI chief Claudia Plattner called it an urgent question whether such powerful tools would eventually be available on the open market. The question has profound implications for national and European security and sovereignty, she told POLITICO. While the BSI is in active dialogue with Anthropic, those conversations have so far provided meaningful insight into how the model works but no direct access to test it.

The EU's cybersecurity agency ENISA declined to comment on whether it is in contact with Anthropic. The EU Commission's AI Office does maintain a dialogue with Anthropic as part of the EU Code of Practice for AI models. Whether Mythos is part of those conversations, and whether the office has received access, went unanswered.

The UK, by contrast, is in an entirely different position. UK AI Minister Kanishka Narayan confirmed that the British AI Security Institute (AISI) recently tested Mythos and had already taken action based on its findings. On Monday, the institute published its assessment. The results strongly suggest that Mythos Preview represents a significant leap over previous frontier models in a landscape where cyber capabilities were already advancing rapidly. The AISI did note, however, that it couldn't say with certainty whether Mythos could successfully attack well-defended systems.

Without access, Europe can't assess the risks

Europe's lack of access to Mythos isn't just a governance issue. Without solid technical details, regulators can only do so much to assess risks and prioritize defenses.

Daniel Privitera, founder of the Berlin-based AI nonprofit KIRA, told POLITICO that Mythos offers an early taste of how critical access to frontier AI capabilities will be in the years ahead. Europe currently has no plan for securing that access, he said.

AI pioneer Yoshua Bengio told POLITICO he found it deeply concerning that tech companies, not regulators, are deciding how to handle these risks. It shows how important it is to create pathways for governments or third parties to review the technology, he said.

Former European Parliament member Marietje Schaake, who helped shape the EU's Code of Practice for AI developers as an advisor to the EU Commission, also called it concerning that models with far-reaching impact are controlled by a private company. Now would be a good time to agree on disclosure rules and oversight mechanisms, she said.

Independent AI researcher Laura Caroli, who was involved in drafting the EU AI Act, told POLITICO that the EU has been sidelined because the model hasn't been released on the market. If it were, Anthropic would face binding obligations under EU law. That said, according to the EU guidelines, even internal use of an AI model counts as placing it on the market if that use is essential to providing a product or service in the EU or affects the rights of individuals in the Union.

Thomas Regnier, the EU Commission's digital spokesperson, told POLITICO that the Commission is currently examining possible implications under EU legislation. Under the AI Act, providers like Anthropic must address cyber risks posed by their models, and the Cyber Resilience Act sets mandatory cybersecurity requirements for all products with digital components sold in the EU market.

Why Europe is locked out - and what that means

Is Europe's lack of access to Mythos a symptom of overregulation? The reality is more complicated. Anthropic signed the EU Code of Practice for general-purpose AI models, along with Amazon, Google, IBM, Microsoft, and OpenAI. A lack of willingness to cooperate isn't the issue.

The real problem is that Europe lacks a counterpart that can operate on the same technical level. The UK's AI Security Institute, founded in 2023 as the Frontier AI Taskforce and renamed in 2025, has 100 million pounds in public funding. In a remarkably short time, it has gained access to the AI industry by running safety tests and recruiting high-profile researchers from OpenAI and Google DeepMind. It has tested at least 16 models so far, including three frontier models before their public launch. With more than 100 technical staff and the right relationships, it has the credibility to get early access to cutting-edge models.

At the EU level, the AI Office has more than 125 staff and its own safety unit. But as recently as last fall, the office was struggling with major hiring difficulties, according to Transformer News. Key leadership positions were unfilled. Rigid pay structures left little room to attract private-sector talent, according to EU staffing expert Andras Baneth. Internal bureaucracy stretched the hiring process over months, and candidates took other jobs in the meantime. The UK equivalent, by comparison, can pay above standard rates.

Some EU member states have built their own structures in parallel. France launched INESIA in early 2025, an institute for AI evaluation and safety. Spain monitors safe AI deployment through AESIA.

The handling of Mythos therefore looks less like evidence of overregulation and more like a reflection of structural weaknesses: Europe still lags behind in compute capacity, growth capital, homegrown frontier labs, and security-focused evaluation. All of that limits the pool of talent, compute, and influence needed for this kind of work. There have been several wake-up calls like this since GPT-3. To its credit, Europe is no longer in full denial mode. Whether that's enough when the next model of this caliber comes not from Anthropic but from, say, DeepSeek is a different question entirely.
