ARC-AGI-3 offers $2M to any AI that matches untrained humans, yet every frontier model scores below 1%

The new ARC-AGI-3 benchmark drops AI systems into interactive game environments that humans solve with ease. No frontier model breaks the 1 percent mark because the benchmark strips away their biggest advantages.

The ARC Prize Foundation has released ARC-AGI-3, a new benchmark that tests AI systems in interactive, turn-based game environments. Unlike its predecessors, which had models derive static patterns from input-output pairs, ARC-AGI-3 requires AI agents to explore environments on their own, form hypotheses, figure out objectives, and execute plans - all without any instructions or hints about the goal. An early version was previewed in summer 2025.

According to the accompanying technical report, all 135 environments were solved by humans with no prior knowledge and no instructions. Every frontier model tested, meanwhile, scored below 1 percent: Gemini 3.1 Pro Preview hit 0.37 percent, GPT 5.4 reached 0.26 percent, Opus 4.6 managed 0.25 percent, and Grok-4.20 scored 0.00 percent.

One important caveat: machines and humans aren't measured on the same scale.

Squared efficiency penalizes brute force, not just wrong answers

ARC-AGI-3 uses a metric called RHAE (Relative Human Action Efficiency). Instead of simply checking whether a model solves a task, it measures how many actions the model needs compared to a human. Only interactions that actually change the game state count as actions, but internal computation steps or reasoning chains don't factor in. This makes scores from ARC-AGI-1/2 and ARC-AGI-3 incomparable.

The human baseline is the second-best performer out of ten first-time players per environment. According to the scoring documentation, the top player is deliberately excluded to filter out outlier performances while still maintaining a realistic reference for competent human play. Efficiency is calculated per level using a squared formula: (human actions / AI actions)^2. So if a human needs 10 actions and the AI needs 100, the AI doesn't get 10 percent - it gets just 1 percent. This squared penalty is designed to devalue brute-force strategies. Being faster than the human earns no bonus either, since the per-level score caps at 1.0. Later levels carry more weight because they require deeper understanding. For cost reasons, the team plans to limit the maximum number of attempts for agents to five times the human attempt count.

Custom scaffolding helps on known tasks but proves nothing about general intelligence

The official leaderboard only tests models via API without custom-built scaffolding (harnesses), using an identical system prompt for all.

The ARC Prize Foundation explains this decision in the paper by arguing that the benchmark isn't meant to measure the human intelligence that went into building a task-specific system - it's meant to measure the general intelligence of the AI model itself. Future AGI systems shouldn't need external help to tackle new tasks.

Testing with Duke University revealed a striking bimodal pattern: Opus 4.6 scored 97.1 percent on a known environment using a hand-crafted harness, but dropped to 0 percent on an unfamiliar one. This shows that perceiving the game environment and the API format aren't the limiting factors. Rather, custom-built strategies simply don't transfer to unseen environments. Chollet argues on X that true AGI shouldn't need task-specific human guidance when ordinary humans can handle the tasks without any help.

There is still a separate community leaderboard for harness-driven results, where scores are self-reported. The Foundation explicitly warns against interpreting these results as evidence of AGI progress. However, it expects the best ideas from harness research to eventually make their way into the models themselves - much like chain-of-thought prompting started as an external technique and eventually became a built-in feature in OpenAI's o1.

Chollet addresses this objection on X, pushing back on the idea that the low scores are simply an artifact of missing harnesses and a basic prompt. The G in AGI stands for "general," he argues - and general intelligence doesn't mean being specifically trained for a wide range of tasks. It means facing any new task and solving it independently. If ordinary humans can do that without instructions or tools, there's no reason AGI should need special handholding and hand-crafted instructions. The way Chollet sees it, there are only two positions: either you believe AGI is possible, in which case a true AGI system will solve ARC-AGI-3 because normal humans can too - or you believe AI is merely an automation tool that will always require human intervention for every new task.

Recordings of the latest model tests can be viewed on the ARC Prize website.

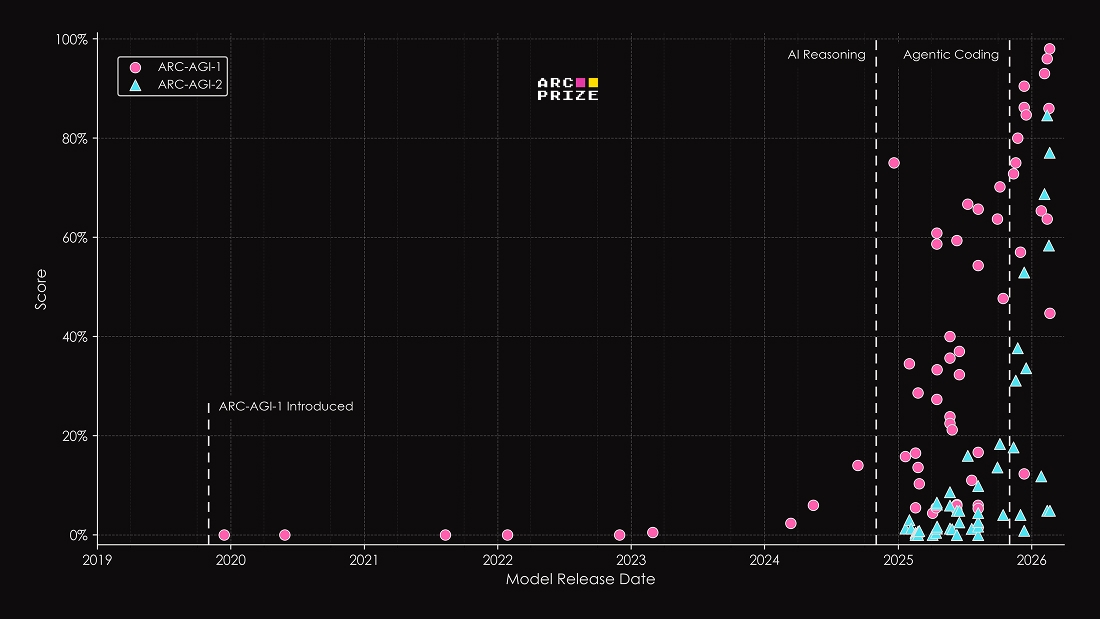

Previous ARC benchmarks predicted key AI breakthroughs before they happened

The predecessor benchmarks have repeatedly flagged turning points in AI development over the past few years. ARC-AGI-1 was likely the first benchmark to precisely identify the breakthrough of frontier AI reasoning systems like OpenAI's o3 at a time when other benchmarks were already saturated. ARC-AGI-2 then captured the rapid progress of modern reasoning models and the rise of scaffolding, which has since been deployed in production coding tools like Claude Code and Codex. By now, both benchmarks are effectively saturated, largely thanks to these scaffolding approaches.

ARC-AGI-3 aims to measure the next open gap: agentic intelligence - the ability to navigate completely unfamiliar environments without specific training. The fact that every current frontier model scores below 1 percent shows, according to the creators, just how far AI systems remain from human-like adaptability.

The ARC Prize Foundation has made 25 environments publicly available to play and is running the ARC Prize 2026 on Kaggle with $2 million in prize money.

AI News Without the Hype – Curated by Humans

As a THE DECODER subscriber, you get ad-free reading, our weekly AI newsletter, the exclusive "AI Radar" Frontier Report 6× per year, access to comments, and our complete archive.

Subscribe nowAI news without the hype

Curated by humans.

- Over 20 percent launch discount.

- Read without distractions – no Google ads.

- Access to comments and community discussions.

- Weekly AI newsletter.

- 6 times a year: “AI Radar” – deep dives on key AI topics.

- Up to 25 % off on KI Pro online events.

- Access to our full ten-year archive.

- Get the latest AI news from The Decoder.