95% of UK students now use AI and their experiences couldn't be more divided

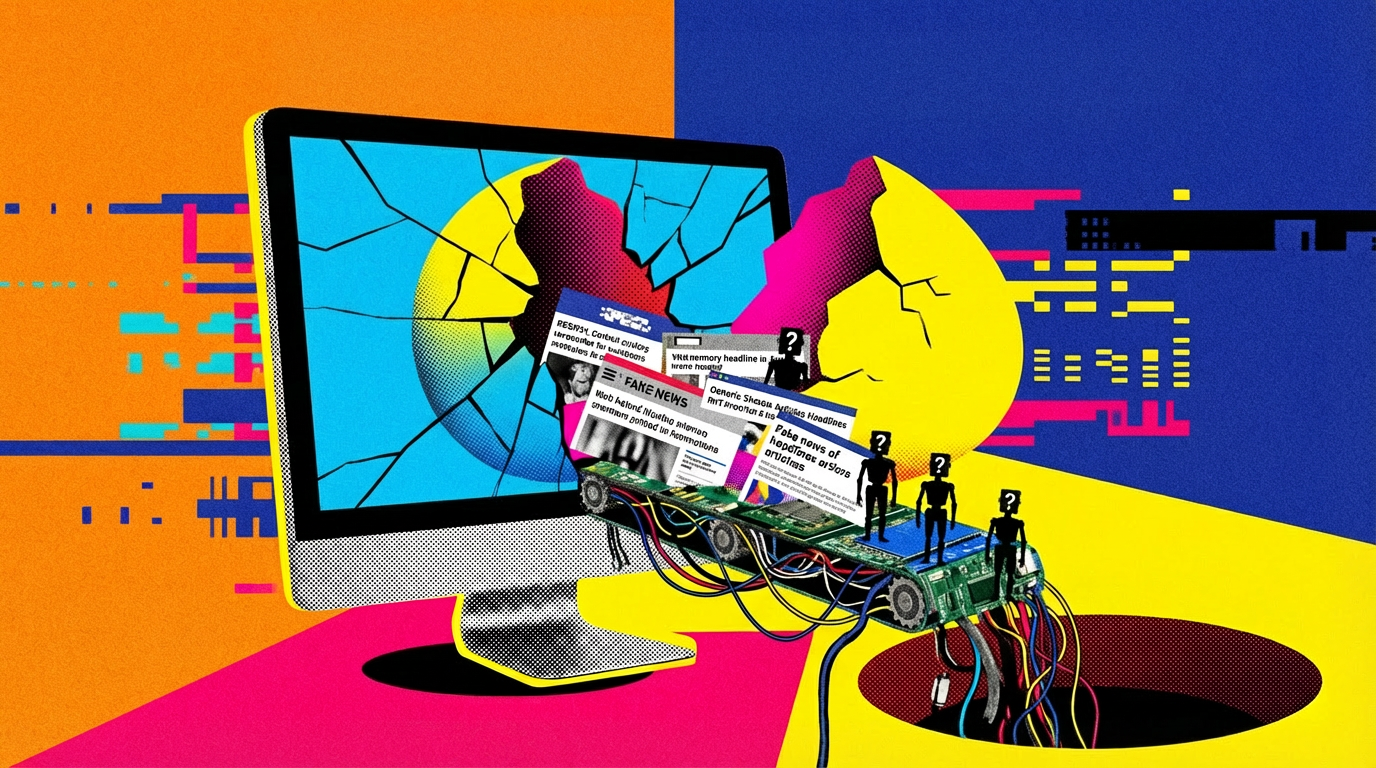

Ninety-five percent of British students use generative AI. But while some say it deepens their learning, others worry it’s replacing their ability to think for themselves. A new survey reveals a student body torn between enthusiasm and overwhelm, at universities that aren’t keeping up.