LLM text data is drying up, but Meta points to unlabeled video as the next massive training frontier

Key Points

- A single AI model can learn text, images, and video simultaneously from scratch without the different modalities interfering with each other, according to a study by Meta FAIR and New York University.

- The findings suggest that the conventional approach of using two separate visual encoders for image understanding and image generation is unnecessary, as one unified model can handle both tasks effectively.

- However, the researchers found that vision and language scale in fundamentally different ways: language capabilities grow in a balanced relationship between model size and data volume, while visual capabilities demand a disproportionately large amount of training data.

A research team from Meta FAIR and New York University systematically investigated how multimodal AI models can be trained from scratch. Their findings challenge several widely held beliefs about how these models should be built.

Language models have defined the foundation model era. But text, the researchers argue in their paper "Beyond Language Modeling," is ultimately a lossy compression of reality. Drawing on Plato's allegory of the cave, they suggest that language models have learned to describe the shadows on the wall without ever seeing the objects casting them. There's also a practical problem: high-quality text data is finite and quickly running out.

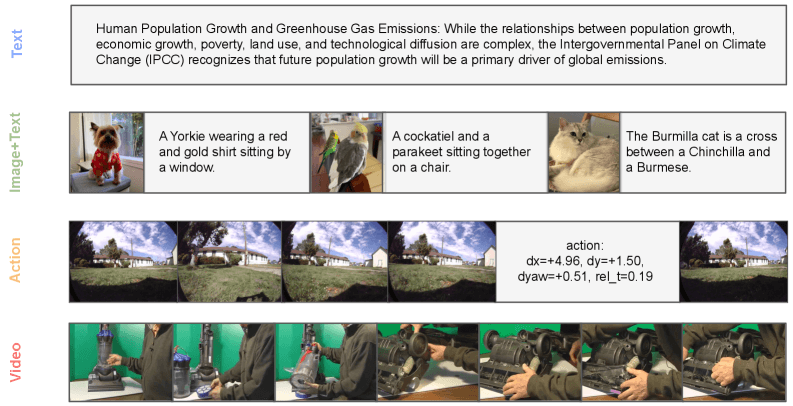

The study, which involved Yann LeCun before he left the company, trains a single model entirely from scratch. It pairs standard word-by-word prediction for language with a diffusion method called flow matching for visual data, training on text, video, image-text pairs, and action-related videos. By not building on top of an existing language model, the researchers avoid contaminating their results with previously learned knowledge.

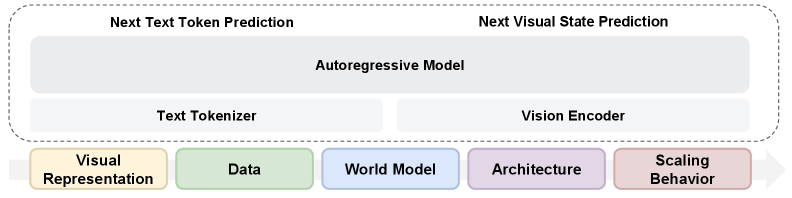

A single visual encoder can handle both understanding and generation

Previous approaches like Janus or BAGEL use separate visual encoders for image understanding and image generation. The Meta researchers found that this separation is unnecessary.

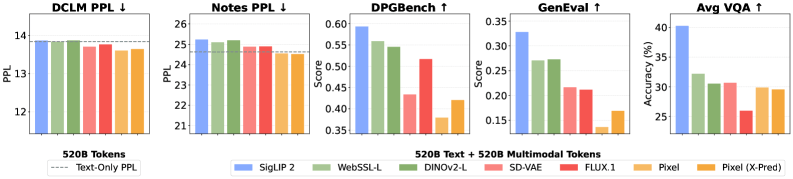

A representation autoencoder (RAE) built on the SigLIP 2 image model outperforms conventional VAE encoders at both image generation and visual comprehension, according to the study. Language performance stays on par with a text-only model.

Rather than maintaining two separate paths, one encoder handles both tasks, dramatically simplifying the architecture. This challenges the common assumption that vision and language inevitably compete inside a model. Raw video without text annotations doesn't hurt language capabilities at all, according to the study. On a validation dataset, the model trained on both text and video actually edges out the text-only baseline.

The researchers trace the slight degradation that shows up with image-text pairs to the distribution gap between normal training text and image captions, not to the visual modality itself.

The synergy is notable. Twenty billion VQA tokens (visual question answering data) supplemented by 80 billion tokens from video, image-text pairs (MetaCLIP), or plain text each outperform a model trained on 100 billion pure VQA tokens.

World modeling shows up without explicit training

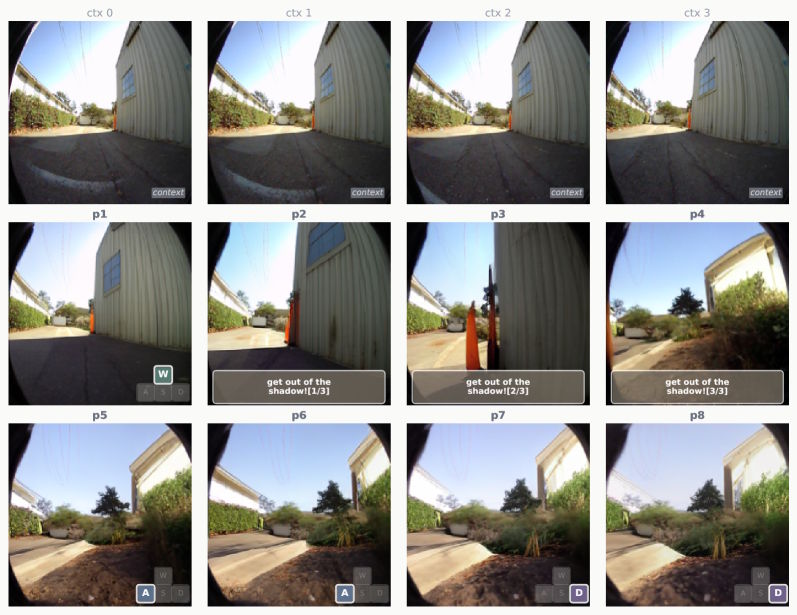

The researchers also tested whether their model could learn to predict visual states. Given a current image and a navigation instruction, the model has to predict the next visual state. Actions are encoded directly as text, so no architectural changes are needed.

According to the researchers, world modeling ability emerges mainly from general multimodal training, not from task-specific navigation data. The model hits competitive performance with just one percent of task-specific data. It can even follow natural language instructions like "Get out of the shadow!" and produce matching image sequences, despite never encountering that kind of input during training.

Mixture-of-Experts figures out capacity allocation on its own

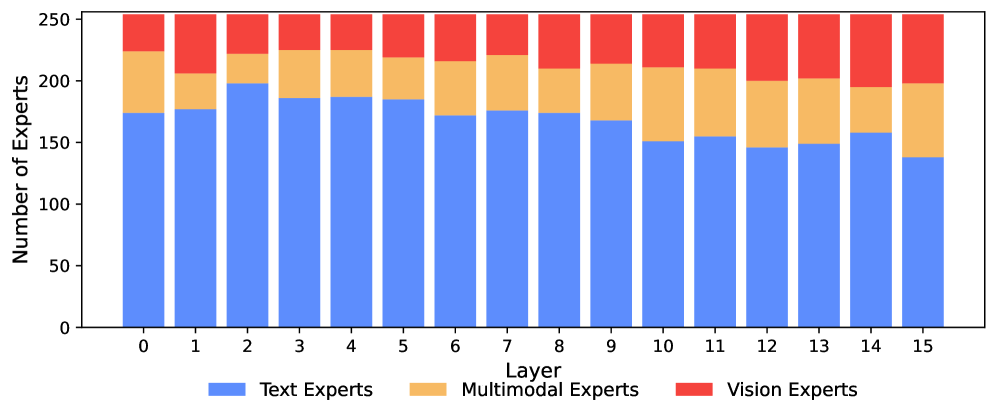

For the architecture, the researchers looked at Mixture-of-Experts (MoE), an approach where each input token gets routed to just a subset of specialized network modules instead of activating the entire model. This saves compute while boosting overall capacity.

With a model totaling 13.5 billion parameters but only 1.5 billion active per token, MoE outperforms both dense models and manually designed separation strategies, according to the study. The model figures out specialization by itself, assigning far more experts to language than to vision. Early layers are dominated by text-specific experts, while deeper layers increasingly feature visual and multimodal ones.

One standout finding is that image comprehension and image generation activate the same experts, with a correlation of at least 0.90 across all layers. The researchers see this as confirmation of Richard Sutton's "Bitter Lesson" that learning from data usually beats hand-designed solutions.

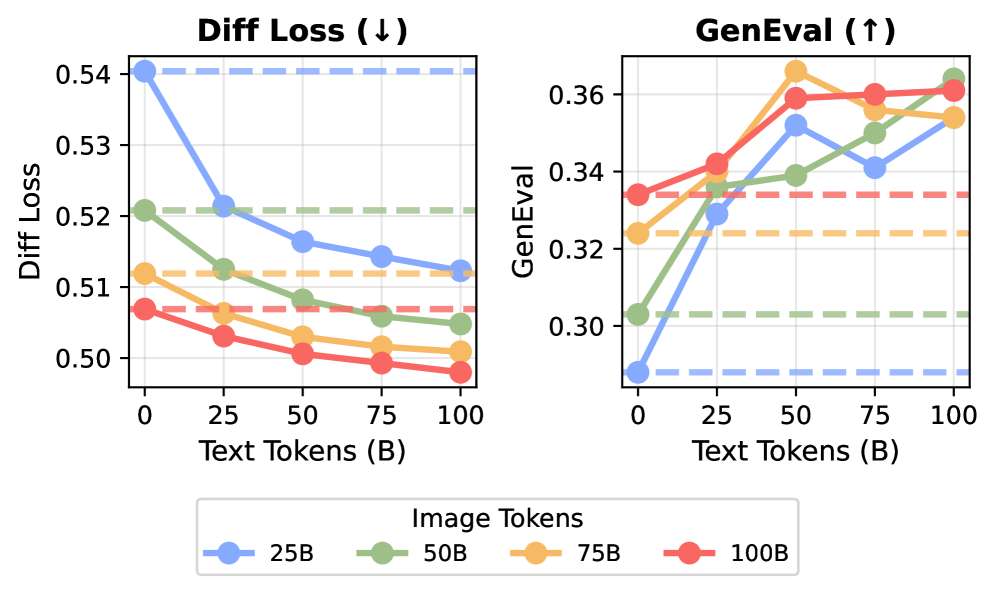

Vision needs far more data than language to scale well

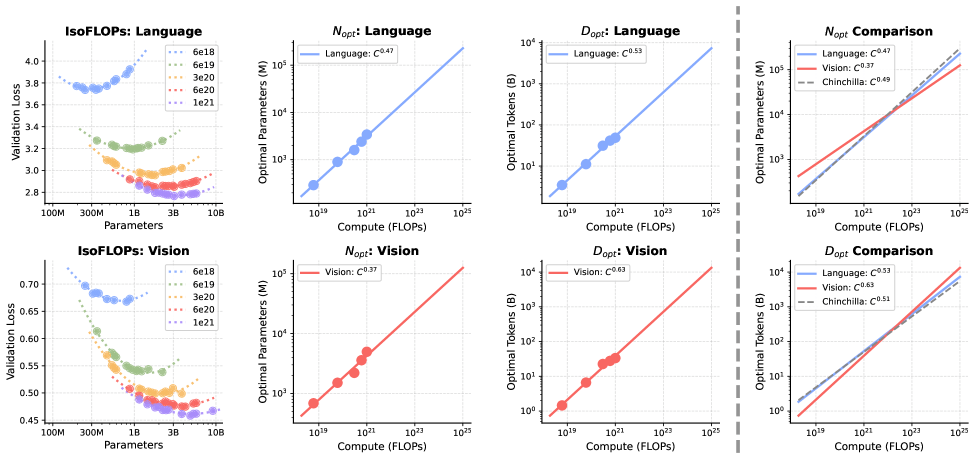

Training an AI model always involves a fundamental tradeoff in how to split a fixed compute budget. You can build a bigger model with less data, or a smaller model with more data. The Chinchilla scaling laws showed that for pure language models, both should grow at roughly the same rate.

The Meta researchers calculated these scaling laws for a joint vision-language model for the first time and found a major asymmetry. For language, the familiar equilibrium holds. For vision, the optimum shifts heavily toward data. Visual capabilities benefit disproportionately from more training data, while making the model bigger brings relatively little improvement.

The larger the model gets, the wider the gap in data requirements. Starting from a 1 billion parameter base, the relative need for vision data compared to language data grows 14-fold at 100 billion parameters and 51-fold at 1 trillion parameters, according to the study. Language scales much more modestly across this range. In conventional dense models, where every parameter is active at every step, this imbalance is nearly impossible to resolve.

The Mixture-of-Experts architecture helps close the gap. Since only a fraction of experts fire per token, the model can carry a massive total parameter count without compute costs scaling proportionally. Language gets the high parameter capacity it needs, while vision benefits from the large data volumes it requires. According to the study, MoE cuts the scaling asymmetry between the two modalities in half.

The researchers note that their work covers pre-training only and they didn't dig into fine-tuning or reinforcement learning. Still, they see their results as evidence that the boundary between multimodal models and world models is getting blurrier by the day. Huge volumes of unlabeled video remain largely untapped, and the study shows they can be folded in without hurting language performance.

AI News Without the Hype – Curated by Humans

As a THE DECODER subscriber, you get ad-free reading, our weekly AI newsletter, the exclusive "AI Radar" Frontier Report 6× per year, access to comments, and our complete archive.

Subscribe now