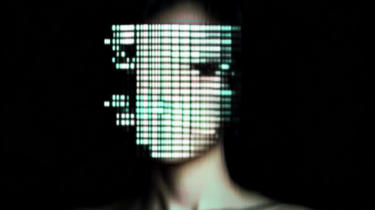

Just as AI-generated photos can look very realistic, there are now AI-generated videos that are almost indistinguishable from real video.

The latest deepfake advances have been making the rounds on X for a few days now. HeyGen, a leading provider of "digital avatars", is showing its new feature "Avatar in Motion 1.0". It can lip-sync a speaker in many languages while the person moves and gestures with their hands. The man in the video originally speaks German.

Video: HeyGen via X

Previously, this worked only with people sitting fairly still in front of the camera. Those talking avatars have also become more realistic. A demo made with "arcads.ai" shows a young woman talking and gesturing to the camera. The woman is real, but she never recorded the video.

Instead, AI automatically moves her lips in sync with a pre-written script. Her voice is also cloned. Two minutes of video is all it takes to create such a multimedia clone of a real person. The creator says she wrote the script to match the facial expressions of the actress's avatar for added realism.

Video: Beck via X

The video went viral on X, with two interesting effects. First, many viewers couldn't agree on whether it was AI-generated or real. This shows that the line between fake and real has long been blurred, even for supposed AI experts. Second, demand for the service was so high that the servers crashed.

Another new deepfake provider is Argil.ai, which says it has developed a proprietary deepfake model to achieve the amazing avatar quality shown in the video below. The team says its model can produce high-quality video up to 4K.

Video: Brivael via X

Deepfake dystopias are coming true

With the new demos, there should be no more doubt that the dark prediction of Ian Goodfellow, who invented generative adversarial networks (GANs), the technique behind the original deepfakes, will come true, or already has.

We can no longer trust images, videos, and audio online. Of course, that distrust extends to real video as well. Donald Trump recently showed that the mere existence of AI fakes can be used to cast doubt on everything, even his own mistakes caught on video.

This makes it all the more important to authenticate content and establish trusted channels, a traditional role of media and journalism. But the media is in a partly self-inflicted crisis, dependent on the companies that develop and deliver these technologies and content.

All of these technological advances benefit those who willfully ignore facts to make money, gain power, or spread ideology. And developments are accelerating as elections are held around the world.