Nvidia announces AI Foundations, a digital production facility for generative AI models. New to the lineup is Picasso, a service for text-to-image, text-to-video, and text-to-3D generation. Adobe, Shutterstock, and Getty Images are the first customers.

"The impressive capabilities of generative AI have created a sense of urgency for companies to reimagine their products and business models," says Jensen Huang, CEO and founder of Nvidia, as he begins his deep dive into Nvidia's AI business. AI is even more prominent at this year's GTC than in previous years, in part because ChatGPT has drawn far more public attention to the field over the past year. The company now sees GTC as one of the most important AI events, with speakers such as Demis Hassabis of DeepMind and Ilya Sutskever, co-founder of OpenAI.

In addition to numerous collaborations and application examples from and with companies such as Microsoft, Amazon, BMW, BYD, AT&T, and Medtronic, Huang showed how Nvidia's various accelerators are driving the current AI boom. He spoke of an "iPhone moment" for AI, with numerous industries using and training AI models. For example, the state-of-the-art DGX H100 and HGX H100 systems are now available through Microsoft Azure, and other cloud providers such as Google Cloud, Amazon's AWS, Oracle Cloud, and Baidu Cloud also rely on Nvidia's Hopper architecture, he said. Companies like Meta have also chosen H100 systems for their own supercomputers.

Nvidia launches DGX Cloud and Picasso - a service for generative AI

Nvidia DGX systems will also be available directly through DGX Cloud with support from Nvidia experts. DGX Cloud will be available through Microsoft Azure, Google Cloud, Oracle Cloud, and Equinix.

DGX Cloud is built on Nvidia's AI Enterprise Service, which provides access to a variety of AI models. These include GPT-family language models via NeMo and protein structure prediction models via BioNeMo. Enterprises can refine the pre-built models on offer or train their own models, in both cases with their own proprietary data, which never leaves the servers and is not used by Nvidia to train its own models.

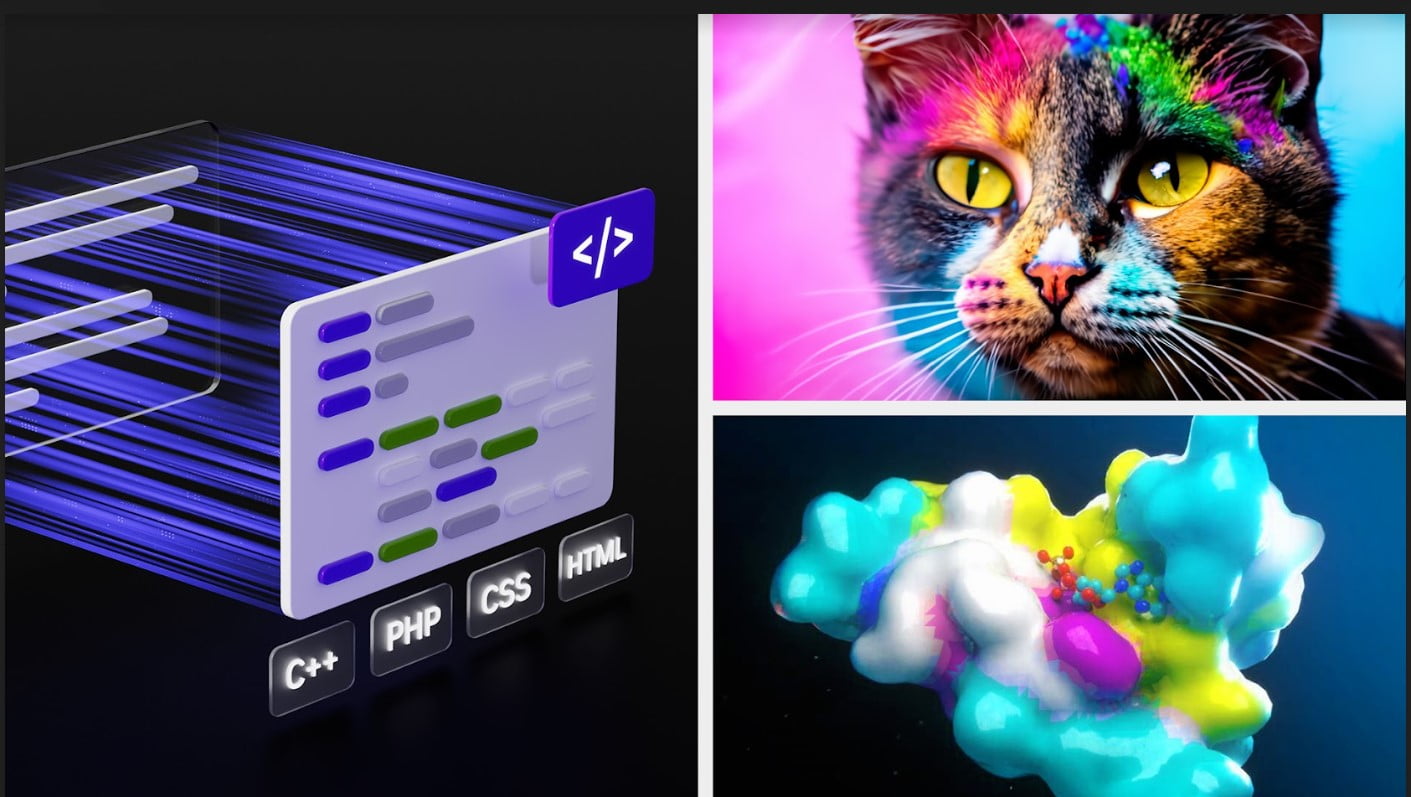

While NeMo and BioNeMo are already up and running, Huang announced Picasso, a generative AI service for images, videos, and 3D models. Nvidia is bundling NeMo, BioNeMo, and Picasso into AI Foundations, which is part of AI Enterprise. According to Huang, AI Foundations is primarily aimed at companies that would rather build models on their own data than access external AI models through APIs.


Picasso is designed to enable companies to train their own generative AI models. Early customers include Adobe, Getty Images, and Shutterstock. According to Nvidia, Getty Images is training its own text-to-image and text-to-video models using Picasso and image data available on its own platform. Getty Images wants artists to share in the revenue generated by the models.

Shutterstock, on the other hand, is working on a text-to-3D model intended to simplify the creation of detailed 3D models and cut the time required from days to minutes. Shutterstock also plans to compensate artists through a contributor fund.

Medtronic and Nvidia bring AI-assisted colonoscopy

Huang also announced new models for BioNeMo, easier access to AI supercomputers for the models offered there, and new startups that are developing drugs with BioNeMo's models. Japanese conglomerate Mitsui & Co., Ltd. also announced the construction of the Nvidia Tokyo-1 AI supercomputer for pharmaceutical research. In addition to generative AI for biomedicine, Huang showed how AI applications are being used in diagnostics.

In this regard, Medtronic announced FDA clearance of the first AI platform for colonoscopy: GI Genius, powered by Nvidia's Holoscan and IGX. The AI-assisted endoscopy detects 50 percent more lesions that can lead to cancer. Nvidia and Medtronic plan to continue their collaboration and develop a joint AI platform for medical devices.

Nvidia also announced the Nvidia L4 and L40 GPUs, successors to the T4 GPU, and the Nvidia H100 NVL, two H100 GPUs connected via NVLink that Nvidia says can locally run large language models with up to 200 billion parameters. The L40 GPUs are available through Google Cloud, for example.