RunwayML AI video editor now available for iPhone

RunwayML is an AI-powered video editor. Its Gen-1 model is now available in an iPhone app.

The iPhone app offers features from RunwayML's Gen-1 AI model. The New York-based startup unveiled the model in February 2023. It can give videos new styles, similar to the filter technology popularized by Instagram, but with much stronger visual intervention and greater flexibility.

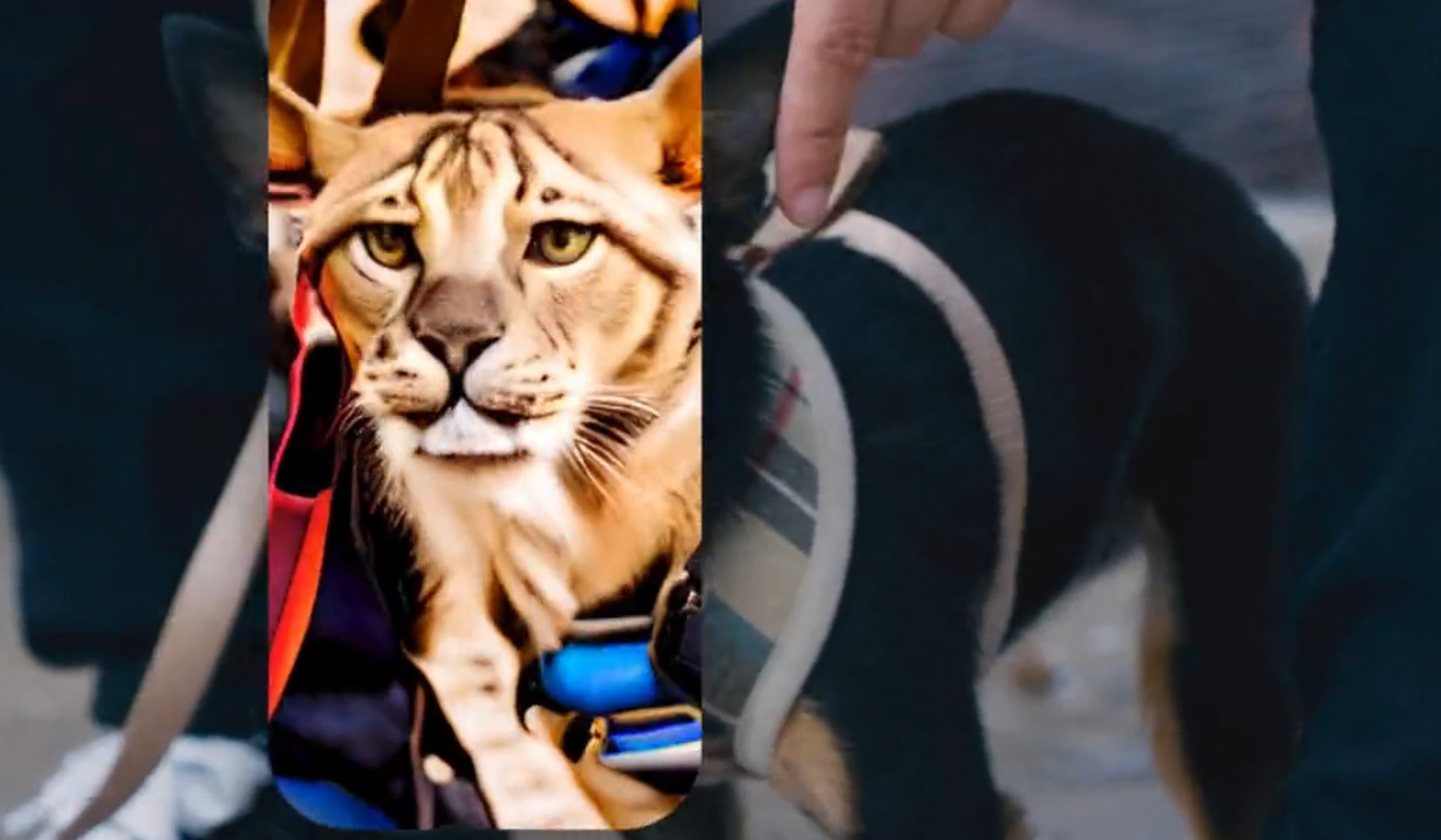

For example, a realistically photographed person can be transformed into a claymation character or a vivid drawing. The city background becomes an apocalyptic scene from a Roland Emmerich movie, and the lapdog becomes a tiger (see cover image). You can choose from a variety of styles, enter a text command, or specify a reference image to find your preferred style.

The magic of Gen-1. Now on your phone.

Download the new Runway iOS app today: https://t.co/kYfzzHfOfp pic.twitter.com/1GySmhJkby

— Runway (@runwayml) April 24, 2023

RunwayML is now available for free on the App Store for newer iPhones. Those who already have a Runway account for the web version can use it to exchange assets between smartphone and PC.

Gen-2 can already generate short videos

RunwayML is already a step ahead in AI video: the newer Gen-2 model can generate short videos from text input or from a combination of image and text. Gen-2 is currently available in a beta version on the web. The first users are sharing examples on Twitter.

My very first text to video with #Gen2

text_prompt: a woman wearing a white dress meditating, standing on water, peaceful day, the most beautiful scene

If you have access to #Get1 Discord you can go there and find a thread and enjoy #Gen2 That's how I found it. pic.twitter.com/RErmjjHLhH

— Kris Kashtanova (@icreatelife) April 20, 2023

So practical text-to-video generation just happened.

Here's "futuristic car speeding" using Runway v2. It is a short clip, for now... pic.twitter.com/cGV4XRy4My

— Ethan Mollick (@emollick) April 20, 2023

However, it takes several minutes to process a few seconds of video, and the results still contain glitches and are somewhat jerky. In addition to Runway, Google is working on Phenaki, a text-to-video AI system, and Meta is working on Make-a-Video. Google also has another model in the works, Dreamix, designed specifically for text-based video editing.

The question is whether video AI systems will develop as rapidly as image AI systems have. If so, stock footage databases and producers of short social media clips, at the very least, will soon face serious AI competition.
