Update June 06, 2023:

Runway Gen-2 is now available in the browser and in the iOS smartphone app. The trailer below shows some of the new features.

Original article dated March 20, 2023:

New York-based web video editing startup Runway introduces Gen-2, a new text-to-video model.

In February, Runway introduced its Gen-1 model, which can give existing videos a new look using only text prompts. For example, it can transform a realistically filmed scene into a cartoon world that retains the proportions and movements of the original scene.

This works for people and environments, and at a high level of abstraction: for example, Gen-1 can transform several notebooks standing upright next to each other into a skyline using only text prompts. All the Gen-1 features are included in Runway's new Gen-2 model.

Runway launches text-to-video model

Gen-2, however, takes it a step further by generating entirely new video scenes from a single text prompt. The following three-second video scene was generated by Runway with the prompt "Aerial drone footage of a mountain range". Audio is not yet included, but according to Runway, it is being researched.

Video: Runway

Prompt: "A close-up of an eye." | Video: Runway

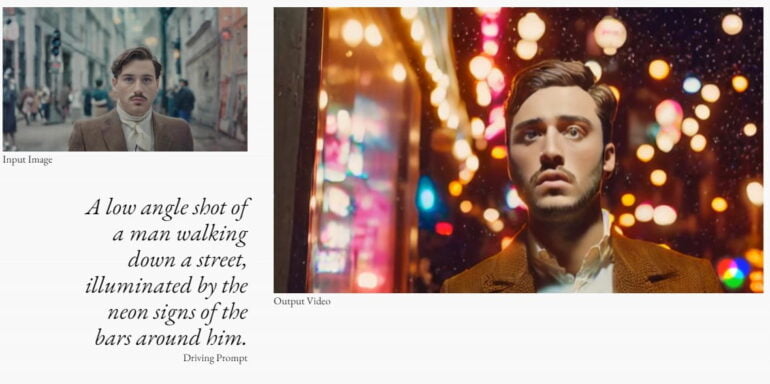

In addition, Runway can generate short video sequences from an image or from the combination of an image and a text description. The input image is shown on the left in the screenshot. It is converted into a short video animation (on the right, enlarged in the screenshot). The background scene and camera angle change according to the text prompt.

After a demonstration, Bloomberg reports that video generation happens "within minutes". But the resulting videos are only seconds long and a bit choppy. Motion sequences in particular are still a challenge for the model. Nevertheless, the generated scenes match the content of the prompt.

Distribution via Discord waiting list

Runway is making Gen-2 available to select testers who sign up for a waiting list via Discord. The rollout is ongoing. Gen-1 currently has "thousands of users," according to Runway. The startup aims to prevent potential abuse of the video system, such as violent content, by combining AI mechanisms with human moderators.

Gen-2 trailer. | Video: Runway

In addition to Runway, Google is working on an HD text-to-video AI system, and Meta has demonstrated Make-A-Video, which also turns text into short videos. Google has another model in the works, Dreamix, specifically for text-based video editing. If the rapid advances in AI image generation translate to video, stock video databases may soon have to rethink their business model.