AI researchers from Samsung demonstrate deepfakes with megapixel resolution. The AI system can generate high-resolution human avatars in real time.

Almost a year ago, a deepfake of Tom Cruise fooled social media users with impressively realistic fake videos. Actor Miles Fisher and visual effects specialist Chris Umé were behind it. By their own account, they put weeks of work into each video to achieve exceptionally high quality.

In addition to the technology, Fisher's close resemblance to the real Tom Cruise is crucial to the quality of these deepfakes. That's because current deepfake technologies work particularly well when the facial shape, hair, and other features of the real and fake heads are similar. The same applies to the deepfake of Margot Robbie.

Samsung Labs shows off high-resolution human avatars

AI researchers at Samsung Labs are now demonstrating an AI system that can create high-resolution avatars from a single still image or painting.

In addition to the high resolution, the team focused primarily on making deepfake quality less dependent on a resemblance between the person and their avatar. A convincing Cruise deepfake would then no longer require a human doppelganger like Fisher; the trick would work with any person.

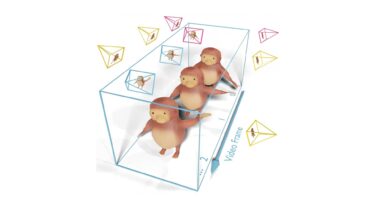

Samsung Labs calls its new deepfake system MegaPortraits (short for "megapixel portraits"). The base model captures the appearance of the source image as well as the movement of the source and target images.

Appearance and motion are processed separately by the model and transferred to the target image. For this purpose, the information is first merged in a 3D convolution generator and then transformed into the target image by a 2D convolution generator. Then, each target image is further enhanced by a separate HD model.
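The order of these stages can be made concrete with a small shape walk-through. The following is only a sketch of the data flow described above, not Samsung's implementation: it tracks tensor shapes instead of running a neural network, and every function name, channel count, depth, and resolution in it (including the 512-pixel base output and the 2x HD step) is an illustrative assumption.

```python
from typing import List, Tuple

Shape = Tuple[int, ...]


def encode_appearance(source_image: Shape, channels: int = 32, depth: int = 16) -> Shape:
    """Appearance branch: lift a (3, H, W) image to a (C, D, h, w) feature volume.

    The channel count, depth, and 8x downsampling are assumed values.
    """
    _, height, width = source_image
    return (channels, depth, height // 8, width // 8)


def generator_3d(volume: Shape, source_motion: str, target_motion: str) -> Shape:
    """3D convolutional stage: merges appearance with both motion descriptors.

    A real model would transform the volume here; this placeholder only
    records that the volume layout is kept.
    """
    return volume


def generator_2d(volume: Shape, base_size: int) -> Shape:
    """2D convolutional stage: decodes the merged volume into an RGB image."""
    return (3, base_size, base_size)


def enhance_hd(image: Shape, scale: int = 2) -> Shape:
    """Separate HD model: upscales the base output (scale factor is assumed)."""
    channels, height, width = image
    return (channels, height * scale, width * scale)


def run_pipeline(source_image: Shape, base_size: int = 512) -> List[Tuple[str, Shape]]:
    """Runs the four stages in order and returns each stage name with its shape."""
    stages: List[Tuple[str, Shape]] = []
    volume = encode_appearance(source_image)
    stages.append(("appearance volume", volume))
    merged = generator_3d(volume, "source motion", "target motion")
    stages.append(("3D generator output", merged))
    base_image = generator_2d(merged, base_size)
    stages.append(("2D generator output", base_image))
    hd_image = enhance_hd(base_image)
    stages.append(("HD model output", hd_image))
    return stages


if __name__ == "__main__":
    for name, shape in run_pipeline((3, 512, 512)):
        print(f"{name}: {shape}")
```

With these assumed sizes, the final stage yields a 1024 x 1024 image, which is just over one million pixels and matches the "megapixel" label.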

Samsung's MegaPortraits can do deepfakes in real time

MegaPortraits' results are impressive and show significant improvements over older methods, the researchers say. The team shows examples of the Mona Lisa or Brad Pitt becoming a real-time deepfake avatar for a person filmed by a video camera.

Video: Samsung


In addition to the base model, the team also trained a smaller model that runs in real time at 130 frames per second on an Nvidia RTX 3090. It contains 100 neural avatars, each with an identity linked to a predefined source image.
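To put the 130 frames per second into perspective, the figure can be converted into a time budget per frame. The short calculation below is a back-of-the-envelope sketch; the 30 fps camera rate used for comparison is an assumption for illustration and does not come from the researchers.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available per frame, in milliseconds, at a given frame rate."""
    return 1000.0 / fps


def headroom_factor(model_fps: float, camera_fps: float) -> float:
    """How many times faster the model runs than the camera delivers frames."""
    return model_fps / camera_fps


if __name__ == "__main__":
    model_fps = 130.0   # reported speed of the smaller model on an RTX 3090
    camera_fps = 30.0   # assumed webcam frame rate, for comparison only
    print(f"Per-frame budget: {frame_budget_ms(model_fps):.2f} ms")
    print(f"Headroom over camera: {headroom_factor(model_fps, camera_fps):.2f}x")
```

At 130 frames per second, each frame takes roughly 7.7 milliseconds, which would leave a margin of more than four times over the assumed 30 fps camera feed.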

Real-time operation and the identity assurance gained by linking avatars to stored source images are essential for many practical applications of face-focused avatar systems, the researchers write.

The team still sees weaknesses in shoulder movements, in head poses that are not frontal, and in a slight flicker on the skin, which it attributes to the static HD images in the training data. The researchers plan to address these issues in an upcoming paper.

More examples are available on the MegaPortraits project page.