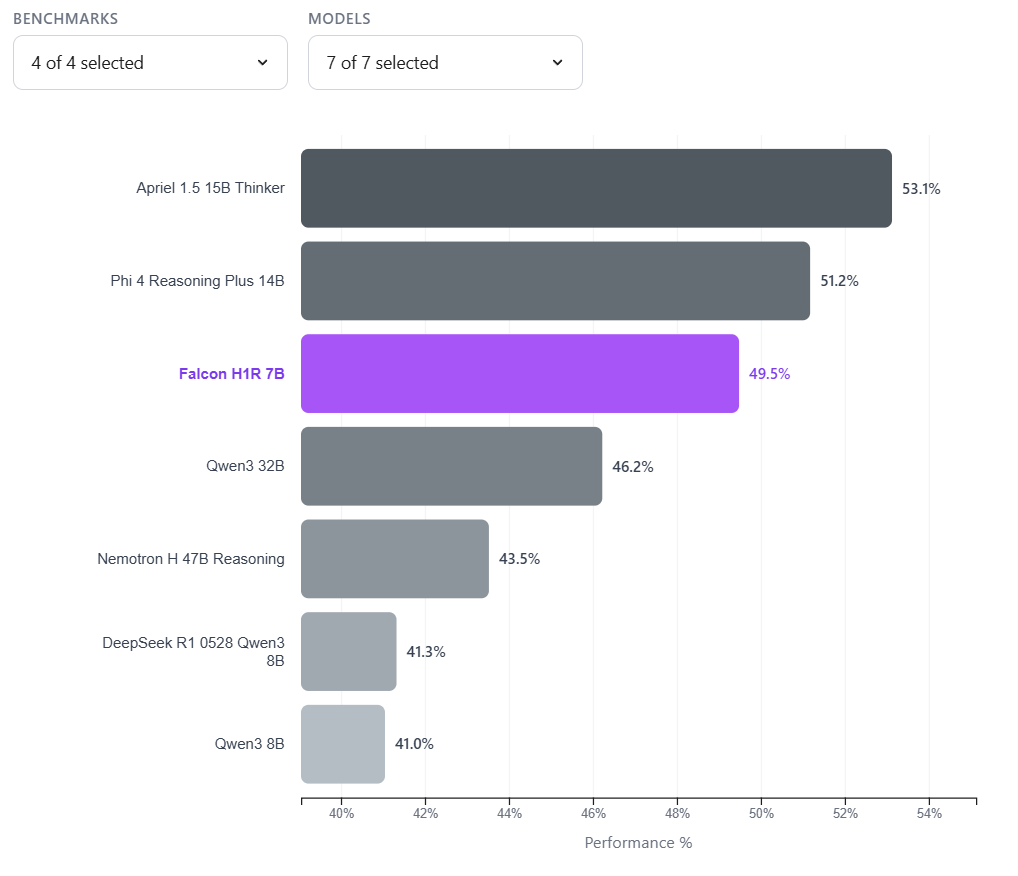

Meta is planning to release versions of its new AI models as open source, according to Axios. These would be the first models developed under the leadership of Alexandr Wang, who joined Meta in 2025 as part of a nearly $15 billion deal with Scale AI.

In a departure from its approach with the Llama models, though, Meta plans to keep some components proprietary and to review safety risks before releasing anything. The largest models also won't be made publicly available.

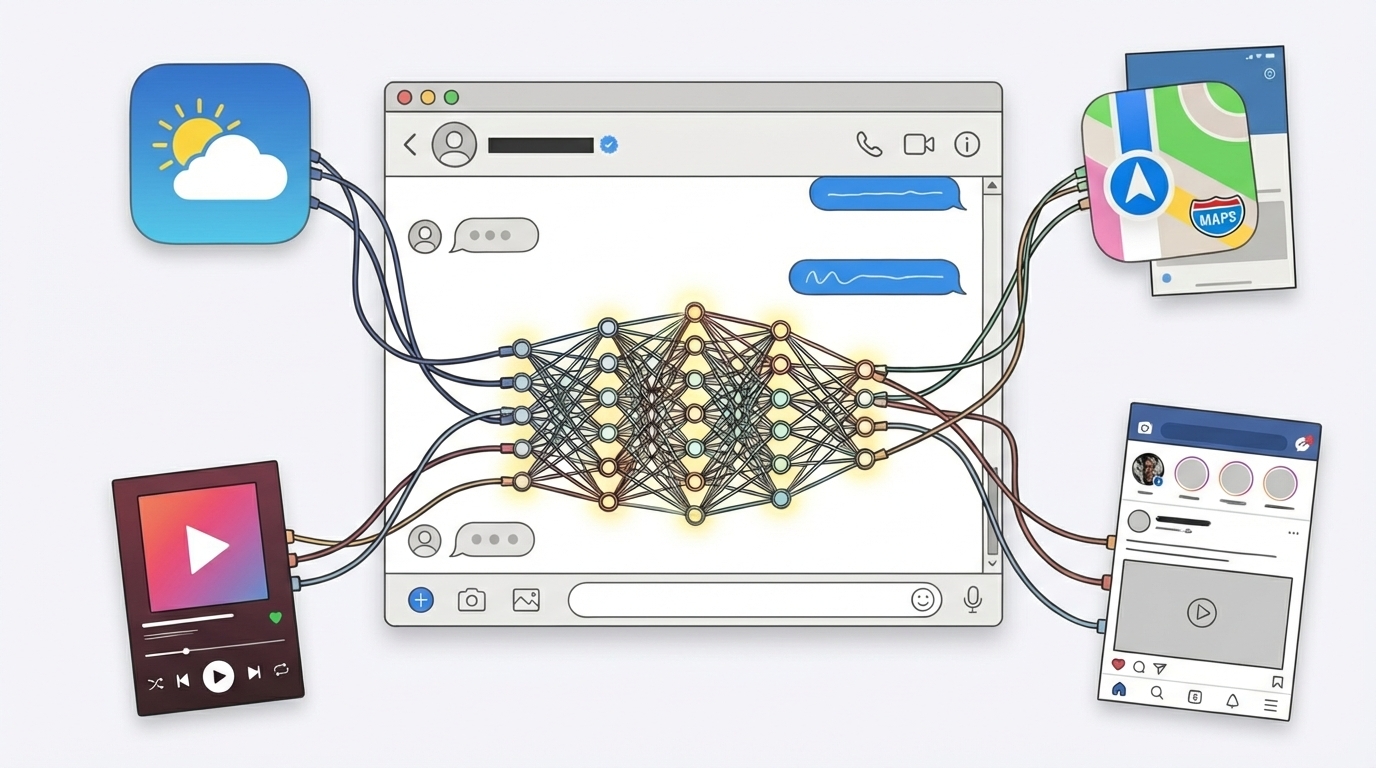

According to the report, Wang sees Meta as a counterweight to Anthropic and OpenAI, which focus more heavily on government and enterprise customers. Meta's strategy instead centers on consumer reach through WhatsApp, Facebook, and Instagram. Axios's sources say Meta already knows the new models won't match the competition in every area.