Yann LeCun wants to replace the AGI concept with "Superhuman Adaptable Intelligence"

A new paper by researchers from Columbia University and NYU, including Yann LeCun, argues that AGI is a flawed concept. Human intelligence isn't general but specialized. They propose Superhuman Adaptable Intelligence instead.

The term AGI dominates the AI debate, but a new paper by researchers from Columbia University, NYU, and the startup Distyl argues it's more of an obstacle than a guiding star. Prominent AI researcher Yann LeCun is among the co-authors.

Their central thesis is straightforward. Human intelligence isn't general at all but highly specialized through evolution, and we simply can't see our own blind spots. The researchers illustrate this with chess world champion Magnus Carlsen: "Magnus Carlsen is not objectively good at chess, he is good at chess with respect to human performance levels." Measured against computers, his abilities simply reflect the limits of human performance, they argue. "Our perception of his ability is colored by the limitations of humanity."

The paper finds that common AGI definitions don't hold up to their own standards

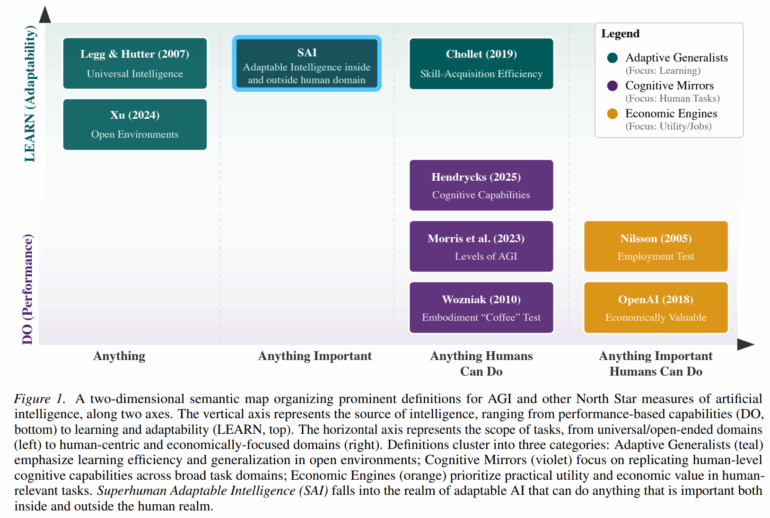

The authors systematically pick apart common AGI definitions and conclude that none of them meet their own criteria. Definitions that claim true generality run into the No Free Lunch theorem, which states that no single algorithm can perform optimally across all problems. Definitions limited to human capabilities aren't general by definition. Others, like those from OpenAI or DeepMind CEO Demis Hassabis, are either impossible to measure or internally inconsistent, according to the researchers.

Hassabis and Elon Musk have previously pushed back on similar arguments, saying they conflate general intelligence with universal intelligence. Hassabis argued that "brains are the most exquisite and complex phenomena we know of in the universe (so far), and they are in fact extremely general." The researchers disagree. Even under idealized conditions, the human brain covers only "an infinitesimal fraction" of possible problems, they write. "We feel general because we can't perceive our blind spots, not because we lack them."

The authors propose adaptability over generality

Instead of AGI, the authors propose "Superhuman Adaptable Intelligence" (SAI), an intelligence "that can learn to exceed humans at anything important that we can do, and that can fill in the skill gaps where humans are incapable." What matters in their view isn't a checklist of skills but how fast a system can adapt to new tasks.

On the technical side, the paper points toward self-supervised learning and world models, not autoregressive language models, as the most promising paths. The researchers argue that errors in autoregressive models "diverge exponentially with prediction length." They also see the current monoculture of GPT-style architectures as slowing progress: "Homogeneity kills research."
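One common way to illustrate the compounding-error argument is a simple independence model. The sketch below is not taken from the paper. It assumes, purely for illustration, that each generated token has a fixed and independent chance of being wrong; the function name `p_sequence_correct` and the 1 percent error rate are hypothetical. Under that assumption, the chance that an entire output stays on track shrinks exponentially as the output gets longer.

```python
def p_sequence_correct(per_token_error: float, length: int) -> float:
    """Probability that every one of `length` tokens is acceptable.

    Assumption (not from the paper): errors are independent and each
    token has the same error probability `per_token_error`.
    """
    return (1.0 - per_token_error) ** length


if __name__ == "__main__":
    # Hypothetical 1% per-token error rate, evaluated at growing lengths.
    for n in (10, 100, 1000):
        print(f"length={n:>4}  P(all tokens acceptable)={p_sequence_correct(0.01, n):.5f}")
```

Real models do not make independent errors, so this is only a toy picture of why long autoregressive predictions can become unreliable.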

The paper calls for embracing specialization rather than fighting it, and for judging AI advances "by how quickly and reliably they produce new competence, rather than by how closely they imitate human behavior."
