Anthropic uses a questionable dark pattern to obtain user consent for AI data use in Claude

Anthropic’s new data policy raises legal concerns with its use of questionable dark patterns.

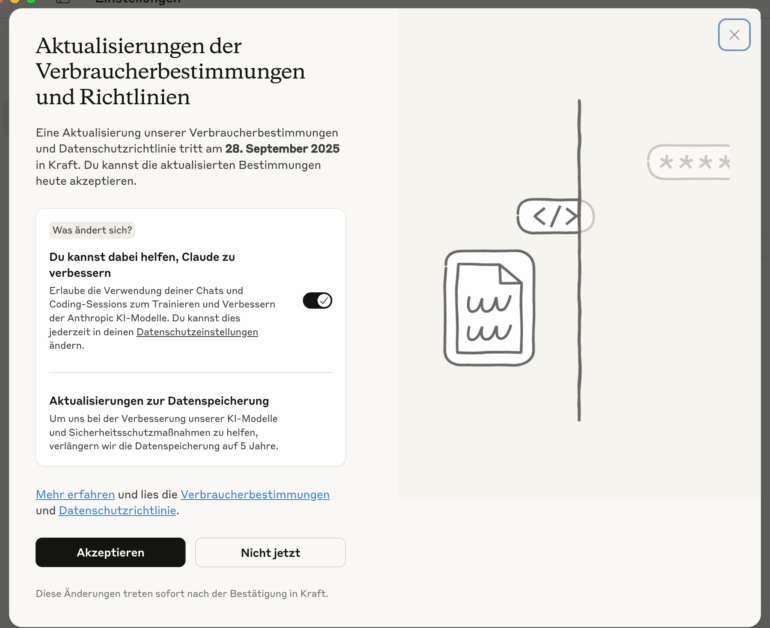

Anthropic is changing its privacy policy for the Claude AI chat platform, requiring users of its consumer products (Claude Free, Pro, Max, and Claude Code) to make a decision: Anyone who does not actively opt out by September 28, 2025, will allow the company to use both new and resumed chats for AI training. If users agree, the retention period for their chat data will also increase from 30 days to up to five years. Business, education, government, and API accounts are not affected by this policy.

Anthropic is using a manipulative interface design known as a dark pattern for this privacy update. Existing Claude users see a pop-up with a prominent black "Accept" button, while the toggle to allow data for AI training is small, pre-activated by default, and easy to miss. The design, as shown in the screenshot, encourages quick, unthinking acceptance from users.

The principle behind this approach - defaulting to data sharing with an opt-out - is not new. OpenAI uses a similar strategy for ChatGPT, where the setting to use user input for model training is enabled by default and must be manually turned off. While OpenAI quietly established this pattern from the start, Anthropic is only now introducing the use of chat data for training and must therefore obtain active consent from its users.

New policy, dark patterns

Claude’s update relies on a striking pop-up with a large, black "Accept" button. The data sharing toggle is tucked away, switched on by default, and framed positively ("You can help..."). A faint "Not now" button and hard-to-find instructions on changing the setting later complete the manipulative design.

Under the General Data Protection Regulation (GDPR) and rulings of the European Court of Justice, these interface tricks, known as dark patterns, are considered unlawful when used to obtain consent for data processing. Pre-checked boxes do not count as valid consent under these rules.

The European Data Protection Board (EDPB) has also stressed in its guidelines on deceptive design patterns that consent must be freely given, informed, and unambiguous. Claude’s current design clearly fails to meet these standards, making it likely that Anthropic will soon draw the attention of privacy regulators.
