Anthropic's prompt caching makes your long prompts much cheaper

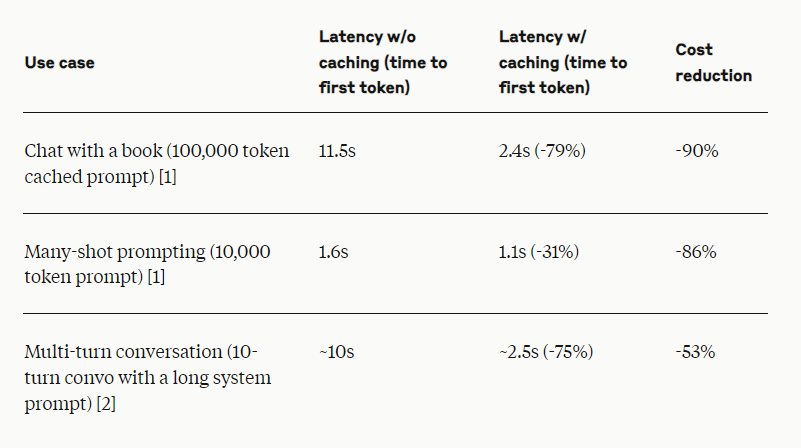

Anthropic's prompt caching feature can cut the cost of long prompts by up to 90% and reduce latency by as much as 85%. It lets developers cache frequently used context between API calls, so Claude can work with more background knowledge and examples without that context being processed from scratch on every request. Prompt caching is now in public beta for the Claude 3.5 Sonnet and Claude 3 Haiku models, with support for Claude 3 Opus on the way. According to Anthropic, the feature is a good fit for chat agents, coding assistants, long document processing, detailed instruction sets, and agent-based search and tool usage. It also works well for answering questions about books, papers, documentation, and podcast transcripts. Google also offers prompt caching.
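To make the idea of caching context between API calls more concrete, here is a minimal sketch of what a request body might look like. It only builds the JSON payload and sends nothing over the network. The `cache_control` field, the beta header value, and the model ID reflect Anthropic's public beta documentation as best understood at the time of writing and should be checked against the current docs; the helper name `build_cached_request` and the sample texts are hypothetical.

```python
import json

# Assumption: the beta header name and value below follow Anthropic's
# prompt caching beta docs; verify before use.
BETA_HEADERS = {"anthropic-beta": "prompt-caching-2024-07-31"}


def build_cached_request(shared_context: str, question: str) -> dict:
    """Build a Messages API request body (hypothetical helper).

    The long, reusable context is placed in its own system block and
    marked with `cache_control`, so later calls that start with the
    same prefix can reuse it instead of paying for it in full again.
    """
    return {
        # Assumption: model ID for Claude 3.5 Sonnet.
        "model": "claude-3-5-sonnet-20240620",
        "max_tokens": 512,
        "system": [
            {"type": "text", "text": "Answer questions about the document below."},
            {
                "type": "text",
                "text": shared_context,
                # Marks the end of the prefix that should be cached.
                "cache_control": {"type": "ephemeral"},
            },
        ],
        # Only this part changes from call to call.
        "messages": [{"role": "user", "content": question}],
    }


if __name__ == "__main__":
    book_text = "Full text of a long book or manual goes here."
    for q in ["Who is the narrator?", "Summarize chapter 2."]:
        body = build_cached_request(book_text, q)
        print(json.dumps(body["messages"]))
```

Both requests share the same system prefix and differ only in the user question, which is the pattern that lets the cached context be reused across calls.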
