OpenAI is switching to usage-based pricing for Codex in ChatGPT Business and Enterprise. Admins can enable Codex access across their workspace with no upfront licenses and pay only for actual usage. Eligible Business customers can also claim up to $500 in promotional credit per workspace as part of a limited-time promotion.

The move is designed to lower the barrier for enterprise adoption, OpenAI says. Coding tools typically spread from individual developers to full teams. "This model gives organizations a simpler way to support that motion inside a managed workspace," the company writes. OpenAI is likely betting that hands-on experience will drive long-term lock-in. It's a direct shot at GitHub Copilot and Cursor, which still charge per seat.

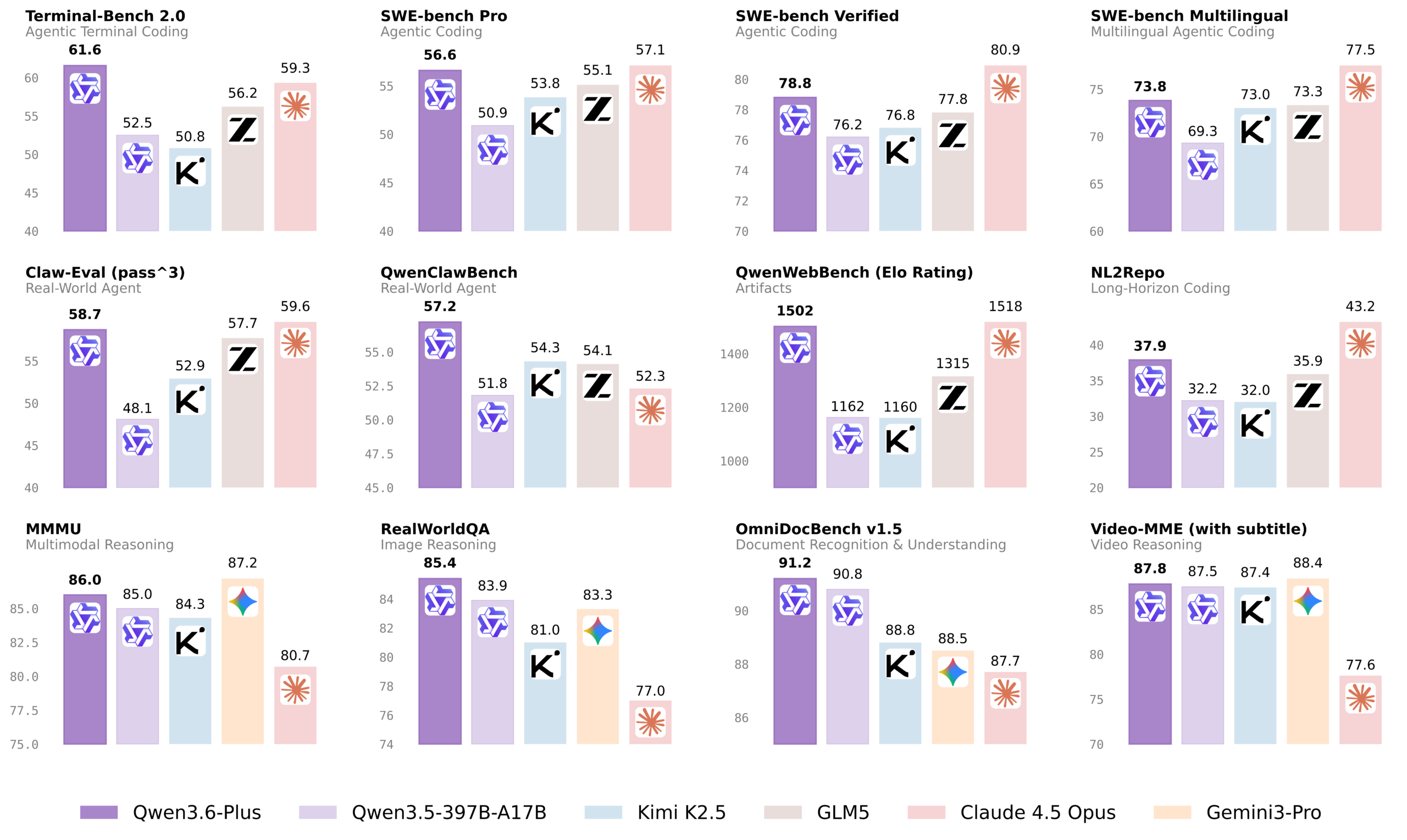

OpenAI says more than two million developers use Codex weekly, and that Business and Enterprise usage has grown sixfold since January. The company's biggest competitor in this space is Anthropic with Claude Code.