Jonathan Kemper

Jonathan writes for THE DECODER about how AI tools can improve both work and creative projects.

OpenAI's Codex gets a plugin marketplace for Slack, Notion, Figma, and more

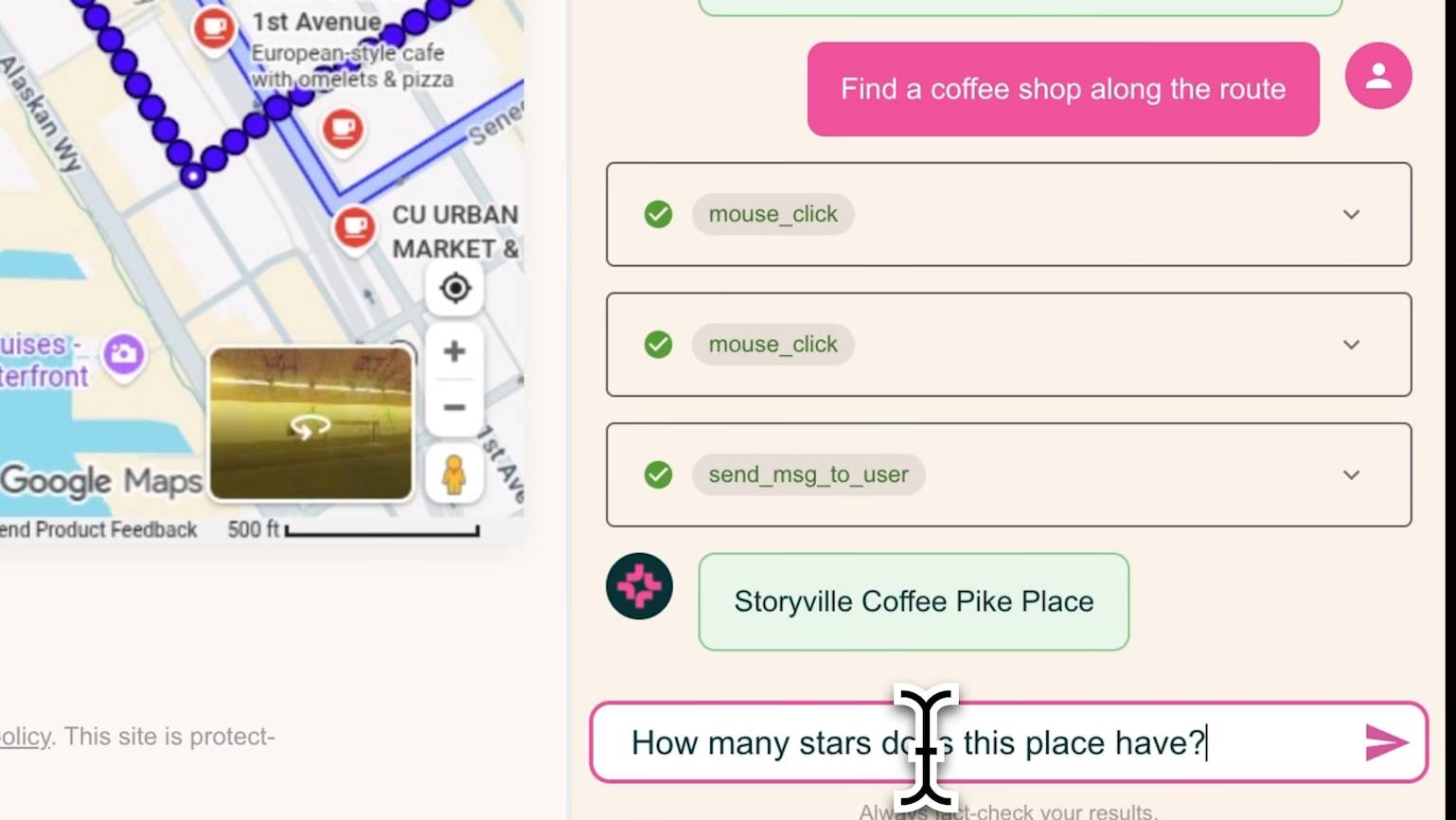

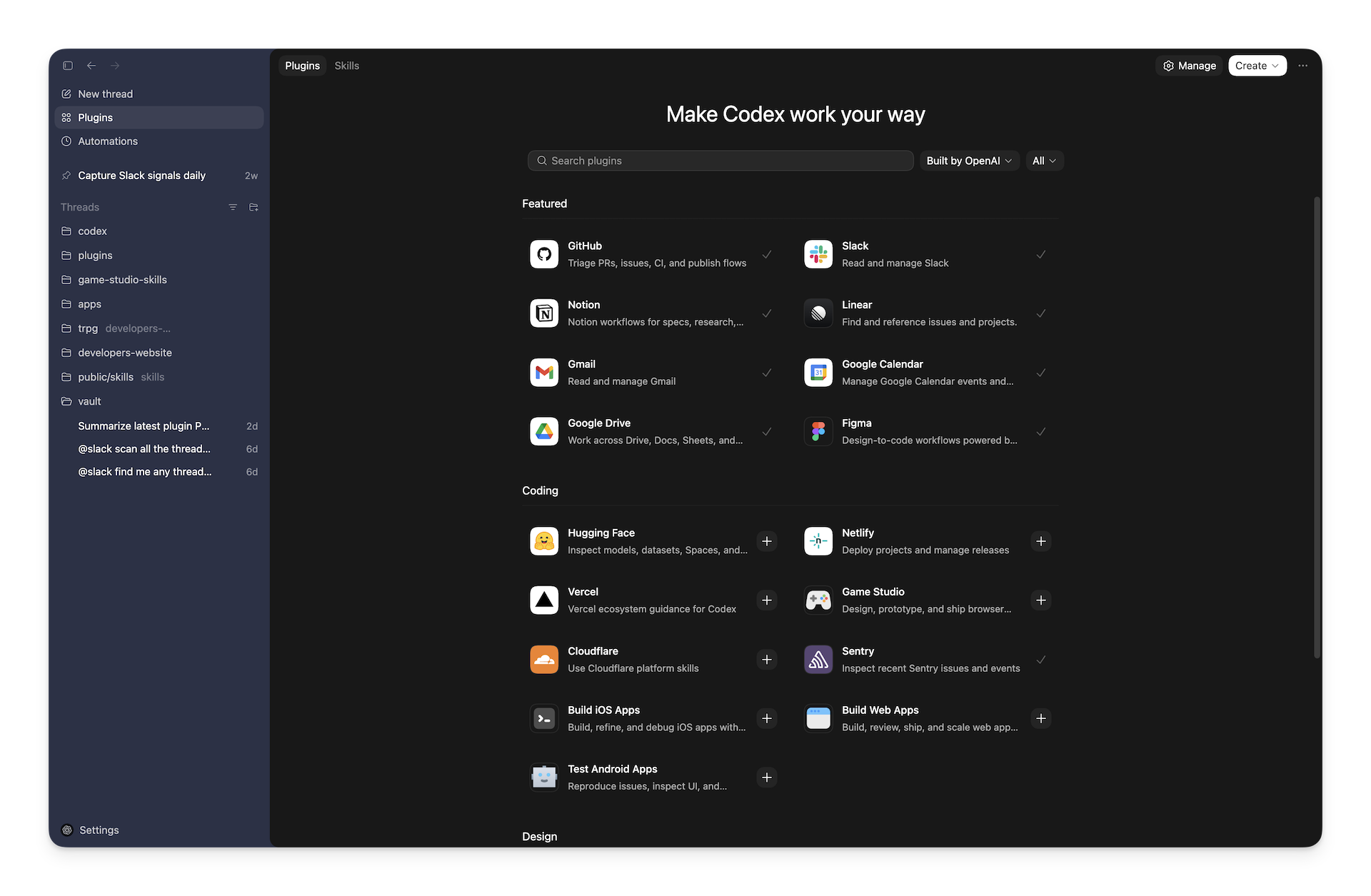

OpenAI is adding plugins to Codex that integrate with popular work tools like Slack, Figma, Notion, Gmail, and Google Drive. The plugins go beyond coding: OpenAI says they also help with planning, research, and coordination. Under the hood, plugins bundle predefined prompt workflows ("skills"), app integrations, and MCP server configurations into installable packages, similar to ChatGPT integrations. They work across the Codex app, the command line, and IDE extensions. Developers can build their own plugins and distribute them through local or team-wide "marketplaces." An official curated directory is already live, with self-publishing coming soon.

The move is part of OpenAI's broader push into coding tools and enterprise customers, which includes a planned "super app" combining ChatGPT, Codex, and the Atlas browser. Codex now has over 1.6 million weekly active users, and a Windows version shipped recently.

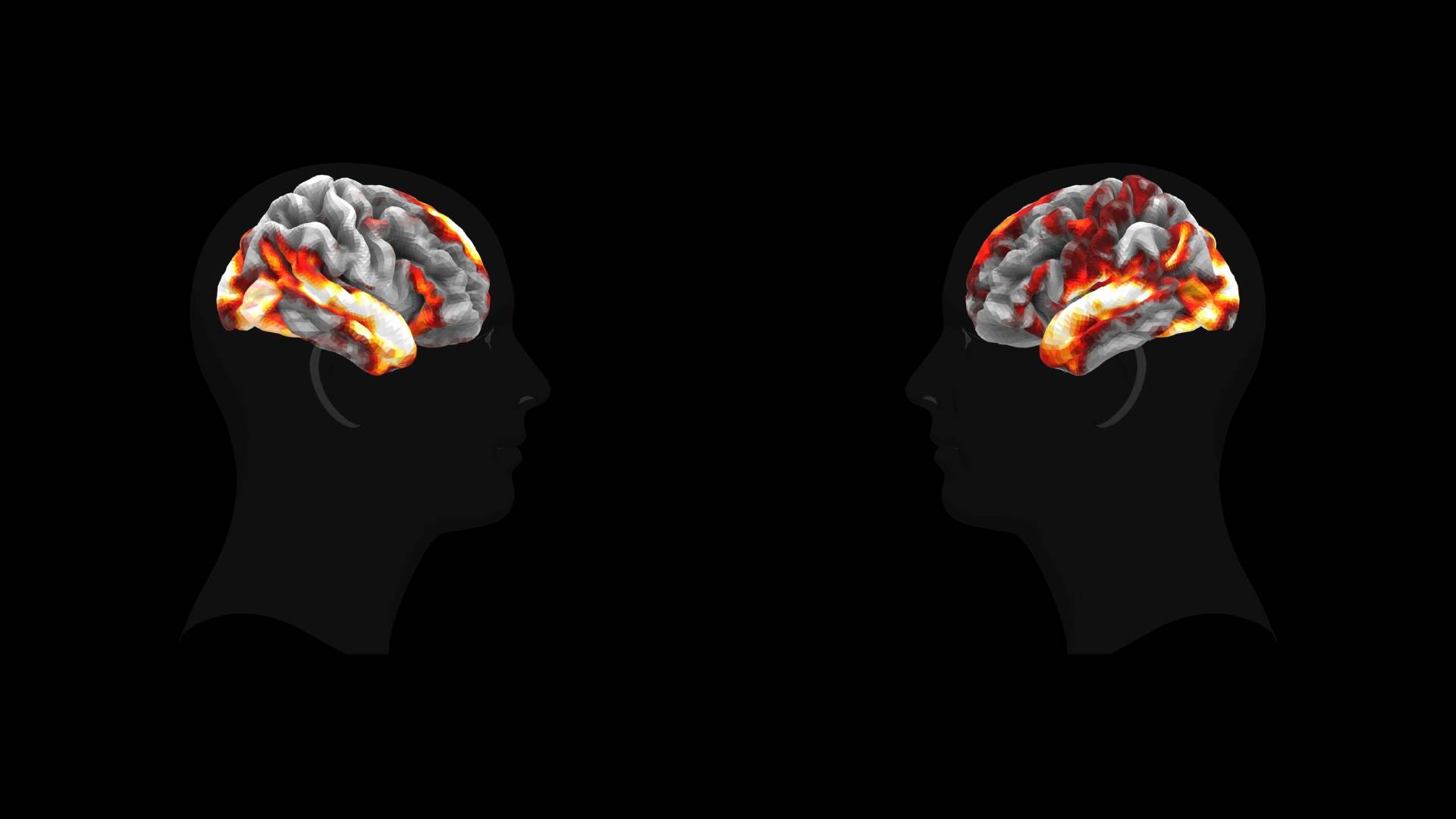
