AI researcher François Chollet sees "transformative" progress in AI, but none toward human-like AI. Is his frustration justified?

Leading AI companies like Deepmind or OpenAI are constantly developing AI models that set new standards. In doing so, they drive the development of AI and ultimately its use in everyday applications.

Chollet, for example, works in research at Google, and probably no other company uses AI so fundamentally in its own products. The researcher himself developed the Keras deep learning library and contributed significantly to the TensorFlow framework for machine learning.

Chollet: Not a step closer to general AI

The real research goal of Deepmind and OpenAI, however, goes a step beyond pragmatic everyday applications: the companies want to create general AI, an AI with human-like intelligence that can learn on its own and apply its knowledge to many tasks, unlike today's specialist systems. This general AI would arguably be the greatest possible breakthrough in AI research and the dawn of a new technological age.

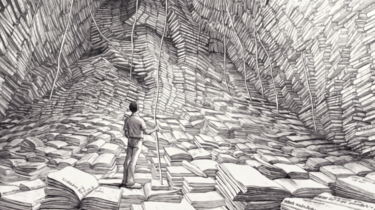

On Twitter, Chollet, who is himself researching general AI, expresses his admiration for the efficiency of human learning, saying he is always amazed at how quickly young children learn. Something they do for the first time, they can immediately generalize "in an infinite number of variations and in an infinite number of different situations".

Current AI systems, on the other hand, need countless training examples to acquire this capacity for abstraction, if it can be called that in AI. For Chollet, however, abstraction and logical thinking are essential building blocks of intelligence.

I know I am not the first, nor the last, to feel like this. pic.twitter.com/3hWbtsT8rQ

- François Chollet (@fchollet) December 23, 2021

Chollet finds it frustrating that no progress has been made toward more general artificial intelligence in the last decade. "When I started looking at this question, I expected that by now we would have at least the beginnings of an answer. We have nothing," he says.

The path to general AI is also a matter of perspective

Chollet says that his observation is not intended to diminish the progress of AI research, specifically the deep-learning successes of the past decade. These are admirable and "transformative in many ways," he says. They just have no relevance to the development of general AI.

In doing so, Chollet is also taking a swipe at his colleagues at Deepmind, some of whom believe that their research is on the right path to general AI and place great hopes in it, for example in the fight against climate change.

Chollet's statement also dismisses the relevance to general AI of recent advances in multimodal AI, i.e., artificial intelligence that is trained on different types of data such as text and images and can therefore be used more flexibly.

The last two years have also seen a particular focus on large-scale AI models. On the one hand, these models show that training on more data usually leads to better AI performance. On the other hand, they show that smaller, more specialized AI models can be derived from large, pre-trained AI models for new applications with relatively little effort.

Again, depending on one's perspective, this finding could be seen as progress towards more general AI: a large AI model that can cover many use cases with fine-tuning. Chollet obviously sees it differently.