Google announces new AI integrations and products at Google I/O. An overview.

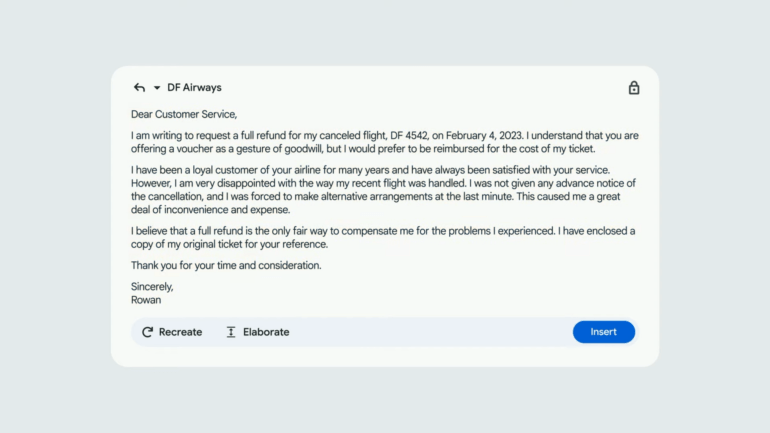

Gmail "Help me write"

As part of its Workspace updates, Google is integrating generative text AI into Gmail that can draft detailed replies. Google demonstrated this with a refund request to an airline: the AI writes an elaborate, detailed email designed to increase the chances of getting a full refund.

Immersive View Routes for Google Maps

Last year, Google introduced Immersive View, an AI-generated 3D view of famous landmarks around the world. Google is now extending this view to routes, showing 3D previews of routes through cities. The feature is expected to be available by the end of the year in Amsterdam, Berlin, Dublin, Florence, Las Vegas, London, Los Angeles, New York, Miami, Paris, Seattle, San Francisco, San Jose, Tokyo, and Venice.

Magic Editor for Google Photos

Magic Editor is a generative image AI for your photos. It lets you move elements around in an image and automatically fills in the missing parts. Google demonstrated this with a photo of a child holding balloons that are cut off at the edge of the frame. When the child is dragged toward the center, the missing parts of the balloons are filled in automatically. The new editor will be released for select Pixel smartphones later this year, and Google acknowledges that it probably won't always deliver the expected results.

Bard gets PaLM 2 support and new features

Google has announced enhancements to its Bard chatbot, including features for images, coding, and app integration, as well as expanded global access. Bard will transition to PaLM 2, a large language model with improved mathematical, logical, and programming capabilities. According to Google, PaLM 2 is significantly better than the original PaLM model announced in April 2022.

The AI tool, which was previously only available in the U.S. and U.K., will now be offered in more than 180 countries and territories. Support for Japanese and Korean has been added, and 40 languages will soon be supported.

Bard will soon be able to include images in its answers and accept images in prompts. This is made possible by integrating Google Lens into Bard, which lets users combine images with text in their prompts.

New coding and export features include more precise source citations for code, a dark theme, and an "Export" button that lets developers export code and run it in Replit, starting with Python. Users can also export Bard's responses directly to Gmail and Docs to create emails and documents.

Future plans for Bard include integrations with Google apps and services such as Docs, Drive, Gmail, and Maps, as well as third-party services from across the web, including Adobe's generative AI model Adobe Firefly and other partners such as Kayak, OpenTable, ZipRecruiter, Instacart, Wolfram, and Khan Academy.

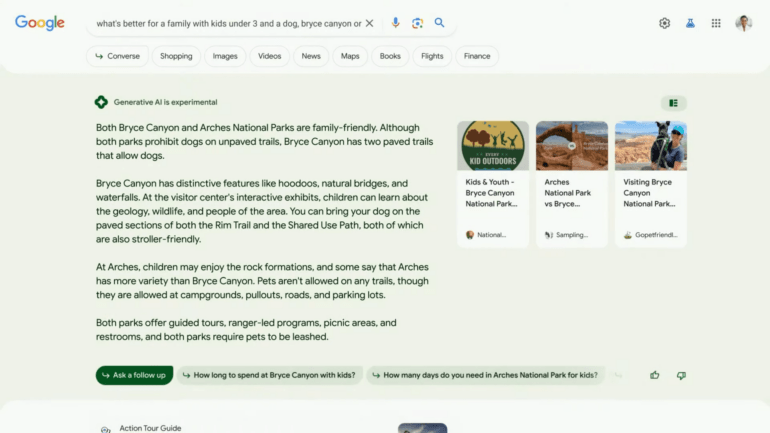

"AI Snapshot": AI Answers in Google Search

As previously announced, Google is integrating AI-generated answers directly into Google Search. These experimental answers will appear above the traditional search results. The new search interface supports follow-up questions and chatbot-style conversations based on the AI suggestions, and includes links to publishers, businesses, and social media.

"We know that people value other people's opinions," said Cathy Edwards, Google's head of search. However, the new AI search view takes up a lot of screen real estate and is likely to impact traffic for publishers.

Generative AI capabilities are also being extended to shopping searches. The AI provides a snapshot of products, including notable factors to consider, relevant ratings and reviews, prices, and product images, all based on Google's Shopping Graph of more than 35 billion product listings.

AI answers are only shown when Google's algorithm deems them appropriate. For now, the system will avoid sensitive topics such as health and finance.

Users can access AI snapshots by joining the Search Generative Experience program, which is part of the new Search Labs feature. Search Labs is available now, and access to the program should follow in the coming weeks.

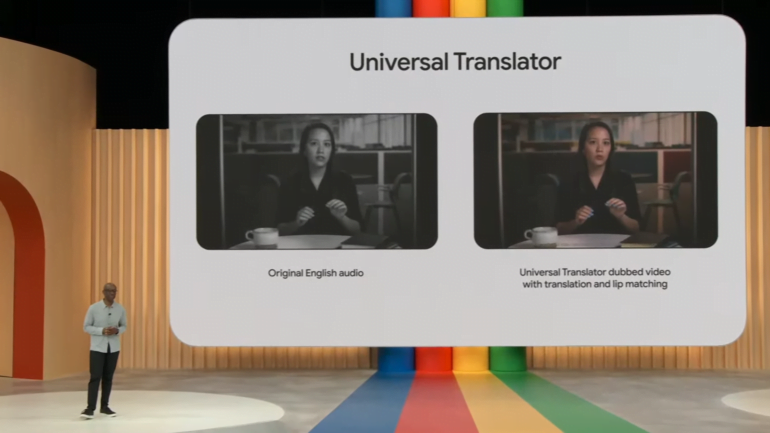

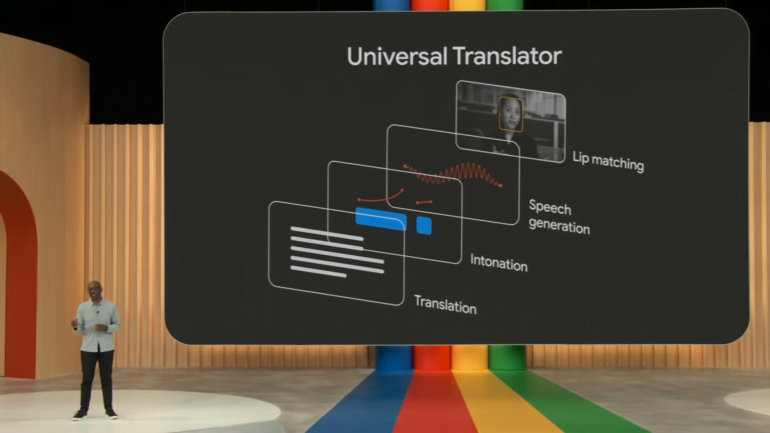

Universal Translator

According to Google, Universal Translator is an audiovisual translation tool based on its latest AI translation models. It translates the speech in a video into another language, outputs the translation as a voice, and synchronizes the speaker's lip movements to match. Due to the risk of deepfakes, Google is initially making the tool available only to select partners.

Generative AI for Android: Messages and Wallpapers

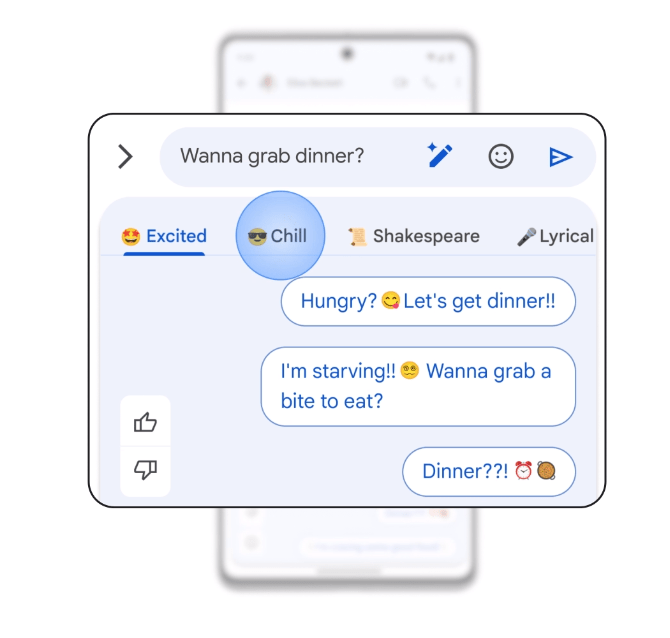

For Android, Google has announced "Magic Compose", a kind of elaborate autocomplete for messages. The generative AI uses the context of the conversation and is also supposed to write in different styles, such as businesslike, Shakespearean, or particularly chill.

Emoji Wallpaper lets users customize their device's background with their favorite emoji combinations, patterns, and colors. Cinematic Wallpaper uses on-device AI to turn user-selected photos into moving images that come to life when the device is unlocked or tilted.

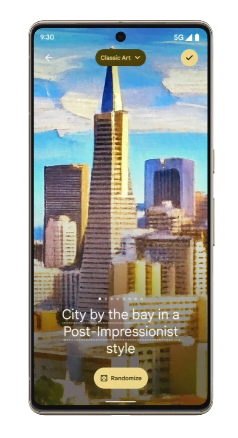

Also new is the Generative AI Wallpaper feature. Users describe their creative vision, and the phone generates unique wallpapers using Google's text-to-image diffusion model. The prompts are pre-structured, so you can create good-looking wallpapers without having to hire a prompt engineer.

These new wallpapers will be available next month, starting with Pixel devices. They are based on Google's Material You design framework and automatically adjust the device's color palette to match the selected wallpaper.

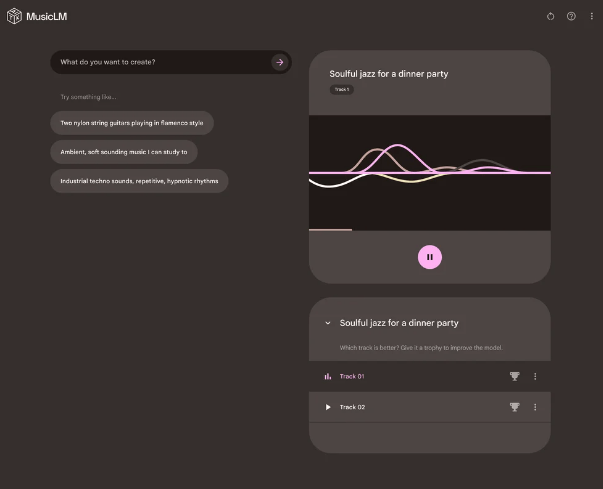

MusicLM launches

In January, Google announced the MusicLM text-to-music model, which is now rolling out in AI Test Kitchen on the web, Android, and iOS. In response to a text description of a particular musical style, MusicLM generates two tracks, and users can mark the one they prefer to help improve the model.