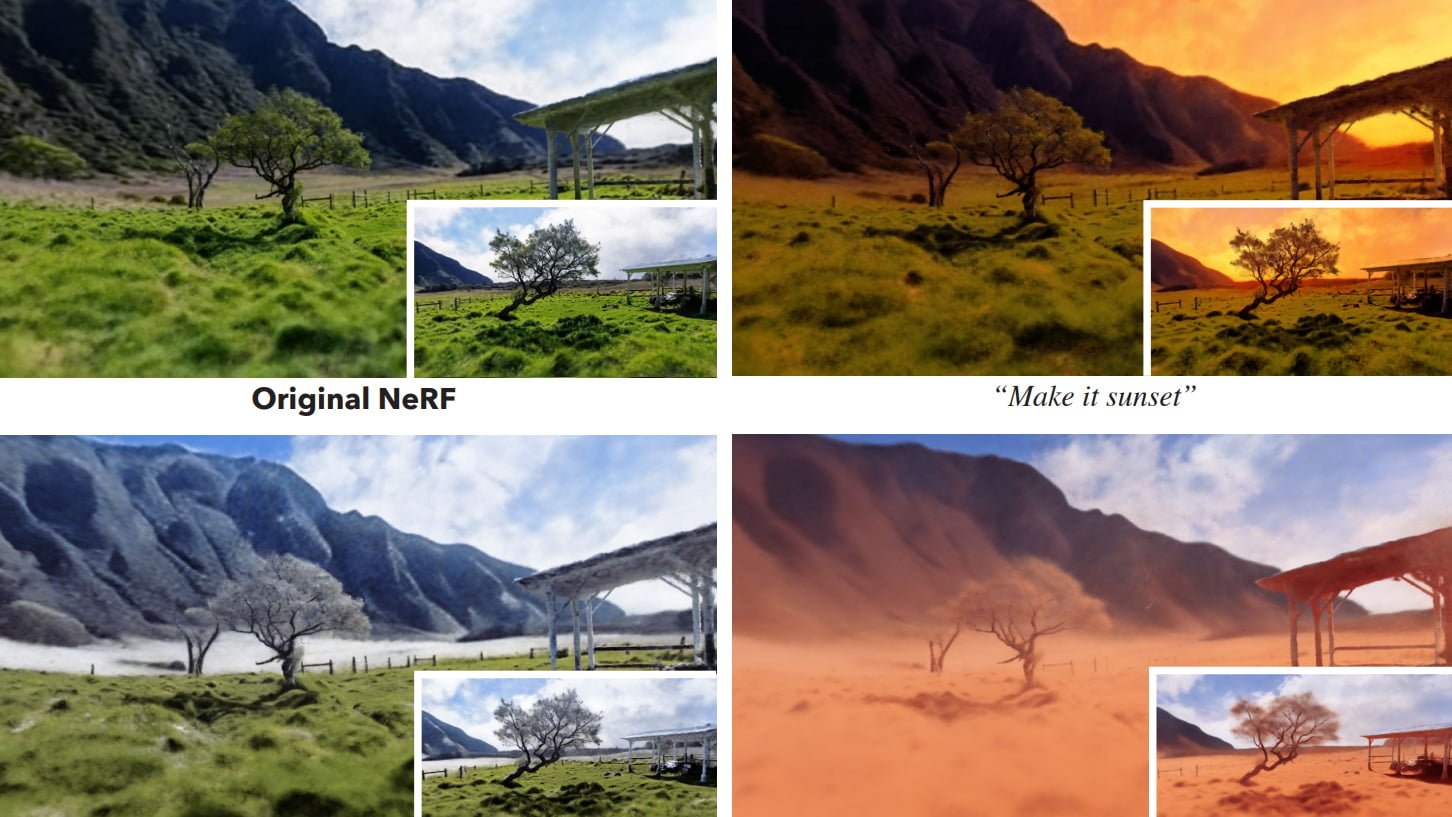

Instruct-NeRF2NeRF applies generative AI techniques to edit 3D scenes based on text instructions.

Earlier this year, researchers at the University of California, Berkeley demonstrated InstructPix2Pix, a method that allows users to edit images in Stable Diffusion using text instructions. The method makes it possible, for example, to replace objects in an image or change its style.

Now some of the researchers have applied their method to editing NeRFs. Starting with a trained NeRF and the images used for training, Instruct-NeRF2NeRF can edit the training images one by one according to a text prompt and re-train the NeRF with these edited images.

Instruct-NeRF2NeRF supports simple objects and real scenes

The team shows how the method can, for example, give a head a cowboy hat or turn it into a 3D oil painting, Batman, or Albert Einstein.

Video: Haque et al.

Other examples include changing the season, time of day, or weather of a nature scene, or changing a person's clothes.

Video: Haque et al.

Video: Haque et al.

According to the team, the method is "able to edit large-scale, real-world scenes, and is able to accomplish more realistic, targeted edits than prior work."

Instruct-NeRF2NeRF updates the NeRF using the iteratively edited training images. The image edits themselves are performed by InstructPix2Pix, which is conditioned on the text input.
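The loop of editing one training image at a time and then continuing to train can be pictured with a toy sketch. This is not the authors' code: `ToyScene`, `toy_edit`, and `iterative_dataset_update` are hypothetical names, images are plain lists of numbers, and the "editor" is a simple brightening function standing in for InstructPix2Pix. Rendering the current scene before each edit and passing the original image to the editor are assumptions of this sketch, not details stated in this article.

```python
from typing import Callable, List

Image = List[float]  # toy stand-in for an image: a flat list of pixel values


class ToyScene:
    """Hypothetical stand-in for a NeRF: stores one estimate per training view."""

    def __init__(self, images: List[Image]) -> None:
        self.views = [list(img) for img in images]

    def render(self, idx: int) -> Image:
        # A real NeRF would render from the camera pose of training view idx.
        return list(self.views[idx])

    def train_step(self, dataset: List[Image], lr: float = 0.5) -> None:
        # Move every stored view a fraction of the way toward the dataset image.
        for view, target in zip(self.views, dataset):
            for i, t in enumerate(target):
                view[i] += lr * (t - view[i])


def toy_edit(rendered: Image, original: Image, prompt: str) -> Image:
    # Hypothetical editor: ignores the prompt and brightens the image.
    # A real pipeline would call a text-conditioned image editing model here.
    return [min(1.0, 0.5 * r + 0.5 * o + 0.2) for r, o in zip(rendered, original)]


def iterative_dataset_update(
    dataset: List[Image],
    render: Callable[[int], Image],
    edit: Callable[[Image, Image, str], Image],
    train_step: Callable[[List[Image]], None],
    prompt: str,
    num_rounds: int,
    steps_per_edit: int,
) -> List[Image]:
    originals = [list(img) for img in dataset]  # kept unchanged for the editor
    working = [list(img) for img in dataset]    # images gradually replaced by edits
    for round_idx in range(num_rounds):
        idx = round_idx % len(working)          # visit the training images one by one
        working[idx] = edit(render(idx), originals[idx], prompt)
        for _ in range(steps_per_edit):         # continue training on the mixed dataset
            train_step(working)
    return working


if __name__ == "__main__":
    images = [[0.0, 0.0], [0.2, 0.2]]
    scene = ToyScene(images)
    edited = iterative_dataset_update(
        images, scene.render, toy_edit, scene.train_step,
        prompt="make it brighter", num_rounds=4, steps_per_edit=2,
    )
    print(edited)
```

Because only one image changes per round, the scene is always trained on a mix of older and newer edits, so it drifts toward the edited look gradually instead of being retrained from scratch.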

Instruct-NeRF2NeRF requires between 10 and 15 gigabytes of video memory

The team is releasing three different versions of Instruct-NeRF2NeRF that require between 10 and 15 gigabytes of video memory.

The largest version gives the best results. As limitations, the researchers cite the inability to perform "large spatial manipulations" and the appearance of artifacts such as double faces.

More examples, code, and models are available on the Instruct-NeRF2NeRF project page. To learn how to create your own NeRFs, check out our no-code tutorial for Instant-NGP. We also show you how to view NeRFs in VR.