Researchers introduce ORBIT-Surgical, a simulation environment that can be used to train robots for surgical tasks.

ORBIT-Surgical is a new open-source framework for simulating surgical robots, developed by scientists from the University of Toronto, UC Berkeley, ETH Zurich, Georgia Tech, and Nvidia. It aims to facilitate and accelerate machine learning research for robot-assisted surgery. The framework uses Nvidia's robotics simulation platform Isaac Sim for GPU-accelerated physics and the 3D development platform Omniverse for ray-traced rendering.

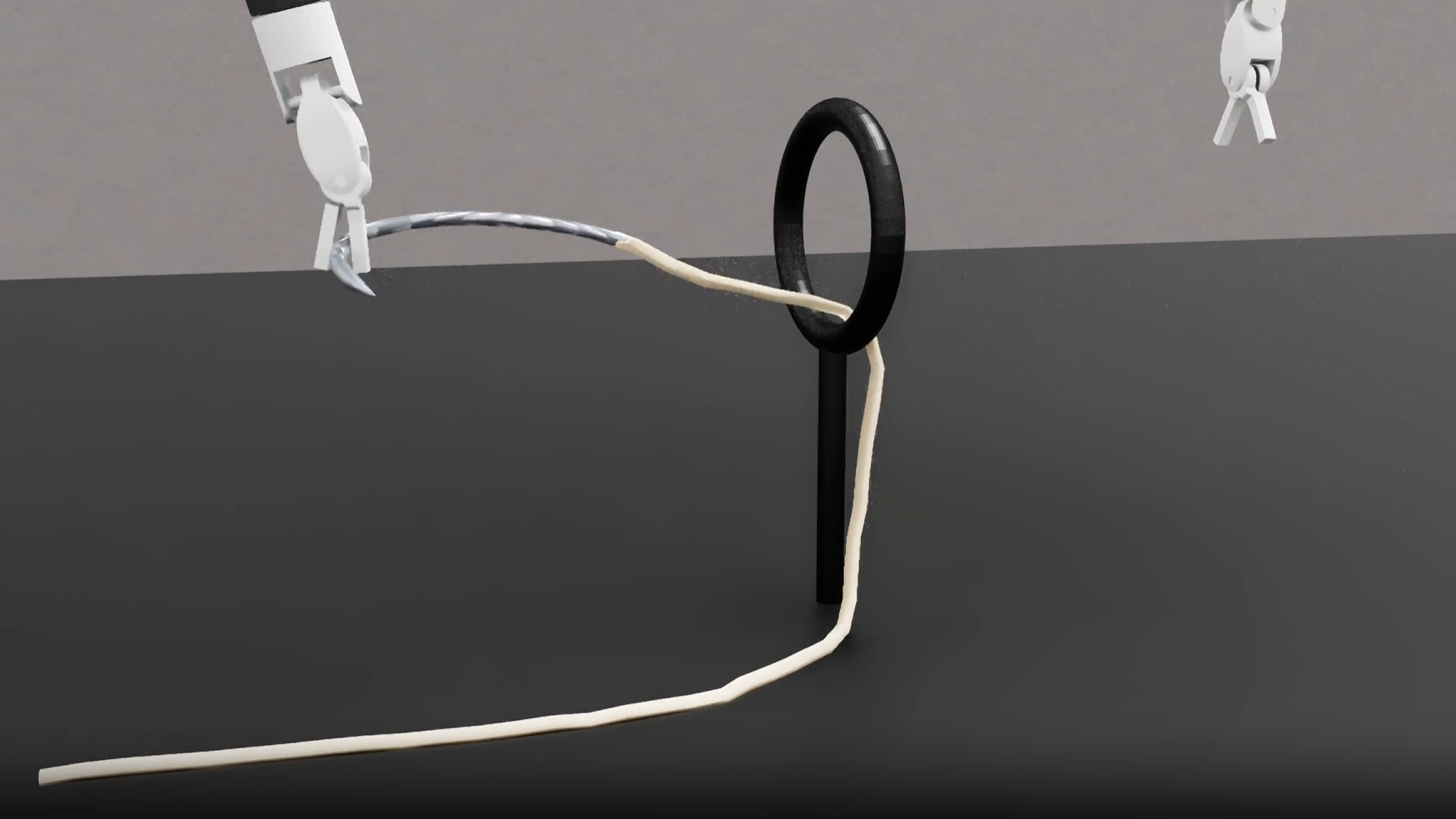

The simulation environment includes detailed models of two surgical robot platforms: the da Vinci Research Kit (dVRK) and the Smart Tissue Autonomous Robot (STAR). In addition, ORBIT-Surgical offers 14 benchmark tasks that represent basic surgical skills. These include simple movements as well as interaction with rigid and deformable objects such as needles and tubes.

Through GPU parallelization, up to 8,000 simulations can be run simultaneously on a single graphics card. This allows reinforcement learning algorithms to be trained within a few hours. Conventional CPU-based simulators need days or weeks for the same training, the team says.
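The idea behind this kind of parallelization can be illustrated with a toy batched environment, in which a single `step` call advances every simulation instance at once. The sketch below is plain Python and purely illustrative: the class `BatchedReachEnv`, its 1D dynamics, and its reward are hypothetical and are not part of ORBIT-Surgical's API. In a GPU-based simulator, the per-environment lists would presumably be device tensors updated in parallel.

```python
class BatchedReachEnv:
    """Toy batch of independent 1D reaching tasks (hypothetical, not ORBIT-Surgical's API)."""

    def __init__(self, num_envs, target=1.0):
        self.num_envs = num_envs
        self.target = target
        self.positions = [0.0] * num_envs  # one state entry per simulation instance

    def step(self, actions):
        """Advance all environments by one step; returns (observations, rewards)."""
        if len(actions) != self.num_envs:
            raise ValueError("expected one action per environment")
        # A GPU simulator would apply this update to all instances in parallel.
        self.positions = [p + a for p, a in zip(self.positions, actions)]
        # Reward is the negative distance to the target, computed per environment.
        rewards = [-abs(self.target - p) for p in self.positions]
        return list(self.positions), rewards


env = BatchedReachEnv(num_envs=8000)
observations, rewards = env.step([0.1] * env.num_envs)
print(len(observations), rewards[0])
```

Because the learning algorithm receives one observation and one reward per instance on every call, the amount of experience collected per step grows with the number of parallel environments.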

Moreover, ORBIT-Surgical supports various input devices such as VR controllers and the control unit of the dVRK system. This allows human experts to control the simulated robots in real time. The recorded movements can then be used to train imitation learning algorithms.
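As a rough illustration of how recorded demonstrations can feed imitation learning, the following sketch uses behavior cloning, one common imitation learning technique: it stores observation-action pairs from an expert and fits a policy that reproduces them. Everything here is an assumption made for illustration, including the function names, the scripted `expert` standing in for a human teleoperator, and the one-dimensional linear policy. It does not reflect the methods or code used by the ORBIT-Surgical team.

```python
def record_demonstrations(expert_policy, observations):
    """Pair each observation with the action the expert chose for it."""
    return [(obs, expert_policy(obs)) for obs in observations]


def fit_linear_policy(demos):
    """Least-squares fit of action = gain * observation (no bias term)."""
    num = sum(obs * act for obs, act in demos)
    den = sum(obs * obs for obs, _ in demos)
    if den == 0:
        raise ValueError("demonstrations need at least one nonzero observation")
    return num / den


# Hypothetical stand-in for a human teleoperator:
# moves proportionally against the current position error.
def expert(position_error):
    return -0.5 * position_error


demos = record_demonstrations(expert, [-2.0, -1.0, 0.5, 1.5, 3.0])
gain = fit_linear_policy(demos)


def cloned_policy(position_error):
    """Policy learned from the demonstrations alone."""
    return gain * position_error


print(gain, cloned_policy(2.0))
```

In practice the observations would be robot states or camera images and the policy a neural network, but the structure stays the same: collect expert data, then fit a model that maps observations to the expert's actions.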

Photorealistic simulation enables synthetic data for further training

Another advantage of the platform is the ability to generate photorealistic synthetic images. In one experiment, combining such data with real images more than doubled the performance of a model for segmenting surgical needles, the researchers report.

The researchers also demonstrated the transfer of motion sequences and trained reinforcement learning models from simulation to a real dVRK robot. However, the success rate of only 50 percent still leaves room for improvement. Among other things, the team attributes this to the fact that some stretching effects are not yet taken into account.

Future versions of ORBIT-Surgical are also expected to support the simulation of incisions in soft tissue and algorithms for more complex tasks such as suturing. With the platform, the researchers want to advance learning-based robot-assisted surgery. The goal is to develop systems that assist surgeons and ease their workload during challenging procedures, they say.

ORBIT-Surgical is now available on GitHub.