Startup Perplexity.ai, known for its AI search engine, is making its online language models available through an API.

Standard LLMs struggle to provide up-to-date information and often give incorrect answers. Perplexity's online LLMs, pplx-7b-online and pplx-70b-online, aim to solve this problem by using knowledge from the Internet to provide more accurate and up-to-date answers.

This allows the models to answer time-sensitive questions that offline models struggle with, such as sports scores from the night before. Perplexity's LLM-powered online search offers the same experience in a web interface.

Perplexity models are not your standard LLM

Perplexity's online LLMs are based on the open-source mistral-7b and llama2-70b models. The company uses its own search, indexing and crawling infrastructure to feed the LLMs with the most relevant and up-to-date information.

The search index prioritizes high-quality websites that are not optimized for search engines and provides snippets of web pages for better answers, Perplexity writes.
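To illustrate the general idea of feeding web snippets to a model, here is a minimal sketch of how retrieved snippets might be placed in front of a question. It is not Perplexity's implementation: the `Snippet` structure, the `score` field, the `build_prompt` helper, and the prompt wording are all assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Snippet:
    """A short excerpt from a crawled web page (hypothetical structure)."""
    url: str
    text: str
    score: float  # relevance score from a hypothetical search index


def build_prompt(question: str, snippets: list[Snippet], top_k: int = 3) -> str:
    """Place the top_k highest-scoring snippets in front of the question."""
    best = sorted(snippets, key=lambda s: s.score, reverse=True)[:top_k]
    context = "\n".join(
        f"[{i}] {s.text} (source: {s.url})" for i, s in enumerate(best, start=1)
    )
    return (
        "Answer the question using only the numbered snippets below.\n\n"
        f"{context}\n\n"
        f"Question: {question}"
    )
```

The resulting string would then be sent to the language model, so the answer can draw on text that is newer than the model's training data.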

In addition, the models are fine-tuned using large, high-quality and diverse training datasets compiled by Perplexity's in-house data engineers.

This optimization process is designed to ensure that the models perform well in terms of helpfulness, factual accuracy, and freshness.

Perplexity outperforms GPT-3.5 and Llama 2

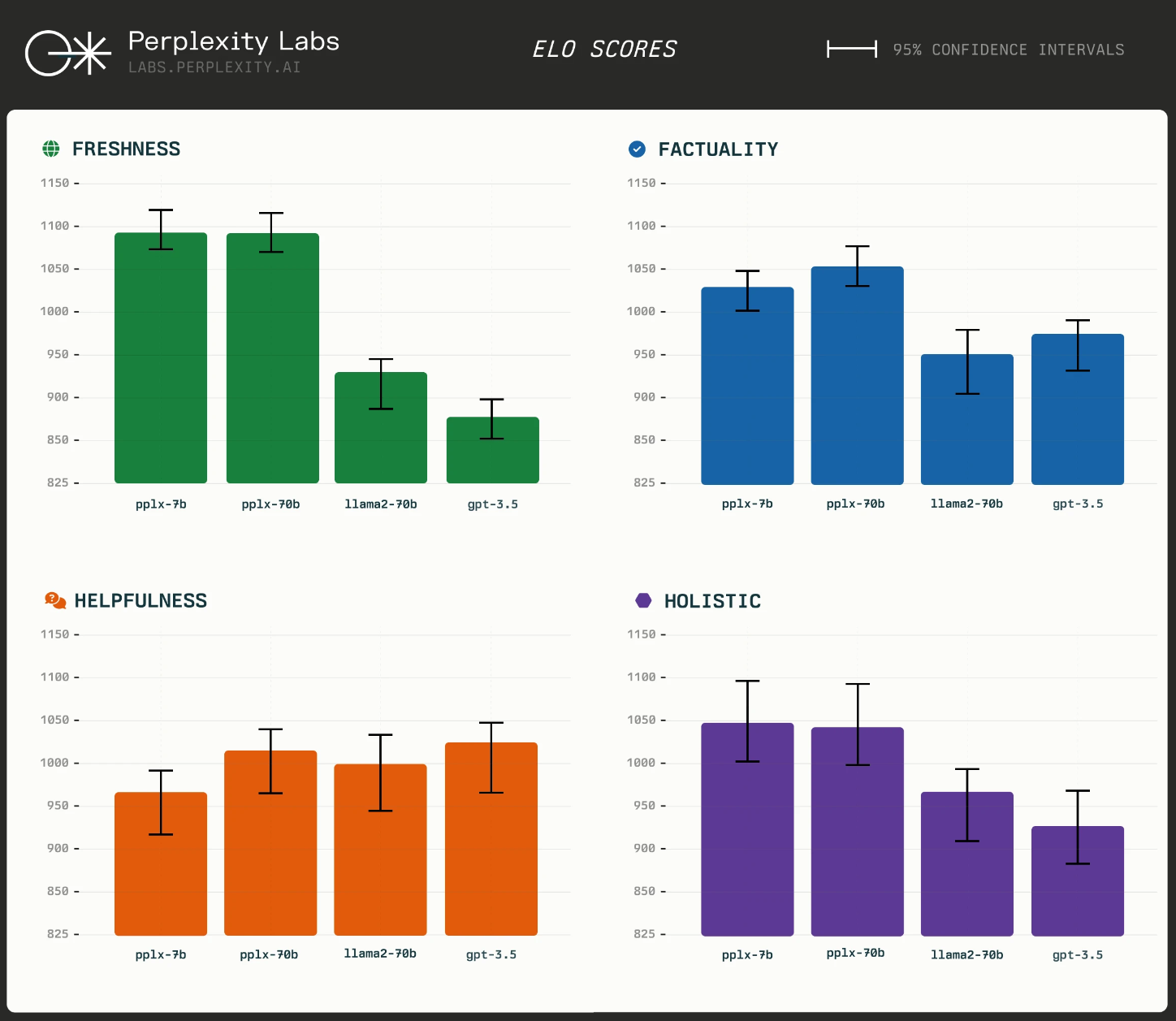

Perplexity benchmarked its online LLMs against OpenAI's GPT-3.5 and Meta's Llama2-70b. The evaluation is based on three criteria: helpfulness, factuality, and freshness.

Human testers were asked to select their favorite response for each attribute from the pre-generated responses and provide an overall rating of the responses. The testers received the prompt and the different responses from the models in random order.
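As a rough illustration of how such preference votes could be summarized, the sketch below tallies the share of votes each model receives per criterion. The `preference_shares` helper, the model names, and the votes are invented for this example; they are not Perplexity's data or scoring method.

```python
from collections import Counter, defaultdict


def preference_shares(votes):
    """Return {criterion: {model: share of votes}} from (criterion, preferred_model) pairs."""
    counts = defaultdict(Counter)
    for criterion, model in votes:
        counts[criterion][model] += 1
    return {
        criterion: {model: n / sum(tally.values()) for model, n in tally.items()}
        for criterion, tally in counts.items()
    }


# Invented toy votes for illustration only, NOT results from the evaluation.
votes = [
    ("freshness", "model_a"),
    ("freshness", "model_a"),
    ("freshness", "model_b"),
    ("factuality", "model_b"),
]
shares = preference_shares(votes)
print(shares["freshness"])
```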

The results show that Perplexity's pplx-7b-online and pplx-70b-online outperform GPT-3.5 and Llama2-70b on all three individual criteria as well as in the overall rating. According to the company, human raters prefer them for their accuracy and freshness.

Perplexity models now available to all via API

Perplexity also announces the transition of its pplx API from beta to general release, making the pplx-7b-online and pplx-70b-online models available to all for the first time.
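For readers who want to see what a call might look like, here is a minimal sketch using only the Python standard library. It assumes the API exposes an OpenAI-style chat completions endpoint at the URL below and returns an OpenAI-style response body; the environment variable name `PPLX_API_KEY` and the helper names are made up for this example. Check the official documentation before relying on any of these details.

```python
import json
import os
import urllib.request

# Assumed endpoint (OpenAI-style chat completions route); verify against the docs.
API_URL = "https://api.perplexity.ai/chat/completions"


def build_request(question: str, model: str = "pplx-7b-online",
                  api_key: str = "") -> urllib.request.Request:
    """Build (but do not send) a chat completion request for an online model."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": question}],
    }
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(question: str, model: str = "pplx-7b-online") -> str:
    """Send the request and return the answer text.

    Assumes an OpenAI-style response body and an API key stored in the
    hypothetical PPLX_API_KEY environment variable.
    """
    request = build_request(question, model, os.environ["PPLX_API_KEY"])
    with urllib.request.urlopen(request, timeout=30) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

With a valid key set, `ask("Who won last night's game?", model="pplx-70b-online")` would send the question to the larger online model.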

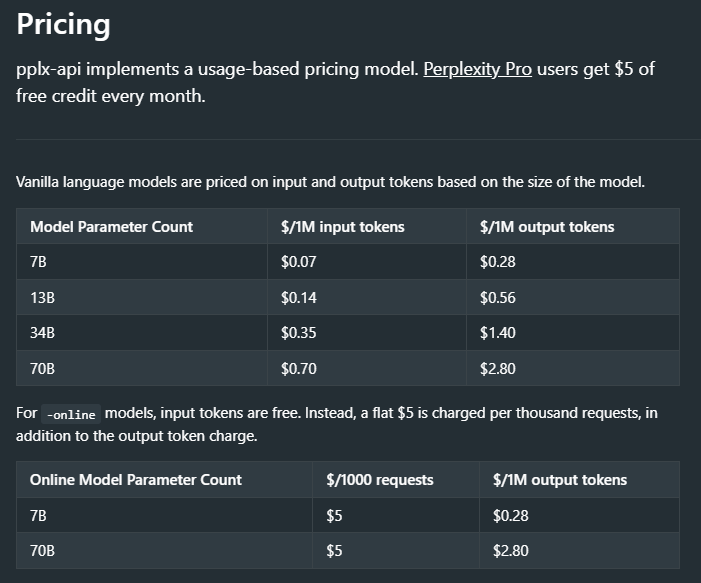

The company is also introducing a pay-per-use pricing structure. Pro users of Perplexity.ai receive a monthly pplx API credit of five US dollars; otherwise, API costs are usage-based.

You can try out the models for free at Perplexity Labs. Commercial users should contact Perplexity directly via email. More information about the API, including documentation and FAQs, is available on Perplexity's website.