Study compares ChatGPT's and Google's search performance and user experience

Chatbots like ChatGPT are already complementing and, for some, replacing the role of traditional search engines. A recent study tried to find out more about how they compare in terms of efficiency and quality.

A recent study compares the search performance and user experience of ChatGPT and Google search. The study, conducted by researchers from the US and Hong Kong, shows that ChatGPT users spend less time on tasks and rate the quality of information they receive higher. The study also points out ChatGPT's weaknesses in fact-checking tasks.

Although the study meets scientific standards, its validity is limited by the relatively small number of participants (95). Participants were randomly assigned to one of two groups, ChatGPT or Google, completed three tasks with their assigned tool, and then filled out a questionnaire on ease of use, usefulness, enjoyment, and satisfaction with the tool. The survey was conducted entirely online.

- In Task 1, participants were asked to find the name and age of the first woman in space.

- In Task 2, participants were asked to list five URLs that could be used to book a flight between Phoenix and Cincinnati in the United States.

- In Task 3, participants were asked to read an excerpt from a news article and check three highlighted statements.

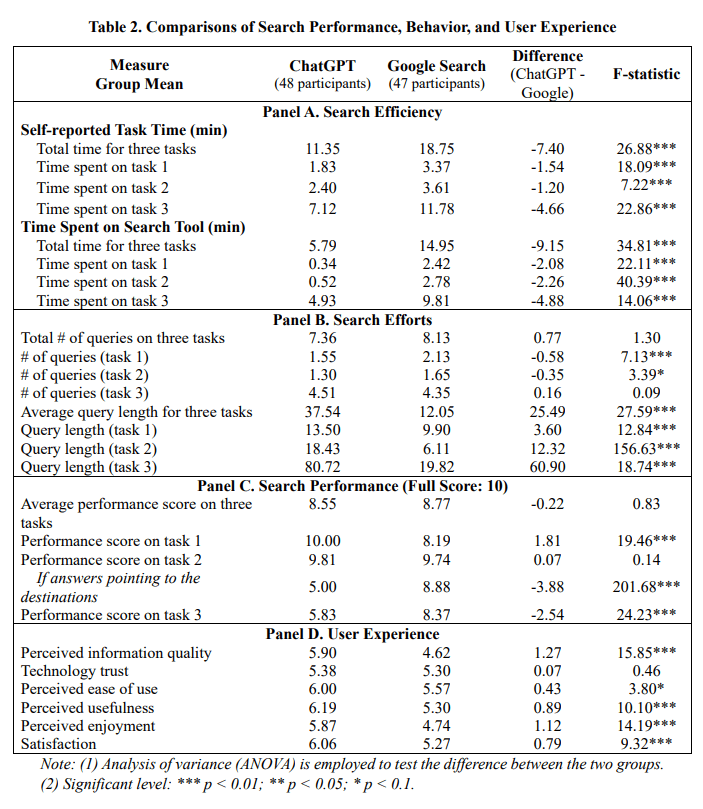

The ChatGPT group took an average of 11:21 minutes to complete the three tasks, while the Google group took significantly longer at 18:45 minutes. Participants self-reported their time per task.
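To put the two averages in proportion, here is a minimal sketch of the arithmetic. It assumes the reported times are in minutes:seconds format; the helper name `to_seconds` is purely illustrative and not taken from the study.

```python
def to_seconds(mmss: str) -> int:
    """Convert a 'minutes:seconds' string into total seconds."""
    minutes, seconds = mmss.split(":")
    return int(minutes) * 60 + int(seconds)


# Average completion times reported in the article
# (assumption: the format is minutes:seconds).
chatgpt_s = to_seconds("11:21")
google_s = to_seconds("18:45")

# Absolute and relative time saved by the ChatGPT group.
saved_s = google_s - chatgpt_s
relative_saving = saved_s / google_s

print(f"Time saved: {saved_s // 60}:{saved_s % 60:02d} minutes")
print(f"Relative saving: {relative_saving:.1%}")
```

Under that assumption, the ChatGPT group needed roughly 40 percent less time than the Google group.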

The researchers attribute this difference to the fact that Google Search required users to formulate their queries multiple times. It was a trial-and-error process to get the results. ChatGPT, on the other hand, allows users to ask a question in natural language. The summarized answer eliminates the need for further reading.

However, participants in both groups needed a similar number of inputs for the three tasks, although the inputs were longer for ChatGPT. ChatGPT had the largest speed advantage in the first task (name and age of the first woman in space), which involved finding specific information.

In terms of search performance, i.e., the correctness of the answers, the researchers awarded up to ten points per task. Here, ChatGPT (8.55) and Google (8.77) are close together, and the difference is statistically negligible. But this also means that Google users take significantly longer to achieve similar quality.

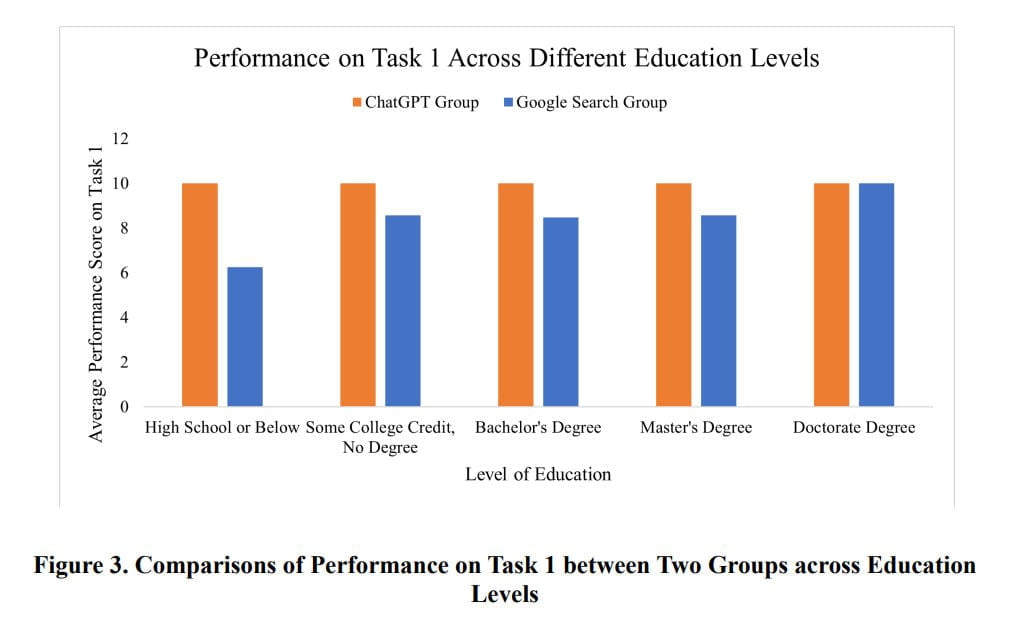

There were sometimes significant differences from task to task. For example, the researchers found it noteworthy that in Task 1, all participants in the ChatGPT group scored full points, indicating that ChatGPT is very effective at finding facts. Google users made several mistakes here, with an average score of only 8.19.

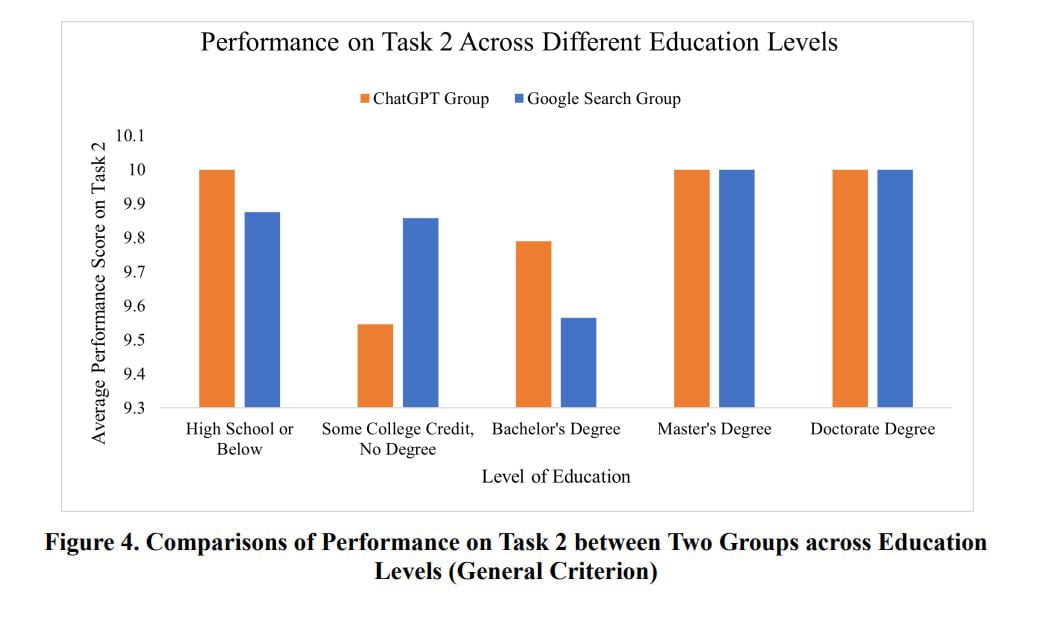

For the second task (flight booking sites), both groups scored close to the maximum. Google seemed to be slightly more helpful, directing users to pages for flights between Cincinnati and Phoenix, while ChatGPT only directed the group to general booking pages.

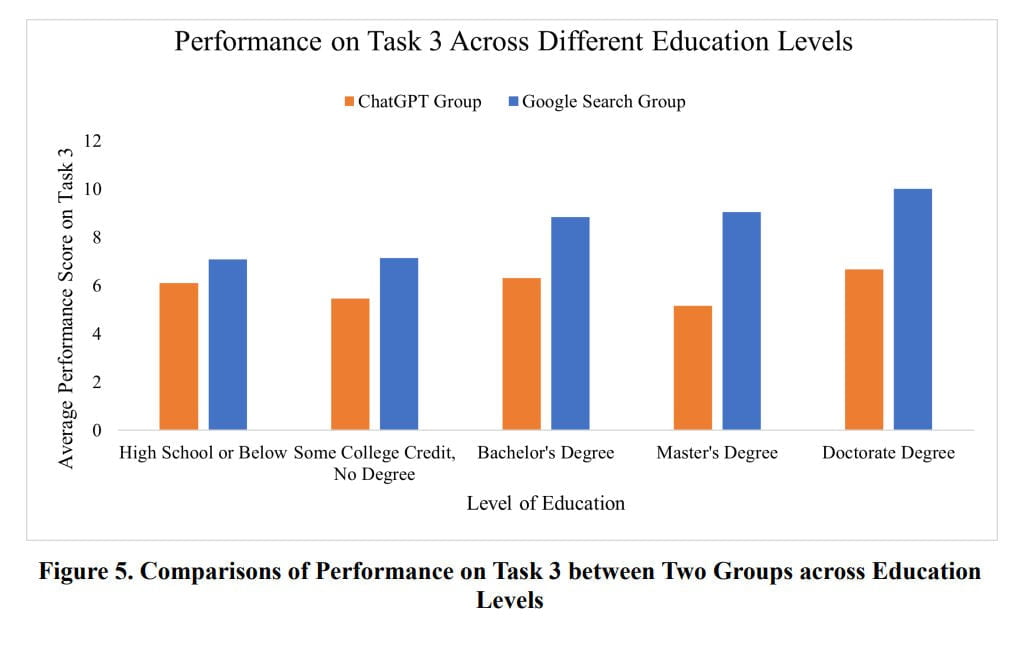

In contrast, participants' performance on Task 3 (fact-checking a news story) was significantly worse in the ChatGPT group (5.83) than in the Google search group (8.37). The wording of the ChatGPT prompt made a difference: when asked to judge the truthfulness of a statement, ChatGPT answered incorrectly. However, its answer was correct when it was asked specifically about the accuracy of the information itself.

Because the sample size is so small, this result is hardly representative. The researchers suggest that user confidence in ChatGPT may be the real problem: "Participants often demonstrate a lack of diligence when using ChatGPT and are less motivated to further verify and rectify any misinformation in its responses. According to our observations, 70.8% of the participants in the ChatGPT group demonstrate an overreliance on ChatGPT responses by responding with 'True' for the first statement."

ChatGPT has an edge in quality, trust in both tools is equal

The ChatGPT group rated the quality of answers higher than the Google search group (5.90 vs. 4.62). This is likely because ChatGPT provides more accessible information in complete statements. The level of trust in both tools is essentially the same.

In terms of educational background, the researchers found no differences among ChatGPT participants, but users with higher education showed more competence in using Google.

Participants tend to accept the responses as provided and exhibit a lack of inclination to question the information sources from both tools. While participants display a similar level of trust in using both tools, Google Search users may need to exert more effort and spend additional time browsing webpages to locate relevant information. Therefore, their perceived information quality is lower.

In contrast, ChatGPT’s convenience may discourage participants from further exploring and verifying information in its responses, resulting in subpar performance in fact-checking tasks. In addition, participants in the ChatGPT group find it to be more useful and enjoyable and express greater satisfaction with the tool compared to those in the Google Search group.

Perceived ease of use is relatively higher in the ChatGPT group than in the Google Search group, but the difference is not significant at the 5% level. This may be attributed to people’s existing familiarity with Google, and the tasks in our experiments may not pose a significant challenge for them.

From the paper

Google's Search Generative Experience might offer the best of both worlds

The results of the study are not surprising. When it comes to specific information (Task 1), ChatGPT summarizes it more compactly. This is faster than opening individual pages.

On the other hand, for real-time services such as booking a flight (Task 2), Google provides more precise results via deep links to specific offers.

OpenAI repeatedly emphasizes that users should not rely on ChatGPT's fact-checking, and the results of Task 3 seem to support this thesis. However, only one case was checked, and it was also prompt-sensitive, so the result is only anecdotal and not at all representative.

Large language models are being discussed as a possible alternative to traditional web search. With its AI-based search prototype Search Generative Experience, Google is currently demonstrating that generative AI can solve some search tasks better than linking to other pages on the web.

Google is already combining the advantages of chat search (direct, individual answers to questions and queries) and classic search (real-time integration of services into the AI answers, up-to-date information). OpenAI is trying to provide a similar service with ChatGPT plugins but is still lagging in terms of technical implementation and overall user experience.
