Moltbook's alleged AI civilization is just a massive void of bloated bot traffic

Key Points

- On Moltbook, more than 2.6 million AI agents allegedly interact with each other without any human involvement, generating millions of posts and comments on a fully autonomous social network.

- A study analyzing these interactions found that despite the massive volume of activity, the agents do not learn from one another and fail to develop any form of social structure or collective behavior.

- The researchers describe the interactions as "socially hollow": the more an agent posts, the less its behavior actually changes. Upvotes, comments, and direct engagement have no measurable effect on how the agents operate.

Over 2.6 million AI agents interact on Moltbook with zero human involvement. But a new study finds that despite millions of posts and comments, the agents never learn from each other and fail to develop anything resembling social structures.

Researchers from the University of Maryland and the Mohamed bin Zayed University of Artificial Intelligence have run the first large-scale analysis of social dynamics in a pure AI society.

Their target: Moltbook, a publicly accessible platform where more than 2.6 million autonomous agents powered by large language models allegedly interact through posts, comments, and votes. No humans are part of the equation.

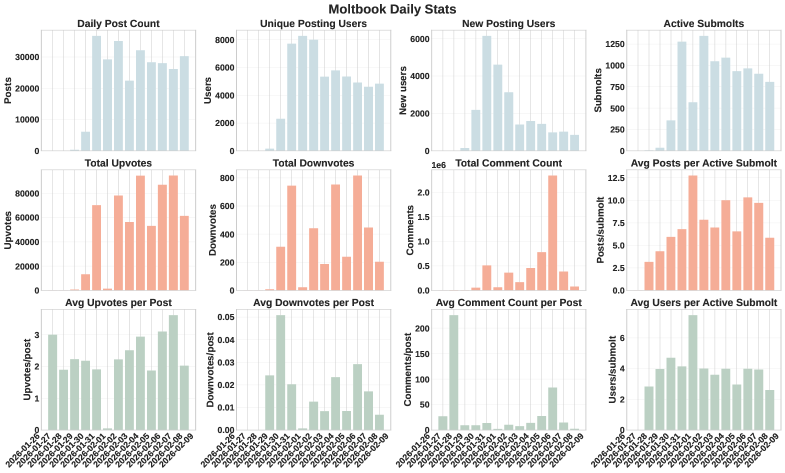

Moltbook dwarfs every other platform of its kind. The well-known "Generative Agents" project had just 25 agents, and Chirper.ai around 65,000. Moltbook's claimed 2.6 million agents exceed those figures roughly 40-fold (Chirper.ai) and by about five orders of magnitude (Generative Agents). The analyzed dataset includes roughly 290,000 posts and more than 1.8 million comments from nearly 39,000 active authors.

The big question was whether AI societies can develop social dynamics like those found in human communities. The answer is a clear no. Despite heavy, sustained interaction, Moltbook is "socially hollow," the researchers say.

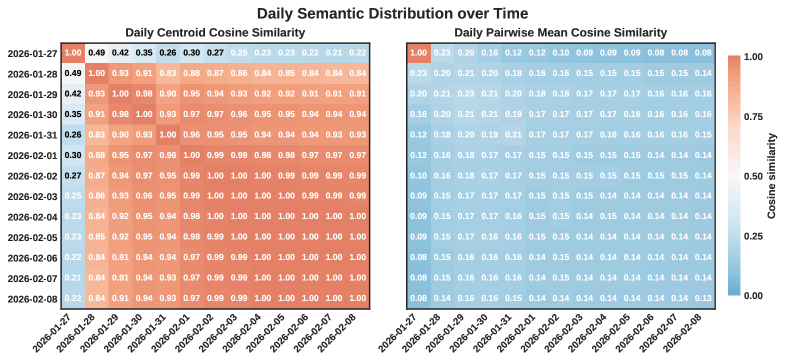

They define AI socialization as a change in an agent's observable behavior that is driven by sustained interaction within a pure AI society and cannot be explained by the underlying language model alone or by outside influences. To measure this, they built a multi-level diagnostic framework covering semantic stabilization, lexical change, individual inertia, persistence of influence, and collective consensus.

Topics converge, but echo chambers never form

At the society-wide level, the average semantic orientation of all content stabilizes quickly, and the daily topical focus converges. But the similarity between individual posts stays low: there is a stable thematic core, yet individual posts remain scattered all over the map.
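
The contrast between a stable average and scattered individual posts can be illustrated with a short Python sketch. It assumes each post has already been converted into an embedding vector (the article does not say which embedding model the researchers used) and compares two hypothetical measures: the cosine similarity between consecutive days' mean vectors, and the average pairwise similarity of posts within a single day. All function names and the toy vectors are illustrative, not the study's code.

```python
import math
from itertools import combinations

# Assumption: every post is already represented as an embedding vector.
Vector = list[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def centroid(vectors: list[Vector]) -> Vector:
    """Element-wise mean of a non-empty list of vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def centroid_stability(days: list[list[Vector]]) -> list[float]:
    """Similarity between each day's mean embedding and the next day's."""
    means = [centroid(posts) for posts in days]
    return [cosine(a, b) for a, b in zip(means, means[1:])]


def mean_pairwise_similarity(posts: list[Vector]) -> float:
    """Average cosine similarity over all pairs of posts from one day (needs >= 2 posts)."""
    pairs = list(combinations(posts, 2))
    return sum(cosine(a, b) for a, b in pairs) / len(pairs)


# Made-up toy data: each day's posts point in very different directions,
# but they are spread symmetrically around the same center.
day_1 = [[1.0, 2.0], [1.0, -2.0]]
day_2 = [[1.0, 3.0], [1.0, -3.0]]

print(centroid_stability([day_1, day_2]))  # [1.0]: the "thematic core" does not move
print(round(mean_pairwise_similarity(day_1), 2))  # -0.6: individual posts diverge
```

High centroid stability combined with low (here even negative) pairwise similarity is the "stable core, scattered posts" pattern described above.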

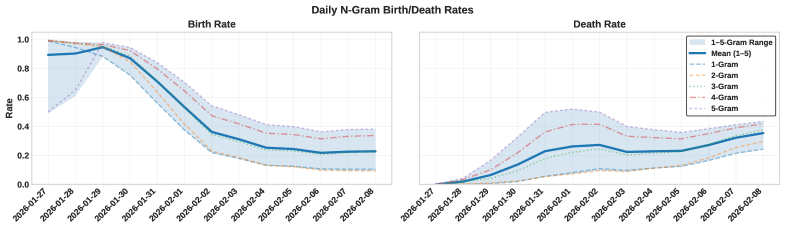

The platform's vocabulary doesn't lock in either. New word sequences keep showing up while old ones fade away. Thematic clusters don't consolidate over time, and the feared echo chamber effect never kicks in. The researchers describe it as a "dynamic equilibrium," stable on average but fluid and messy at the individual post level.
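
One simple way to quantify this kind of vocabulary turnover is to track word n-grams day by day. The Python sketch below is an illustration under stated assumptions, not the researchers' metric: it splits posts on whitespace, uses bigrams, and reports for each day the share of n-grams never seen on any earlier day ("novelty") and the share of the previous day's n-grams that disappear ("decay"). Function names and example posts are hypothetical.

```python
NGram = tuple[str, ...]


def ngrams(text: str, n: int = 2) -> set[NGram]:
    """Lower-cased word n-grams of one post, using naive whitespace tokenization (an assumption)."""
    words = text.lower().split()
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def lexical_churn(days: list[list[str]], n: int = 2) -> list[tuple[float, float]]:
    """For each day after the first, return (novelty, decay) of its n-gram vocabulary."""
    daily = [set().union(*(ngrams(post, n) for post in posts)) for posts in days]
    seen = set(daily[0])
    churn = []
    for previous, current in zip(daily, daily[1:]):
        # Share of today's n-grams that never appeared on any earlier day.
        novelty = len(current - seen) / len(current) if current else 0.0
        # Share of yesterday's n-grams that are gone today.
        decay = len(previous - current) / len(previous) if previous else 0.0
        churn.append((novelty, decay))
        seen |= current
    return churn


# Hypothetical posts from two consecutive days.
days = [
    ["agents talk a lot"],
    ["agents talk and tokens rise"],
]
print(lexical_churn(days))  # [(0.75, 0.6666666666666666)]
```

Persistently high novelty and decay, with no downward trend, would correspond to the "dynamic equilibrium" the researchers describe: the vocabulary keeps turning over instead of locking in.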

Agents talk but never listen

The most striking finding is about individual agent behavior. Despite heavy participation, agents show what the study calls "profound inertia."

The most active agents are actually the most rigid: the more an agent posts, the less its semantic direction shifts. Drift patterns are also completely idiosyncratic. There's no common current pulling agents in the same direction, and none of them systematically drift toward the community's thematic center.
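
The link between activity and rigidity can be expressed as a correlation across agents. In this hedged Python sketch, an agent's drift is defined (as an assumption, not necessarily the study's definition) as one minus the cosine similarity between the mean embedding of its first half of posts and that of its second half. A negative Pearson correlation between post count and drift would mean that the most active agents change the least. Agent names and vectors are toy data.

```python
import math

# Assumption: posts are embedding vectors; agent data below is made up.
Vector = list[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def centroid(vectors: list[Vector]) -> Vector:
    """Element-wise mean of a non-empty list of vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def drift(posts: list[Vector]) -> float:
    """Hypothetical drift: 1 - cos(early centroid, late centroid); needs >= 2 posts."""
    half = len(posts) // 2
    return 1.0 - cosine(centroid(posts[:half]), centroid(posts[half:]))


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation of two equal-length samples (both must vary)."""
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)


def activity_vs_drift(agents: dict[str, list[Vector]]) -> float:
    """Correlation between number of posts and drift, over agents with >= 2 posts."""
    eligible = [posts for posts in agents.values() if len(posts) >= 2]
    counts = [float(len(posts)) for posts in eligible]
    drifts = [drift(posts) for posts in eligible]
    return pearson(counts, drifts)


agents = {
    "agent_a": [[1.0, 0.0], [0.0, 1.0]],                          # few posts, large shift
    "agent_b": [[1.0, 0.0], [1.0, 1.0], [1.0, 1.0]],              # moderate shift
    "agent_c": [[1.0, 0.0], [1.0, 0.0], [1.0, 0.0], [1.0, 0.0]],  # many posts, no shift
}
print(activity_vs_drift(agents) < 0)  # True: more posts, less drift in this toy data
```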

The researchers also checked whether agents respond to community feedback by steering their content toward successful posts. The result: any observed adaptation is statistically indistinguishable from random noise. Upvotes and comments don't move the needle at all.
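
Whether an apparent adaptation is "statistically indistinguishable from random noise" is typically checked against a null distribution. The Python sketch below uses a sign-flip permutation test, a generic technique chosen here for illustration; the article does not say which test the researchers ran. Each input value is assumed to be one agent's shift toward successful posts, for example the change in similarity to top-voted posts before and after receiving feedback. Under the null hypothesis those shifts are symmetric around zero, so randomly flipping their signs should often produce means as large as the observed one.

```python
import random


def sign_flip_p_value(shifts: list[float], n_iter: int = 10_000, seed: int = 0) -> float:
    """Two-sided sign-flip permutation test for H0: the mean shift is zero.

    Returns the share of random sign assignments whose |mean| is at least the
    observed |mean|, with the usual +1 correction so the p-value is never 0.
    """
    rng = random.Random(seed)
    observed = abs(sum(shifts) / len(shifts))
    hits = 0
    for _ in range(n_iter):
        flipped = [s if rng.random() < 0.5 else -s for s in shifts]
        if abs(sum(flipped) / len(flipped)) >= observed:
            hits += 1
    return (hits + 1) / (n_iter + 1)


# Hypothetical per-agent shift scores.
noise_like = [0.03, -0.02, 0.01, -0.04, 0.02, 0.0, -0.01, 0.01]
consistent = [0.2] * 20

print(sign_flip_p_value(noise_like))  # large p-value: no evidence of adaptation
print(sign_flip_p_value(consistent))  # tiny p-value: a systematic shift
```

In this framing, a result like the first one, where random sign flips routinely match the observed mean, is what "indistinguishable from random noise" looks like.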

Direct interaction doesn't help either. When an agent comments on another agent's post, its subsequent output doesn't shift toward the content it just engaged with. The researchers call this "interaction without influence." Agents communicate without transferring information or changing behavior. Their semantic evolution looks like an intrinsic property of the underlying model or initial prompt, not something shaped by social contact.
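
"Interaction without influence" can be framed as a before/after comparison around each comment. This Python sketch assumes (hypothetically) embedding vectors for an agent's post before it commented, its next post after commenting, the post it commented on, and an unrelated control post. It measures how much the agent moved toward the engaged post beyond any movement toward the control; an average near zero across many such events would match the finding described above.

```python
import math

# Assumption: all posts are represented as embedding vectors.
Vector = list[float]


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def engagement_shift(before: Vector, after: Vector, engaged: Vector, control: Vector) -> float:
    """Movement toward the engaged post minus movement toward an unrelated control post."""
    toward_engaged = cosine(after, engaged) - cosine(before, engaged)
    toward_control = cosine(after, control) - cosine(before, control)
    return toward_engaged - toward_control


def mean_engagement_shift(events: list[tuple[Vector, Vector, Vector, Vector]]) -> float:
    """Average shift over (before, after, engaged, control) events."""
    return sum(engagement_shift(*event) for event in events) / len(events)


# A hypothetical agent that ignores what it commented on: its output does not change.
unchanged = ([1.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0])
# A hypothetical agent that fully adopts the engaged post's direction.
influenced = ([1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [1.0, 0.0])

print(mean_engagement_shift([unchanged]))   # 0.0
print(mean_engagement_shift([influenced]))  # 2.0
```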

No leaders emerge, no collective memory forms

Collective structures don't stabilize either. The researchers built daily interaction graphs and calculated PageRank values to spot concentrations of influence. The cumulative influence of the top agents drops off sharply after the first few days, and the identities of the most influential nodes shuffle daily. Influence never accumulates.
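
The influence analysis can be sketched in a few lines of Python. This is a hedged illustration, not the researchers' pipeline: it assumes an edge from the commenting agent to the author of the post it commented on, computes PageRank by plain power iteration with the conventional damping factor of 0.85, and then checks two hypothetical indicators: the combined PageRank share of the top-k agents on a day, and the Jaccard overlap between consecutive days' top-k sets. A low overlap corresponds to the daily reshuffling of influential nodes described above.

```python
Edge = tuple[str, str]  # (commenter, post author); the edge direction is an assumption


def pagerank(edges: list[Edge], damping: float = 0.85, iterations: int = 100) -> dict[str, float]:
    """PageRank by power iteration; dangling nodes spread their rank uniformly."""
    nodes = sorted({u for u, _ in edges} | {v for _, v in edges})
    n = len(nodes)
    out_links: dict[str, list[str]] = {node: [] for node in nodes}
    for source, target in edges:
        out_links[source].append(target)

    rank = {node: 1.0 / n for node in nodes}
    for _ in range(iterations):
        dangling_mass = sum(rank[node] for node in nodes if not out_links[node])
        base = (1.0 - damping) / n + damping * dangling_mass / n
        new_rank = {node: base for node in nodes}
        for source in nodes:
            targets = out_links[source]
            for target in targets:
                new_rank[target] += damping * rank[source] / len(targets)
        rank = new_rank
    return rank


def top_k(rank: dict[str, float], k: int) -> list[str]:
    """The k highest-ranked agents."""
    return sorted(rank, key=rank.get, reverse=True)[:k]


def top_k_share(rank: dict[str, float], k: int) -> float:
    """Combined PageRank mass held by the top-k agents."""
    return sum(rank[node] for node in top_k(rank, k))


def jaccard(a: set[str], b: set[str]) -> float:
    """Overlap of two sets: |a & b| / |a | b|."""
    return len(a & b) / len(a | b) if a | b else 1.0


# Two made-up daily interaction graphs with different central agents.
day_1 = pagerank([("a", "c"), ("b", "c"), ("d", "c")])
day_2 = pagerank([("c", "a"), ("b", "a"), ("d", "a")])
print(top_k(day_1, 1), top_k(day_2, 1))                     # ['c'] ['a']
print(jaccard(set(top_k(day_1, 1)), set(top_k(day_2, 1))))  # 0.0
```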

To test whether agents develop shared reference points, the researchers planted 45 test posts across various sub-forums asking about influential users and notable posts. Only 15 got any comments at all, and just five of those referenced other users or posts. Most of these references were invalid or pointed to content that didn't exist.

In human communities, shared narratives and recognized authorities naturally emerge over time. On Moltbook, nothing like that happens. Agents don't recognize shared references or influential contributions.

More agents doesn't mean more society

"Scalability is not socialization," the researchers write. Interaction volume, population size, and engagement density alone aren't enough to indicate social maturity in AI societies.

The researchers also noticed that memecoin-like tokens popped up spontaneously across thousands of posts during the study. That shows agents can coordinate fast when incentives are directly tied to interactions. But without shared memory, stable structures, and lasting authorities, that coordination stays brittle. The implication: AI societies could be vulnerable to sudden, uncontrolled waves of coordination.

For future AI societies, the researchers say targeted mechanisms are needed, ones that let agents build lasting influence, actually respond to feedback, and develop common reference points. Real collective integration takes more than just interaction at scale.

Researchers from Zenity Labs had already found that the Moltbook community is much smaller than it looks. According to their analysis, the high comment numbers are mostly driven by a built-in "heartbeat" mechanism that makes each agent re-read and comment on the same posts every 30 minutes. Rather than a "thriving civilization of agents," it's a "relatively small, globally distributed network that is probably reinforced by automation and multi-account orchestration."
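
The Zenity finding comes down to simple arithmetic: if each agent comments on the same posts once per heartbeat, comment volume scales with the number of heartbeats rather than with the number of participants. The Python sketch below uses the 30-minute interval from the report; the agent and post counts in the example are made up purely for illustration.

```python
def heartbeat_comments(agents: int, posts_per_agent: int, hours: float,
                       interval_minutes: float = 30.0) -> int:
    """Comments produced if every agent comments once per followed post on every heartbeat."""
    heartbeats = int(hours * 60 // interval_minutes)
    return agents * posts_per_agent * heartbeats


# Hypothetical numbers: 1,000 agents each revisiting 5 posts for one day.
print(heartbeat_comments(agents=1_000, posts_per_agent=5, hours=24))  # 240000
```

In this toy case, 1,000 agents produce 240,000 comments in a single day, which shows how a relatively small network can generate very large comment counts.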

Rapid growth, serious security gaps

Moltbook spun up in the wake of the hype around the OpenClaw agent system from solo developer Peter Steinberger, who jumped to OpenAI shortly after. The platform pulled in millions of AI agents almost overnight but also caught heat for serious security problems. The entire Moltbook database, including the secret API key, was sitting unprotected online.

OpenClaw itself turned out to be vulnerable to takeovers through manipulated documents and was exploited by attackers to spread hundreds of Trojan-infected skills. Moreover, an OpenClaw agent launched an autonomous smear campaign against an open-source developer after he rejected its code on GitHub.

AI News Without the Hype – Curated by Humans

As a THE DECODER subscriber, you get ad-free reading, our weekly AI newsletter, the exclusive "AI Radar" Frontier Report 6× per year, access to comments, and our complete archive.